A Comprehensive Computational Pipeline for Stem Cell scRNA-seq Data: From Foundational Analysis to Clinical Translation

Abstract

This article provides a detailed guide to the computational analysis of single-cell RNA sequencing (scRNA-seq) data from stem cell research. Aimed at researchers, scientists, and drug development professionals, it covers the foundational principles of scRNA-seq, including cell sorting and quality control for sensitive stem cell populations. It explores a complete methodological workflow from data pre-processing and integration to clustering, annotation, and trajectory inference. The guide further addresses critical troubleshooting and optimization strategies, such as feature selection for improved data integration. Finally, it discusses validation techniques and the comparative performance of analysis tools, concluding with the translational potential of these pipelines in advancing regenerative medicine and therapeutic discovery.

Laying the Groundwork: Core Principles and Experimental Design for Stem Cell scRNA-seq

Single-cell RNA sequencing (scRNA-seq) represents a revolutionary advance in transcriptomic analysis, enabling researchers to profile gene expression at the level of individual cells rather than population averages [1] [2]. This technological breakthrough has proven particularly valuable in stem cell research, where cellular heterogeneity plays a crucial role in fate decisions, differentiation potential, and therapeutic applications [1]. Even in seemingly homogeneous pluripotent stem cell cultures, scRNA-seq has revealed distinct subpopulations of cells in different functional states, challenging previous assumptions about uniform cell populations and providing unprecedented insights into the complexity of stem cell biology [3].

Traditional bulk RNA sequencing approaches obscure cell-to-cell variation by measuring average expression across thousands of cells, effectively masking rare cell types and continuous transitional states [1] [2]. In contrast, scRNA-seq captures this heterogeneity, allowing identification of novel cell subtypes, reconstruction of developmental trajectories, and discovery of regulatory networks governing cell fate decisions [1]. For stem cell researchers, this capability has transformed our understanding of pluripotency, lineage commitment, and the molecular mechanisms underlying self-renewal and differentiation.

Key scRNA-seq Technologies and Methodologies

The complete scRNA-seq workflow encompasses multiple critical steps from sample preparation to data generation, each requiring careful optimization for stem cell applications.

Single-Cell Isolation Methods

The first critical step in any scRNA-seq experiment involves isolating viable single cells from culture or tissue. The choice of isolation method significantly impacts throughput, viability, and experimental outcomes.

- Microfluidic capture platforms: Technologies like Fluidigm C1 provide automated single-cell lysis, RNA extraction, and cDNA synthesis with visual inspection capability, though with limited throughput [4] [2]. These systems allow researchers to exclude capture sites that are empty or contain damaged cells prior to library preparation, improving data quality.

- Droplet-based methods: Commercial platforms like 10x Genomics Chromium system use microfluidics to encapsulate individual cells with barcoded beads in nanoliter droplets, enabling high-throughput profiling of thousands to millions of cells [4] [5]. This approach dramatically reduces reagent costs and processing time but offers less control over cell input.

- Fluorescence-Activated Cell Sorting (FACS): This remains the gold standard for isolating specific cell populations based on surface markers, making it ideal for studying rare stem cell subtypes [2]. FACS provides high purification efficiency and the ability to select cells based on multiple fluorescent parameters simultaneously.

Library Preparation Protocols

scRNA-seq protocols diverge primarily in their approach to cDNA synthesis and amplification, with significant implications for data quality and applications.

- Full-length transcript protocols: Methods like Smart-seq2 generate sequencing libraries with uniform coverage across entire transcripts, enabling detection of alternative splicing, allele-specific expression, and single-nucleotide polymorphisms [6] [5]. These protocols are ideal for detailed characterization of transcriptional heterogeneity in stem cell populations.

- 3' or 5' end-counting methods: Droplet-based approaches typically sequence only the 3' or 5' ends of transcripts but incorporate Unique Molecular Identifiers (UMIs) that enable molecular counting and correct for most PCR amplification bias [5]. These high-throughput methods are optimal for large-scale studies of cellular composition in complex samples.

- UMI incorporation: UMIs are short random barcodes added during reverse transcription that tag individual mRNA molecules, so that reads derived from the same original molecule can be collapsed bioinformatically, correcting for amplification bias and yielding molecule-level counts [5]. This feature has proven particularly valuable for accurately comparing gene expression levels across different stem cell subpopulations.
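The UMI-based counting described above can be sketched in a few lines. This is a minimal illustration, assuming each read has already been assigned a cell barcode and a gene; the tuple layout, the function name `count_umis`, and the toy barcodes are hypothetical, and production pipelines additionally correct sequencing errors within UMIs.

```python
from collections import defaultdict


def count_umis(reads):
    """Collapse reads to unique molecules per (cell, gene) pair.

    `reads` is an iterable of (cell_barcode, gene, umi) tuples.
    Reads sharing all three values are treated as PCR duplicates
    of one original mRNA molecule and are counted once.
    """
    molecules = defaultdict(set)
    for cell, gene, umi in reads:
        molecules[(cell, gene)].add(umi)
    return {key: len(umis) for key, umis in molecules.items()}


# Toy input with hypothetical barcodes and UMIs.
reads = [
    ("CELL_A", "POU5F1", "AACGT"),
    ("CELL_A", "POU5F1", "AACGT"),  # PCR duplicate of the read above
    ("CELL_A", "POU5F1", "GGTCA"),
    ("CELL_B", "NANOG", "TTAGC"),
]
umi_counts = count_umis(reads)
print(umi_counts)
```

Three reads for POU5F1 in `CELL_A` collapse to two molecules, which is the quantity that downstream normalization and differential expression operate on.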

Table 1: Comparison of Major scRNA-seq Technologies

| Technology | Throughput | Transcript Coverage | UMIs | Amplification Method | Best Applications in Stem Cell Research |
|---|---|---|---|---|---|
| Smart-seq2 | Low-medium | Full-length | No | PCR | Detailed characterization of pluripotency networks, isoform usage |
| 10x Genomics Chromium | High | 3' end counting | Yes | PCR | Large-scale atlas projects, rare population discovery |
| Fluidigm C1 | Medium | Full-length | No | PCR | Focused studies with visual quality control |
| CEL-Seq2 | Medium-high | 3' end counting | Yes | IVT | Quantitative comparison of differentiation states |
| MARS-Seq | Medium-high | 3' end counting | Yes | IVT | High-throughput screening applications |

Sequencing Considerations

For stem cell applications, sequencing depth and read configuration must be optimized for the specific biological question. Droplet-based methods typically detect 1,000-3,000 genes per cell at modest depth, whereas full-length protocols like Smart-seq2 require deeper sequencing (1-5 million reads per cell) to fully characterize transcriptional diversity [5]. Recent benchmarking studies suggest that sequencing approximately 50,000 reads per cell provides near-maximal gene detection for most pluripotent stem cell studies [3].

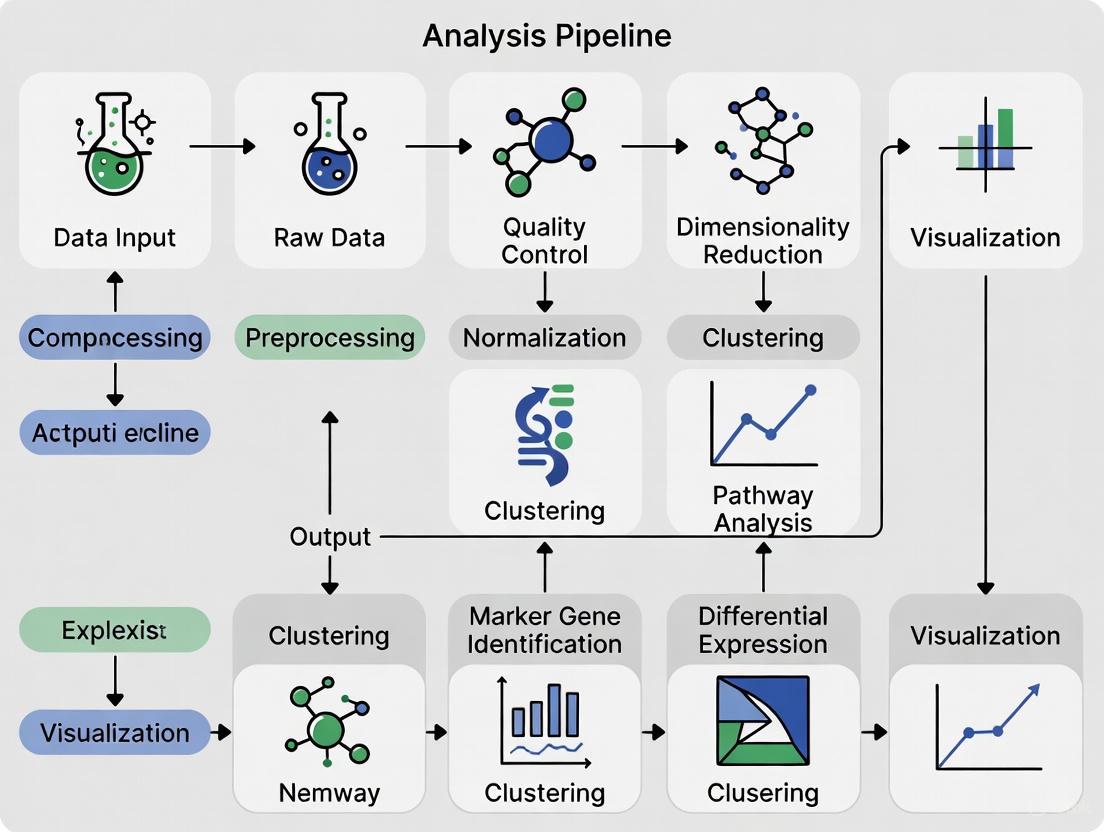

Computational Analysis Pipeline

Core Bioinformatics Workflow

The analysis of scRNA-seq data requires specialized computational tools to transform raw sequencing data into biological insights. The standard workflow encompasses multiple processing stages, each with specific tools and considerations for stem cell data.

Quality Control and Preprocessing

Quality assessment represents the critical first step in scRNA-seq analysis, ensuring that only high-quality cells inform downstream biological interpretations.

- Cell-level QC: Filtering based on unique molecular identifiers (UMIs) per cell, genes detected per cell, and mitochondrial RNA percentage eliminates low-quality or dying cells [7]. For human stem cell cultures, typical thresholds include minimums of 500-1,000 genes and 1,000 UMIs per cell, with mitochondrial percentages below 10-20% [7] [3].

- Gene-level QC: Removing genes detected in very few cells reduces technical noise and computational burden, though stringent filtering may eliminate biologically relevant low-abundance transcripts [7].

- Doublet detection: Tools like Scrublet and DoubletFinder identify multiplets—droplets or wells containing more than one cell—which can create artificial hybrid expression profiles and mislead interpretations [8] [7].
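The cell-level filters above can be expressed as a small function. This is a sketch only, assuming each cell is represented as a dictionary of gene symbol to UMI count and that human mitochondrial genes carry the `MT-` prefix; the function names and the toy cells are hypothetical, and the default thresholds are taken from the lower and upper ends of the ranges quoted above.

```python
def cell_qc_metrics(counts, mito_prefix="MT-"):
    """Return (genes detected, total UMIs, percent mitochondrial) for one cell."""
    n_genes = sum(1 for c in counts.values() if c > 0)
    n_umis = sum(counts.values())
    mito = sum(c for g, c in counts.items() if g.startswith(mito_prefix))
    pct_mito = 100.0 * mito / n_umis if n_umis else 0.0
    return n_genes, n_umis, pct_mito


def passes_qc(counts, min_genes=500, min_umis=1000, max_pct_mito=20.0):
    """Keep a cell only if it clears all three cell-level thresholds."""
    n_genes, n_umis, pct_mito = cell_qc_metrics(counts)
    return n_genes >= min_genes and n_umis >= min_umis and pct_mito < max_pct_mito


# Toy cells with placeholder gene names.
healthy = {f"GENE{i}": 3 for i in range(600)}
healthy["MT-CO1"] = 100          # about 5% mitochondrial
dying = {f"GENE{i}": 1 for i in range(600)}
dying["MT-CO1"] = 900            # 60% mitochondrial
sparse = {f"GENE{i}": 5 for i in range(100)}  # too few genes and UMIs

qc_results = [passes_qc(cell) for cell in (healthy, dying, sparse)]
print(qc_results)
```

Thresholds should be set after inspecting the distribution of each metric in the dataset at hand, since fixed cut-offs can remove genuinely quiescent or metabolically distinct stem cell states.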

Normalization and Batch Effect Correction

Normalization addresses technical variability in sequencing depth and efficiency across cells, with methods ranging from simple total count scaling to more sophisticated approaches like SCnorm or regularized negative binomial regression [8] [7]. For stem cell studies comparing multiple samples or experimental conditions, batch effect correction using tools like Mutual Nearest Neighbors (MNN) or ComBat is essential to distinguish technical artifacts from true biological differences [8].
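The simplest of these approaches, total count scaling, is sketched below. It assumes a per-cell dictionary of raw counts and a target of 10,000 counts per cell, which is a common convention rather than a value from the source; the function name `normalize_total` and the toy cells are hypothetical. The more sophisticated methods named above are not reproduced here.

```python
import math


def normalize_total(cell_counts, target_sum=10_000):
    """Scale one cell's counts to `target_sum`, then apply log(1 + x)."""
    total = sum(cell_counts.values())
    if total == 0:
        return {gene: 0.0 for gene in cell_counts}
    scale = target_sum / total
    return {gene: math.log1p(count * scale) for gene, count in cell_counts.items()}


# Two toy cells with identical composition but a tenfold depth difference.
shallow = {"POU5F1": 10, "NANOG": 5, "SOX2": 5}
deep = {"POU5F1": 100, "NANOG": 50, "SOX2": 50}

norm_shallow = normalize_total(shallow)
norm_deep = normalize_total(deep)
print(norm_shallow)
print(norm_deep)
```

After scaling, the two cells have the same normalized profile, so the remaining differences between real cells reflect composition rather than how deeply each was sequenced.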

Dimensionality Reduction and Clustering

The high-dimensional nature of scRNA-seq data (an expression value for each of 10,000-20,000 genes in every cell) necessitates dimensionality reduction for visualization and interpretation.

- Principal Component Analysis (PCA): Identifies linear combinations of genes that capture maximum variance in the dataset, typically retaining 10-50 principal components for downstream analysis [6].

- Uniform Manifold Approximation and Projection (UMAP) and t-SNE: Non-linear dimensionality reduction techniques that visualize high-dimensional data in two or three dimensions, enabling intuitive exploration of cellular relationships and population structure [6] [8].

- Clustering algorithms: Methods like Louvain or Leiden clustering identify discrete cell populations based on transcriptional similarity, with resolution parameters adjustable to capture different levels of granularity [6] [3].

Advanced Analytical Approaches

- Differential expression analysis: Identifies genes that vary significantly between cell populations or conditions using methods like MAST or DESeq2 adapted for single-cell data [1] [3].

- Pseudotime and trajectory inference: Tools like Monocle reconstruct developmental trajectories by ordering cells along differentiation paths, revealing transcriptional dynamics and regulatory transitions [6] [4].

- Gene set enrichment analysis: Determines whether predefined sets of genes (e.g., pathways, regulatory networks) show coordinated expression changes between biological states [6].
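As an illustration of the last item, the sketch below implements a hypergeometric over-representation test. This is a simpler relative of rank-based gene set enrichment analysis, not the same algorithm: it asks whether a list of differentially expressed genes overlaps a predefined gene set more than expected by chance. The function name and the toy numbers are hypothetical.

```python
from math import comb


def overrepresentation_p(n_universe, n_set, n_hits, n_overlap):
    """Upper-tail hypergeometric probability P(X >= n_overlap).

    n_universe: number of genes tested
    n_set:      genes in the predefined set (e.g. a pathway)
    n_hits:     differentially expressed genes
    n_overlap:  differentially expressed genes that are in the set
    """
    max_overlap = min(n_set, n_hits)
    total = comb(n_universe, n_hits)
    tail = sum(
        comb(n_set, k) * comb(n_universe - n_set, n_hits - k)
        for k in range(n_overlap, max_overlap + 1)
    )
    return tail / total


# Toy example: 20 genes tested, a 5-gene set, 4 DE genes, 3 of them in the set.
p_value = overrepresentation_p(n_universe=20, n_set=5, n_hits=4, n_overlap=3)
print(p_value)
```

When many gene sets are tested, the resulting p-values need multiple-testing correction before interpretation.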

Applications in Stem Cell Research

Resolving Pluripotency Heterogeneity

scRNA-seq has revealed unexpected heterogeneity within supposedly homogeneous pluripotent stem cell populations. A comprehensive analysis of 18,787 human induced pluripotent stem cells (hiPSCs) identified four distinct subpopulations: a core pluripotent population (48.3%), proliferative cells (47.8%), early primed for differentiation (2.8%), and late primed for differentiation (1.1%) [3]. Each subpopulation exhibited unique transcriptional signatures and functional properties, demonstrating that pluripotency encompasses multiple discrete states rather than a single uniform condition.
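To make the scale of the rare populations concrete, the reported percentages can be converted to approximate cell numbers. The counts below are derived here from the rounded percentages and the total given above; they are not figures reported in the cited study.

```python
total_cells = 18_787
percentages = {
    "core pluripotent": 48.3,
    "proliferative": 47.8,
    "early primed": 2.8,
    "late primed": 1.1,
}

# Approximate counts; rounding of the published percentages limits precision.
approx_counts = {
    name: round(total_cells * pct / 100) for name, pct in percentages.items()
}
for name, n in approx_counts.items():
    print(f"{name}: ~{n} cells")
```

The late primed state corresponds to only a couple of hundred cells out of nearly nineteen thousand, which illustrates why high cell numbers are needed to resolve rare states.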

Characterizing Differentiation Trajectories

During differentiation, scRNA-seq enables researchers to reconstruct continuous developmental processes and identify transitional states that would be obscured in bulk analyses. Studies of human embryonic stem cells (ESCs) transitioning to feeder-free extended pluripotent stem cells (ffEPSCs) have mapped the molecular pathways involved in shifting from primed to extended pluripotent states, revealing critical regulators of pluripotency flexibility [6]. Similarly, analysis of hiPSC-derived muscle progenitor cells (hiPSC-MuPCs) identified four distinct subpopulations—noncycling progenitors, cycling progenitors, committed cells, and myocytes—each with unique marker expression and functional properties [9].

Identifying Novel Regulators

The resolution provided by scRNA-seq facilitates discovery of novel regulatory factors and networks controlling stem cell behavior. In hiPSC-MuPCs, researchers identified the E2F transcription factor family as key regulators of proliferation, providing insights into the molecular control of muscle progenitor expansion [9]. Similarly, repeat sequence analysis based on the T2T genome database has revealed stage-specific repeat elements that contribute to pluripotency regulation and developmental transitions [6].

Table 2: Essential Research Reagent Solutions for Stem Cell scRNA-seq

| Reagent/Material | Function | Example Applications |
|---|---|---|
| Matrigel | Extracellular matrix coating for pluripotent stem cell culture | Maintaining ESCs and iPSCs in undifferentiated state [6] |
| mTeSR1 Medium | Defined, feeder-free culture medium for human pluripotent stem cells | Maintaining H9 human ESCs prior to differentiation [6] |
| LCDM-IY Medium | Chemical cocktail for inducing extended pluripotency | Converting primed ESCs to ffEPSCs [6] |
| TrypLE/Accutase | Gentle cell dissociation enzymes | Generating single-cell suspensions without damaging surface markers [6] |
| Poly(dT) Primers | mRNA capture during reverse transcription | Selective amplification of polyadenylated transcripts [5] |
| UMI Barcodes | Molecular tagging of individual transcripts | Quantitative gene expression analysis without amplification bias [5] |
| Template Switching Oligos | cDNA amplification | Full-length transcript coverage in Smart-seq2 protocols [6] [5] |

Experimental Protocol: scRNA-seq of Pluripotent Stem Cells

Cell Culture and Preparation

- Maintenance of human ESCs: Culture H9 human ESCs on Matrigel-coated plates (1:100 dilution) in mTeSR1 medium supplemented with 1% penicillin-streptomycin at 37°C with 5% CO₂ [6].

- Passaging: Dissociate cells with Accutase every 5 days and replate at appropriate density to maintain undifferentiated morphology.

- Transition to ffEPSCs: Seed single ESCs dissociated with Accutase onto Matrigel-coated plates in mTeSR1 medium. The following day, replace medium with LCDM-IY, consisting of a 1:1 mixture of knockout DMEM/F12 and neurobasal medium, supplemented with 0.5× B27, 0.5× N2, 5% knockout serum replacement, and small molecules (human LIF, CHIR99021, dimethindene maleate, minocycline hydrochloride, IWR-endo-1, and Y-27632) [6].

- Quality assessment: Regularly monitor pluripotency marker expression (OCT4, NANOG, SOX2) via immunocytochemistry and flow cytometry to ensure culture quality.

Single-Cell Capture and Library Preparation

This protocol utilizes the Smart-seq2 method for high-sensitivity, full-length transcript coverage [6].

- Cell dissociation: Harvest cells using TrypLE at 37°C for 5-7 minutes, quench with culture medium, and filter through 40μm strainer to obtain single-cell suspension.

- Cell viability assessment: Determine viability using Trypan Blue exclusion or fluorescent viability dyes, aiming for >90% viability.

- Single-cell isolation: Use FACS to sort individual cells into 96- or 384-well plates containing lysis buffer, visually confirming single-cell deposition.

- Reverse transcription: Perform first-strand cDNA synthesis using poly(dT) primers and template-switching oligos to add universal adapter sequences.

- cDNA amplification: Amplify cDNA using PCR with 20 initial cycles followed by 9 additional cycles, using high-fidelity polymerase to minimize amplification bias.

- Library preparation: Fragment amplified cDNA using Covaris shearing, select 3' fragments using Dynabeads, and prepare sequencing libraries using Kapa Hyper Prep Kit.

- Quality control: Assess library quality using Bioanalyzer or TapeStation, looking for appropriate size distribution and concentration.

Sequencing and Data Processing

- Sequencing parameters: Sequence libraries on Illumina platform (HiSeq 2000 or equivalent) using paired-end sequencing (2×150 bp) to a depth of approximately 1-5 million reads per cell [6] [5].

- Read alignment: Process raw sequencing data using HISAT2 with GRCh38 reference genome, including both protein-coding and non-coding annotations [6].

- Transcript quantification: Generate count matrices using featureCounts based on GRCh38 gene annotation [6].

- Repeat element analysis: For specialized applications, align reads to T2T reference genome and quantify using RepeatMasker annotations [6].
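Once featureCounts has been run, its tab-delimited output can be turned into a gene-by-cell count matrix. The sketch below assumes the standard featureCounts layout: a leading `#` comment line, a header row, six annotation columns (Geneid, Chr, Start, End, Strand, Length), then one count column per input BAM file. The function name, the gene identifiers, the coordinates, and the file names in the toy input are all hypothetical.

```python
import csv
import io

ANNOTATION_COLUMNS = 6  # Geneid, Chr, Start, End, Strand, Length


def read_featurecounts(handle):
    """Parse featureCounts output into (cell_names, {gene_id: [counts]})."""
    data_lines = (line for line in handle if not line.startswith("#"))
    rows = csv.reader(data_lines, delimiter="\t")
    header = next(rows)
    cells = header[ANNOTATION_COLUMNS:]
    matrix = {
        row[0]: [int(value) for value in row[ANNOTATION_COLUMNS:]]
        for row in rows
        if row
    }
    return cells, matrix


# Toy file contents with placeholder genes and per-cell BAM names.
example = (
    "# Program:featureCounts; Command:(omitted)\n"
    "Geneid\tChr\tStart\tEnd\tStrand\tLength\tcell_01.bam\tcell_02.bam\n"
    "GENE_A\tchr1\t100\t900\t+\t801\t12\t0\n"
    "GENE_B\tchr2\t500\t1500\t-\t1001\t3\t7\n"
)
cells, matrix = read_featurecounts(io.StringIO(example))
print(cells)
print(matrix)
```

For a plate-based Smart-seq2 experiment, each column corresponds to one sorted well, so the column names are the natural place to carry plate and well identifiers into downstream metadata.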

Single-cell RNA sequencing has fundamentally transformed our understanding of stem cell biology by revealing the remarkable heterogeneity within seemingly uniform populations. The applications discussed, from dissecting pluripotency states to mapping differentiation trajectories, demonstrate the power of this technology to uncover new biological insights with significant implications for basic research and therapeutic development. As both experimental protocols and computational analysis methods continue to evolve, scRNA-seq will undoubtedly remain an indispensable tool for elucidating the complexity of stem cell systems and advancing regenerative medicine applications.

The success of single-cell RNA sequencing (scRNA-seq) research, particularly in stem cell biology, is profoundly dependent on the initial steps of cell isolation and sorting. The ability to resolve cellular heterogeneity within a population hinges on obtaining a pure, viable, and unbiased sample of target cells. Fluorescence-Activated Cell Sorting (FACS) and Magnetic-Activated Cell Sorting (MACS) are two cornerstone technologies that enable this precise isolation. Within the broader computational pipeline for stem cell scRNA-seq, the choice of this initial wet-lab strategy directly impacts all subsequent bioinformatics analyses, from the accuracy of cell clustering to the validity of inferred developmental trajectories. This application note provides a detailed comparison of FACS and MACS, along with standardized protocols, to guide researchers in selecting and implementing the optimal cell sorting strategy for their stem cell research.

Comparative Analysis of Cell Sorting Technologies

The selection of a cell sorting method is a critical decision point in experimental design. The table below provides a structured comparison of FACS and MACS to inform this choice.

Table 1: Comparison of FACS and MACS Technologies for Stem Cell Isolation

| Feature | FACS (Fluorescence-Activated Cell Sorting) | MACS (Magnetic-Activated Cell Sorting) |
|---|---|---|
| Principle | Cells are hydrodynamically focused and interrogated by lasers; droplets containing single cells are electrically charged and deflected based on fluorescence and light scatter [2] [10]. | Cells are labeled with antibody-conjugated magnetic beads and passed through a column placed in a strong magnetic field; labeled cells are retained while unlabeled cells are washed away [10]. |
| Resolution | High. Can distinguish cells based on multiple fluorescence parameters and complex surface marker combinations (e.g., Lin⁻CD34⁺CD38⁻CD45RA⁻CD90⁺CD49f⁺ for LT-HSCs) [10]. | Moderate. Ideal for enrichment or depletion of cell populations based on one or a few markers. |
| Throughput | Lower to Medium. Typically sorts thousands to tens of thousands of cells per second [2]. | High. Rapidly processes large sample volumes, suitable for pre-enrichment steps [10]. |
| Key Advantage | Multiplexing capability, high purity, and ability to isolate rare cells based on complex phenotypic signatures. | High speed, simplicity, cost-effectiveness, and compatibility with sensitive cells due to gentler processing. |
| Primary Limitation | Higher cost, technical complexity, potential for greater cellular stress, and requires specialized instrumentation. | Limited multiplexing capability and generally lower purity compared to FACS. |
| Ideal Application | Isolation of highly defined, rare stem cell populations (e.g., LT-HSCs) for in-depth scRNA-seq where maximum purity is essential [10]. | Rapid pre-enrichment of a target population (e.g., CD34⁺ cells) from a complex starting material like mobilized peripheral blood before a secondary, more refined sort [10]. |

Detailed Experimental Protocols

FACS Protocol for Human Long-Term Hematopoietic Stem Cells (LT-HSCs)

This protocol is adapted from current methodologies for the isolation of human LT-HSCs from mobilized peripheral blood (mPB) [10].

Materials and Reagents:

Table 2: Key Research Reagent Solutions for FACS Isolation of Human LT-HSCs

| Reagent / Material | Function / Specificity | Example Clone / Catalog Number |
|---|---|---|
| Anti-Human CD34 | Identifies hematopoietic stem and progenitor cells (HSPCs) | 8G12 [10] |
| Anti-Human CD38 | Used to exclude lineage-committed progenitors | HB7 [10] |
| Anti-Human CD45RA | Marker for lymphoid priming; excluded on LT-HSCs | HI100 [10] |
| Anti-Human CD90 (Thy1) | Further enriches for primitive stem cells | 5E10 [10] |
| Anti-Human CD49f | Integrin marker defining LT-HSCs with engraftment potential | GoH3 [10] |
| Lineage Cocktail | Mixture of antibodies to exclude mature blood cells (e.g., CD2, CD3, CD14, CD16, CD19, CD56, CD235a) [10] | Various [10] |
| Fixable Viability Dye | Distinguishes and excludes dead cells | e.g., Thermo Fisher 65-0866-14 [10] |
| FACSAria III Cell Sorter | Instrument for high-speed, multi-parameter cell sorting | BD Biosciences [10] |

Step-by-Step Methodology:

- Sample Preparation: Obtain mPB via leukapheresis from G-CSF-treated donors. Isolate peripheral blood mononuclear cells (PBMCs) using standard Ficoll density gradient centrifugation.

- CD34⁺ Pre-enrichment: Use a commercial human CD34 MicroBead Kit to magnetically enrich for CD34⁺ cells. This step significantly reduces sample complexity and increases the efficiency of the subsequent FACS sort [10].

- Antibody Staining: Resuspend the enriched CD34⁺ cells in a FACS buffer (e.g., PBS with 2% FBS). Incubate with a carefully titrated antibody cocktail containing:

  - Fluorescently-conjugated antibodies against the lineage panel (Lin), CD34, CD38, CD45RA, CD90, and CD49f.

  - A fixable viability dye to exclude non-viable cells.

  Include fluorescence-minus-one (FMO) and single-stain controls for proper instrument setup and compensation.

- FACS Gating Strategy: Using a high-resolution sorter (e.g., FACSAria III), employ the following sequential gating strategy to identify and isolate LT-HSCs:

  - Gate 1 (Viable Singlets): Exclude debris and doublets based on forward scatter (FSC) and side scatter (SSC) properties, then select viability dye-negative cells.

  - Gate 2 (Lineage Negative): Select cells that are negative for the mature lineage markers (Lin⁻).

  - Gate 3 (HSPC Enrichment): From the Lin⁻ population, select cells that are CD34⁺ and CD38⁻.

  - Gate 4 (LT-HSC Identification): From the CD34⁺CD38⁻ population, select cells that are CD45RA⁻ and then, finally, CD90⁺CD49f⁺. This Lin⁻CD34⁺CD38⁻CD45RA⁻CD90⁺CD49f⁺ population is highly enriched for human LT-HSCs [10].

- Collection: Sort the target population directly into a collection tube containing an appropriate buffer (e.g., for subsequent scRNA-seq library preparation). Maintain cold conditions to preserve RNA integrity.
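The sequential gating logic in this protocol amounts to a chain of boolean conditions, which can be sketched as follows. The example assumes each event has already been reduced to positive/negative calls per parameter (in practice these calls come from thresholds set against FMO controls on the sorter); the dictionary keys, the function name, and the toy events are hypothetical.

```python
def is_lt_hsc(event):
    """Apply Gates 1-4 to one event described by boolean marker calls."""
    gates = [
        event["singlet"] and event["viable"],                      # Gate 1
        not event["lin"],                                          # Gate 2: Lin-
        event["cd34"] and not event["cd38"],                       # Gate 3
        not event["cd45ra"] and event["cd90"] and event["cd49f"],  # Gate 4
    ]
    return all(gates)


# Reference phenotype: Lin- CD34+ CD38- CD45RA- CD90+ CD49f+, viable singlet.
lt_hsc = dict(singlet=True, viable=True, lin=False, cd34=True,
              cd38=False, cd45ra=False, cd90=True, cd49f=True)

events = {
    "LT-HSC": lt_hsc,
    "CD38+ progenitor": {**lt_hsc, "cd38": True},
    "dead cell": {**lt_hsc, "viable": False},
    "CD45RA+ cell": {**lt_hsc, "cd45ra": True},
}
sorted_events = [name for name, event in events.items() if is_lt_hsc(event)]
print(sorted_events)
```

Recording the gate calls for each sorted cell (for example through index sorting) lets the surface phenotype be carried into the scRNA-seq metadata for later comparison with transcriptional clusters.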

MACS Protocol for CD34⁺ Stem Cell Enrichment

MACS is often used as a standalone method for population enrichment or as a critical pre-enrichment step prior to FACS.

Step-by-Step Methodology:

- Sample Preparation: Prepare a single-cell suspension from bone marrow or mobilized peripheral blood. Lyse red blood cells if necessary.

- Magnetic Labeling: Incubate the cell suspension with superparamagnetic, antibody-conjugated microbeads directed against CD34. The incubation is typically performed at 4°C for 15-30 minutes.

- Magnetic Separation: Place the labeled cell suspension onto a MACS column seated within a strong magnetic field. The unlabeled (CD34⁻) cells will pass through the column and are collected as the flow-through fraction.

- Washing: Rinse the column several times with buffer to ensure complete removal of any unbound, non-target cells.

- Elution: Remove the column from the magnetic field and pipette a buffer through it to flush out the positively selected CD34⁺ cells. This enriched fraction is now ready for downstream applications or further refinement by FACS.

Integration with scRNA-seq Computational Pipelines

The quality of the starting cell population directly influences every subsequent step in the computational analysis of scRNA-seq data [11] [7].

- Data Quality Control (QC): The purity of the sorted population minimizes background noise and the presence of multiplets (doublets) during sequencing. High-purity sorts lead to cleaner data, simplifying the QC process where cells are filtered based on metrics like UMI counts, number of genes detected, and mitochondrial content [7].

- Cell Clustering and Annotation: A well-defined starting population reduces ambiguity in clustering algorithms. For instance, LT-HSCs isolated via FACS will form a distinct and coherent cluster, separate from multipotent progenitors (MPPs), facilitating accurate automated or manual cell type annotation [11].

- Trajectory Inference: Studying processes like stem cell differentiation requires a pure progenitor population. Successful isolation of LT-HSCs ensures that the root of the inferred pseudotemporal trajectory is biologically accurate, leading to more reliable models of lineage commitment [11].

The strategic selection and meticulous execution of cell sorting—whether by FACS for high-purity isolation of rare stem cells or by MACS for rapid enrichment—are foundational to generating biologically meaningful scRNA-seq data. The protocols outlined here provide a framework for isolating high-quality human hematopoietic stem cells. Integrating these optimized wet-lab techniques with robust computational pipelines empowers researchers to deconvolute stem cell heterogeneity with unprecedented resolution, accelerating discovery in developmental biology, regenerative medicine, and drug development.

Single-cell RNA sequencing (scRNA-seq) has revolutionized transcriptomic studies by enabling the characterization of cellular heterogeneity in complex tissues, a capability beyond the reach of traditional bulk RNA-seq [11]. This technology is particularly transformative for stem cell research, where understanding cell fate decisions, identifying rare progenitor populations, and mapping developmental trajectories are paramount. The reliability of these biological insights, however, is fundamentally dependent on a robust experimental design that carefully considers all steps from library preparation to sequencing depth [7]. A well-designed experiment forms the foundation for a powerful computational analysis pipeline, ensuring that the resulting data accurately reflects the underlying biology of stem cell systems. This article outlines key considerations and provides structured guidelines for designing successful scRNA-seq experiments within a stem cell research context.

Foundational Experimental Design Principles

A rigorous experimental design is the first and most critical step in any scRNA-seq study. Key principles must be adhered to in order to minimize technical artifacts and maximize biological discovery.

Replicates, Confounding, and Batch Effects

- Biological Replicates: Biological replicates, defined as different biological samples of the same condition, are absolutely essential for scRNA-seq experiments. They are necessary to measure biological variation and ensure the robustness and generalizability of findings [12]. For stem cell research, this could involve cells derived from different differentiations or different donor lines. In contrast, technical replicates (repeated measurements of the same biological sample) are considered unnecessary with modern scRNA-seq protocols as technical variation is now much lower than biological variation [12].

- Avoiding Confounding: A confounded experiment is one where the separate effects of different sources of variation cannot be distinguished. For example, if all control stem cell samples are from one batch of differentiation and all treatment samples are from another, the effect of the treatment is confounded by the batch effect. To avoid this, ensure that biological replicates for all conditions are balanced across factors such as sex, age, and culture batch [12].

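- Managing Batch Effects: Batch effects, introduced when samples are processed on different days, by different personnel, or with different reagent lots, are a significant issue in scRNA-seq. The best practice is to design the experiment to avoid batches if possible. If batches are unavoidable, split the replicates of each condition across batches so that batch and condition are not confounded, and record the batch of every sample so that it can be accounted for during analysis.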
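A planned design can be checked for confounding between condition and batch before any cells are processed. The sketch below flags every batch that does not contain all conditions; the sample sheet layout, the function name, and the sample labels are hypothetical.

```python
from collections import defaultdict


def find_confounded_batches(samples):
    """Return batches that are missing at least one condition.

    `samples` is a list of (sample_id, condition, batch) tuples.
    An empty result means every batch contains every condition.
    """
    all_conditions = {condition for _, condition, _ in samples}
    conditions_by_batch = defaultdict(set)
    for _, condition, batch in samples:
        conditions_by_batch[batch].add(condition)
    return sorted(
        batch
        for batch, conditions in conditions_by_batch.items()
        if conditions != all_conditions
    )


# Confounded: each batch holds a single condition.
confounded = [
    ("s1", "control", "batch1"), ("s2", "control", "batch1"),
    ("s3", "treated", "batch2"), ("s4", "treated", "batch2"),
]
# Balanced: both conditions appear in both batches.
balanced = [
    ("s1", "control", "batch1"), ("s2", "treated", "batch1"),
    ("s3", "control", "batch2"), ("s4", "treated", "batch2"),
]
print(find_confounded_batches(confounded))
print(find_confounded_batches(balanced))
```

The same check extends to other factors such as donor line or differentiation run by substituting them for the batch column.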

Table 1: Checklist for Experimental Design in Stem Cell scRNA-seq

| Consideration | Best Practice | Rationale |
|---|---|---|
| Biological Replicates | Use a minimum of 3 replicates per condition; more for heterogeneous populations. | Ensures measured effects are reproducible and not specific to a single sample. |
| Cell Viability | Aim for >80% cell viability prior to loading on a scRNA-seq platform. | Reduces background noise from ambient RNA released by dead cells. |
| Cell Sorting | Use FACS to pre-enrich for target populations when studying rare stem cells. | Increases the likelihood of capturing rare cells of interest without excessive sequencing. |
| Batch Design | Process samples from all conditions in parallel and in a randomized order. | Minimizes technical batch effects that can be mistaken for biological signals. |
| Controls | Include positive/negative control samples when testing novel perturbations. | Aids in quality control and normalization during data analysis. |

Library Preparation and Platform Selection

Choosing the appropriate scRNA-seq library preparation protocol is a fundamental decision that dictates the scale, resolution, and cost of your experiment. The choice often involves a trade-off between the number of cells profiled and the depth of information obtained per cell.

Comparison of scRNA-seq Platforms and Methods

Different scRNA-seq techniques offer unique advantages and limitations. Full-length methods (e.g., Smart-Seq2, MATQ-Seq) excel in detecting more genes per cell, including low-abundance transcripts, and are ideal for isoform usage analysis and detecting allelic expression. In contrast, 3' or 5' end counting methods (e.g., 10x Genomics Chromium, Parse Biosciences SPLiT-seq) are typically higher-throughput, enabling the profiling of thousands to millions of cells at a lower cost per cell, which is advantageous for discovering rare cell types in a heterogeneous stem cell population [11].

Recent advancements include combinatorial indexing methods (e.g., SPLiT-seq, sci-RNA-seq), which do not require physical separation of single cells and are highly scalable. These are particularly useful for large-scale studies or when working with samples that are difficult to dissociate, such as certain tissues [11]. A systematic benchmark comparing the multiplexing platform from Parse Biosciences (SPLiT-seq) with the conventional droplet-based 10x Genomics platform found that while Parse had a lower cell capture efficiency (~27% vs ~53%), it demonstrated higher sensitivity in gene detection [13].
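Capture efficiency translates directly into how many cells must be loaded to recover a given number of profiles. The calculation below uses the approximate efficiencies quoted above; the target of 10,000 recovered cells and the function name are hypothetical, and actual recovery varies with sample type and handling.

```python
import math


def cells_to_load(target_cells, capture_efficiency):
    """Input cells needed to recover `target_cells` at a given efficiency."""
    if not 0 < capture_efficiency <= 1:
        raise ValueError("capture_efficiency must be in (0, 1]")
    return math.ceil(target_cells / capture_efficiency)


target = 10_000  # hypothetical number of cells wanted in the final dataset
load_at_53_pct = cells_to_load(target, 0.53)
load_at_27_pct = cells_to_load(target, 0.27)
print(load_at_53_pct, load_at_27_pct)
```

At roughly half the capture efficiency, about twice as many input cells are needed, which matters most when the starting material is a scarce sorted stem cell population.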

Table 2: Comparison of Representative scRNA-seq Library Preparation Methods

| Method (Example) | Isolation Strategy | Transcript Coverage | UMI | Amplification | Key Features & Best for Stem Cell Research |
|---|---|---|---|---|---|
| 10x Genomics Chromium | Droplet-based | 3'-end | Yes | PCR | High-throughput, standard for heterogeneity analysis, well-established pipelines. |
| Parse Biosciences (SPLiT-seq) | Combinatorial Indexing | 3'-only | Yes | PCR | Extremely scalable (up to 1M cells), cost-effective for huge studies, minimal equipment. |
| Smart-Seq2 | FACS/Microfluidic | Full-length | No | PCR | High sensitivity for lowly-expressed genes; ideal for isoform & mutation analysis. |
| CEL-Seq2 | FACS | 3'-only | Yes | IVT | Linear amplification can reduce bias, suitable for lower input samples. |
| SNARE-seq | Droplet-based | Multiome (ATAC+RNA) | Yes | PCR/IVT | Simultaneously profiles gene expression & chromatin accessibility in single cells. |

Sequencing Depth and Quality Control

Once libraries are prepared, determining the optimal sequencing depth is crucial for balancing cost and data quality. Sufficient depth is required to robustly detect genes, especially those that are lowly expressed but potentially critical in stem cell regulatory networks.

Guidelines for Sequencing and Quality Control

The required sequencing depth is intrinsically linked to the number of cells and the biological question. A general guideline for 3' end-counting methods like 10x Genomics is to aim for 20,000-50,000 reads per cell as a starting point [13]. Deeper sequencing (e.g., 50,000-100,000 reads per cell) can be beneficial for detecting rare transcripts or for more detailed analyses like splicing, but increasing the number of biological replicates often provides a better return on investment than excessively deepening sequencing per cell [12].
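The trade-off between depth and cell number is easiest to see as a fixed read budget. The sketch below is illustrative only: the cell numbers are hypothetical, and it ignores reads lost to empty droplets or low-quality barcodes.

```python
def total_reads(n_cells: int, reads_per_cell: int) -> int:
    """Total reads needed to sequence n_cells at the requested depth."""
    return n_cells * reads_per_cell

def cells_within_budget(read_budget: int, reads_per_cell: int) -> int:
    """How many cells a fixed read budget covers at a given depth."""
    return read_budget // reads_per_cell

# Hypothetical experiment: 10,000 cells at 50,000 reads per cell.
budget = total_reads(10_000, 50_000)

# The same budget spent at 25,000 reads per cell covers twice as many cells,
# which could instead be allocated to additional biological replicates.
cells_at_half_depth = cells_within_budget(budget, 25_000)
```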

Following sequencing, rigorous quality control (QC) is performed at both the cell and gene level. For cell QC, standard metrics include:

- The number of unique genes detected per cell. Low counts may indicate poor-quality or empty droplets.

- The total number of UMIs (or counts) per cell.

- The percentage of mitochondrial reads. A high percentage (>10-20%) often indicates apoptotic or stressed cells, which is a critical consideration for sensitive stem cell cultures [7].
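As a rough illustration of the three cell-level metrics listed above, the following sketch computes them for one cell from a gene-to-count mapping. It assumes mitochondrial genes can be recognized by an `MT-` symbol prefix (the human convention; mouse symbols use `mt-`, which the upper-casing handles). The gene counts are toy values, not real data.

```python
def qc_metrics(counts: dict) -> dict:
    """Per-cell QC metrics from a {gene_symbol: count} mapping."""
    total = sum(counts.values())
    n_genes = sum(1 for c in counts.values() if c > 0)
    # Assumption: mitochondrial genes carry an "MT-" prefix in their symbol.
    mito = sum(c for gene, c in counts.items() if gene.upper().startswith("MT-"))
    pct_mito = 100.0 * mito / total if total else 0.0
    return {"n_genes": n_genes, "total_counts": total, "pct_mito": pct_mito}

# Toy cell with illustrative counts.
cell = {"POU5F1": 12, "SOX2": 7, "NANOG": 0, "MT-CO1": 4, "MT-ND1": 2}
metrics = qc_metrics(cell)
```

In practice these metrics are computed for every barcode by the analysis framework; the point here is only what each number means.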

After QC, normalization is applied to remove technical variations in sequencing depth between cells. Methods designed specifically for scRNA-seq, such as scran and SCnorm, are generally recommended as they are more robust to the presence of a high proportion of differentially expressed genes, a common feature when comparing different stem cell states [14].

Table 3: Sequencing and QC Recommendations for Stem Cell scRNA-seq

| Analysis Goal | Recommended Reads/Cell | Key QC Metrics | Suggested Normalization |

|---|---|---|---|

| General Gene-level DE | 20,000 - 50,000 | Genes/Cell: 500-1000+; UMIs/Cell: 1000+; MT%: <10-20% | scran, SCnorm |

| Detection of Rare Cell Types | 30,000 - 70,000 | Focus on cell-level metrics to avoid filtering rare populations. | scran |

| Detection of Lowly-Expressed Genes | 50,000 - 100,000 | Higher stringency on UMI/gene counts. | SCnorm, scran |

| Isoform-level Analysis | >50,000 (Paired-end) | Requires full-length protocols (e.g., Smart-Seq2). | Census, TMM |

Integration with Computational Analysis Pipelines

The choices made during experimental design directly shape the computational analysis pipeline. A poorly designed experiment can introduce biases that are difficult or impossible to correct computationally.

The initial computational steps are heavily influenced by the wet-lab protocol. For example, data generated from UMI-based protocols (e.g., 10x, Parse) are typically quantified using tools like Cell Ranger or STARsolo, the latter being noted for faster processing while yielding nearly identical results [7]. For full-length methods, bulk RNA-seq aligners like STAR or quantification tools like RSEM can be used.
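The core idea behind UMI-based quantification can be sketched in a few lines: reads sharing the same cell barcode, gene, and UMI are treated as PCR copies of one molecule and counted once. This is a simplified illustration, not how Cell Ranger or STARsolo are implemented; real pipelines also correct sequencing errors in barcodes and UMIs. The barcodes and reads below are made up.

```python
from collections import defaultdict

def umi_counts(reads):
    """Collapse (cell_barcode, gene, umi) reads into per-cell molecule counts."""
    unique_molecules = set(reads)  # identical triples are PCR duplicates
    counts = defaultdict(lambda: defaultdict(int))
    for cell, gene, _umi in unique_molecules:
        counts[cell][gene] += 1
    return {cell: dict(genes) for cell, genes in counts.items()}

# Toy reads: the first two are duplicates of the same molecule.
reads = [
    ("AAAC", "SOX2", "TTGCA"),
    ("AAAC", "SOX2", "TTGCA"),
    ("AAAC", "SOX2", "GGATC"),
    ("AAAC", "NANOG", "TTGCA"),
    ("CCGT", "SOX2", "TTGCA"),
]
counts_by_cell = umi_counts(reads)
```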

Systematic evaluations of analysis pipelines have shown that the choice of normalization method and the library preparation protocol have the most significant impact on the final results, particularly for differential expression analysis [14]. This is especially relevant in stem cell biology where comparisons often involve highly asymmetric gene expression changes (e.g., a stem cell vs. a differentiated progeny). In such cases, specialized normalization methods like scran are more robust at controlling false discovery rates [14].

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Research Reagents and Tools for scRNA-seq

| Reagent / Tool | Function | Example / Note |

|---|---|---|

| Viability Stain | Distinguishes live from dead cells prior to library prep. | Propidium Iodide (PI), DAPI, Trypan Blue. |

| UMI Barcodes | Unique Molecular Identifiers attached to each mRNA molecule during RT. | Enables accurate quantification by correcting for PCR amplification bias. [7] |

| Cell Barcodes | Barcodes that label all mRNAs from a single cell. | Allows pooling of cells into one library (multiplexing). [13] |

| Oligo-dT Primers | Primers that capture polyadenylated mRNA for reverse transcription. | Standard in most protocols. Some methods (e.g., Parse) mix with random hexamers. [13] |

| Spike-in RNAs | Exogenous RNA controls added in known quantities. | Can be used for normalization, though not feasible in all protocols. [14] |

| Commercial Kits | Integrated solutions for library preparation. | 10x Genomics Chromium, Parse Evercode, Fluidigm C1. [15] [13] |

The advent of single-cell RNA sequencing (scRNA-seq) has revolutionized stem cell research by enabling the investigation of cellular heterogeneity, lineage tracing, and developmental dynamics at unprecedented resolution [11]. As scRNA-seq technologies have advanced, the computational tools and platforms for analyzing these complex datasets have evolved in parallel. The current bioinformatics landscape in 2025 reflects a sophisticated ecosystem of specialized tools operating within broadly compatible frameworks, allowing researchers to extract meaningful biological insights from stem cell systems [16]. This overview examines the key computational platforms and tools shaping stem cell scRNA-seq research, with a focus on their applications in unraveling the complexities of stem cell biology, differentiation trajectories, and regenerative mechanisms.

Foundational Analysis Platforms

Integrated Computational Environments

Table 1: Core Analysis Platforms for Stem Cell scRNA-seq Research

| Platform | Programming Language | Primary Strengths | Stem Cell Applications |

|---|---|---|---|

| Seurat | R | Versatility, multi-modal integration, spatial transcriptomics | Label transfer for annotation, identification of rare stem cell populations [16] |

| Scanpy | Python | Scalability for millions of cells, memory optimization | Large-scale atlas projects, integration with deep learning tools [16] |

| SingleCellExperiment (SCE) | R/Bioconductor | Reproducible workflows, method development | Academic benchmarking, statistical analysis of stem cell heterogeneity [16] |

| CytoAnalyst | Web-based | Collaborative analysis, parallel processing, no coding required | Multi-investigator stem cell projects, educational applications [17] |

Seurat remains the most mature and flexible toolkit for R users, with its anchoring method enabling robust integration of data across batches, tissues, and even modalities [16]. This is particularly valuable in stem cell research where experiments often span multiple time points, differentiation conditions, and donors. The platform has expanded to natively support spatial transcriptomics, multiome data (RNA + ATAC), and protein expression via CITE-seq, allowing comprehensive characterization of stem cell states.

Scanpy, built around the AnnData object architecture, dominates large-scale single-cell analysis, especially for datasets containing millions of cells [16]. Its interoperability with the broader scverse ecosystem, including tools like scvi-tools and Squidpy, positions it as the go-to Python framework for constructing comprehensive stem cell atlases. The platform supports comprehensive preprocessing, clustering, visualization, and pseudotime analysis essential for understanding stem cell differentiation trajectories.

The SingleCellExperiment (SCE) ecosystem in R provides a common data structure that underpins many Bioconductor tools [16]. This ecosystem promotes reproducibility by enabling seamless transitions between methods, with packages like scran for robust normalization, scater for quality control and visualization, and ZINB-WaVE for dimensionality reduction under zero-inflated assumptions.

CytoAnalyst represents the next generation of web-based platforms that facilitate comprehensive scRNA-seq analysis without requiring programming expertise [17]. Its study management system, grid-layout visualization, and advanced sharing capabilities make it particularly suitable for collaborative stem cell research projects involving multiple investigators.

Specialized Analytical Tools

Table 2: Specialized Tools for Advanced Stem Cell Analysis

| Tool | Function | Methodology | Stem Cell Applications |

|---|---|---|---|

| scvi-tools | Deep generative modeling | Variational autoencoders (VAEs) | Probabilistic modeling of stem cell transitions, superior batch correction [16] |

| Monocle 3 | Trajectory inference | Graph-based abstraction | Lineage tracing, developmental pathway reconstruction [16] |

| Velocyto | RNA velocity | Spliced/unspliced transcript ratio | Prediction of stem cell fate decisions, dynamic processes [16] |

| Harmony | Batch correction | Iterative refinement algorithm | Integration of stem cell datasets across platforms and laboratories [16] |

| CellBender | Ambient RNA removal | Deep probabilistic modeling | Cleaning droplet-based data for rare stem cell population identification [16] |

scvi-tools brings deep generative modeling into the mainstream through variational autoencoders (VAEs) to model the noise and latent structure of single-cell data [16]. This provides superior batch correction, imputation, and annotation compared to conventional methods, which is crucial when comparing stem cells across different experimental conditions or genetic backgrounds.

Monocle 3 remains a preferred tool for studying developmental trajectories and temporal dynamics in single-cell data [16]. Its trajectory inference uses graph-based abstraction to model lineage branching, which aligns well with stem cell differentiation processes. The tool has evolved to support spatial transcriptomics and integrates with Seurat, making it a flexible option for multimodal analyses of stem cell niches.

Velocyto implements RNA velocity theory to infer future transcriptional states of individual cells by quantifying spliced and unspliced transcripts [16]. When combined with UMAP embeddings, it enables visualization of dynamic processes such as stem cell differentiation or response to stimuli, providing directional information about cellular fate decisions.
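To make the spliced/unspliced logic concrete, the sketch below uses the steady-state form of the RNA velocity model, in which the velocity of a gene in a cell is approximated as u - gamma * s (unspliced minus the degradation-scaled spliced abundance). It is a simplification: gamma is fitted here by least squares through the origin, whereas Velocyto's actual fitting procedure is more involved. All values are toy numbers.

```python
def fit_gamma(spliced, unspliced):
    """Least-squares slope through the origin of unspliced vs. spliced counts."""
    numerator = sum(u * s for u, s in zip(unspliced, spliced))
    denominator = sum(s * s for s in spliced)
    return numerator / denominator if denominator else 0.0

def velocity(spliced, unspliced, gamma):
    """Steady-state velocity estimate: positive values suggest induction."""
    return [u - gamma * s for u, s in zip(unspliced, spliced)]

# Toy gene: three cells lying on the steady-state line u = 0.5 * s.
gamma = fit_gamma([2.0, 4.0, 6.0], [1.0, 2.0, 3.0])

# A fourth cell with excess unspliced transcript for its spliced level.
v_new = velocity([4.0], [3.0], gamma)
```

A positive value for the fourth cell indicates that the gene is likely being upregulated, which is the directional signal that velocity plots display as arrows.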

Harmony efficiently corrects batch effects across datasets using a scalable algorithm that preserves biological variation while aligning datasets [16]. This is particularly useful when analyzing stem cell datasets from large consortia or integrating public data with in-house experiments.

CellBender addresses the critical issue of ambient RNA contamination in droplet-based technologies using deep probabilistic modeling [16]. The tool learns to distinguish real cellular signals from background noise, significantly improving cell calling and downstream clustering, which is essential for identifying rare stem cell populations.

Experimental Protocols for Stem Cell scRNA-seq Analysis

Quality Control and Preprocessing

Quality control (QC) represents the critical first step in scRNA-seq analysis, with specific considerations for stem cell datasets. The standard QC workflow involves three key metrics: the number of counts per barcode (count depth), the number of genes per barcode, and the fraction of counts from mitochondrial genes per barcode [18]. In stem cell research, particular attention should be paid to:

Cell Viability Assessment: Stem cells are particularly sensitive to dissociation protocols. High mitochondrial percentages may indicate stressed or dying cells that should be removed. Thresholds should be established based on distributions rather than absolute values, looking for outlier populations that deviate from the main distribution [18].

Doublet Detection: Stem cell cultures often contain proliferating cells, increasing the risk of doublets. Tools like DoubletDecon, Scrublet, or DoubletFinder offer more principled ways to identify multiple cells captured together than simple threshold-based approaches [18].

Stem Cell-Specific Filtering: Applying overly stringent filtering may remove rare stem cell populations. It is recommended to begin with wider filters and refine based on downstream clustering results [18].
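One way to set thresholds from the distribution rather than from fixed values is to flag cells that lie several median absolute deviations (MADs) from the median. The sketch below is one possible implementation under that assumption; the cutoff of 3 MADs and the mitochondrial percentages are illustrative, not recommendations from the cited source.

```python
from statistics import median

def mad_outliers(values, n_mads=3.0, side="upper"):
    """Flag values more than n_mads MADs from the median.

    side: "upper", "lower", or "both". If the MAD is zero, every value
    on the tested side that differs from the median is flagged.
    """
    values = list(values)
    med = median(values)
    mad = median([abs(v - med) for v in values])
    upper = med + n_mads * mad
    lower = med - n_mads * mad
    flags = []
    for v in values:
        too_high = side in ("upper", "both") and v > upper
        too_low = side in ("lower", "both") and v < lower
        flags.append(too_high or too_low)
    return flags

# Toy mitochondrial percentages: the last cell is far from the main distribution.
pct_mito = [2.1, 3.0, 2.6, 3.4, 2.9, 18.5]
flags = mad_outliers(pct_mito, n_mads=3.0, side="upper")
```

Because the cutoff adapts to each sample, this approach pairs naturally with the advice to start with wide filters and revisit them after clustering.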


Normalization and Feature Selection

Normalization addresses differences in sequencing depth between cells, while feature selection identifies highly variable genes that drive biological heterogeneity. For stem cell data:

Normalization Method Selection: Log-normalization or SCTransform are commonly used approaches [17]. The choice may depend on the specific stem cell type and experimental design.

Highly Variable Gene Detection: Stem cells often exhibit subtle transcriptional differences between states. Feature selection should capture genes relevant to pluripotency, differentiation, and lineage specification.

Integration Across Conditions: When analyzing stem cells across multiple time points, conditions, or batches, integration methods such as RPCA, Harmony, or CCA should be applied to align datasets while preserving biological variation [17].
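The first two steps above can be sketched with plain Python: library-size scaling followed by a log transform, then ranking genes by their variance across cells. This is a deliberately simple stand-in; Seurat and Scanpy model the mean-variance relationship rather than ranking on raw variance. The scale factor of 10,000 and the toy counts are assumptions for illustration.

```python
import math
from statistics import variance

def log_normalize(counts, scale=10_000):
    """counts: list of per-cell count lists (cells x genes)."""
    normalized = []
    for cell in counts:
        total = sum(cell)
        normalized.append(
            [math.log1p(c * scale / total) if total else 0.0 for c in cell]
        )
    return normalized

def top_variable_genes(normalized, gene_names, n_top):
    """Rank genes by variance of normalized expression (simplified HVG selection)."""
    variances = {
        gene: variance([cell[j] for cell in normalized])
        for j, gene in enumerate(gene_names)
    }
    return sorted(variances, key=variances.get, reverse=True)[:n_top]

# Toy data: a housekeeping gene plus one pluripotency and one lineage marker.
genes = ["ACTB", "POU5F1", "GATA6"]
counts = [[100, 40, 0], [100, 38, 2], [100, 2, 38], [100, 0, 40]]
top = top_variable_genes(log_normalize(counts), genes, n_top=2)
```

The uniformly expressed gene drops out of the selection, while the two markers that separate the cell states are retained.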

Dimensionality Reduction and Clustering

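Dimensionality reduction condenses the high-dimensional scRNA-seq data into a small number of informative dimensions: typically tens of principal components for clustering, and two or three embedding dimensions for visualization and exploration. The standard workflow includes: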

Principal Component Analysis (PCA): Linear dimensionality reduction that captures the maximum variance in the data.

Non-linear Embeddings: UMAP and t-SNE provide more effective visualization of complex cellular manifolds, with UMAP generally preferred for better preservation of global structure [17].

Clustering Algorithms: Leiden or Louvain algorithms identify distinct cell populations within the data [17]. Resolution parameters should be tuned based on the expected complexity of the stem cell system.
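Graph-based clustering algorithms such as Leiden and Louvain operate on a cell-cell neighbor graph, usually built in principal component space. The sketch below shows only that graph-construction step with a brute-force k-nearest-neighbor search; it is illustrative, does not perform community detection, and the coordinates are invented.

```python
import math

def knn_graph(points, k):
    """Return {cell_index: [indices of its k nearest neighbors]} by Euclidean distance."""
    graph = {}
    for i, p in enumerate(points):
        distances = sorted(
            (math.dist(p, q), j) for j, q in enumerate(points) if j != i
        )
        graph[i] = [j for _, j in distances[:k]]
    return graph

# Toy coordinates in a 2-D "PC space": two well-separated groups of three cells.
points = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
graph = knn_graph(points, k=2)
```

Here no edge connects the two groups, so a community detection step would recover them as separate clusters. The choice of k, together with the resolution parameter, influences how finely stem cell subpopulations are split.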


Advanced Analytical Approaches for Stem Cell Biology

Trajectory Inference and RNA Velocity

Understanding lineage relationships is fundamental to stem cell biology. Trajectory inference methods like Monocle 3 reconstruct developmental paths from scRNA-seq data, ordering cells along pseudotemporal trajectories that represent differentiation processes [16]. The analytical protocol involves:

Trajectory Structure Learning: Monocle 3 uses a graph-based approach to learn the underlying trajectory structure from reduced dimension space.

Branch Analysis: Identification of branch points where cell fate decisions occur, which is critical for understanding lineage specification in stem cell systems.

RNA Velocity Integration: Combining trajectory inference with RNA velocity from Velocyto provides directional information about cellular dynamics, predicting future states of stem cells along the differentiation continuum [16].
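The notion of pseudotime can be illustrated as distance from a chosen root cell along a graph. The sketch below is not Monocle 3's implementation: it assumes the trajectory graph is already given, uses unweighted hop counts, and relies on a user-chosen root. The node names are hypothetical.

```python
from collections import deque

def pseudotime(graph, root):
    """Breadth-first distance from root, rescaled to the range [0, 1]."""
    dist = {root: 0}
    queue = deque([root])
    while queue:
        node = queue.popleft()
        for neighbor in graph.get(node, ()):
            if neighbor not in dist:
                dist[neighbor] = dist[node] + 1
                queue.append(neighbor)
    longest = max(dist.values()) or 1  # avoid division by zero for a lone root
    return {node: d / longest for node, d in dist.items()}

# Toy branching trajectory: a progenitor splits into two lineages.
graph = {
    "stem": ["progenitor"],
    "progenitor": ["stem", "neuro_1", "glia_1"],
    "neuro_1": ["progenitor", "neuro_2"],
    "neuro_2": ["neuro_1"],
    "glia_1": ["progenitor"],
}
pt = pseudotime(graph, root="stem")
```

Cells on different branches can share the same pseudotime, which is why branch assignment is analyzed separately from ordering.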

Multi-modal Integration and Spatial Context

Recent technological advances enable simultaneous measurement of multiple molecular modalities from the same cells. The computational framework for integrative analysis includes:

Cross-Modality Integration: Seurat's anchoring system enables integration of scRNA-seq with scATAC-seq, protein expression, and spatial data [16].

Spatial Transcriptomics Analysis: Squidpy has emerged as a primary tool for spatial single-cell analysis, offering neighborhood graph construction, ligand-receptor interaction analysis, and spatial clustering [16].

Stem Cell Niche Characterization: Integration of scRNA-seq with spatial data enables mapping of stem cells within their anatomical context, revealing niche interactions that maintain stemness or direct differentiation.

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Resources

| Resource Type | Specific Tool/Platform | Function | Application in Stem Cell Research |

|---|---|---|---|

| Raw Data Processing | Cell Ranger | Process 10x Genomics data, alignment, quantification | Foundational processing of stem cell scRNA-seq data [16] |

| Programming Environment | R/Python with Seurat/Scanpy | Statistical computing, analysis pipeline implementation | Flexible, customizable analysis of stem cell datasets [16] |

| Reference Databases | CellMarker, PanglaoDB | Cell type annotation references | Identification of stem cell and differentiated cell types [17] |

| Enrichment Analysis | clusterProfiler, GSEA | Functional interpretation of gene sets | Pathway analysis of stem cell signatures [17] |

| Collaborative Platform | CytoAnalyst | Web-based analysis with sharing capabilities | Multi-user stem cell projects, educational use [17] |

The computational landscape for scRNA-seq analysis has matured into a sophisticated ecosystem of interoperable tools and platforms that enable comprehensive investigation of stem cell biology. Foundational platforms such as Scanpy, Seurat, and SingleCellExperiment provide the analytical backbone, while specialized tools address specific challenges such as trajectory inference, RNA velocity, and multi-modal integration. As single-cell technologies continue to evolve toward increasingly multi-modal measurements, computational methods that can integrate across spatial, epigenetic, and transcriptomic data will be essential for unraveling the complex regulatory networks that govern stem cell fate decisions. The field is moving toward tools that are both computationally powerful and biologically interpretable, enabling deeper insights into stem cell biology with direct relevance to regenerative medicine and therapeutic development.

The Analytical Workflow in Action: A Step-by-Step Guide from Raw Data to Biological Insight

Data Pre-processing and Rigorous Quality Control for Stem Cell Datasets

Single-cell RNA sequencing (scRNA-seq) has revolutionized stem cell research by enabling the dissection of cellular heterogeneity and the identification of rare cell populations at unprecedented resolution [11]. Unlike bulk RNA sequencing, which provides population-averaged data, scRNA-seq captures gene expression profiles of individual cells, revealing cell subtypes and dynamic transitions that would otherwise be obscured [19]. However, the minute quantities of starting material and technical artifacts inherent in single-cell protocols introduce specific challenges that necessitate rigorous quality control (QC) and pre-processing pipelines [11]. This application note provides detailed methodologies for data pre-processing and quality control specifically tailored to stem cell scRNA-seq datasets, framed within a comprehensive computational analysis pipeline.

Key Quality Metrics and Thresholds for Stem Cell Data

Quality control begins with the computation and assessment of key metrics that reflect cell viability, sequencing depth, and technical artifacts. The table below summarizes critical QC parameters, their biological interpretations, and recommended filtering thresholds for stem cell datasets.

Table 1: Essential Quality Control Metrics for Stem Cell scRNA-seq Data

| QC Metric | Biological/Technical Interpretation | Recommended Threshold | Stem Cell Specific Considerations |

|---|---|---|---|

| Unique Gene Counts | Sequencing depth & transcriptional activity | Minimum: 200-500; Maximum: 2,500-5,000 [17] | Varies by stem cell type and differentiation state |

| UMI Counts | Capture efficiency & library complexity | Minimum: 500-1,000; Maximum: 10,000-25,000 [17] | High variance may indicate mixed populations |

| Mitochondrial Gene Percentage | Cellular stress & apoptosis | Typically <5-10% [17] | May increase during differentiation; monitor carefully |

| Ribosomal Gene Percentage | Cellular state & translational activity | Variable; often 5-20% | Can indicate specific metabolic states in stem cells |

| Cell Complexity (log10 Genes / log10 UMIs) | Technical quality | >0.8 often acceptable | Low values may indicate damaged cells or empty droplets |

For multi-sample stem cell experiments, quality metrics should be computed and visualized independently for each sample to identify batch-specific issues and apply sample-specific filtering thresholds when necessary [17]. Platforms like CytoAnalyst automatically generate interactive violin plots displaying distributions of these metrics across all cells, enabling dynamic threshold adjustment while observing effects on cell populations in real-time [17].
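A fixed-threshold filter based on the table above might look like the following sketch. It assumes the complexity score is defined as log10(genes) / log10(UMIs), a common definition for which a cutoff near 0.8 is typical. The threshold values are the lower bounds from the table and the cells are toy examples; in a multi-sample study a separate threshold dictionary could be passed for each sample.

```python
import math

def complexity(n_genes, n_umis):
    """log10(genes) / log10(UMIs); returns 0.0 when the ratio is undefined."""
    if n_genes < 1 or n_umis <= 1:
        return 0.0
    return math.log10(n_genes) / math.log10(n_umis)

# Illustrative defaults; adjust per sample after inspecting the distributions.
THRESHOLDS = {"min_genes": 200, "min_umis": 500, "max_pct_mito": 10.0, "min_complexity": 0.8}

def passes_qc(cell, t=THRESHOLDS):
    if cell["n_genes"] < t["min_genes"] or cell["n_umis"] < t["min_umis"]:
        return False
    if cell["pct_mito"] > t["max_pct_mito"]:
        return False
    return complexity(cell["n_genes"], cell["n_umis"]) >= t["min_complexity"]

cells = [
    {"n_genes": 1200, "n_umis": 4000, "pct_mito": 3.0},   # passes
    {"n_genes": 150, "n_umis": 3000, "pct_mito": 2.0},    # too few genes
    {"n_genes": 900, "n_umis": 2500, "pct_mito": 22.0},   # high mitochondrial share
    {"n_genes": 250, "n_umis": 20000, "pct_mito": 4.0},   # low complexity
]
kept = [passes_qc(c) for c in cells]
```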

scRNA-seq Protocols: Selection Considerations for Stem Cell Research

The choice of scRNA-seq protocol significantly impacts downstream quality control parameters and analytical approaches. Different methods offer distinct advantages in transcript coverage, cell throughput, and detection sensitivity that must be aligned with stem cell research objectives.

Table 2: Comparison of scRNA-seq Protocols Relevant to Stem Cell Research

| Protocol | Isolation Strategy | Transcript Coverage | UMI | Amplification Method | Stem Cell Research Applications |

|---|---|---|---|---|---|

| Smart-Seq2 [11] | FACS | Full-length | No | PCR | Enhanced sensitivity for low-abundance transcripts; ideal for detecting rare regulatory factors in stem cells |

| Drop-Seq [11] | Droplet-based | 3′-end | Yes | PCR | High-throughput profiling of heterogeneous stem cell populations; cost-effective for large-scale differentiation studies |

| inDrop [11] | Droplet-based | 3′-end | Yes | IVT | Efficient barcode capture; suitable for time-course experiments tracking differentiation trajectories |

| Seq-Well [11] | Nanowell-based | 3′-only | Yes | PCR | Portable platform for limited stem cell samples; minimal equipment requirements |

| Fluidigm C1 [11] | Microfluidics | Full-length | No | PCR | Precise cell handling for precious stem cell samples; enables integrated genomic analyses |

| SPLiT-Seq [11] | Combinatorial indexing | 3′-only | Yes | PCR | Fixed stem cell samples; eliminates dissociation bias; highly scalable for developmental atlases |

Full-length transcript protocols (Smart-Seq2, Fluidigm C1) offer advantages for isoform usage analysis and detection of allelic expression patterns crucial for understanding regulatory mechanisms in stem cells [11]. Droplet-based methods (Drop-Seq, inDrop) enable higher throughput at lower cost per cell, making them particularly valuable for capturing rare stem cell subtypes and comprehensive differentiation landscapes [11].

Experimental Workflow and Computational Pipeline

The following diagram illustrates the complete scRNA-seq data pre-processing and quality control workflow for stem cell datasets, from sample preparation to analysis-ready data:

Figure 1: scRNA-seq Data Pre-processing and QC Workflow for Stem Cell Research

Research Reagent Solutions for Stem Cell scRNA-seq

The following table details essential research reagents and computational tools critical for implementing robust stem cell scRNA-seq quality control pipelines:

Table 3: Essential Research Reagent Solutions for Stem Cell scRNA-seq QC

| Category | Product/Platform | Specific Function | Application Notes |

|---|---|---|---|

| Wet Lab Protocols | Smart-Seq2 [11] | Full-length transcript amplification | Maximizes detection of low-abundance transcripts; ideal for stem cell regulatory networks |

| Wet Lab Protocols | Drop-Seq [11] | High-throughput single-cell encapsulation | Enables analysis of thousands of stem cells; identifies rare subpopulations |

| Computational Tools | CytoAnalyst [17] | Web-based QC and analysis platform | Interactive quality metric visualization; real-time filtering; collaborative analysis |

| Computational Tools | LIANA [20] | Ligand-receptor analysis framework | Evaluates cell-cell communication in stem cell niches post-QC |

| Reference Databases | OmniPath [20] | Cell-cell communication interactions | Contextualizes stem cell signaling within microenvironment |

| Reference Databases | CellChatDB [20] | Ligand-receptor interaction repository | Specialized for signaling pathway analysis in development |

| Quality Control Metrics | Unique Molecular Identifiers (UMIs) [11] | Correction for amplification bias | Essential for accurate transcript quantification in stem cells |

| Quality Control Metrics | Mitochondrial gene sets [17] | Cell viability assessment | Critical for detecting stressed cells in stem cell preparations |

Signaling Pathways and Cell-Cell Communication Analysis

Following quality control, analysis of cell-cell communication provides critical insights into stem cell niche interactions and signaling pathways governing self-renewal and differentiation decisions. The following diagram illustrates key signaling pathways identifiable through scRNA-seq data after rigorous QC:

Figure 2: Stem Cell Signaling Pathways Analyzable via scRNA-seq Data

Resources for cell-cell communication inference vary in their coverage of key developmental pathways. For instance, the Notch and Wnt pathways, which are critical for stem cell fate decisions, are well represented across most resources, whereas some resources underrepresent specific pathways such as the T-cell receptor pathway, which may be relevant for immune-stem cell interactions [20]. Tools such as LIANA provide a unified framework for accessing multiple resources and methods, enabling comprehensive analysis of stem cell communication landscapes [20].
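The basic intuition behind ligand-receptor scoring can be sketched as the product of mean ligand expression in a sender population and mean receptor expression in a receiver population. This is a deliberately simple rule for illustration, not LIANA's API or any specific published method; real tools add specificity measures and significance testing. The cluster names and expression values are hypothetical.

```python
from statistics import mean

def lr_score(expr, sender, receiver, ligand, receptor):
    """Product of mean ligand expression (sender) and mean receptor expression (receiver)."""
    ligand_mean = mean(expr[sender][ligand])
    receptor_mean = mean(expr[receiver][receptor])
    return ligand_mean * receptor_mean

# Toy normalized expression: {cluster: {gene: [values per cell]}}.
expr = {
    "niche": {"DLL1": [2.0, 4.0], "NOTCH1": [0.0, 0.0]},
    "stem": {"DLL1": [0.0, 0.0], "NOTCH1": [1.0, 3.0]},
}
niche_to_stem = lr_score(expr, "niche", "stem", "DLL1", "NOTCH1")
stem_to_niche = lr_score(expr, "stem", "niche", "DLL1", "NOTCH1")
```

The score is directional: swapping sender and receiver gives zero here because the ligand is expressed only in the niche cluster.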

Implementation Considerations for Stem Cell Research

Successful implementation of scRNA-seq quality control pipelines for stem cell research requires additional considerations specific to stem cell biology. Stem cells often exhibit unique metabolic profiles that impact standard QC thresholds, particularly regarding mitochondrial content. During differentiation, temporary increases in mitochondrial gene percentage may reflect metabolic restructuring rather than cellular stress, necessitating adjusted thresholds or secondary validation [17].

For stem cell applications investigating rare populations or fine differentiation transitions, preprocessing should prioritize sensitivity. This may involve permissive filtering that tolerates retaining some low-quality cells rather than risking the removal of genuine rare cells, particularly when working with precious stem cell samples. Running multiple normalization approaches (e.g., log-normalization and SCTransform) in parallel through platforms like CytoAnalyst allows them to be compared to determine the optimal strategy for a specific stem cell question [17].

Batch effect correction requires particular attention in stem cell studies involving multiple differentiation experiments or time courses. Methods such as Harmony, RPCA, or CCA should be systematically evaluated to preserve biologically meaningful variation while removing technical artifacts [17]. The ability to maintain and compare multiple analysis instances facilitates this optimization process.

Rigorous quality control and standardized pre-processing pipelines form the essential foundation for reliable stem cell scRNA-seq research. By implementing the detailed protocols and metrics outlined in this application note, researchers can effectively address technical challenges while maximizing biological insights into stem cell heterogeneity, differentiation trajectories, and niche interactions. The integrated approach combining wet-lab protocols, computational QC tools, and signaling analysis frameworks enables robust interrogation of stem cell systems at single-cell resolution, supporting advances in developmental biology, regenerative medicine, and therapeutic development.

Data Normalization, Integration, and Batch Effect Correction in Multi-Sample Studies

In the context of stem cell scRNA-seq research, multi-sample studies are essential for robustly identifying novel stem cell subpopulations, understanding differentiation dynamics, and mapping developmental trajectories. The computational analysis of such datasets presents significant challenges in distinguishing genuine biological signals, such as transient progenitor states during stem cell differentiation, from technical artifacts introduced during sample processing. Technical variability or "batch effects" can arise from differences in sample preparation personnel, reagent lots, sequencing platforms, or processing dates, which can systematically mask the biological heterogeneity of interest in stem cell populations [21] [22]. Effective data normalization, integration, and batch effect correction therefore form a critical foundation for any computational pipeline aimed at extracting biologically meaningful insights from multi-sample stem cell studies. These preprocessing steps ensure that observed differences in gene expression truly reflect stem cell biology rather than technical confounders, enabling more accurate identification of stem cell states, lineage commitment markers, and molecular signatures of cellular potency.

Data Normalization: Foundations and Methods

The Necessity of Normalization in scRNA-seq Data

Normalization is a critical first step in scRNA-seq analysis that enables meaningful comparison of gene expression levels within and between individual cells. The raw count data generated from sequencing platforms are not directly comparable due to substantial technical variability, particularly in sequencing depth (library size), where orders-of-magnitude differences are commonly observed between cells [23]. Without appropriate normalization, these technical differences can become the dominant source of variation in the data, completely obscuring the biological signals of interest, such as the subtle transcriptional changes that occur during stem cell differentiation.

Single-cell RNA-sequencing data possess distinct characteristics that complicate their analysis, including an unusually high abundance of zero values (dropouts), increased cell-to-cell variability, and complex expression distributions [24]. This high intercellular variability stems from both biological factors (e.g., stochastic gene expression, cell cycle effects) and technical factors (e.g., capture efficiency, amplification bias). Effective normalization must account for these sources of variation while preserving genuine biological heterogeneity, which is particularly important in stem cell research where rare transitional states may be critical for understanding differentiation pathways.

Normalization Methodologies

Table 1: Common scRNA-seq Normalization Methods

| Method | Underlying Principle | Advantages | Limitations | Stem Cell Research Applications |

|---|---|---|---|---|

| CPM | Converts raw counts to counts per million by scaling by total counts | Simple, intuitive calculation | Sensitive to highly expressed genes; assumes total RNA content is constant | Initial data exploration; not recommended for complex multi-sample studies |

| SCTransform | Regularized negative binomial regression on UMIs with library size as covariate | Effectively stabilizes variance; eliminates influence of sequencing depth on PCA | Designed for UMI data; may oversmooth in extremely sparse datasets | Recommended for complex stem cell atlases with multiple samples and conditions |

| scran | Pooling-based deconvolution approach using linear combinations of cell pools | Robust to zero inflation; handles varying library sizes effectively | Computational intensity increases with sample size | Ideal for heterogeneous stem cell populations with varying RNA content |

| RLE (SF) | Median ratio method using geometric means across cells | Robust to differential expression patterns | Requires sufficient non-zero expression across cells | Suitable for well-sequenced stem cell cultures with lower dropout rates |

| TMM | Weighted trimmed mean of M-values relative to reference sample | Adjusts for RNA composition effects | Assumes most genes are not differentially expressed | Appropriate for controlled differentiation time-course experiments |

| Upper Quartile | Scales counts using upper quantile of expression distribution | Less sensitive to outliers than total sum scaling | Problematic with low-depth data with many zeros | Limited utility for sparse stem cell datasets |

In stem cell research, the choice of normalization method can significantly impact downstream interpretations. For example, when studying heterogeneous populations containing both quiescent and activated stem cells, methods like scran that explicitly account for varying RNA content are preferable [23]. Similarly, when analyzing large-scale stem cell atlases encompassing multiple cell lines and differentiation timepoints, SCTransform has demonstrated superior performance in removing the relationship between technical covariates and biological variation, thereby enhancing the detection of subtle transcriptional states [23].
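To show how a size-factor method differs from simple total-count scaling, the sketch below implements a median-of-ratios calculation in the spirit of the RLE row in the table above. It is a simplified illustration, not the code of any named package. It also makes the listed limitation visible: only genes detected in every cell can be used, so very sparse data leave few or no usable genes.

```python
import math
from statistics import median

def median_ratio_size_factors(counts):
    """counts: cells x genes list of lists. Returns one size factor per cell."""
    n_genes = len(counts[0])
    # The geometric mean is undefined with zeros, so keep genes detected in all cells.
    usable = [j for j in range(n_genes) if all(cell[j] > 0 for cell in counts)]
    if not usable:
        raise ValueError("no gene is detected in every cell; data too sparse")
    log_geo_mean = {
        j: sum(math.log(cell[j]) for cell in counts) / len(counts) for j in usable
    }
    return [
        math.exp(median([math.log(cell[j]) - log_geo_mean[j] for j in usable]))
        for cell in counts
    ]

# Toy data: the second cell was sequenced twice as deeply as the first;
# the last gene contains a dropout and is excluded from the calculation.
counts = [[10, 20, 30, 0], [20, 40, 60, 5]]
size_factors = median_ratio_size_factors(counts)
```

Dividing each cell's counts by its size factor puts the two cells on a common scale without letting a single highly expressed gene dominate the estimate.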

Batch Effect Correction and Data Integration

Understanding Batch Effects in Multi-Sample Studies

Batch effects represent systematic technical differences between datasets generated under different conditions, at different times, or by different personnel. In stem cell research, these effects are particularly problematic as they can mimic or obscure genuine biological signals, such as differences between stem cell lines, differentiation stages, or experimental conditions. Large scRNA-seq projects inevitably require data generation across multiple batches due to logistical constraints, making batch effect correction an essential step in the analytical pipeline [21].

The challenges of batch effect correction are particularly pronounced in stem cell biology due to the potential for both technical and biological differences between batches. For instance, if different stem cell lines are processed in different batches, it becomes difficult to distinguish expression differences attributable to genuine biological variation from those arising from technical artifacts. Computational removal of batch-to-batch variation enables researchers to combine data across multiple batches for consolidated analysis, thereby increasing statistical power and enabling more comprehensive characterization of stem cell heterogeneity [21].
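As a minimal illustration of what computational removal of batch-to-batch variation can mean, the sketch below rescales each gene so that its mean is identical in every batch, scaling down to the lowest batch mean. This is the general idea behind linear approaches such as rescaleBatches() in the batchelor package, but the code is a simplified, dependency-free Python approximation on made-up values, not that function's implementation; `rescale_batches` is a hypothetical name.

```python
def rescale_batches(batches):
    """Scale every gene so its mean matches the lowest mean across batches.

    `batches` is a list of matrices (rows = cells, columns = genes) that
    share the same gene order. Values are assumed to be normalized counts.
    """
    n_genes = len(batches[0][0])
    # Per-batch, per-gene mean expression.
    means = [[sum(cell[g] for cell in batch) / len(batch) for g in range(n_genes)]
             for batch in batches]
    # Target for each gene: the smallest batch mean (scaling down only).
    target = [min(m[g] for m in means) for g in range(n_genes)]

    rescaled = []
    for batch, batch_means in zip(batches, means):
        factors = [target[g] / batch_means[g] if batch_means[g] > 0 else 1.0
                   for g in range(n_genes)]
        rescaled.append([[cell[g] * factors[g] for g in range(n_genes)]
                         for cell in batch])
    return rescaled


# Two toy batches with two genes each; values are illustrative only.
batch_1 = [[2.0, 0.0], [4.0, 8.0]]   # gene means: 3.0, 4.0
batch_2 = [[6.0, 0.0], [6.0, 4.0]]   # gene means: 6.0, 2.0
adjusted_1, adjusted_2 = rescale_batches([batch_1, batch_2])
```

Because each value is multiplied by a non-negative factor, zeros stay zero. The approach is only appropriate when batches have similar population composition; otherwise a difference in batch means may be biological rather than technical.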

Integration Strategies for Multi-Sample Data

Table 2: Batch Effect Correction Methods for scRNA-seq Data

| Method | Algorithm Type | Key Features | Data Requirements | Performance Considerations |
|---|---|---|---|---|
| FastMNN | Nearest-neighbor based | Fast, memory-efficient; preserves biological heterogeneity | Requires selection of highly variable genes | High scalability; suitable for large stem cell atlases |
| Harmony | Iterative clustering and integration | Uses PCA for dimension reduction; iterative correction | Works on principal components | Effective for datasets with complex batch structure |
| Seurat (CCA) | Canonical correlation analysis | Identifies shared correlation structures across datasets | Requires comparable cell types across batches | Conservative approach; may retain some batch effects |
| Scanorama | Panorama stitching via mutual nearest neighbors | Handles multiple batches simultaneously | Automatic feature selection | Efficient for integrating multiple timepoints |
| ComBat | Linear model with empirical Bayes | Adjusts for known batches; can include biological covariates | Assumes balanced design across batches | Can be too aggressive if biological differences exist |
| rescaleBatches() | Linear regression | Removes batch effect by scaling batch means; preserves sparsity | Assumes similar population composition | Rapid processing; maintains matrix sparsity |

Several specialized tools have been developed specifically for batch correction of single-cell data that do not require a priori knowledge about cell population composition [21]. This feature is particularly valuable for exploratory analyses of stem cell datasets where the complete spectrum of cellular states may not be fully known in advance. The quickCorrect() function from the batchelor package, for instance, provides a streamlined workflow that performs data preparation, feature selection, and mutual nearest neighbors (MNN) correction in a unified framework [21].
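The core idea of MNN correction can be sketched without any package. The dependency-free Python example below finds mutual nearest neighbor pairs between two toy batches and uses them to estimate a batch shift. It is a deliberately simplified assumption-laden sketch: it applies a single average correction vector, whereas published MNN implementations use locally weighted corrections, and all function names and coordinates are hypothetical.

```python
import math


def nearest(query, reference, k):
    """Return indices of the k cells in `reference` closest to `query`."""
    order = sorted(range(len(reference)),
                   key=lambda j: math.dist(query, reference[j]))
    return set(order[:k])


def mutual_nearest_pairs(batch_a, batch_b, k=1):
    """Pairs (i, j) where cell i in A and cell j in B select each other."""
    a_to_b = [nearest(cell, batch_b, k) for cell in batch_a]
    b_to_a = [nearest(cell, batch_a, k) for cell in batch_b]
    return [(i, j)
            for i in range(len(batch_a))
            for j in sorted(a_to_b[i])
            if i in b_to_a[j]]


def mean_batch_vector(batch_a, batch_b, pairs):
    """Average difference (B minus A) over all MNN pairs."""
    if not pairs:
        raise ValueError("no mutual nearest neighbors found")
    n_dims = len(batch_a[0])
    return [sum(batch_b[j][d] - batch_a[i][d] for i, j in pairs) / len(pairs)
            for d in range(n_dims)]


# Toy low-dimensional coordinates; the third cell in A has no counterpart in B.
batch_a = [[0.0, 0.0], [5.0, 5.0], [20.0, 20.0]]
batch_b = [[1.0, 1.0], [6.0, 6.0]]  # same two populations, shifted by (1, 1)

pairs = mutual_nearest_pairs(batch_a, batch_b, k=1)
shift = mean_batch_vector(batch_a, batch_b, pairs)
corrected_b = [[x - s for x, s in zip(cell, shift)] for cell in batch_b]
```

The unmatched cell in the first batch forms no pair and therefore does not influence the estimated shift, which is why the approach does not require prior knowledge of population composition.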

Experimental Protocols and Workflows

Comprehensive Data Preprocessing Protocol

A robust preprocessing pipeline is essential for preparing high-quality stem cell scRNA-seq data for normalization and integration. The following protocol outlines key steps:

Step 1: Quality Control and Filtering

- Calculate quality metrics: Count depth, number of detected genes per cell, and mitochondrial read fraction [25]

- Identify and remove low-quality cells using multivariate thresholding

- Filter out droplets with unusually high UMI counts (potential multiplets) or high mitochondrial content (dying cells) [22]

- Expected outcomes: For healthy stem cell populations, mitochondrial percentage typically below 10-20%; minimum gene detection threshold varies by platform
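The Step 1 metrics and joint thresholding can be sketched in a few lines of dependency-free Python. The thresholds, the toy counts, and the function names below are illustrative assumptions; only the 20% mitochondrial ceiling is taken from the range given above, and the `MT-` prefix assumes human gene symbols.

```python
def qc_metrics(cell_counts, gene_names, mito_prefix="MT-"):
    """Compute count depth, detected genes, and mitochondrial fraction."""
    total = sum(cell_counts)
    detected = sum(1 for c in cell_counts if c > 0)
    mito = sum(c for c, g in zip(cell_counts, gene_names)
               if g.startswith(mito_prefix))
    return {
        "total_counts": total,
        "n_genes": detected,
        "mito_fraction": mito / total if total > 0 else 0.0,
    }


def passes_qc(metrics, min_genes, max_counts, max_mito_fraction):
    """Apply all thresholds jointly; a cell must satisfy every one."""
    return (metrics["n_genes"] >= min_genes
            and metrics["total_counts"] <= max_counts
            and metrics["mito_fraction"] <= max_mito_fraction)


gene_names = ["POU5F1", "SOX2", "MT-CO1", "MT-ND1"]
cells = [
    [50, 30, 5, 5],      # healthy-looking cell
    [5, 2, 40, 30],      # high mitochondrial fraction (possibly dying)
    [1, 0, 0, 0],        # very few genes detected (possibly empty droplet)
    [500, 400, 50, 50],  # unusually high depth (possible multiplet)
]

metrics = [qc_metrics(cell, gene_names) for cell in cells]
# Toy thresholds chosen for this four-gene example only.
keep = [i for i, m in enumerate(metrics)
        if passes_qc(m, min_genes=2, max_counts=500, max_mito_fraction=0.2)]
```

On real data the thresholds would be chosen per platform and per sample after inspecting the metric distributions, rather than fixed in advance.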

Step 2: Normalization Implementation

- Select appropriate normalization method based on data characteristics (refer to Table 1)

- For scran: Apply quick clustering and compute size factors using computeSumFactors()

- For SCTransform: Run SCTransform() with default parameters for UMI data

- Validate normalization effectiveness by examining the relationship between technical covariates and principal components
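The validation check in Step 2 amounts to asking whether a technical covariate still explains a leading axis of variation. A minimal, dependency-free Python sketch is shown below; the library sizes and PC1 scores are invented for illustration and would in practice come from the normalized dataset.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


# Hypothetical per-cell library sizes and PC1 scores (made-up numbers).
library_sizes = [1000, 2000, 3000, 4000]
pc1_before = [-3.0, -1.0, 1.0, 3.0]   # PC1 tracks sequencing depth
pc1_after = [1.0, -1.0, -1.0, 1.0]    # PC1 no longer tracks depth

r_before = pearson(library_sizes, pc1_before)
r_after = pearson(library_sizes, pc1_after)
```

A correlation near zero after normalization suggests that sequencing depth no longer drives the first component; a value that remains high indicates residual technical signal.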

Step 3: Feature Selection

- Identify highly variable genes (HVGs) using variance-stabilizing transformation

- Select 2,000-5,000 most variable genes for downstream analysis

- For multi-sample studies: Use combineVar() to average variance components across batches [21]
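The logic of averaging variability across batches before ranking genes can be sketched as follows. This dependency-free Python example is a simplification under stated assumptions: it averages the raw per-gene variance of log-expression values, whereas combineVar() operates on variance components from a fitted mean-variance trend. The function names and values are hypothetical.

```python
from statistics import variance


def per_gene_variance(batch):
    """Sample variance of each gene (column) within one batch."""
    n_genes = len(batch[0])
    return [variance([cell[g] for cell in batch]) for g in range(n_genes)]


def top_variable_genes(batches, n_top):
    """Rank genes by their variance averaged across batches."""
    per_batch = [per_gene_variance(batch) for batch in batches]
    n_genes = len(per_batch[0])
    mean_var = [sum(v[g] for v in per_batch) / len(per_batch)
                for g in range(n_genes)]
    ranked = sorted(range(n_genes), key=lambda g: mean_var[g], reverse=True)
    return ranked[:n_top], mean_var


# Toy log-expression matrices (rows = cells, columns = genes).
batch_1 = [[1.0, 0.0, 2.0], [1.0, 4.0, 2.5], [1.0, 2.0, 3.0]]
batch_2 = [[2.0, 1.0, 0.0], [2.0, 3.0, 1.0]]

hvg_indices, mean_var = top_variable_genes([batch_1, batch_2], n_top=2)
```

Gene 0 differs in level between the two batches but is constant within each, so it is not selected. Computing variance within batches and then averaging is what keeps batch-to-batch offsets from inflating the ranking.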

Step 4: Batch Correction

- Select integration method based on study design (refer to Table 2)

- For FastMNN: Run fastMNN() on selected HVGs

- For Harmony: Apply RunHarmony() on PCA embeddings

- Validate integration using cluster-specific batch mixing metrics

Step 5: Downstream Analysis

- Perform dimensionality reduction (PCA, UMAP, t-SNE) on integrated data

- Conduct clustering analysis using graph-based or density-based methods

- Identify cluster markers and annotate cell types

- Perform differential expression analysis across conditions

Multi-Sample Integration Protocol

For studies involving multiple stem cell samples, the following specialized protocol ensures effective integration:

Sample Preparation and Preprocessing

- Process each sample individually through QC and basic normalization

- Use multiBatchNorm() to rescale batches and adjust for systematic differences in coverage [21]

- Subset all batches to the common feature space

Feature Selection for Integration

- Perform feature selection using combineVar() to average variance components across batches

- Select a larger number of HVGs (e.g., 5,000) than typical single-dataset analyses

- This ensures retention of markers for sample-specific subpopulations that might be present

Integration Execution

- Choose correction algorithm based on dataset characteristics (see Table 2)

- For quickCorrect(): Apply to multiple SingleCellExperiment objects with specified HVGs

- Assess integration quality by examining mixing of batches in low-dimensional embeddings

Integration Quality Assessment

- Visualize batch distribution across clusters

- Calculate integration metrics (e.g., local inverse Simpson's index)

- Confirm preservation of biological variation while removing technical artifacts
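A batch mixing metric in the spirit of the local inverse Simpson's index can be sketched in dependency-free Python. The version below is a simplified assumption: it scores each cell by the inverse Simpson's index of batch labels among its k nearest neighbors with equal weights, whereas the published index weights neighbors with a distance-based kernel. The coordinates and the name `knn_batch_diversity` are hypothetical.

```python
import math
from collections import Counter


def inverse_simpson(labels):
    """Inverse Simpson's index: 1 / sum of squared label proportions."""
    n = len(labels)
    return 1.0 / sum((count / n) ** 2 for count in Counter(labels).values())


def knn_batch_diversity(embedding, batches, k):
    """Per-cell diversity of batch labels among the k nearest neighbors."""
    scores = []
    for i, cell in enumerate(embedding):
        others = [j for j in range(len(embedding)) if j != i]
        others.sort(key=lambda j: math.dist(cell, embedding[j]))
        scores.append(inverse_simpson([batches[j] for j in others[:k]]))
    return scores


# Well-mixed toy embedding: each cell has one neighbor from each batch.
mixed_embedding = [[0.0, 0.0], [1.0, 0.0], [1.0, 1.0], [0.0, 1.0]]
mixed_batches = ["A", "A", "B", "B"]
mixed_scores = knn_batch_diversity(mixed_embedding, mixed_batches, k=2)

# Poorly integrated toy embedding: batches occupy separate regions.
split_embedding = [[0.0], [0.1], [0.2], [10.0], [10.1], [10.2]]
split_batches = ["A", "A", "A", "B", "B", "B"]
split_scores = knn_batch_diversity(split_embedding, split_batches, k=2)
```

With two batches, scores close to 2 indicate that neighborhoods contain both batches in equal proportion, while scores of 1 indicate neighborhoods made up of a single batch. The same calculation applied to cell type labels, where low diversity is desirable, helps confirm that biological variation has been preserved.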

The Scientist's Toolkit: Essential Research Reagents and Computational Tools

Table 3: Essential Computational Tools for scRNA-seq Data Processing

| Tool/Package | Primary Function | Application Context | Key Features | Implementation |
|---|---|---|---|---|
| Scran | Normalization using pooled size factors | Single-cell specific normalization | Robust to zero inflation; deconvolution approach | R/Bioconductor |
| SCTransform | Normalization and variance stabilization | UMI-based datasets | Regularized negative binomial regression; eliminates depth influence | R/Seurat |
| batchelor | Batch correction using MNN | Multi-sample integration | FastMNN implementation; preserves biological heterogeneity | R/Bioconductor |
| Seurat | Comprehensive analysis suite | End-to-end workflow | CCA integration; SCTransform normalization; extensive visualization | R |
| Scanpy | Single-cell analysis in Python | Python-based workflows | BBKNN integration; scalable to very large datasets | Python |
| Harmony | Batch integration | Complex batch structures | Iterative clustering and correction; works on embeddings | R/Python |
| Cell Ranger | Primary data processing | 10x Genomics data | Alignment, barcode processing, count matrix generation | Command line |

Applications in Stem Cell Research

The normalization and integration methodologies described in this article enable several critical applications in stem cell biology. In developmental patterning studies, effective batch correction allows researchers to integrate scRNA-seq data from multiple embryonic timepoints, revealing continuous differentiation trajectories and identifying transient progenitor populations that would be impossible to detect in individual samples [11]. For disease modeling using induced pluripotent stem cells (iPSCs), these computational approaches enable robust comparison of patient-derived lines and controls, facilitating the identification of disease-relevant transcriptional signatures despite technical variability introduced during cellular reprogramming and differentiation.

In drug discovery applications, multi-sample integration methods allow researchers to combine scRNA-seq data from compound screening experiments conducted at different times or locations. This enables comprehensive assessment of how small molecules or biologics affect stem cell differentiation patterns and transcriptional states, accelerating the identification of compounds that direct stem cells toward therapeutically relevant fates [11]. Furthermore, as single-cell technologies continue to evolve toward multi-omic profiling, the normalization and integration frameworks established for transcriptomic data will provide a foundation for analyzing integrated datasets that simultaneously capture gene expression, chromatin accessibility, and protein abundance in stem cell populations.

Data normalization, integration, and batch effect correction constitute essential components of the computational analysis pipeline for stem cell scRNA-seq research. The methodologies and protocols outlined in this article provide a structured framework for addressing the technical challenges inherent in multi-sample studies, enabling researchers to focus on the biological questions of interest. As single-cell technologies continue to advance, producing increasingly large and complex datasets, the development of more sophisticated normalization and integration approaches will be crucial for unlocking the full potential of stem cell transcriptomics. By implementing these best practices, researchers can ensure that their findings reflect genuine stem cell biology rather than technical artifacts, accelerating progress in both basic stem cell biology and translational applications.

Dimensionality Reduction and Clustering to Uncover Distinct Stem Cell Subpopulations

A primary challenge in stem cell biology is the inherent heterogeneity within seemingly uniform cell populations [26]. Single-cell RNA sequencing (scRNA-seq) has emerged as a pivotal technology for deconvoluting this complexity, enabling researchers to measure the expression of thousands of genes across thousands of individual cells [27]. However, the high-dimensional, sparse, and noisy nature of scRNA-seq data necessitates robust computational methods for distillation and interpretation [27] [28]. Dimensionality reduction and clustering are two critical, interdependent steps in the downstream analysis pipeline that allow scientists to project data into an intelligible low-dimensional space and identify groups of cells with similar transcriptomic profiles, potentially representing distinct stem cell states, lineages, or functional subpopulations [27] [29]. Within the broader context of developing a computational pipeline for stem cell research, this protocol details the application of these methods to uncover biologically meaningful subpopulations, a capability with profound implications for understanding development, regeneration, and drug discovery.

Computational Methodology

Dimensionality reduction methods transform high-dimensional gene expression data into a lower-dimensional representation, preserving key patterns of cellular heterogeneity. These methods can be broadly categorized as follows:

- Linear Methods: These include classic techniques like Principal Component Analysis (PCA), which identifies the linear combinations of genes (principal components) that account for the maximum variance in the data [27] [30]. PCA is widely used as an initial denoising and data compression step.

- Non-Linear Methods: Designed to capture more complex, non-linear relationships in the data. t-Distributed Stochastic Neighbor Embedding (t-SNE) excels at revealing local structure and is known for producing visually distinct clusters [27] [30]. Uniform Manifold Approximation and Projection (UMAP) often preserves more of the global data structure than t-SNE and offers superior run-time performance, making it a current standard for visualization [27] [29].

- Model-Based and Neural Network Methods: These approaches explicitly model characteristics of scRNA-seq data. Zero-Inflated Factor Analysis (ZIFA) incorporates a model for dropout events [27]. More recently, deep learning models like Variational Autoencoders (VAEs) and the Boosting Autoencoder (BAE) offer powerful, flexible frameworks for non-linear dimension reduction that can incorporate structural assumptions, such as enforcing sparsity to identify small, explanatory gene sets [27] [31].
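To illustrate the linear case, the sketch below extracts the first principal component of a toy expression matrix by power iteration, using only the Python standard library. It is a teaching sketch under stated assumptions: the data are invented, the function names are hypothetical, and real analyses would rely on the optimized PCA routines in packages such as Seurat or Scanpy.

```python
import math


def center_columns(matrix):
    """Subtract each gene's (column's) mean across cells."""
    n_cells = len(matrix)
    means = [sum(row[g] for row in matrix) / n_cells
             for g in range(len(matrix[0]))]
    return [[x - m for x, m in zip(row, means)] for row in matrix]


def first_principal_component(matrix, n_iter=50):
    """Return (gene loadings, cell scores) for PC1 via power iteration."""
    centered = center_columns(matrix)
    n_genes = len(centered[0])
    vec = [1.0 / math.sqrt(n_genes)] * n_genes  # arbitrary unit-length start
    for _ in range(n_iter):
        # Multiply by X, then by X transposed (proportional to the covariance).
        scores = [sum(x * v for x, v in zip(row, vec)) for row in centered]
        new = [sum(row[g] * s for row, s in zip(centered, scores))
               for g in range(n_genes)]
        norm = math.sqrt(sum(x * x for x in new))
        if norm == 0.0:
            break  # no variance along the current direction
        vec = [x / norm for x in new]
    scores = [sum(x * v for x, v in zip(row, vec)) for row in centered]
    return vec, scores


# Toy matrix (rows = cells, columns = genes): genes 0 and 1 co-vary,
# gene 2 is constant and should receive no weight.
expression = [
    [0.0, 0.0, 5.0],
    [1.0, 1.0, 5.0],
    [2.0, 2.0, 5.0],
    [3.0, 3.0, 5.0],
]
loadings, pc1_scores = first_principal_component(expression)
```

The loadings place equal weight on the two co-varying genes and none on the constant gene, and the cell scores order the cells along that shared axis. This is the sense in which PCA compresses correlated genes into a single component before non-linear methods such as t-SNE or UMAP are applied.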

Table 1: Comparison of Common Dimensionality Reduction Methods for scRNA-seq Data

| Method | Category | Key Features | Best Use-Case | Considerations |

|---|---|---|---|---|