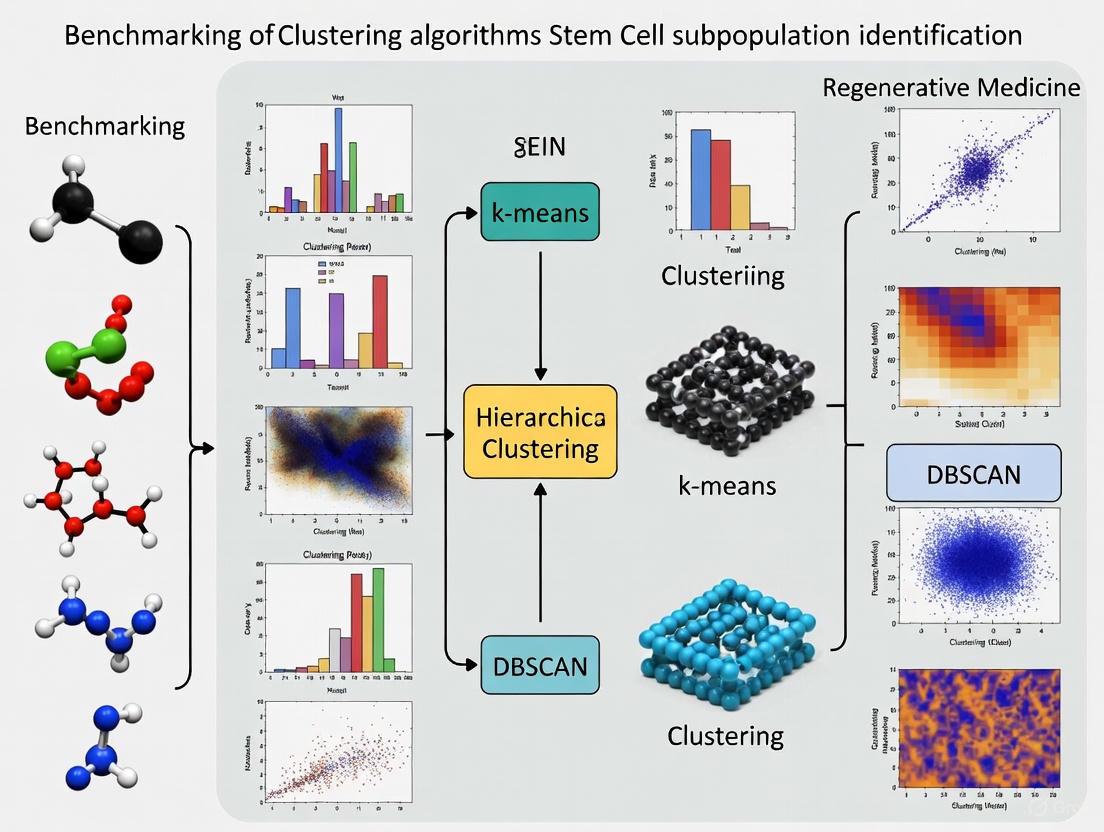

Benchmarking Clustering Algorithms for Stem Cell Subpopulation Identification: A Practical Guide for Single-Cell Data Analysis

Abstract

The accurate identification of stem cell subpopulations is crucial for advancing regenerative medicine, understanding disease mechanisms, and developing targeted therapies. This article provides a comprehensive benchmark of computational clustering algorithms for stem cell research, evaluating their performance on single-cell transcriptomic and proteomic data. We explore foundational concepts of stem cell heterogeneity and the critical role of clustering in delineating distinct cellular states. Based on recent large-scale benchmarking studies, we recommend top-performing algorithms like scAIDE, scDCC, and FlowSOM for their balanced performance across metrics. The article addresses common analytical challenges including parameter optimization, handling high-dimensional data, and integration of multi-omics information. Finally, we discuss validation strategies and future directions where artificial intelligence and systems biology are poised to transform stem cell analysis and clinical translation.

Understanding Stem Cell Heterogeneity and the Critical Role of Clustering in Single-Cell Analysis

Stem cell heterogeneity represents a fundamental biological characteristic with profound implications for both developmental biology and regenerative medicine. This phenomenon refers to the existence of distinct subpopulations within a stem cell pool, each possessing unique functional capacities, differentiation potentials, and molecular signatures. Far from being a uniform population, stem cells comprise a consortium of different cell types with distinct steady-state characteristics, including variations in self-renewal capacity, proliferation rates, differentiation bias, and lifespan [1]. This heterogeneity is not merely biological noise but serves critical functions in development, tissue maintenance, and response to injury or disease.

The recognition of stem cell heterogeneity has evolved significantly over time. Initially, stem cells were perceived as a homogeneous population with flexible behavior, but advanced single-cell technologies have revealed a more complex landscape [2]. For example, in the hematopoietic system, once thought to be sustained by a single type of flexible stem cell, we now know the compartment consists of a limited number of discrete stem cell subsets with epigenetically fixed differentiation and self-renewal programs [2]. This paradigm shift has forced a reevaluation of stem cell biology across tissues and has important consequences for therapeutic applications.

Understanding stem cell heterogeneity is particularly crucial for advancing cell-based therapies and regenerative medicine applications. The inherent variability in stem cell populations contributes significantly to the inconsistent outcomes observed in clinical trials [3] [4]. For mesenchymal stem cells (MSCs), heterogeneity manifests through multiple dimensions, including uncertainty in nomenclature, differences between donors, variations across tissue sources, and intercellular differences even within clonally derived populations [3]. Addressing these challenges requires sophisticated computational and experimental approaches to dissect and characterize the diverse subpopulations that constitute the stem cell compartment.

Computational Benchmarking of Clustering Algorithms

The Critical Role of Clustering in Heterogeneity Analysis

Single-cell RNA-sequencing (scRNA-seq) has revolutionized our ability to profile gene expression at individual cell resolution, enabling the precise characterization of stem cell heterogeneity [5] [6]. Clustering algorithms serve as fundamental computational tools in this process, allowing researchers to identify distinct cell subpopulations and estimate the number of unique cell types present in a given dataset [6]. The performance of these algorithms directly impacts the accuracy of stem cell subpopulation identification and consequently affects downstream biological interpretations.

The challenge of clustering single-cell data is compounded by the unique characteristics of different omics modalities. Single-cell proteomic data, for instance, often exhibits markedly different data distributions and feature dimensionalities compared to transcriptomic data, posing non-trivial challenges for applying clustering techniques uniformly across modalities [5]. As the field progresses toward multi-omics approaches, understanding the strengths and limitations of clustering algorithms across different data types becomes increasingly important.

Comprehensive Performance Evaluation

A recent systematic benchmark evaluation assessed 28 computational clustering algorithms on 10 paired transcriptomic and proteomic datasets, providing critical insights into their performance for stem cell heterogeneity research [5]. The study evaluated methods across multiple criteria, including clustering accuracy, robustness, running time, and peak memory usage. The results revealed that while numerous clustering algorithms have been developed for single-cell transcriptomic data, relatively few methods have been specifically tailored for single-cell proteomic data.

Table 1: Top-Performing Clustering Algorithms Across Omics Modalities

| Algorithm | Transcriptomic Performance (Rank) | Proteomic Performance (Rank) | Computational Profile | Key Strengths |
|---|---|---|---|---|
| scAIDE | 2nd | 1st | Moderate | Excellent cross-modality performance |
| scDCC | 1st | 2nd | High memory efficiency | Strong generalization across omics |
| FlowSOM | 3rd | 3rd | High robustness | Fast processing with consistent results |
| CarDEC | 4th | 16th | Variable | Transcriptomic-specific optimization |
| PARC | 5th | 18th | Variable | Limited proteomic performance |

The benchmarking results demonstrated that scAIDE, scDCC, and FlowSOM consistently achieved top performance across both transcriptomic and proteomic data types, suggesting strong generalization capabilities [5]. This cross-modality consistency is particularly valuable for stem cell researchers working with diverse data types. Importantly, the study revealed that algorithms performing well on one modality did not necessarily maintain their performance on another, highlighting the importance of selecting appropriate methods based on specific data characteristics.

Algorithm Performance Across Experimental Conditions

Further benchmarking examined how clustering algorithms perform under varying biological conditions relevant to stem cell research. A separate comprehensive evaluation focused on algorithm performance in estimating the number of cell types across datasets with different characteristics, including varying numbers of cell types, different cell counts per type, and imbalanced cell type proportions [6]. These conditions mirror the challenges faced when analyzing stem cell populations, where subpopulations may exist at different abundances and possess distinct transcriptional profiles.

Table 2: Algorithm Performance for Cell Type Number Estimation

| Algorithm | Estimation Bias | Performance with Imbalanced Populations | Stability Across Datasets | Recommended Use Cases |
|---|---|---|---|---|
| Monocle3 | Low deviation | Moderate | High | General purpose estimation |
| scLCA | Low deviation | Moderate | Moderate | Balanced population designs |
| scCCESS-SIMLR | Low deviation | Good | High | Complex population structures |
| SHARP | Underestimation | Poor | Moderate | Computational efficiency priority |
| SC3 | Overestimation | Moderate | Low | Exploration of potential subtypes |
| ACTIONet | Overestimation | Poor | Low | Large dataset exploration |

The findings revealed that methods exhibited different bias patterns, with some consistently overestimating (e.g., SC3, ACTIONet, Seurat) or underestimating (e.g., SHARP, densityCut) the number of cell types [6]. These biases can significantly impact stem cell research, potentially leading to either oversplitting of continuous differentiation trajectories or missing rare stem cell subpopulations. Methods such as Monocle3, scLCA, and scCCESS-SIMLR demonstrated more balanced performance with smaller median deviations from the true number of cell types [6].
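The notion of over- and underestimation can be made concrete with a small calculation. The sketch below is illustrative only: it assumes a signed relative deviation, (estimated - true) / true, summarized by its median across datasets, which may differ from the exact definition used in [6]. The function names and the example numbers are hypothetical.

```python
from statistics import median

def relative_deviation(k_estimated, k_true):
    """Signed relative deviation: > 0 is overestimation, < 0 is underestimation."""
    return (k_estimated - k_true) / k_true

def summarize_bias(estimates, truths):
    """Return the median deviation across datasets and a coarse bias label."""
    deviations = [relative_deviation(e, t) for e, t in zip(estimates, truths)]
    med = median(deviations)
    if med > 0:
        label = "overestimation"
    elif med < 0:
        label = "underestimation"
    else:
        label = "unbiased"
    return med, label

# Hypothetical example: true and estimated cluster counts on four datasets.
true_counts = [5, 8, 10, 12]
estimated_counts = [7, 11, 13, 15]
median_dev, bias_label = summarize_bias(estimated_counts, true_counts)
print(f"median deviation = {median_dev:.4f} ({bias_label})")
```

A method whose median deviation stays close to zero across datasets with different numbers and proportions of cell types would fall into the "low deviation" group of Table 2.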

Experimental Methodologies for Resolving Heterogeneity

Single-Cell RNA Sequencing Workflows

The characterization of stem cell heterogeneity relies heavily on robust experimental methodologies that enable resolution at the single-cell level. Single-cell RNA sequencing (scRNA-seq) has emerged as a cornerstone technology for profiling the transcriptomic landscape of individual cells within heterogeneous stem cell populations [6]. A typical scRNA-seq workflow begins with the preparation of a single-cell suspension from stem cell cultures or primary tissues, followed by cell capture, reverse transcription, cDNA amplification, library preparation, and high-throughput sequencing.

Proper experimental design is critical when studying stem cell heterogeneity. Factors such as cell viability, capture efficiency, sequencing depth, and batch effects can significantly impact the ability to resolve biologically meaningful subpopulations. For stem cells specifically, cell cycle status, differentiation stage, and metabolic state must be incorporated into experimental planning, as these factors contribute substantially to observed heterogeneity [7] [1]. Following data generation, quality control metrics including reads per cell, percentage of mitochondrial genes, and detection of housekeeping genes should be rigorously assessed to ensure data reliability.
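As a minimal sketch of the quality control step, the snippet below computes total counts, number of detected genes, and the percentage of mitochondrial counts for one cell. It assumes the cell is given as a gene-to-count dictionary and that mitochondrial genes carry the `MT-` prefix (human) or `mt-` prefix (mouse). The thresholds in `passes_qc` are hypothetical placeholders, not recommended values; suitable cutoffs depend on tissue and protocol.

```python
from typing import Dict

def qc_metrics(counts: Dict[str, int]) -> Dict[str, float]:
    """Per-cell QC summary from a gene -> raw count mapping."""
    total = sum(counts.values())
    # Mitochondrial genes are identified by symbol prefix (assumption).
    mito = sum(c for gene, c in counts.items() if gene.upper().startswith("MT-"))
    n_genes = sum(1 for c in counts.values() if c > 0)
    pct_mito = 100.0 * mito / total if total else 0.0
    return {"total_counts": total, "n_genes": n_genes, "pct_mito": pct_mito}

def passes_qc(metrics, min_counts=500, min_genes=200, max_pct_mito=20.0):
    """Apply illustrative thresholds (hypothetical defaults)."""
    return (
        metrics["total_counts"] >= min_counts
        and metrics["n_genes"] >= min_genes
        and metrics["pct_mito"] <= max_pct_mito
    )

# Toy cell with six genes, so the gene threshold is lowered for the demo.
cell = {"MT-CO1": 30, "MT-ND1": 20, "ACTB": 400, "GAPDH": 350, "NANOG": 0, "POU5F1": 200}
metrics = qc_metrics(cell)
keep = passes_qc(metrics, min_counts=500, min_genes=3, max_pct_mito=10.0)
print(metrics, keep)
```

In practice these metrics are computed for every cell at once with a dedicated single-cell toolkit; the point here is only to show what each metric measures.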

Surface Protein Profiling with CITE-seq

While scRNA-seq provides comprehensive transcriptomic information, the addition of surface protein profiling through technologies such as CITE-seq (Cellular Indexing of Transcriptomes and Epitopes by Sequencing) enables simultaneous measurement of mRNA and protein expression in individual cells [5]. This multi-modal approach is particularly valuable for stem cell research, as protein expression often more closely reflects functional cellular states than transcript levels alone.

The CITE-seq methodology involves labeling cells with oligonucleotide-tagged antibodies against specific surface markers, followed by simultaneous capture of transcriptomic and proteomic information using standard single-cell sequencing platforms [5]. For stem cell applications, panels of antibodies targeting known stem cell markers (e.g., CD90, CD73, CD105 for MSCs) can be combined with antibodies against differentiation markers to resolve heterogeneity along developmental trajectories. The resulting multi-modal data provides complementary information that enhances the identification of functionally distinct subpopulations within heterogeneous stem cell cultures.

Functional Validation Approaches

Following computational identification of putative stem cell subpopulations, functional validation remains essential to establish biological significance. In vitro differentiation assays represent a cornerstone approach for validating functional heterogeneity within stem cell populations. The standard trilineage differentiation assay for MSCs, as defined by International Society for Cell & Gene Therapy (ISCT) criteria, evaluates adipogenic, osteogenic, and chondrogenic differentiation potential [3] [8] [4].

Clonal tracking methods provide another powerful approach for validating stem cell heterogeneity. Through genetic barcoding or lineage tracing, researchers can directly monitor the differentiation potential and self-renewal capacity of individual stem cells over time [2] [1]. These studies have been instrumental in demonstrating the existence of preprogrammed hematopoietic stem cell subsets with distinct differentiation biases [2]. Similarly, in vivo transplantation assays remain the gold standard for assessing functional stem cell activity, particularly for hematopoietic stem cells, where reconstitution capacity can be quantitatively measured in recipient models [2].

Research Reagent Solutions for Heterogeneity Studies

The experimental and computational approaches for analyzing stem cell heterogeneity depend on a suite of specialized reagents and tools. The following table outlines essential research reagent solutions for designing robust studies of stem cell heterogeneity.

Table 3: Essential Research Reagents for Stem Cell Heterogeneity Studies

| Reagent Category | Specific Examples | Function in Heterogeneity Studies | Application Notes |
|---|---|---|---|
| Surface Marker Antibodies | CD105, CD73, CD90, CD45, CD34, CD14 | Identification and isolation of stem cell populations using ISCT criteria [3] [8] | Essential for flow cytometry and CITE-seq experiments; validate specificity for each species |
| Oligonucleotide-Tagged Antibodies | CITE-seq antibodies | Simultaneous protein and RNA measurement at single-cell level [5] | Enables multi-omics approaches; requires compatibility with sequencing platform |
| Cell Culture Supplements | FGF, EGF, TGF-β inhibitors | Maintenance of stemness or directed differentiation [7] [2] | Different stem cell subpopulations may have distinct growth factor requirements |
| Cell Separation Matrices | Ficoll, Percoll, BSA gradients | Enrichment of specific subpopulations based on density [4] | Can reduce cellular stress compared to fluorescence-activated cell sorting |
| Single-Cell Library Preparation Kits | 10x Genomics, Parse Biosciences | Generation of barcoded libraries for single-cell sequencing [5] [6] | Choice affects cell throughput, sequencing depth, and cost considerations |
| Lineage Tracing Systems | Genetic barcodes, Cre-lox, Fluorescent reporters | Tracking clonal dynamics and differentiation trajectories [2] [1] | Critical for functional validation of computationally identified subpopulations |

The selection of appropriate reagents should be guided by the specific stem cell type under investigation and the particular aspects of heterogeneity being studied. For example, the study of age-related heterogeneity in hematopoietic stem cells requires different marker panels (e.g., CD41, CD150) than the analysis of mesenchymal stem cell subpopulations [2] [1]. Similarly, the investigation of pluripotent stem cell heterogeneity necessitates reagents specific to pluripotency markers (e.g., OCT4, NANOG, SOX2) and early lineage commitment [7].

Biological Implications of Stem Cell Heterogeneity

Developmental Regulation and Fate Decisions

Stem cell heterogeneity is not merely biological noise but serves crucial functions in development and tissue homeostasis. Emerging evidence indicates that multiple aspects of cellular physiology, including epigenetic regulation, transcriptional networks, mitotic behavior, signal transduction, and metabolic pathways, differ among heterogeneous stem cells [1]. These differences enable stem cell populations to participate in multilineage differentiation throughout life and maintain homeostasis or remodel tissues in response to physiological changes.

In the hematopoietic system, heterogeneity is developmentally regulated, with different stem cell subsets dominating at various life stages [2]. Lymphoid-biased HSCs are found predominantly early in life, while myeloid-biased HSCs accumulate in aged organisms, contributing to age-related changes in immune function [2] [1]. This programmed heterogeneity has profound implications for understanding developmental biology and age-related diseases. Similarly, in mesenchymal stem cells, heterogeneity reflects developmental origins, with cells from different tissue sources (bone marrow, adipose tissue, umbilical cord) exhibiting distinct gene expression profiles and functional properties [3] [4].

Implications for Regenerative Medicine

The inherent heterogeneity of stem cell populations presents both challenges and opportunities for regenerative medicine applications. On one hand, heterogeneity contributes to inconsistent outcomes in clinical trials of MSC-based therapies, making it difficult to predict and replicate therapeutic effects [3] [8] [4]. Different MSC subpopulations may exhibit varying potencies for specific therapeutic applications, such as immunomodulation, tissue repair, or angiogenesis.

On the other hand, understanding and harnessing heterogeneity could lead to more targeted and effective therapies. For example, the identification of specific subpopulations with enhanced immunomodulatory capacity or trophic factor secretion could enable purification of cells optimized for particular clinical indications [4]. Strategies to address heterogeneity challenges in clinical applications include donor cell pooling to reduce inter-donor variability, functional pre-screening of cell batches, and development of more precise characterization methods that go beyond surface marker expression to include functional potency assays [8] [4].

The challenge of stem cell heterogeneity represents both a fundamental biological phenomenon and a significant technical hurdle in the field of regenerative medicine. Through the integration of advanced computational approaches, particularly sophisticated clustering algorithms like scAIDE, scDCC, and FlowSOM, with multi-omics experimental methodologies, researchers are making steady progress in resolving the complexity of stem cell populations. The benchmarking studies summarized in this review provide critical guidance for selecting appropriate analytical tools based on specific data modalities and research questions.

As our understanding of stem cell heterogeneity deepens, it becomes increasingly clear that this diversity is not merely biological noise but rather a functionally important feature of stem cell populations. The regulated heterogeneity enables flexible responses to developmental cues, tissue damage, and aging processes. For clinical translation, addressing heterogeneity through improved characterization, standardization, and potentially subpopulation selection will be essential for developing more consistent and effective stem cell-based therapies. The continued refinement of both computational and experimental approaches for dissecting stem cell heterogeneity will undoubtedly yield new insights into basic biology and accelerate the development of regenerative medicine applications.

In the rapidly evolving field of regenerative medicine, accurately identifying and characterizing cellular subpopulations is a fundamental prerequisite for developing effective therapies. Single-cell RNA sequencing (scRNA-seq) has revolutionized our ability to profile gene expression in individual cells, enabling researchers to dissect cellular heterogeneity within complex tissues. Clustering algorithms serve as the computational backbone for this process, transforming high-dimensional transcriptomic data into biologically meaningful cell type classifications. This step is critical: the precise definition of cellular identity directly influences downstream applications, including stem cell differentiation protocols, disease modeling, and the identification of novel therapeutic targets.

Despite technological advancements, clustering remains a challenging endeavor due to the inherent complexity and high dimensionality of single-cell data. The performance of clustering algorithms varies significantly across different biological contexts, data types, and computational parameters. Recent comprehensive benchmarking studies have revealed that no single algorithm consistently outperforms others across all scenarios, highlighting the need for careful method selection tailored to specific research goals in regenerative medicine [5]. This guide provides an objective comparison of clustering performance, experimental protocols, and practical implementation guidelines to empower researchers in making informed decisions for their stem cell research.

Benchmarking Clustering Performance: A Quantitative Comparison

Comprehensive Algorithm Performance Assessment

A systematic benchmark evaluation of 28 computational clustering algorithms was conducted on 10 paired transcriptomic and proteomic datasets, providing robust performance comparisons across multiple metrics. The evaluation employed standardized measures including Adjusted Rand Index (ARI), Normalized Mutual Information (NMI), Clustering Accuracy (CA), and Purity to ensure comprehensive assessment [5]. The table below summarizes the top-performing algorithms based on their overall rankings:

Table 1: Top-Performing Clustering Algorithms Across Single-Cell Omics Data

| Algorithm | Overall Ranking (Transcriptomics) | Overall Ranking (Proteomics) | Key Strengths | Computational Profile |
|---|---|---|---|---|
| scAIDE | 2nd | 1st | Superior performance across omics, excellent for heterogeneous populations | Balanced efficiency |
| scDCC | 1st | 2nd | Top transcriptomic performance, memory-efficient | Memory efficient |
| FlowSOM | 3rd | 3rd | Excellent robustness, maintains performance across data types | Time efficient |
| CarDEC | 4th | 16th | Strong transcriptomic performance | Variable performance |
| PARC | 5th | 18th | Effective for specific transcriptomic applications | Context-dependent |

The benchmarking analysis revealed that scAIDE, scDCC, and FlowSOM demonstrated consistent top-tier performance across both transcriptomic and proteomic data, suggesting strong generalization capabilities across different omics modalities [5]. Interestingly, some methods that performed exceptionally well on transcriptomic data (e.g., CarDEC and PARC) showed significantly reduced effectiveness on proteomic data, highlighting the modality-specific strengths of certain algorithms.

Performance Across Computational Metrics

Beyond overall accuracy, the benchmarking study evaluated critical computational resources including peak memory usage and running time, providing practical insights for researchers working with large-scale datasets:

Table 2: Computational Efficiency of Leading Clustering Algorithms

| Algorithm | Memory Efficiency | Time Efficiency | Recommended Use Case |
|---|---|---|---|
| scDCC | Excellent | Moderate | Large datasets with limited RAM |
| scDeepCluster | Excellent | Moderate | Memory-constrained environments |
| TSCAN | Moderate | Excellent | Rapid prototyping |
| SHARP | Moderate | Excellent | Time-sensitive projects |
| MarkovHC | Moderate | Excellent | Quick iterative analyses |
| Leiden | Good | Good | Balanced workflows |
| Louvain | Good | Good | General-purpose applications |

For researchers prioritizing computational efficiency, scDCC and scDeepCluster offer excellent memory efficiency, while TSCAN, SHARP, and MarkovHC provide superior time efficiency [5]. Community detection-based methods like Leiden and Louvain strike a reasonable balance between both dimensions, making them suitable for general-purpose applications in regenerative medicine research.

Experimental Protocols and Methodologies

Standardized Benchmarking Framework

The comparative benchmarking study employed a rigorous methodology to ensure fair and informative algorithm evaluation. The experimental protocol encompassed several critical phases:

Dataset Curation and Preparation: Ten real datasets across five tissue types encompassing over 50 cell types and more than 300,000 cells were obtained from SPDB (the largest single-cell proteomic database) and Seurat v3 [5]. These datasets included paired single-cell mRNA expression and surface protein expression data generated using multi-omics technologies (CITE-seq, ECCITE-seq, and Abseq), ensuring identical biological conditions across omics modalities for comparable analysis.

Algorithm Selection and Configuration: The study evaluated 28 clustering algorithms representing diverse computational approaches: 15 classical machine learning-based methods, 6 community detection-based methods, and 7 deep learning-based methods [5]. Most methods were developed after 2020, representing current state-of-the-art approaches. Each algorithm was applied according to its recommended settings with standardized preprocessing to ensure comparability.

Evaluation Metrics and Validation: Multiple validation metrics were employed including ARI, NMI, CA, and Purity. The robustness assessment utilized 30 simulated datasets with varying noise levels and dataset sizes to evaluate method stability under different conditions [5]. Additionally, the impact of highly variable genes (HVGs) and cell type granularity on clustering performance was systematically investigated.
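To make the external validation metrics concrete, the following sketch implements ARI, NMI, and Purity from their standard definitions using only the Python standard library. It is an illustration, not the benchmark's code: the NMI normalization by the arithmetic mean of the two entropies is an assumption (other normalizations exist), and Clustering Accuracy is omitted because it additionally requires an optimal one-to-one matching between clusters and labels.

```python
from collections import Counter
from math import comb, log

def adjusted_rand_index(true, pred):
    """ARI computed from the contingency table of two labelings."""
    n = len(true)
    if n < 2:
        return 1.0
    sum_ij = sum(comb(c, 2) for c in Counter(zip(true, pred)).values())
    sum_a = sum(comb(c, 2) for c in Counter(true).values())
    sum_b = sum(comb(c, 2) for c in Counter(pred).values())
    expected = sum_a * sum_b / comb(n, 2)
    max_index = (sum_a + sum_b) / 2
    if max_index == expected:
        return 1.0
    return (sum_ij - expected) / (max_index - expected)

def entropy(labels):
    n = len(labels)
    return -sum((c / n) * log(c / n) for c in Counter(labels).values())

def normalized_mutual_info(true, pred):
    """Mutual information divided by the mean of the two label entropies."""
    n = len(true)
    a, b = Counter(true), Counter(pred)
    mi = sum(
        (c / n) * log(c * n / (a[t] * b[p]))
        for (t, p), c in Counter(zip(true, pred)).items()
    )
    denom = (entropy(true) + entropy(pred)) / 2
    return mi / denom if denom > 0 else 1.0

def purity(true, pred):
    """Fraction of cells that belong to the majority true label of their cluster."""
    best = Counter()
    for (t, p), c in Counter(zip(true, pred)).items():
        best[p] = max(best[p], c)
    return sum(best.values()) / len(true)

# Toy example: one cell of type 0 is assigned to the wrong cluster.
true_labels = [0, 0, 0, 1, 1, 1]
pred_labels = [0, 0, 1, 1, 1, 1]
ari = adjusted_rand_index(true_labels, pred_labels)
nmi = normalized_mutual_info(true_labels, pred_labels)
pur = purity(true_labels, pred_labels)
print(f"ARI={ari:.3f} NMI={nmi:.3f} Purity={pur:.3f}")
```

All three metrics are invariant to how cluster labels are numbered, which is why a clustering that swaps label 0 and label 1 but groups the cells correctly still scores 1.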

Parameter Optimization Framework

A specialized study focused on clustering parameter optimization utilized intrinsic goodness metrics to predict clustering accuracy across different parameter configurations. The experimental approach included:

Dataset Selection: Three datasets with ground truth cell annotations from distinct anatomical districts (liver, skeletal muscle, and kidney) were selected from the CellTypist organ atlas to ensure biologically reliable labels independent of annotation algorithms [9].

Clustering Methods and Parameters: The investigation employed two clustering methods: the Leiden algorithm and the Deep Embedding for Single-cell Clustering (DESC) algorithm [9]. Parameters including resolution, number of nearest neighbors, dimensionality reduction approach, and number of principal components were systematically varied.

Linear Modeling and Metric Evaluation: A robust linear mixed regression model analyzed the impact of clustering parameters on accuracy [9]. Fifteen intrinsic measures were calculated and used to train an ElasticNet regression model in both intra- and cross-dataset approaches to evaluate accuracy prediction potential.
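A systematic parameter sweep of this kind can be organized as a simple grid. The sketch below enumerates all combinations of the four parameters named above; the specific values and the key names (`resolution`, `n_neighbors`, `n_pcs`, `graph_embedding`) are hypothetical and are not the settings used in [9].

```python
from itertools import product

# Hypothetical grid; the actual values explored in the study are not given here.
param_grid = {
    "resolution": [0.5, 1.0, 2.0],
    "n_neighbors": [10, 15, 30],
    "n_pcs": [20, 50],
    "graph_embedding": ["pca", "umap"],
}

def iter_configs(grid):
    """Yield one dict per combination of parameter values."""
    keys = list(grid)
    for values in product(*(grid[k] for k in keys)):
        yield dict(zip(keys, values))

configs = list(iter_configs(param_grid))
print(len(configs), "configurations; first:", configs[0])
```

Each configuration would then be passed to the clustering method of choice, and the resulting partition scored either against ground truth labels or with intrinsic metrics.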

[Figure: workflow of the clustering parameter analysis]

Enhanced Consensus Clustering for Single-Cell Methylation Data

For single-cell DNA methylation data, the scMelody algorithm employs an enhanced consensus-based clustering model that addresses limitations of single-similarity measures:

Similarity Reconstruction: scMelody utilizes multiple basic similarity measures to reconstruct cell-to-cell methylation similarity patterns, capturing more complete cellular heterogeneity than single-metric approaches [10].

Dual Weighting Strategy: The method incorporates a regularization process and dual weighting strategy that balances both diversity and separability of basic clustering partitions, improving consensus matrix construction [10].

Validation Framework: The algorithm was assessed on seven distinct real single-cell methylation datasets with known cell types, plus synthetic datasets with varying cell numbers, cluster numbers, and CpG dropout proportions to evaluate robustness [10].
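The general idea of consensus clustering can be sketched with a weighted co-association matrix: each base partition votes on whether two cells belong together, and the votes are combined with per-partition weights. The code below is a generic illustration under that assumption. It does not reproduce scMelody's similarity measures, regularization, or dual weighting strategy, and the weights shown are hypothetical.

```python
def weighted_consensus(partitions, weights):
    """Weighted fraction of base partitions that co-cluster each pair of cells."""
    n = len(partitions[0])
    total = sum(weights)
    consensus = [[0.0] * n for _ in range(n)]
    for labels, w in zip(partitions, weights):
        for i in range(n):
            for j in range(n):
                if labels[i] == labels[j]:
                    consensus[i][j] += w / total
    return consensus

# Three base partitions of four cells, e.g. obtained from different similarity measures.
base_partitions = [
    [0, 0, 1, 1],
    [0, 0, 0, 1],
    [0, 1, 1, 1],
]
partition_weights = [2.0, 1.0, 1.0]  # hypothetical quality weights
consensus = weighted_consensus(base_partitions, partition_weights)
for row in consensus:
    print(row)
```

The consensus matrix can then be clustered itself, for example hierarchically, to obtain a final partition that is less sensitive to the weaknesses of any single similarity measure.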

The enhanced consensus approach demonstrates how integrating multiple similarity measures can overcome the limitations of individual metrics.

Essential Research Reagent Solutions

Implementing effective clustering workflows requires both computational tools and wet-lab reagents that ensure high-quality input data. The following table details key solutions for single-cell research in regenerative medicine:

Table 3: Essential Research Reagent Solutions for Single-Cell Clustering Studies

| Reagent/Resource | Function | Application in Regenerative Medicine |
|---|---|---|
| CellTypist Organ Atlas | Provides meticulously curated cell annotations with ground truth labels | Benchmarking clustering performance against reliable biological standards [9] |
| CITE-seq Reagents | Simultaneous measurement of mRNA and surface protein expression | Paired transcriptomic and proteomic data generation for multi-modal clustering [5] |
| scBS/scRRBS/scWGBS Kits | Single-cell DNA methylation sequencing | Epigenetic heterogeneity analysis in stem cell populations [10] |
| SPDB Database | Largest single-cell proteomic database | Access to diverse proteomic datasets for method validation [5] |
| Highly Variable Gene Selection Tools | Identification of informative features for clustering | Improved clustering efficiency and biological relevance [5] |

Key Findings and Practical Recommendations

Optimal Parameter Configuration

The parameter optimization study yielded several insights for practical implementation. Using UMAP for neighborhood graph generation, combined with a higher resolution parameter, improved accuracy [9]. The effect of resolution is particularly pronounced with fewer nearest neighbors, which yield sparser, more locally sensitive graphs that better preserve fine-grained cellular relationships. Testing different numbers of principal components is also essential, because the best choice depends strongly on data complexity.

The study identified that within-cluster dispersion and the Banfield-Raftery index serve as effective intrinsic proxies for accuracy, enabling rapid comparison of different parameter configurations without requiring ground truth labels [9]. This approach facilitates more biologically plausible clustering outcomes in scenarios where cell type information is incomplete or unknown.
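Both intrinsic measures can be computed from the data and the cluster labels alone. The sketch below assumes the common definitions: within-cluster dispersion as the total squared distance of points to their cluster centroid, and the Banfield-Raftery index as the sum over clusters of n_k · ln(W_k / n_k), where W_k is the dispersion of cluster k. Skipping clusters with zero dispersion (such as singletons) is a convenience choice made here, and the exact variants used in [9] may differ.

```python
from math import log

def cluster_dispersion(points):
    """Sum of squared distances of the points to their centroid."""
    n, d = len(points), len(points[0])
    centroid = [sum(p[k] for p in points) / n for k in range(d)]
    return sum((p[k] - centroid[k]) ** 2 for p in points for k in range(d))

def intrinsic_scores(X, labels):
    """Return (within-cluster dispersion, Banfield-Raftery index)."""
    groups = {}
    for x, label in zip(X, labels):
        groups.setdefault(label, []).append(x)
    wgss, banfield_raftery = 0.0, 0.0
    for pts in groups.values():
        disp = cluster_dispersion(pts)
        wgss += disp
        if disp > 0:  # the log term is undefined for zero dispersion
            banfield_raftery += len(pts) * log(disp / len(pts))
    return wgss, banfield_raftery

# Toy embedding of four cells, scored under two candidate partitions.
X = [(0.0, 0.0), (2.0, 0.0), (10.0, 10.0), (10.0, 14.0)]
wgss_good, br_good = intrinsic_scores(X, [0, 0, 1, 1])
wgss_bad, br_bad = intrinsic_scores(X, [0, 1, 0, 1])
print(wgss_good, br_good)
print(wgss_bad, br_bad)
```

For both measures, lower values indicate more compact clusters. Within-cluster dispersion tends to shrink as the number of clusters grows, so comparisons are most informative between configurations that yield similar cluster counts.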

Algorithm Selection Guidelines

Based on the comprehensive benchmarking results, the following recommendations emerge for regenerative medicine applications:

- For top overall performance across both transcriptomic and proteomic data, prioritize scAIDE, scDCC, or FlowSOM [5]

- For memory-constrained environments, select scDCC or scDeepCluster due to their excellent memory efficiency [5]

- For time-sensitive projects, choose TSCAN, SHARP, or MarkovHC for their exceptional time efficiency [5]

- For single-cell methylation data, consider scMelody for its enhanced consensus approach and robust performance across diverse datasets [10]

- For general-purpose applications with balanced requirements, community detection-based methods (Leiden, Louvain) offer reliable performance [5]

Implications for Regenerative Medicine

The advancements in clustering methodologies have profound implications for regenerative medicine. AI-powered clustering can accelerate therapy development by analyzing complex molecular patterns in stem cell populations, identifying novel subpopulations, and predicting differentiation outcomes [11]. As single-cell technologies continue to evolve, incorporating multi-omic data integration and leveraging intrinsic validation metrics will be crucial for unlocking deeper insights into cellular identity and function in regenerative processes.

Single-cell technologies have fundamentally transformed stem cell research by enabling the examination of individual cells, the fundamental units of biological tissues and organs [12]. These technologies open new pathways for acquiring cell-specific data and gaining insights into the molecular pathways governing organ function and biology [12]. Traditional bulk omics approaches average signals from heterogeneous cell populations, thereby obscuring important cellular nuances and rare cell populations that are critical for understanding stem cell biology [13]. The ability to analyze individual cells reveals diverse cell types, dynamic cellular states, and rare stem cell populations, providing unprecedented resolution for unraveling cellular heterogeneity and complexity [13].

Single-cell technology is particularly valuable for stem cell research because it facilitates non-invasive analyses of molecular dynamics and cellular functions over time [12]. This perspective is crucial for advancing stem cell research, especially given the various heterogeneities present among stem cell sources that have hindered their widespread clinical utilization [12]. Furthermore, stem cell research is intimately connected with cutting-edge technologies such as microfluidic organoids, CRISPR technology, and cell/tissue engineering, with single-cell approaches providing the analytical framework to understand these complex systems [12].

Transcriptomics Technologies

Single-cell RNA sequencing (scRNA-seq) technologies represent the foundation of single-cell analysis, with approaches primarily based on microfluidic chips, microdroplets, and microwell-based systems [14]. The main experimental workflow involves preparing single-cell suspensions, isolating individual cells, capturing mRNA, performing reverse transcription and nucleic acid amplification, and finally constructing transcriptome libraries [14]. Among the most prominent methodologies are:

- Droplet-based technologies (10X Genomics Chromium, Drop-seq) that use beads to capture RNA within oil droplets, creating reaction droplets with high throughput and cost-effectiveness [13].

- Plate-based methods (CEL-seq2, MARS-seq2.0) that provide enhanced sensitivity through linear amplification and barcoding strategies [13].

- Full-length transcript methods (SMART-seq3, FLASH-seq) that utilize template-switching oligos to create full-length cDNA libraries, enabling identification of 5' ends of transcripts and isoform characterization [13].

A critical step in scRNA-seq data analysis is a data transformation that handles the heteroskedastic nature of count data. The shifted logarithm with a carefully chosen pseudo-count, $\log(y/s + y_0)$ with $y_0 = 1/(4\alpha)$ (where $y$ is the raw count, $s$ is the cell's size factor, and $\alpha$ is a typical overdispersion), has been shown to perform as well as or better than more sophisticated alternatives for subsequent statistical analysis [15].
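
The relationship between the pseudo-count and the overdispersion can be made concrete with a minimal sketch. The function name `shifted_log`, the example counts, and the size factor of 1.0 are illustrative assumptions and are not taken from [15].

```python
import math

def shifted_log(counts, size_factor, alpha):
    """Shifted logarithm log(y/s + y0) with pseudo-count y0 = 1/(4*alpha)."""
    y0 = 1.0 / (4.0 * alpha)
    return [math.log(y / size_factor + y0) for y in counts]

# Hypothetical raw counts for one cell (assumed values, for illustration only).
cell_counts = [0, 1, 5, 20]

# With alpha = 0.25 the pseudo-count is y0 = 1, i.e. the familiar log(y/s + 1).
transformed = shifted_log(cell_counts, size_factor=1.0, alpha=0.25)
```

Choosing a smaller overdispersion gives a larger pseudo-count, which compresses differences among low counts more strongly.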

Proteomics and Multi-omics Integration

While transcriptomics reveals gene activity patterns, single-cell proteomics provides crucial phenotypic information by quantifying protein abundance [5]. Antibody-based single-cell proteomics, particularly methods such as CITE-seq, ECCITE-seq, and Abseq, leverage the specific binding of antibodies to target proteins to precisely quantify protein expression, revealing cellular heterogeneity and functional diversity [5]. These technologies employ oligonucleotide-labeled antibodies to simultaneously quantify mRNA and surface protein levels in individual cells, generating paired transcriptomic and proteomic datasets from the same cellular microenvironment [5].

The emerging field of single-cell multimodal omics integrates information across diverse molecular dimensions within a single cell, providing a holistic view of biological processes [13]. This approach illuminates the interconnected networks that shape cell behavior and enables identification of causal relationships between omics layers, revealing how genetics affect gene expression, epigenetics, proteins, and metabolites [13]. This integrative perspective is particularly valuable for dissecting complex diseases and understanding stem cell differentiation pathways.

Benchmarking Clustering Algorithms for Stem Cell Population Identification

Experimental Framework and Performance Metrics

The comprehensive benchmarking of clustering algorithms for single-cell data requires a structured experimental framework. Recent studies have evaluated computational methods using datasets with varying characteristics, including: (i) varying numbers of true cell types (5-20) with fixed cells per type; (ii) varying numbers of cells per type (50-250) with fixed cell type numbers; and (iii) varying ratios between major and minor cell types (2:1, 4:1, 10:1) [6]. These datasets are typically sourced from well-characterized references such as Tabula Muris, Tabula Sapiens, or Human Cell Atlas projects [6].

Performance evaluation employs multiple metrics to assess different aspects of clustering quality:

- Clustering Accuracy (CA): Measures the proportion of correctly clustered cells against known labels [5]

- Adjusted Rand Index (ARI): Quantifies clustering quality by comparing predicted and ground truth labels, with values from -1 to 1 [5]

- Normalized Mutual Information (NMI): Measures mutual information between clustering and ground truth, normalized to [0,1] [5]

- Purity: Assesses the extent to which clusters contain cells from single classes [5]

- Computational Efficiency: Peak memory usage and running time [5]
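
Two of the metrics above, ARI and purity, can be computed directly from label vectors. The following is a minimal standard-library sketch; the toy labels are hypothetical, and established implementations are preferable for real benchmarks.

```python
from collections import Counter
from math import comb

def adjusted_rand_index(true_labels, pred_labels):
    """ARI from the contingency table of true vs. predicted labels (needs >= 2 cells)."""
    n = len(true_labels)
    pair_counts = Counter(zip(true_labels, pred_labels))
    sum_pairs = sum(comb(c, 2) for c in pair_counts.values())
    sum_true = sum(comb(c, 2) for c in Counter(true_labels).values())
    sum_pred = sum(comb(c, 2) for c in Counter(pred_labels).values())
    expected = sum_true * sum_pred / comb(n, 2)   # agreement expected by chance
    max_index = 0.5 * (sum_true + sum_pred)
    if max_index == expected:                     # degenerate case, e.g. one cluster
        return 1.0
    return (sum_pairs - expected) / (max_index - expected)

def purity(true_labels, pred_labels):
    """Fraction of cells that belong to the majority class of their cluster."""
    by_cluster = {}
    for t, p in zip(true_labels, pred_labels):
        by_cluster.setdefault(p, Counter())[t] += 1
    return sum(max(c.values()) for c in by_cluster.values()) / len(true_labels)

# Hypothetical example: one HSC is wrongly grouped with the MPP cluster.
truth = ["HSC", "HSC", "HSC", "MPP", "MPP", "MPP"]
predicted = [0, 0, 1, 1, 1, 1]
ari = adjusted_rand_index(truth, predicted)
pur = purity(truth, predicted)
```

Both metrics are invariant to how cluster labels are named, so a clustering that merely permutes the labels of the ground truth still scores 1.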

For robust evaluation, studies often employ stability-based approaches that assess clustering robustness to data perturbations, with the assumption that clustering using the optimal number of clusters would be most robust to small perturbations introduced by random resampling [6].

Comparative Performance Across Omics Modalities

A comprehensive 2025 benchmarking study evaluated 28 clustering algorithms across 10 paired single-cell transcriptomic and proteomic datasets, encompassing over 50 cell types and more than 300,000 cells [5]. The algorithms were categorized into three methodological approaches: classical machine learning-based methods (SC3, CIDR, TSCAN, etc.), community detection-based methods (PARC, Leiden, Louvain, etc.), and deep learning-based methods (DESC, scDCC, scGNN, etc.) [5].

Table 1: Top-Performing Clustering Algorithms for Single-Cell Data

| Algorithm | Transcriptomics Ranking | Proteomics Ranking | Method Category | Strengths |

|---|---|---|---|---|

| scAIDE | 2 | 1 | Deep Learning | Top performance across omics, excellent robustness |

| scDCC | 1 | 2 | Deep Learning | High accuracy, memory efficiency |

| FlowSOM | 3 | 3 | Machine Learning | Excellent robustness, balanced performance |

| CarDEC | 4 | 16 | Deep Learning | Good in transcriptomics, less suited for proteomics |

| PARC | 5 | 18 | Community Detection | Fast, but modality-specific performance |

Table 2: Performance Characteristics by Algorithm Category

| Method Category | Representative Algorithms | Performance Strengths | Computational Efficiency |

|---|---|---|---|

| Deep Learning | scDCC, scAIDE, scDeepCluster | High accuracy across modalities, robust to noise | Variable (scDCC and scDeepCluster memory efficient) |

| Machine Learning | FlowSOM, TSCAN, SHARP | Fast processing, interpretable results | Excellent time efficiency (TSCAN, SHARP, MarkovHC) |

| Community Detection | PARC, Leiden, Louvain | Good balance of speed and accuracy | Fast, efficient for large datasets |

The benchmarking revealed that deep learning-based methods generally achieved superior performance for both transcriptomic and proteomic data, with scAIDE, scDCC, and FlowSOM demonstrating the strongest cross-modal performance [5]. Interestingly, some methods that performed well on transcriptomic data (CarDEC, PARC) showed significantly reduced performance on proteomic data, highlighting the modality-specific strengths of certain algorithms [5].

Performance variations between transcriptomic and proteomic data can be attributed to their distinct data distributions and feature dimensionalities [5]. Proteomic data often exhibit different characteristics that pose non-trivial challenges for applying clustering techniques uniformly across both modalities [5].

Experimental Protocols for Algorithm Benchmarking

Standardized Workflow for Single-Cell Data Processing

To ensure reproducible benchmarking results, a standardized preprocessing workflow is essential. The following protocol outlines the key steps for single-cell data processing prior to clustering:

Data Filtering and Quality Control

- Retain only cells with at least 2000 non-zero transcripts

- Exclude cells with ≥5% mitochondrial transcripts or ≤10% ribosomal transcripts

- Remove transcripts not present in at least 3 cells [16]
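
The filtering rules above can be sketched as follows. This is an illustrative sketch, not the pipeline of [16]: cells are represented as plain dictionaries, and the gene-name prefixes (`MT-` for mitochondrial, `RPS`/`RPL` for ribosomal) are assumptions that follow human gene symbol conventions.

```python
def passes_qc(cell, min_genes=2000, max_mito_frac=0.05, min_ribo_frac=0.10):
    """cell: dict mapping gene symbol -> raw count. Defaults mirror the thresholds above."""
    total = sum(cell.values())
    if total == 0:
        return False
    n_detected = sum(1 for c in cell.values() if c > 0)
    mito = sum(c for g, c in cell.items() if g.startswith("MT-"))
    ribo = sum(c for g, c in cell.items() if g.startswith(("RPS", "RPL")))
    # Exclude cells with >= 5% mitochondrial or <= 10% ribosomal transcripts.
    return (n_detected >= min_genes
            and mito / total < max_mito_frac
            and ribo / total > min_ribo_frac)

def filter_genes(cells, min_cells=3):
    """Drop genes detected (count > 0) in fewer than min_cells cells."""
    detected = {}
    for cell in cells:
        for g, c in cell.items():
            if c > 0:
                detected[g] = detected.get(g, 0) + 1
    keep = {g for g, n in detected.items() if n >= min_cells}
    return [{g: c for g, c in cell.items() if g in keep} for cell in cells]

# Toy data with relaxed thresholds so that a four-gene example is meaningful.
cells = [
    {"MT-CO1": 1, "RPS3": 20, "SOX2": 5, "NANOG": 3},
    {"MT-CO1": 10, "RPS3": 20, "SOX2": 5, "NANOG": 0},  # too much mitochondrial signal
    {"MT-CO1": 0, "RPS3": 15, "SOX2": 2, "NANOG": 1},
    {"MT-CO1": 0, "RPS3": 1, "SOX2": 30, "NANOG": 4},   # too little ribosomal signal
]
kept_cells = [c for c in cells if passes_qc(c, min_genes=3)]
filtered = filter_genes(kept_cells, min_cells=2)
```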

Normalization and Transformation

- Normalize counts to counts per million (CPM) for each cell

- Apply log base 2 transformation with an offset of 1

- Scale normalized data across single cells to mean expression = 0 and variance = 1 [16]
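
A minimal sketch of these three steps, using nested lists instead of a matrix library. It assumes the population variance (dividing by the number of cells) for scaling; the toy count matrix is hypothetical.

```python
import math

def cpm_log2(counts):
    """Counts per million for one cell, then log2 with an offset of 1."""
    total = sum(counts)
    return [math.log2(c / total * 1e6 + 1) for c in counts]

def zscore_genes(matrix):
    """Scale each gene (column) across cells (rows) to mean 0 and variance 1."""
    n_cells, n_genes = len(matrix), len(matrix[0])
    scaled = [[0.0] * n_genes for _ in range(n_cells)]
    for j in range(n_genes):
        col = [row[j] for row in matrix]
        mean = sum(col) / n_cells
        sd = math.sqrt(sum((v - mean) ** 2 for v in col) / n_cells)
        for i in range(n_cells):
            # Constant genes carry no information and are set to 0.
            scaled[i][j] = (col[i] - mean) / sd if sd > 0 else 0.0
    return scaled

# Hypothetical raw counts: 3 cells x 3 genes.
raw = [[10, 0, 90], [50, 50, 0], [0, 30, 70]]
log_norm = [cpm_log2(cell) for cell in raw]
scaled = zscore_genes(log_norm)
```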

Feature Selection and Dimensionality Reduction

- Select highly variable genes using variance-stabilizing transformation

- Perform principal component analysis (PCA) to reduce data dimensionality

- Determine the number of principal components using the Kneedle heuristic to identify the point of maximum curvature of explained variance [16]
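
The idea behind choosing the number of components at the point of maximum curvature can be illustrated with a simplified stand-in for Kneedle: pick the point on the explained-variance curve that lies farthest from the straight line joining its first and last points. The function name and the variance values below are hypothetical, and the full Kneedle procedure includes smoothing and thresholding steps that are omitted here.

```python
def elbow_index(values):
    """Index of the point farthest from the chord between the first and last points."""
    x1 = len(values) - 1
    y0, y1 = values[0], values[-1]
    best_i, best_d = 0, -1.0
    for i, y in enumerate(values):
        # Perpendicular distance to the chord, up to a constant factor.
        d = abs((y1 - y0) * i - x1 * y + x1 * y0)
        if d > best_d:
            best_i, best_d = i, d
    return best_i

# Hypothetical explained-variance ratios for the first eight principal components.
explained = [0.40, 0.20, 0.10, 0.03, 0.02, 0.02, 0.01, 0.01]
n_pcs = elbow_index(explained) + 1  # convert a 0-based index to a component count
```

Because the distance is only compared across points, rescaling either axis does not change which point is selected.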

Graph Construction and Clustering

- Construct a k-nearest neighbor graph (typically k=100), with edges weighted by the distances between cells

- Apply the Leiden algorithm with a resolution of 0.8 to group single cells into clusters [16]

- Visualize clusters in UMAP space for quality assessment
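
The graph construction step can be sketched with a brute-force neighbor search. This sketch covers only the k-nearest neighbor graph; the Leiden step itself is not reproduced, and in practice both are typically delegated to a toolkit (for example `scanpy.pp.neighbors` followed by `scanpy.tl.leiden`). The coordinates below are hypothetical principal component scores.

```python
import math

def knn_graph(points, k):
    """Map each cell index to its k nearest neighbors as (neighbor, distance) pairs."""
    graph = {}
    for i, p in enumerate(points):
        dists = sorted((math.dist(p, q), j) for j, q in enumerate(points) if j != i)
        graph[i] = [(j, d) for d, j in dists[:k]]
    return graph

# Six hypothetical cells in a 2-D PCA space, forming two well-separated groups.
pcs = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.2), (5.0, 5.0), (5.1, 5.0), (5.0, 5.2)]
graph = knn_graph(pcs, k=2)
```

The brute-force search scales quadratically with the number of cells, so large datasets generally require approximate nearest-neighbor methods.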

This workflow is implemented in tools such as Scanpy (Python) or Seurat (R), which provide standardized pipelines for single-cell data analysis [14].

Cross-Modal Integration Protocols

For multi-omics data integration, recent benchmarking studies have employed state-of-the-art integration methods including moETM, sciPENN, scMDC, totalVI, and MOFA+ [5]. The integration protocol typically involves:

- Paired Data Processing: Process transcriptomic and proteomic data from the same cells using matched barcodes

- Modality-Specific Normalization: Apply appropriate normalization for each data type (e.g., log(CPM+1) for RNA, arcsinh transformation for protein)

- Feature Integration: Use integration algorithms to align the different modalities in a shared latent space

- Joint Clustering: Apply clustering algorithms to the integrated features to identify cell populations

The performance of clustering on integrated data is then compared to clustering performed on individual modalities to assess the value of multi-omics integration [5].
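
The first two steps of the protocol, pairing by barcode and modality-specific normalization, can be sketched as below. The arcsinh cofactor of 5 is an assumption, and the plain concatenation of features is only a naive placeholder for the shared latent space that methods such as totalVI or MOFA+ learn.

```python
import math

def normalize_rna(counts):
    """log(CPM + 1) for one cell's RNA counts."""
    total = sum(counts)
    return [math.log(c / total * 1e6 + 1) for c in counts]

def normalize_protein(adt_counts, cofactor=5.0):
    """arcsinh transform of antibody-derived counts (cofactor is an assumed value)."""
    return [math.asinh(c / cofactor) for c in adt_counts]

def pair_modalities(rna_by_barcode, protein_by_barcode):
    """Keep cells present in both modalities and concatenate their normalized features."""
    shared = sorted(set(rna_by_barcode) & set(protein_by_barcode))
    return {bc: normalize_rna(rna_by_barcode[bc]) + normalize_protein(protein_by_barcode[bc])
            for bc in shared}

# Hypothetical barcodes and counts; "AAAT" lacks protein data and is dropped.
rna = {"AAAC": [5, 0, 95], "AAAG": [10, 10, 80], "AAAT": [1, 2, 3]}
protein = {"AAAC": [0, 50], "AAAG": [5, 5]}
joint = pair_modalities(rna, protein)
```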

Research Reagent Solutions for Single-Cell Studies

Table 3: Essential Research Reagents and Platforms for Single-Cell Stem Cell Research

| Product Category | Specific Examples | Application in Single-Cell Research |

|---|---|---|

| Cell Culture Media | eTeSR, TeSR-AOF 3D | Maintain pluripotent stem cells in undifferentiated state for single-cell studies |

| Differentiation Kits | STEMdiff Cardiomyocyte Expansion Kit, STEMdiff Microglia Culture System | Generate specific cell types from stem cells for heterogeneity analysis |

| Extracellular Matrices | STEMmatrix BME | Provide physiological 3D environment for stem cell growth and differentiation |

| Cell Separation | ImmunoCult-XF, ImmunoCult Human T Cell Activators | Isolate and expand specific immune cell populations from differentiated cultures |

| Bioreactor Systems | PBS-MINI Bioreactor | Scale up 3D cell cultures for large-scale single-cell sequencing projects |

Visualization of Single-Cell Data Analysis Workflow

Figure: Single-cell analysis workflow.

The benchmarking of clustering algorithms for single-cell data in stem cell research reveals that while deep learning methods generally provide superior performance, the choice of algorithm depends on specific research goals, data modalities, and computational constraints. The field continues to evolve rapidly with emerging trends including:

- Improved multi-omics integration methods that better capture interactions between molecular layers

- Spatial transcriptomics technologies that preserve spatial context in single-cell analysis

- Temporal dynamics inference through computational methods such as RNA velocity and pseudotime analysis

- Automated cell type annotation tools that leverage reference atlases for consistent cell labeling

For stem cell researchers, the selection of clustering algorithms should consider both performance metrics and practical constraints. scAIDE, scDCC, and FlowSOM represent strong choices for cross-modal applications, while TSCAN and SHARP offer efficient solutions for transcriptomic-specific analyses [5]. As single-cell technologies continue to mature, standardized benchmarking approaches will be increasingly important for ensuring rigorous and reproducible stem cell research.

Single-cell RNA-sequencing (scRNA-seq) has revolutionized stem cell biology by enabling researchers to investigate cellular heterogeneity, lineage commitment, and plasticity at unprecedented resolution. A critical step in analyzing scRNA-seq data involves unsupervised clustering, which partitions cells into distinct subpopulations based on their transcriptomic profiles. Accurate clustering is fundamental for identifying rare stem cell populations, tracking differentiation trajectories, and understanding plasticity mechanisms. This guide objectively compares the performance of various clustering algorithms specifically within the context of stem cell research, providing experimental data and methodologies to inform algorithm selection for specific applications. Benchmarking studies reveal that method choice significantly impacts biological interpretations, as different algorithms exhibit varying strengths in detecting subtle population structures, estimating cluster numbers, and handling the unique characteristics of stem cell datasets [17] [18].

Benchmarking Clustering Algorithms for Stem Cell Applications

Performance Evaluation on Real and Simulated Data

Systematic benchmarking efforts evaluate clustering algorithms using multiple metrics on real and simulated datasets. Key performance indicators typically include:

- Adjusted Rand Index (ARI): Measures the similarity between computational clustering results and biological ground truth labels.

- Normalized Mutual Information (NMI): Quantifies the mutual dependence between predicted clusters and reference cell types.

- Running Time and Peak Memory Usage: Assess computational efficiency and scalability [5] [18].
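
As an illustration of how NMI is obtained from two label vectors, the sketch below normalizes the mutual information by the arithmetic mean of the two entropies. This is one common convention and is assumed here; other normalizations exist, and the toy labels are hypothetical.

```python
import math
from collections import Counter

def entropy(labels):
    """Shannon entropy (natural log) of a labeling."""
    n = len(labels)
    return -sum((c / n) * math.log(c / n) for c in Counter(labels).values())

def normalized_mutual_information(true_labels, pred_labels):
    """Mutual information divided by the mean of the two label entropies."""
    n = len(true_labels)
    joint = Counter(zip(true_labels, pred_labels))
    true_counts = Counter(true_labels)
    pred_counts = Counter(pred_labels)
    mi = sum((c / n) * math.log(c * n / (true_counts[t] * pred_counts[p]))
             for (t, p), c in joint.items())
    denom = 0.5 * (entropy(true_labels) + entropy(pred_labels))
    return mi / denom if denom > 0 else 1.0

# Hypothetical labels: a perfect clustering and one unrelated to the reference.
reference = ["HSC", "HSC", "MPP", "MPP"]
nmi_perfect = normalized_mutual_information(reference, [1, 1, 0, 0])
nmi_unrelated = normalized_mutual_information(reference, [0, 1, 0, 1])
```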

These evaluations employ datasets with known cell type labels to objectively quantify accuracy. For instance, studies often use the Tabula Muris dataset, which contains carefully annotated cell types from mouse tissues, to create benchmark datasets with varying numbers of cell types (5-20), different cells per type (50-250), and different proportions of major and minor populations [18]. This approach tests algorithm performance under controlled conditions that mimic the challenges of stem cell research.

Table 1: Top-Performing Clustering Algorithms Across Single-Cell Modalities

| Algorithm | Transcriptomic Data Ranking | Proteomic Data Ranking | Key Strengths | Computational Efficiency |

|---|---|---|---|---|

| scAIDE | 2nd | 1st | High performance across omics | Moderate |

| scDCC | 1st | 2nd | Excellent generalization | Memory efficient |

| FlowSOM | 3rd | 3rd | Robustness, fast running time | Time and memory efficient |

| Seurat | Variable | Variable | Handles large datasets | Moderate |

| SC3 | Variable | N/A | User-friendly | High memory usage |

Performance Variation Across Data Modalities

Clustering performance can vary significantly between transcriptomic and proteomic data. A 2025 benchmark evaluating 28 algorithms on 10 paired transcriptomic and proteomic datasets found that scDCC, scAIDE, and FlowSOM consistently ranked highest for both modalities, demonstrating strong generalization capabilities [5]. However, some methods exhibited modality-specific performance; for example, CarDEC and PARC ranked 4th and 5th respectively in transcriptomics but dropped significantly to 16th and 18th in proteomics [5]. This highlights the importance of selecting algorithms validated for specific data types in stem cell research.

Algorithm robustness is another critical consideration. Benchmarking using 30 simulated datasets with varying noise levels and dataset sizes identified FlowSOM as particularly robust, maintaining stable performance under different data quality conditions [5]. For users with specific computational constraints, scDCC and scDeepCluster are recommended for memory efficiency, while TSCAN, SHARP, and MarkovHC excel in time efficiency [5].

Application 1: Identifying Rare Stem Cell Populations

Technical Challenges and Algorithm Selection

Rare stem cell populations, such as cancer stem cells or quiescent tissue-specific stem cells, often constitute a small fraction of the total cell population but play critical roles in development, homeostasis, and disease. Identifying these rare populations presents particular challenges: their transcriptomic signatures may be obscured by more abundant cell types, and standard clustering approaches may fail to resolve these subtle differences.

Specialized clustering approaches have been developed to address these challenges. RaceID was specifically designed to identify rare cell types by introducing a statistical test to compare within-cluster dispersion, enabling detection of outliers that may represent rare populations [18]. SC3 employs consensus clustering combined with eigenvalue analysis based on the Tracy-Widom test, enhancing its sensitivity to small but biologically relevant subpopulations [18]. Benchmarking studies have revealed that algorithms differ significantly in their ability to correctly estimate the number of cell types in a dataset—a crucial prerequisite for rare population identification [18].

Table 2: Algorithm Performance in Estimating Number of Cell Types

| Algorithm | Tendency | Stability | Notable Characteristics |

|---|---|---|---|

| Monocle3 | Minimal deviation | High | Community detection-based |

| scLCA | Minimal deviation | High | Uses Silhouette index |

| scCCESS-SIMLR | Minimal deviation | Moderate | Stability-based approach |

| SC3 | Overestimation | Moderate | Consensus clustering |

| Seurat | Overestimation | Moderate | Handles large datasets well |

| SHARP | Underestimation | High | Uses multiple indices |

| densityCut | Underestimation | Moderate | Density-based |

| Spectrum | High variability | Low | Eigengap heuristic |

Experimental Protocol for Rare Population Identification

A typical workflow for identifying rare stem cell populations includes:

- Data Preprocessing: Quality control, normalization, and feature selection using highly variable genes (HVGs). The number of HVGs significantly impacts clustering performance and should be optimized for each dataset [5].

- Dimensionality Reduction: Application of PCA or other techniques to reduce computational complexity and noise.

- Clustering Analysis: Implementation of rare cell-sensitive algorithms like RaceID or SC3 with appropriate parameters.

- Validation: Experimental validation using fluorescence-activated cell sorting (FACS) with stem cell markers or functional assays such as transplantation studies [19].

For hematopoietic stem cells (HSCs), which are particularly rare, researchers have successfully combined antibody-based isolation with single-cell transcriptomics to resolve previously unrecognized heterogeneity within this population [20]. This integrated approach has revealed that putatively homogeneous stem cell populations actually contain subpopulations with distinct functional characteristics and differentiation potentials.

Application 2: Tracking Stem Cell Differentiation

Lineage Trajectory Reconstruction

Stem cell differentiation involves progressive restriction of developmental potential, culminating in specialized cell types. Tracking this process requires computational approaches that can reconstruct developmental trajectories from snapshots of single-cell data. Pseudotemporal ordering methods have been particularly valuable in this context, as they order cells based on transcriptomic similarities to reconstruct the longest continuous path through a high-dimensional space, effectively recreating the differentiation timeline [20].

Studies using single-cell transcriptomics have revealed that lineage commitment often begins with stochastic fluctuations in the expression of lineage-affiliated genes in multipotent stem cells—a phenomenon known as "lineage priming" [20]. As differentiation progresses, cells transition through a hierarchical series of commitment steps before stabilizing a specific lineage program.

Experimental Workflow for Tracking Differentiation

Figure 1: Experimental workflow for tracking stem cell differentiation using viral barcoding and high-throughput sequencing.

Advanced experimental methods combine viral genetic barcoding with high-throughput sequencing to track single cells in heterogeneous populations [19]. The methodology involves:

- Viral Barcoding: A lentiviral library containing semi-random 33mer DNA barcodes is used to infect stem cells at a low multiplicity of infection (MOI~1) to ensure most cells receive a single barcode [19].

- Transplantation/Differentiation: Barcoded cells are transplanted into host organisms or induced to differentiate in vitro.

- Time-Series Sampling: Cells are collected at multiple time points during differentiation.

- Sequencing and Barcode Recovery: Genomic DNA is extracted, barcodes are recovered via PCR, and high-throughput sequencing identifies barcodes present in different cell populations [19].

- Clonal Analysis: Bioinformatic analysis reconstructs differentiation trees based on barcode sharing between cell populations.

This approach has revealed that stem cells do not contribute equally to differentiation—some HSCs generate balanced output across lineages while others show distinct differentiation biases [19].
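
The clonal analysis step can be illustrated with a small sketch that converts recovered barcodes into per-clone lineage contributions. The read lists, the two lineage names, and the 0.75 bias threshold are hypothetical choices for illustration and are not taken from [19].

```python
from collections import Counter

def lineage_output(barcode_reads):
    """barcode_reads: dict lineage -> list of recovered barcodes.
    Returns, per barcode, the share of its output found in each lineage."""
    counts = {lin: Counter(reads) for lin, reads in barcode_reads.items()}
    barcodes = set().union(*[set(c) for c in counts.values()])
    output = {}
    for bc in barcodes:
        # Normalize within each lineage first so lineages of different size are comparable.
        contrib = {lin: counts[lin][bc] / sum(counts[lin].values()) for lin in counts}
        total = sum(contrib.values())
        output[bc] = {lin: v / total for lin, v in contrib.items()}
    return output

def classify_clone(fractions, bias_threshold=0.75):
    """Label a clone as lineage-biased if one lineage dominates, otherwise balanced."""
    top_lineage, top_frac = max(fractions.items(), key=lambda kv: kv[1])
    return f"{top_lineage}-biased" if top_frac >= bias_threshold else "balanced"

# Hypothetical barcode reads recovered from two sorted populations.
reads = {
    "myeloid": ["BC1"] * 50 + ["BC2"] * 45 + ["BC3"] * 5,
    "lymphoid": ["BC1"] * 48 + ["BC2"] * 2 + ["BC3"] * 50,
}
clone_classes = {bc: classify_clone(f) for bc, f in lineage_output(reads).items()}
```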

Application 3: Understanding Stem Cell Plasticity

Defining and Measuring Plasticity

Stem cell plasticity refers to the capacity of stem cells to switch lineages, dedifferentiate, or transdifferentiate in response to environmental cues. Although differentiation was traditionally viewed as a unidirectional process, single-cell studies have revealed remarkable flexibility in cell identity, particularly in cancer stem cells and during cellular reprogramming.

The core molecular regulators of plasticity include:

- Pluripotency Factors: Oct4, Sox2, and Nanog maintain pluripotency in embryonic stem cells through a complex network of mutual regulation and co-occupation of target gene promoters [21].

- EMT/MET Regulators: Epithelial-mesenchymal transition (EMT) and its reverse (MET) are crucial for plasticity, with transcription factors like Snail, Zeb, and Twist acting as repressors of the epithelial phenotype and inducers of mesenchymal characteristics [21].

- Epigenetic Modifiers: DNA methylation and histone modification states significantly influence lineage potential, with small molecule epigenetic manipulators capable of enhancing or restricting differentiation capacity [22].

Signaling Pathways Regulating Plasticity

Figure 2: Signaling pathways and molecular regulators of stem cell plasticity.

Experimental approaches for investigating plasticity include:

- Lineage Tracing: Genetic labeling of specific cell populations followed by fate mapping.

- Reprogramming Assays: Introduction of pluripotency factors (Oct4, Klf4, Sox2, c-Myc) to induce dedifferentiation.

- Single-Cell Multi-omics: Simultaneous measurement of transcriptomic and epigenomic states in individual cells.

- Clonal Analysis: Tracking the fate of individual stem cells and their progeny over time.

Researchers have discovered that the reprogramming of somatic cells into induced pluripotent stem cells (iPSCs) requires a MET, highlighting the intimate connection between plasticity and epithelial phenotype [21]. Small molecule epigenetic manipulators—such as Gemcitabine and Chidamide—can significantly enhance osteogenic differentiation in aged human mesenchymal stem cells by 5.9- and 2.3-fold respectively, demonstrating how epigenetic modifications can overcome age-related declines in plasticity [22].

The Scientist's Toolkit: Essential Research Reagents and Computational Tools

Table 3: Essential Research Reagents and Computational Tools for Stem Cell Clustering

| Category | Specific Tool/Reagent | Function/Application | Considerations |

|---|---|---|---|

| Wet-Lab Reagents | Lentiviral Barcode Library | Single-cell lineage tracing | Ensure single-cell representation [19] |

| Wet-Lab Reagents | Oligonucleotide-labeled Antibodies | CITE-seq for paired transcriptomics/proteomics | Enables multi-modal clustering [5] |

| Wet-Lab Reagents | Epigenetic Molecules | Modulating lineage potential | Specificity for lineages varies [22] |

| Computational Tools | Seurat | Comprehensive scRNA-seq analysis | Shows variable estimation performance [18] |

| Computational Tools | SC3 | Consensus clustering | Tendency for overestimation [18] |

| Computational Tools | Monocle3 | Trajectory inference | Accurate cell type number estimation [18] |

| Computational Tools | FlowSOM | Clustering for proteomic data | Excellent robustness across modalities [5] |

Clustering algorithms play an indispensable role in unlocking the complexities of stem cell biology, from rare population identification to differentiation tracking and plasticity assessment. Benchmarking studies consistently identify scDCC, scAIDE, and FlowSOM as top-performing methods across multiple modalities and evaluation metrics, providing excellent starting points for researchers. However, algorithm performance is context-dependent—methods excelling at estimating cluster numbers (e.g., Monocle3, scLCA) may differ from those optimal for rare population detection (e.g., RaceID, SC3).

Future developments will likely focus on multi-omics integration, dynamic trajectory inference, and machine learning approaches that can better capture the complexity of stem cell systems. As single-cell technologies continue to evolve, with methods now enabling simultaneous profiling of transcriptomics, proteomics, and epigenomics in the same cells, clustering algorithms must similarly advance to leverage these rich, multi-dimensional datasets. The integration of computational clustering with advanced experimental techniques—particularly viral barcoding and epigenetic manipulation—will continue to drive fundamental discoveries in stem cell biology and accelerate the development of stem cell-based therapies.

Comparative Analysis of Clustering Algorithms: Performance Across Stem Cell Datasets

The identification of distinct stem cell subpopulations is crucial for advancing regenerative medicine and understanding cellular differentiation pathways. This process relies heavily on computational clustering algorithms to decipher complex single-cell data. As research progresses, three major algorithmic categories have emerged as fundamental tools: Classical Machine Learning, Community Detection, and Deep Learning approaches. Each category offers distinct methodologies and advantages for tackling the challenges of stem cell heterogeneity analysis.

Classical machine learning algorithms provide well-established, interpretable frameworks for cell type identification. Community detection methods, originally developed for network analysis, excel at uncovering functional modules within cellular interaction networks. Deep learning approaches offer superior pattern recognition capabilities for high-dimensional data, enabling the identification of subtle morphological and transcriptomic differences between stem cell states. The integration of these computational approaches with systems biology and artificial intelligence (SysBioAI) is transforming stem cell research by enabling holistic analysis of multi-omics datasets and accelerating therapeutic development [23].

This guide provides an objective comparison of these algorithm categories within the specific context of benchmarking studies for stem cell subpopulation identification, presenting experimental data and methodologies to inform researchers' selection of appropriate computational tools.

Performance Comparison of Algorithm Categories

Comprehensive Benchmarking Across Algorithm Types

Table 1: Overall Performance Characteristics of Algorithm Categories

| Algorithm Category | Representative Methods | Key Strengths | Key Limitations | Computational Efficiency |

|---|---|---|---|---|

| Classical Machine Learning | SVM, Random Forest, SC3, TSCAN | High interpretability, robust with smaller datasets, minimal hyperparameter tuning | Limited capacity for very high-dimensional data, may miss complex nonlinear patterns | Moderate to high (varies by method) |

| Community Detection | Louvain, Leiden, PARC, PhenoGraph | Effective for network-structured data, identifies hierarchical communities | Stochasticity leads to variability, requires resolution parameter selection | High (for most methods) |

| Deep Learning | scDCC, scAIDE, scGNN, DESC | Superior handling of high-dimensional data, automated feature learning, high accuracy | High computational demand, requires large datasets, "black box" nature | Variable (often resource-intensive) |

Table 2: Quantitative Performance Metrics from Benchmarking Studies

| Algorithm Category | Top Performers | Average ARI* | Average NMI* | Scalability to Large Datasets | Handling of Batch Effects |

|---|---|---|---|---|---|

| Classical ML | SVM, Random Forest | 0.72-0.85 | 0.75-0.88 | Moderate | Moderate |

| Community Detection | Leiden, Louvain | 0.68-0.82 | 0.71-0.85 | High | Limited |

| Deep Learning | scAIDE, scDCC, FlowSOM | 0.78-0.92 | 0.81-0.94 | Variable (improving) | Good to excellent |

*ARI (Adjusted Rand Index) and NMI (Normalized Mutual Information) are similarity measures between clustering results and ground truth, where values closer to 1 indicate better performance [24].

Benchmarking studies evaluating 28 computational algorithms on paired transcriptomic and proteomic datasets have revealed that deep learning methods generally achieve superior performance metrics, with scAIDE, scDCC, and FlowSOM ranking as top performers across multiple evaluation criteria [24]. However, classical machine learning approaches like SVM have demonstrated exceptional consistency, emerging as top performers in three out of four datasets in cell annotation tasks [25].

Community detection algorithms like Leiden and Louvain remain widely adopted due to their speed and efficiency in processing large single-cell datasets, though they exhibit stochasticity that can lead to variability in results across different runs [26]. The recently developed scICE framework addresses this limitation by evaluating clustering consistency, achieving up to 30-fold improvement in speed compared to conventional consensus clustering-based methods [26].

Performance in Stem Cell Research Applications

Table 3: Algorithm Performance in Specific Stem Cell Applications

| Application Domain | Recommended Algorithms | Performance Notes | Key Experimental Findings |

|---|---|---|---|

| Hematopoietic Stem/Progenitor Cell Identification | Deep Learning (LSM model), SVM, FlowSOM | DL achieved >90% accuracy distinguishing LT-HSCs, ST-HSCs, MPPs | DL models successfully classified HSC subpopulations based solely on morphological features from DIC images [27] |

| Mesenchymal Stem Cell Characterization | scAIDE, Random Forest, Leiden | Integration of multi-omics data enhances subpopulation resolution | SysBioAI approaches enable iterative refinement of stem cell therapeutic products [23] |

| Cancer Stem Cell Identification | GNN-based approaches, SVM, PhenoGraph | DL identifies subtle transcriptomic subpopulations from morphology | CNNs discriminated breast cancer subpopulations with AUC 0.74-0.8 using phase contrast images [28] |

| Rare Stem Cell Population Detection | scICE, SVM, scDCC | Specialized frameworks improve consistency for rare cell identification | Ensemble approaches combining multiple algorithms enhance rare cell type discovery [26] [25] |

In functional subpopulation classification of hematopoietic stem cells, deep learning approaches have demonstrated remarkable capability by distinguishing long-term HSCs, short-term HSCs, and multipotent progenitors based solely on morphological features observed through light microscopy images [27]. This deep learning-based platform provided proof-of-principle for antibody-free identification of different cell populations purely based on cell morphology, potentially obviating the need for time-consuming transplantation experiments for functional assessment.

For stem cell research requiring integration of multiple data modalities, systems biology approaches combining AI and multi-omics data analysis have shown particular promise. The concept of an "iterative circle of refined clinical translation" leverages SysBioAI to optimize both therapeutic products and clinical trial strategies through continuous adaptation cycles [23].

Experimental Protocols and Methodologies

Standardized Benchmarking Framework

To ensure fair comparison across algorithm categories, benchmarking studies should implement standardized experimental protocols:

Data Preprocessing Pipeline:

- Quality Control: Filter low-quality cells and genes using standardized thresholds

- Normalization: Apply appropriate normalization methods (e.g., logCPM for transcriptomic data)

- Feature Selection: Identify highly variable genes (HVGs) or relevant features

- Dimensionality Reduction: Implement PCA, scLENS [26], or other reduction techniques

- Graph Construction: Build k-nearest neighbor graphs for community detection approaches
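The preprocessing steps above can be sketched in a few lines of standard-library Python. This is a minimal illustration under stated assumptions, not the pipeline used in the cited benchmarks: the function names, the `min_total` threshold, and the toy count matrix are all hypothetical, and the dimensionality reduction step (PCA or scLENS) is omitted to keep the sketch dependency-free.

```python
import math
from statistics import pvariance


def filter_cells(counts, min_total=10):
    """Quality control: keep cells (rows) whose total count reaches min_total."""
    return [cell for cell in counts if sum(cell) >= min_total]


def log_cpm(counts):
    """Normalization: log2(counts-per-million + 1), computed within each cell."""
    return [[math.log2(c / sum(cell) * 1e6 + 1) for c in cell] for cell in counts]


def top_variable_genes(matrix, n_top):
    """Feature selection: indices of the n_top genes (columns) with highest variance."""
    variances = [pvariance(col) for col in zip(*matrix)]
    ranked = sorted(range(len(variances)), key=lambda g: variances[g], reverse=True)
    return sorted(ranked[:n_top])


def knn_graph(matrix, k):
    """Graph construction: for each cell, indices of its k nearest cells (Euclidean)."""
    neighbors = []
    for i, a in enumerate(matrix):
        dists = sorted((math.dist(a, b), j) for j, b in enumerate(matrix) if j != i)
        neighbors.append([j for _, j in dists[:k]])
    return neighbors


# Hypothetical toy matrix: rows are cells, columns are genes.
raw = [
    [50, 0, 30, 20],
    [45, 5, 25, 25],
    [0, 60, 20, 20],
    [2, 55, 22, 21],
    [1, 0, 1, 0],  # low-quality cell, removed by the QC step
]
cells = filter_cells(raw, min_total=10)
norm = log_cpm(cells)
hvg = top_variable_genes(norm, n_top=2)
reduced = [[row[g] for g in hvg] for row in norm]
graph = knn_graph(reduced, k=1)
```

The brute-force neighbor search is quadratic in the number of cells, so it only suits small examples; real datasets would rely on the optimized implementations in platforms such as Seurat or Scanpy.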

Evaluation Methodology:

- Multiple Datasets: Utilize diverse datasets representing different stem cell types and tissues

- Ground Truth Validation: Use experimentally validated cell labels when available

- Multiple Metrics: Employ ARI, NMI, clustering accuracy, purity, and runtime assessment

- Consistency Testing: Execute multiple runs with different random seeds to assess stability

- Statistical Analysis: Perform appropriate statistical tests to determine significance of performance differences
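As an illustration of the metric and consistency steps above, the following sketch computes the Adjusted Rand Index from a contingency table and summarizes it across several runs. The labels in `runs_by_seed` are made-up placeholders standing in for the output of a clustering algorithm run with different random seeds; no real algorithm is invoked here.

```python
from collections import Counter
from math import comb
from statistics import mean, stdev


def adjusted_rand_index(truth, pred):
    """Adjusted Rand Index between two labelings of the same cells."""
    n = len(truth)
    # Pair counts within contingency cells, true classes, and predicted clusters.
    sum_cells = sum(comb(c, 2) for c in Counter(zip(truth, pred)).values())
    sum_rows = sum(comb(c, 2) for c in Counter(truth).values())
    sum_cols = sum(comb(c, 2) for c in Counter(pred).values())
    expected = sum_rows * sum_cols / comb(n, 2)
    max_index = (sum_rows + sum_cols) / 2
    if max_index == expected:  # degenerate case, e.g. a single cluster on both sides
        return 1.0
    return (sum_cells - expected) / (max_index - expected)


# Hypothetical ground truth and per-seed cluster labels.
truth = [0, 0, 0, 1, 1, 1]
runs_by_seed = {
    0: [1, 1, 1, 0, 0, 0],  # same partition, labels swapped
    1: [0, 0, 1, 1, 1, 1],  # one cell misassigned
    2: [0, 0, 0, 1, 1, 1],
}
scores = [adjusted_rand_index(truth, labels) for labels in runs_by_seed.values()]
print(f"ARI mean={mean(scores):.3f}, sd={stdev(scores):.3f}")
```

A large spread across seeds suggests that a single run should not be trusted on its own, which is the motivation for the consistency testing step.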

The benchmarking study of 28 clustering algorithms implemented this rigorous approach across 10 paired transcriptomic and proteomic datasets encompassing over 50 cell types and more than 300,000 cells [24]. Separately, a consistency evaluation reported that only approximately 30% of clustering attempts across different algorithm classes produced consistent results, highlighting the importance of robust benchmarking [26].

Deep Learning Model Training Protocol

For deep learning approaches in stem cell research, the following experimental protocol has proven effective:

Network Architecture Selection:

- Convolutional Neural Networks (CNNs): For image-based stem cell classification [27] [28]

- Graph Neural Networks (GNNs): For network-structured single-cell data [29] [30]

- Autoencoders: For dimensionality reduction and feature learning [29]

Training Procedure:

- Data Partitioning: Split data into training (80%), validation (10%), and test (10%) sets

- Data Augmentation: Apply appropriate augmentation techniques (rotation, flipping for images; noise injection for omics data)

- Model Initialization: Use pre-trained weights when available (transfer learning)

- Optimization: Employ a gradient-based optimizer, such as the adaptive learning rate method Adam or SGD with momentum

- Regularization: Implement dropout, weight decay, and early stopping to prevent overfitting

- Validation: Monitor performance on validation set throughout training
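Two of the steps above, data partitioning and validation-based early stopping, can be sketched independently of any deep learning framework. The function names, the `patience` parameter, and the validation losses below are illustrative assumptions rather than details from the cited studies.

```python
import random


def split_indices(n_samples, seed=0, train_frac=0.8, val_frac=0.1):
    """Shuffle sample indices and split them into train/validation/test lists."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # seeded for reproducible splits
    n_train = round(n_samples * train_frac)
    n_val = round(n_samples * val_frac)
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]
    return train, val, test


def early_stopping_epoch(val_losses, patience=3):
    """Return the epoch with the best validation loss, scanning until the loss
    has failed to improve for `patience` consecutive epochs."""
    best_loss, best_epoch = float("inf"), -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            break  # training would stop here; later epochs are never run
    return best_epoch


train_idx, val_idx, test_idx = split_indices(1000)

# Hypothetical validation losses recorded once per epoch.
history = [0.9, 0.7, 0.6, 0.65, 0.66, 0.7, 0.5]
best = early_stopping_epoch(history, patience=3)
```

With this `history`, the scan stops at the sixth epoch and keeps the weights from the third, so the later value of 0.5 is never reached. Whether such a late improvement is worth waiting for is what the `patience` setting trades off.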

In the hematopoietic stem cell study, researchers developed a three-class classifier (LSM model) using extensive image datasets after rigorous training and validation [27]. The model extracted intrinsic morphological features unique to different cell types, independent of surface markers or intracellular GFP markers used for initial identification and isolation.

Consistency Evaluation Framework

For assessing clustering reliability across algorithm categories, the scICE framework provides a robust methodology:

Inconsistency Coefficient Calculation:

- Multiple Clustering Runs: Execute clustering algorithm multiple times with different random seeds

- Similarity Matrix Construction: Compute element-centric similarity between all label pairs

- Probability Estimation: Determine occurrence probability of each unique label

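- IC Calculation: Compute the inconsistency coefficient as IC = 1/(p S pᵀ), where p is the probability vector of the unique labels and S is their pairwise similarity matrix; IC equals 1 when every run yields the same label and grows as the runs diverge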
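The four steps can be illustrated with a small, self-contained sketch. Note one deliberate substitution: the similarity below is the plain Rand index (fraction of cell pairs treated the same way by two partitions), used here as a simple stand-in for the element-centric similarity named above. The function names are hypothetical and this is not the scICE implementation.

```python
from collections import Counter
from itertools import combinations


def canonical(labels):
    """Relabel clusters by order of first appearance, so that label
    permutations of the same partition compare equal."""
    mapping = {}
    return tuple(mapping.setdefault(lab, len(mapping)) for lab in labels)


def rand_index(a, b):
    """Stand-in similarity (NOT element-centric similarity): fraction of cell
    pairs that are grouped together or apart consistently in both partitions."""
    pairs = list(combinations(range(len(a)), 2))
    agree = sum((a[i] == a[j]) == (b[i] == b[j]) for i, j in pairs)
    return agree / len(pairs)


def inconsistency_coefficient(runs):
    """IC = 1 / (p S p^T) over the unique labelings found across runs."""
    counts = Counter(canonical(r) for r in runs)
    unique = list(counts)
    total = sum(counts.values())
    p = [counts[u] / total for u in unique]                    # occurrence probabilities
    s = [[rand_index(u, v) for v in unique] for u in unique]   # similarity matrix
    psp = sum(p[i] * s[i][j] * p[j] for i in range(len(p)) for j in range(len(p)))
    return 1.0 / psp


# Three runs that agree up to label permutation, then two runs that disagree.
ic_consistent = inconsistency_coefficient([[0, 0, 1, 1], [1, 1, 0, 0], [0, 0, 1, 1]])
ic_mixed = inconsistency_coefficient([[0, 0, 1, 1], [0, 1, 0, 1]])
```

In this toy case the consistent runs collapse to a single unique labeling, so the coefficient is exactly 1, while the two conflicting partitions push it above 1.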

Implementation Details:

- Parallel processing across multiple cores to reduce computation time

- Application across various resolution parameters

- Identification of consistent cluster labels for downstream analysis

This approach has demonstrated up to 30-fold speed improvement compared to conventional consensus clustering-based methods while effectively identifying reliable clustering results [26].

Essential Research Reagents and Computational Tools

Research Reagent Solutions for Stem Cell Analysis

Table 4: Essential Research Reagents for Stem Cell Isolation and Characterization

| Reagent Category | Specific Examples | Application in Stem Cell Research | Function in Experimental Protocols |
|---|---|---|---|
| Surface Marker Antibodies | CD150, CD48, CD34, CD135, Sca-1, c-Kit | Hematopoietic stem cell isolation and characterization | Cell sorting and population validation via flow cytometry [27] |
| Intracellular Markers | α-catulin, Evi1, GFP reporters | Stem cell tracking and functional assessment | Genetic labeling of stem cell populations for lineage tracing [27] |
| Cell Staining Reagents | Lineage cocktail antibodies, viability dyes | Sample preparation for single-cell analysis | Cell identification and removal of dead cells [27] |
| Single-Cell Sequencing Kits | 10x Genomics, CITE-seq reagents | Transcriptomic and proteomic profiling | Simultaneous measurement of mRNA and surface protein levels [24] |

Computational Tools and Frameworks

Table 5: Essential Computational Tools for Algorithm Implementation

| Tool Category | Specific Software/Packages | Algorithm Support | Key Applications |
|---|---|---|---|
| Comprehensive Platforms | Seurat, Scanpy, Monocle3 | All categories | End-to-end single-cell data analysis [24] [26] |
| Classical ML Implementation | scikit-learn, SC3, TSCAN | Classical ML | Cell type annotation, clustering [24] [25] |
| Community Detection | Leiden, Louvain, PARC | Community Detection | Graph-based clustering, network analysis [24] [26] |
| Deep Learning Frameworks | PyTorch, TensorFlow, scDCC, scAIDE | Deep Learning | Complex pattern recognition, image analysis [24] [27] |
| Benchmarking Tools | scICE, multiK, chooseR | All categories | Clustering consistency evaluation [26] |

The selection of appropriate computational tools depends on the specific research question and data characteristics. For rapid analysis of large datasets, community detection methods implemented in Seurat or Scanpy provide efficient solutions. For more complex pattern recognition tasks involving morphological data or multi-omics integration, deep learning approaches offer superior performance despite higher computational requirements [27] [28].

The comparative analysis of classical machine learning, community detection, and deep learning approaches for stem cell subpopulation identification reveals a complex landscape where each algorithm category offers distinct advantages depending on the specific research context.

Classical machine learning methods, particularly SVM and Random Forest, provide robust, interpretable solutions for standard classification tasks and remain competitive in many benchmarking studies [25]. Community detection algorithms excel in processing large-scale single-cell datasets efficiently, though their stochastic nature requires consistency validation frameworks like scICE [26]. Deep learning approaches demonstrate superior performance in handling high-dimensional data and complex pattern recognition tasks, particularly for image-based stem cell classification and multi-omics integration [27] [28].

The integration of these computational approaches with SysBioAI frameworks presents a promising direction for future stem cell research, enabling iterative refinement of therapeutic products and clinical translation strategies [23]. As the field advances, the development of more efficient, interpretable, and adaptable algorithms will further enhance our ability to unravel stem cell heterogeneity and accelerate the development of regenerative therapies.

Researchers should select algorithms based on their specific data characteristics, computational resources, and research objectives, leveraging benchmarking studies and consistency evaluation tools to ensure robust and reproducible results in stem cell subpopulation identification.

Single-cell RNA sequencing (scRNA-seq) has revolutionized stem cell research by enabling the detailed dissection of cellular heterogeneity within populations. A fundamental step in this analysis is clustering, which groups cells with similar gene expression profiles to identify distinct cell types, states, and transitional populations. The selection of an appropriate clustering algorithm directly impacts the reliability of downstream biological interpretations, from discovering novel stem cell subtypes to understanding differentiation trajectories. Recent comprehensive benchmarking studies have systematically evaluated computational methods for clustering single-cell data across different omics modalities, including transcriptomics and proteomics. These studies reveal that despite the proliferation of available methods, three algorithms—scAIDE, scDCC, and FlowSOM—consistently demonstrate superior performance for transcriptomic and proteomic data, making them particularly promising candidates for the complex analysis of stem cell populations [31] [5]. This guide provides an objective comparison of these top-performing methods based on experimental data, offering stem cell researchers evidence-based recommendations for their analytical workflows.

Benchmarking Methodology and Evaluation Metrics

The performance data presented in this guide originates from a large-scale benchmark study published in Genome Biology (2025), which comprehensively evaluated 28 clustering algorithms on 10 paired transcriptomic and proteomic datasets [31] [5]. The benchmarking framework employed multiple validation metrics to ensure robust assessment:

- Clustering Quality Metrics: Adjusted Rand Index (ARI), Normalized Mutual Information (NMI), Clustering Accuracy (CA), and Purity were used to quantify how well the computational clusters matched established biological labels [5].

- Computational Efficiency Metrics: Peak memory usage and running time were measured to assess practical performance requirements [31].

- Robustness Evaluation: Algorithms were tested on 30 simulated datasets with varying noise levels and dataset sizes to evaluate their stability under different conditions [5].

- Multi-Omics Integration: The study also assessed how these methods performed on integrated transcriptomic and proteomic data using seven state-of-the-art integration methods [5].
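To make two of the clustering quality metrics concrete, the sketch below computes Purity and Normalized Mutual Information from a pair of labelings. The NMI normalization (arithmetic mean of the two entropies) is an assumption chosen for illustration, since the text does not say which variant the benchmark used, and the example labels are invented.

```python
from collections import Counter
from math import log


def purity(truth, pred):
    """Fraction of cells belonging to the majority true class of their cluster."""
    by_cluster = {}
    for t, p in zip(truth, pred):
        by_cluster.setdefault(p, Counter())[t] += 1
    return sum(max(c.values()) for c in by_cluster.values()) / len(truth)


def entropy(labels):
    """Shannon entropy (natural log) of a labeling."""
    n = len(labels)
    return -sum(c / n * log(c / n) for c in Counter(labels).values())


def normalized_mutual_information(truth, pred):
    """Mutual information divided by the mean of the two label entropies."""
    n = len(truth)
    row, col = Counter(truth), Counter(pred)
    mi = sum(
        c / n * log(c * n / (row[t] * col[p]))
        for (t, p), c in Counter(zip(truth, pred)).items()
    )
    denom = (entropy(truth) + entropy(pred)) / 2
    return 1.0 if denom == 0 else mi / denom


# Hypothetical biological labels and one imperfect clustering result.
truth = [0, 0, 0, 1, 1, 1]
pred = [0, 0, 1, 1, 1, 1]
pur = purity(truth, pred)
nmi = normalized_mutual_information(truth, pred)
```

Purity rewards over-clustering (it reaches 1 trivially when every cell is its own cluster), which is one reason benchmarks report it alongside chance-corrected or normalized metrics such as ARI and NMI.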

The benchmarking study ranked algorithms based on their overall performance across both transcriptomic and proteomic data. The following table summarizes the key findings for the top performers:

Table 1: Overall Performance Ranking of Top Clustering Algorithms

| Algorithm | Overall Rank (Transcriptomics) | Overall Rank (Proteomics) | Key Strengths | Computational Profile |
|---|---|---|---|---|
| scAIDE | 2 | 1 | Top performance in proteomics, robust across modalities | Moderate resource usage |
| scDCC | 1 | 2 | Best in transcriptomics, memory efficient | High memory efficiency |
| FlowSOM | 3 | 3 | Excellent robustness, balanced performance | Fast, memory efficient |

This comprehensive evaluation revealed that scAIDE, scDCC, and FlowSOM formed a distinct top tier of performers, significantly outperforming other methods in clustering accuracy and consistency across diverse data types [5]. While the benchmark did not exclusively use stem cell datasets, the consistent performance across multiple tissue types and biological systems suggests strong generalizability to stem cell research applications.

Detailed Algorithm Analysis

scAIDE: Advanced Deep Learning Framework

scAIDE (single-cell Autoencoder-Imputed Distance-preserved Embedding) represents a sophisticated deep learning approach specifically designed to address the high noise and dimensionality challenges of single-cell data [32].

Table 2: Technical Specifications of scAIDE

| Aspect | Specification | Biological Relevance |
|---|---|---|
| Architecture | Two-stage neural network: Autoencoder for imputation + MDS encoder for distance preservation | Effectively handles dropout events common in stem cell scRNA-seq |
| Clustering Method | Random Projection Hashing-based k-means (RPH-kmeans) | Identifies rare cell types (e.g., rare stem cell subtypes) |
| Scalability | Analyzed 1.3 million neural cells within 30 minutes | Suitable for large-scale stem cell atlas projects |
| Key Innovation | Distance-preserving embedding coupled with imbalance-aware clustering | Maintains biological relationships while addressing cell population size disparities |

The experimental validation of scAIDE demonstrated exceptional performance in identifying rare cell populations—a critical capability for stem cell research where transitional states or rare subtypes often represent biologically significant populations. In one application, scAIDE successfully identified Cajal-Retzius cells (approximately 1.6% of total population) in a neural dataset, highlighting its sensitivity for detecting minority populations [32]. For stem cell researchers, this sensitivity could translate to improved identification of early differentiation intermediates or rare progenitor cell types.

scDCC: Memory-Efficient Deep Clustering

scDCC represents another deep learning-based approach that excelled in the benchmarking studies, particularly noted for its memory efficiency while maintaining high accuracy [31] [5].