Cross-Platform Validation of Stem Cell scRNA-seq Findings: A Framework for Robustness and Reproducibility


Abstract

Single-cell RNA sequencing has revolutionized stem cell research by uncovering cellular heterogeneity and developmental trajectories. However, the reproducibility of findings across different experimental platforms, technologies, and analytical pipelines remains a significant challenge. This article provides a comprehensive framework for the cross-platform validation of stem cell scRNA-seq data, addressing foundational concepts, methodological applications, troubleshooting strategies, and comparative validation approaches. We explore how integrating systems biology with artificial intelligence (SysBioAI), leveraging large-scale foundation models, and implementing robust computational pipelines can enhance the reliability of stem cell research. Targeted at researchers, scientists, and drug development professionals, this review synthesizes current best practices to foster more reproducible and translatable stem cell science, ultimately accelerating the path to clinical application.

The Critical Need for Cross-Platform Validation in Stem Cell Biology

Single-cell RNA sequencing (scRNA-seq) has revolutionized stem cell biology by enabling the resolution of cellular heterogeneity within seemingly homogeneous populations. However, the power to discover novel stem cell subtypes or precisely characterize differentiation states is critically dependent on effectively managing the substantial technical variability inherent to scRNA-seq technologies. This technical noise, if unaccounted for, can masquerade as biological signal, potentially leading to false discoveries and irreproducible findings in cross-platform validation studies. Technical variability in scRNA-seq arises from multiple sources, including cell-to-cell variation in detection sensitivity, platform-specific biases, batch effects, and the high frequency of zero counts (dropouts) that can result from either biological absence of expression or technical failures in detection [1]. For stem cell researchers, these challenges are particularly acute when integrating datasets across different laboratories or platforms to validate key stem cell markers or differentiation pathways. This guide systematically compares the performance of major scRNA-seq platforms and analytical methods, providing experimental data and methodologies to empower robust cross-platform validation of stem cell findings.

Platform-Specific Performance Differences

Different scRNA-seq platforms exhibit distinct performance characteristics that directly impact data interpretation. A systematic comparison of two high-throughput 3′-scRNA-seq platforms—10× Chromium and BD Rhapsody—using complex tumor tissues revealed several key differences in performance metrics [2]. Both platforms demonstrated similar gene sensitivity, but BD Rhapsody datasets showed higher mitochondrial content. Critically, the study identified cell type detection biases between platforms: BD Rhapsody detected a lower proportion of endothelial and myofibroblast cells, while 10× Chromium showed lower gene sensitivity in granulocytes [2]. Furthermore, the sources of ambient RNA contamination differed between the plate-based and droplet-based platforms, highlighting how fundamental technological approaches influence the nature of technical artifacts.

The Zero-Inflation Problem and Detection Sensitivity

A fundamental characteristic of scRNA-seq data is the high proportion of zero counts, which can stem from either biological phenomena (true absence of expression) or technical artifacts (failure to detect expressed genes). This "zero-inflation problem" is particularly pronounced for lowly expressed genes, where technical dropouts are most frequent [1]. The proportion of genes reporting zero expression varies substantially across individual cells, and this variability is driven by both biological and technical factors [1]. In stem cell applications, where subtle expression changes in regulatory genes can have profound biological implications, this zero-inflation can obscure critical transcriptional events and complicate the identification of rare transitional states during differentiation.
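The per-cell and per-gene zero fractions described above can be computed directly from a count matrix. The following is a minimal sketch on a hypothetical toy matrix; the values and function names are illustrative assumptions, not taken from the cited study, and the calculation does not by itself separate biological zeros from technical dropouts.

```python
# Toy count matrix: rows are cells, columns are genes (hypothetical values).
counts = [
    [5, 0, 0, 2],
    [3, 1, 0, 0],
    [0, 0, 0, 4],
]

def zero_fraction_per_cell(matrix):
    """Fraction of genes with a zero count in each cell."""
    return [sum(1 for v in row if v == 0) / len(row) for row in matrix]

def zero_fraction_per_gene(matrix):
    """Fraction of cells with a zero count for each gene."""
    n_cells = len(matrix)
    n_genes = len(matrix[0])
    return [sum(1 for row in matrix if row[g] == 0) / n_cells
            for g in range(n_genes)]

cell_zero = zero_fraction_per_cell(counts)
gene_zero = zero_fraction_per_gene(counts)
```

Comparing the distribution of per-cell zero fractions between platforms or batches is one simple way to flag differences in detection sensitivity before any integration is attempted.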

Batch Effects and Experimental Confounding

Batch effects represent a major source of technical variability that can profoundly impact scRNA-seq studies. These effects occur when cells from different biological groups or conditions are processed, captured, or sequenced separately, introducing technical correlations that can confound biological interpretations [1]. The problem is particularly acute in stem cell research, where experimental designs often necessitate processing samples across multiple batches due to the temporal nature of differentiation protocols. Evidence demonstrates that systematic errors, including batch effects, can explain a substantial percentage of observed cell-to-cell expression variability, and this technical variation can be mistakenly interpreted as novel biological heterogeneity when unsupervised methods like clustering are applied [1].
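Whether a design is exposed to this kind of confounding can be checked before any sequencing is done. The sketch below assumes a hypothetical sample sheet given as (condition, batch) pairs with at least two conditions; the function name and the example labels are illustrative only.

```python
from collections import defaultdict

def is_fully_confounded(samples):
    """True when every batch contains samples from only one condition.

    samples: iterable of (condition, batch) pairs, one per sample.
    Assumes the design has at least two conditions.
    """
    conditions_by_batch = defaultdict(set)
    for condition, batch in samples:
        conditions_by_batch[batch].add(condition)
    return all(len(conds) == 1 for conds in conditions_by_batch.values())

# Each differentiation time point processed in its own run: batch and
# biology cannot be separated afterwards.
confounded_design = [("day0", "run1"), ("day3", "run2"), ("day7", "run3")]

# Every run contains every time point: batch can be modeled separately.
balanced_design = [("day0", "run1"), ("day7", "run1"),
                   ("day0", "run2"), ("day7", "run2")]
```

In the first design any difference between time points could equally be a difference between runs, which is the situation the paragraph above warns about for time-course differentiation protocols.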

Table 1: Major Sources of Technical Variability in scRNA-seq Data

| Variability Source | Impact on Data | Particular Relevance to Stem Cell Studies |
|---|---|---|
| Platform Differences | Cell type detection biases, varying gene sensitivity | Compromises cross-platform validation of stem cell markers |
| Zero Inflation/Dropouts | Underestimation of true expression, especially for low-abundance transcripts | Obscures detection of critical regulatory genes with low expression |
| Batch Effects | Artificial clustering, confounded group differences | Impacts longitudinal differentiation studies processed in multiple batches |
| Cell-to-Cell Detection Variation | Inconsistent measurement accuracy across cells | Affects characterization of heterogeneity within stem cell populations |
| Ambient RNA Contamination | Background noise from lysed cells | Particularly problematic in sensitive primary stem cell cultures |

Cross-Platform Performance Comparison

Experimental Design for Platform Benchmarking

Robust comparison of scRNA-seq platforms requires carefully designed experiments that control for biological variability while measuring technical performance. A multi-center study established a benchmark approach using two biologically distinct but well-characterized reference cell lines: a human breast cancer cell line (HCC1395) and a matched B lymphocyte line (HCC1395BL) derived from the same donor [3]. This design included both individual cell lines and defined mixtures processed across four sequencing centers and multiple platforms, including 10x Genomics Chromium (3' end counting), Fluidigm C1 (full-length), Fluidigm C1 HT (high-throughput), and Takara Bio ICELL8 (full-length) [3]. By including both separate and mixed samples across sites, this design enabled disentanglement of technical effects from biological variability, providing a template for rigorous platform assessment relevant to stem cell researchers considering cross-platform validation strategies.

Quantitative Performance Metrics Across Platforms

The performance differences between scRNA-seq platforms can be quantified through multiple metrics that are critical for experimental planning in stem cell studies. A systematic comparison of 10× Chromium and BD Rhapsody platforms provided specific quantitative measurements across key performance parameters [2]. Both platforms demonstrated similar gene sensitivity, but differed significantly in mitochondrial content and cell type representation. The study identified specific cell type detection biases, with BD Rhapsody showing lower proportions of endothelial cells and myofibroblasts, while 10× Chromium exhibited reduced gene sensitivity specifically in granulocytes [2]. These findings highlight that platform choice can directly influence which cell types are detectable and well-characterized—a critical consideration for stem cell researchers studying heterogeneous differentiation cultures or tissue regeneration models where multiple cell lineages may be present.

Table 2: Quantitative Performance Comparison of scRNA-seq Platforms

| Performance Metric | 10× Chromium | BD Rhapsody | Implications for Stem Cell Research |
|---|---|---|---|
| Gene Sensitivity | High | Similar to 10× Chromium | Both platforms suitable for detecting expressed transcripts in stem cells |
| Mitochondrial Content | Standard | Higher | BD Rhapsody may better capture mitochondrial transcripts in metabolic studies |
| Endothelial Cell Detection | Standard | Lower proportion | Platform choice critical for vascular differentiation studies |
| Myofibroblast Detection | Standard | Lower proportion | Important for stromal differentiation or fibrosis models |
| Granulocyte Gene Sensitivity | Lower | Standard | Platform consideration for hematopoietic differentiation studies |
| Ambient RNA Source | Droplet-based | Plate-based | Different contamination profiles require specific correction approaches |

Methodologies for Assessing Technical Variability

Experimental Design for Variability Assessment

Proper experimental design is fundamental for characterizing and mitigating technical variability in scRNA-seq studies. Key considerations include:

Replication Strategy: Incorporating both technical replicates (splitting the same sample for separate processing) and biological replicates (different biological samples processed similarly) enables separation of technical from biological variability [4]. Technical replicates measure noise from protocols or equipment, while biological replicates capture inherent variability in biological systems [4].

Sample Preparation Consistency: Maintaining stable temperature during sample preparation is critical, as cells held at 4°C maintain viability while those at room temperature begin to die, extruding cellular contents and causing aggregation that degrades data quality [4]. Gentle manipulation and short processing times reduce stress responses that can obscure true biological states.

Fixed vs. Fresh Samples: Fixation permits storage of samples for later processing, streamlining logistics for complex experiments like time-course differentiation studies. This approach minimizes batch effects that can occur when processing fresh samples at different times [4]. Plate-based combinatorial barcoding methods enable fixed sample processing, allowing researchers to store and later run up to 96 samples with a single kit [4].

Computational Methods for Quantifying Variability

Several computational approaches have been developed specifically to measure and account for technical variability in scRNA-seq data. A comprehensive evaluation of 14 different variability metrics identified distinct performance characteristics across different data structures [5]. The study found that platform-specific differences in gene expression variability tended to be larger than differences due to cell type for some metrics, highlighting the substantial impact of technical factors [5]. Among the evaluated methods, scran demonstrated the strongest all-round performance, showing similar estimated variability within the same cell types regardless of sequencing method, while methods like CV, DESeq2, edgeR, and glmGamPoi were more significantly impacted by sequencing platform differences [5]. This benchmarking provides stem cell researchers with evidence-based guidance for selecting appropriate variability metrics for their specific analytical needs.
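Of the metrics named above, the coefficient of variation (CV) is the simplest to state: the standard deviation of a gene's expression divided by its mean. A minimal sketch follows, assuming hypothetical normalized expression values for one gene in one cell type measured on two platforms; the platform labels and numbers are illustrative and do not come from the benchmark.

```python
from statistics import mean, pstdev

def coefficient_of_variation(values):
    """Population standard deviation divided by the mean (NaN if mean is 0)."""
    m = mean(values)
    if m == 0:
        return float("nan")
    return pstdev(values) / m

# Hypothetical expression of the same gene in the same cell type.
platform_a = [4.0, 5.0, 6.0, 5.0]
platform_b = [1.0, 9.0, 2.0, 8.0]

cv_a = coefficient_of_variation(platform_a)
cv_b = coefficient_of_variation(platform_b)
```

Both toy samples share the same mean, so the gap between `cv_a` and `cv_b` reflects spread alone. A large gap for the same cell type across platforms would point to a technical rather than biological source, which is the sensitivity the benchmark reports for CV.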

Integration and Batch Correction Methods

The ability to integrate datasets across platforms and batches is essential for cross-platform validation in stem cell research. Evaluation of multiple integration methods revealed distinctive performance characteristics, with Seurat v3, Harmony, BBKNN, and fastMNN all demonstrating effective batch correction for data derived from biologically similar samples across platforms and sites [3]. However, when samples contained large fractions of biologically distinct cell types, Seurat v3 over-corrected and misclassified cell types, while methods like limma and ComBat failed to remove batch effects [3]. These findings highlight that the choice of integration method must be tailored to the specific biological context and composition of samples—a critical consideration for stem cell researchers integrating data from different differentiation stages or across multiple experimental conditions.
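Why sample composition matters can be illustrated with a deliberately naive correction. The sketch below subtracts each batch's mean from its own cells; it is an illustrative assumption for exposition only and is not how Seurat, Harmony, BBKNN, fastMNN, limma, or ComBat operate. The expression values and site labels are hypothetical.

```python
from statistics import mean

def center_per_batch(values, batches):
    """Subtract each batch's mean from its own cells (naive correction)."""
    batch_means = {
        b: mean(v for v, vb in zip(values, batches) if vb == b)
        for b in set(batches)
    }
    return [v - batch_means[b] for v, b in zip(values, batches)]

# One marker gene; site1 holds only cell type A, site2 only cell type B.
expression = [10.0, 11.0, 2.0, 3.0]
sites = ["site1", "site1", "site2", "site2"]

corrected = center_per_batch(expression, sites)
```

Before correction the two cell types differ by roughly eight units. After correction both sites are centered on zero and that difference has disappeared, because the correction could not tell a site effect from a cell type effect. This is a toy version of the over-correction risk that arises when batches contain biologically distinct populations.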

Advanced Solutions for Technical Variability

Novel Computational Frameworks

Recent computational advances have produced increasingly sophisticated methods for addressing technical artifacts in scRNA-seq data. The ZILLNB framework integrates zero-inflated negative binomial regression with deep generative modeling to systematically decompose technical variability from intrinsic biological heterogeneity [6]. This approach employs an ensemble architecture combining Information Variational Autoencoder and Generative Adversarial Network to learn latent representations at cellular and gene levels, with parameters iteratively optimized through an Expectation-Maximization algorithm [6]. In benchmarking evaluations, ZILLNB achieved superior performance in cell type classification tasks, with improvements in Adjusted Rand Index ranging from 0.05 to 0.2 over existing methods including VIPER, scImpute, DCA, and others [6]. For stem cell researchers, such advanced denoising methods can enhance the detection of rare cell states and improve the accuracy of differential expression analysis in complex differentiation systems.
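The Adjusted Rand Index used in these comparisons measures agreement between a clustering and reference cell type labels, corrected for chance. The following is a minimal standard-library sketch of the standard pair-counting formula; the function name and example labels are illustrative assumptions, and the code is not taken from the ZILLNB benchmark.

```python
from collections import Counter
from math import comb

def adjusted_rand_index(labels_true, labels_pred):
    """Adjusted Rand Index between two labelings of the same cells."""
    if len(labels_true) != len(labels_pred):
        raise ValueError("labelings must have the same length")
    total_pairs = comb(len(labels_true), 2)
    if total_pairs == 0:
        return 1.0  # fewer than two cells: agreement is trivial
    # Count pairs of cells that are grouped together.
    joint = Counter(zip(labels_true, labels_pred))
    together_both = sum(comb(c, 2) for c in joint.values())
    together_true = sum(comb(c, 2) for c in Counter(labels_true).values())
    together_pred = sum(comb(c, 2) for c in Counter(labels_pred).values())
    expected = together_true * together_pred / total_pairs
    maximum = (together_true + together_pred) / 2
    if maximum == expected:
        return 1.0  # degenerate case: both labelings are trivially identical
    return (together_both - expected) / (maximum - expected)

reference = ["esc", "esc", "fib", "fib"]
perfect = adjusted_rand_index(reference, [1, 1, 0, 0])
crossed = adjusted_rand_index(reference, [0, 1, 0, 1])
```

A value of 1 indicates identical partitions regardless of how clusters are named, values near 0 indicate chance-level agreement, and negative values indicate agreement worse than chance. On this scale, a gain of 0.05 to 0.2 is a moderate to substantial shift.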

Simultaneous DNA-RNA Analysis

An emerging approach for addressing technical variability involves simultaneous measurement of DNA and RNA from the same single cells. The SDR-seq tool enables highly sensitive capture of genomic variations and RNA together in the same cell, increasing precision and scalability compared to previous technologies [7]. This method is particularly valuable for stem cell research applications because it can determine variations in non-coding regions of the genome, where more than 95% of disease-associated variants occur, and directly link these genetic variants to gene expression consequences in the same cell [7]. For cross-platform validation studies, this integrated approach provides an additional layer of biological ground truth that can help distinguish technical artifacts from genuine biological differences.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagent Solutions for scRNA-seq Experiments

| Reagent/Resource | Function | Application Notes |
|---|---|---|
| HEPES or Hanks' Buffered Salt (without Ca²⁺/Mg²⁺) | Prevents cell aggregation during preparation | Cations in standard media cause cell clumping; calcium/magnesium-free media reduces aggregation |
| Ficoll or Optiprep | Density gradient centrifugation media | Separates viable cells from debris; effective for PBMC fractionation and nuclei cleaning (e.g., myelin sheath removal in brain tissue) |
| Commercial Enzyme Cocktails (e.g., Miltenyi Biotec) | Tissue dissociation | Plug-and-play kits for generating single-cell suspensions from various tissue types |
| SMART-Seq v4 Ultra Low Input RNA Kit | Full-length cDNA synthesis | Used in Fluidigm C1 system for full-length scRNA-seq; superior for detecting alternative splicing and sequence variants |
| 10x Genomics Chromium Library Prep Kit | 3' end counting-based library construction | Incorporates UMIs for improved quantification; high-throughput droplet-based system |
| Fixed Cell Preservation Solutions | Sample stabilization | Enables batch processing of time-course experiments; critical for minimizing batch effects in complex differentiation studies |

Experimental Workflows for Variability Assessment

Workflow for Platform Comparison Studies

Figure: Platform comparison workflow.

Technical Variability Identification Pipeline

Figure: Variability assessment pipeline.

Technical variability in scRNA-seq data presents significant challenges for cross-platform validation of stem cell research findings, but systematic characterization of these effects enables effective mitigation strategies. The performance differences between major scRNA-seq platforms—including distinct cell type detection biases, varying sensitivity profiles, and different sources of technical noise—highlight the importance of platform selection tailored to specific research questions in stem cell biology. Furthermore, experimental design choices such as appropriate replication, sample preparation consistency, and computational method selection critically impact the ability to distinguish technical artifacts from genuine biological signals. As the field advances, emerging technologies like simultaneous DNA-RNA sequencing and sophisticated deep learning-based denoising methods offer promising approaches for further enhancing the reproducibility and reliability of scRNA-seq findings across platforms. For stem cell researchers, embracing these rigorous assessment and mitigation approaches will be essential for building robust, validated models of stem cell biology that transcend individual technological platforms and laboratory-specific technical influences.

The precise definition of cellular states and differentiation potency represents a fundamental challenge in stem cell biology and single-cell genomics. As single-cell RNA-sequencing (scRNA-seq) technologies enable unprecedented resolution of cellular heterogeneity, the field requires robust, quantitative metrics to characterize cellular identity and functional potential. The differentiation potency of a single cell—its capacity to give rise to diverse specialized progeny—has traditionally been assessed through functional assays in vitro and in vivo. However, these approaches are labor-intensive, low-throughput, and impractical for large-scale studies. The emergence of computational frameworks that leverage scRNA-seq data now provides powerful in silico methods for estimating cellular potency across diverse biological systems, from normal development to cancer [8].

Within the context of cross-platform validation of stem cell findings, establishing consensus metrics for cellular states and potency is particularly crucial. As different scRNA-seq platforms and processing pipelines generate technical variations, biologically meaningful definitions must transcend these methodological differences. This review synthesizes current computational approaches for quantifying cellular potency, compares their underlying methodologies and applications, and provides a framework for validating these metrics across experimental platforms. By establishing standardized evaluation criteria, researchers can more reliably compare stem cell states and differentiation potentials across studies, ultimately enhancing reproducibility in regenerative medicine and drug development applications.

Computational Frameworks for Quantifying Cellular Potency

Signaling Entropy: A Network-Based Potency Metric

Signaling entropy has emerged as a powerful computational approach for estimating differentiation potency from scRNA-seq data without requiring feature selection. This method approximates a cell's differentiation potential by quantifying the signaling promiscuity or uncertainty of its transcriptome within the context of a protein-protein interaction network [8]. The core premise is that pluripotent cells, capable of differentiating into all major lineages, maintain balanced activity across diverse signaling pathways, resulting in high entropy. In contrast, differentiated cells exhibit more focused signaling patterns corresponding to their specific lineage commitment, manifesting as lower entropy [8].

The mathematical foundation of signaling entropy involves modeling cellular signaling as a probabilistic process on a network. The algorithm integrates a cell's transcriptomic profile with a high-quality protein-protein interaction (PPI) network to define a cell-specific random walk. The underlying assumption is that two genes encoding interacting proteins are more likely to functionally interact if both are highly expressed. The global signaling entropy is then computed as the entropy rate of this probabilistic signaling process, effectively quantifying the overall signaling promiscuity or the efficiency with which signaling can diffuse throughout the network [8].
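The random-walk construction described above can be made concrete on a toy network. The sketch below assumes transition probabilities proportional to the expression of each neighboring gene, with the stationary distribution of the resulting reversible walk, and returns the unnormalized entropy rate. The three-gene network, the expression values, and the function name are hypothetical, and the code omits any normalization or preprocessing that a published implementation may apply.

```python
from math import log

def signaling_entropy_rate(expression, adjacency):
    """Entropy rate of an expression-weighted random walk on a PPI graph.

    expression: dict gene -> strictly positive expression value
    adjacency:  dict gene -> set of neighboring genes (undirected graph)
    """
    # Expression mass in each gene's neighborhood.
    neighbor_mass = {g: sum(expression[n] for n in adjacency[g])
                     for g in adjacency}
    # Normalizer for the stationary distribution of the walk.
    total = sum(expression[g] * neighbor_mass[g] for g in adjacency)

    entropy_rate = 0.0
    for g, neighbors in adjacency.items():
        stationary = expression[g] * neighbor_mass[g] / total
        local_entropy = 0.0
        for n in neighbors:
            p = expression[n] / neighbor_mass[g]  # step probability g -> n
            local_entropy -= p * log(p)
        entropy_rate += stationary * local_entropy
    return entropy_rate

# Hypothetical fully connected three-gene network.
graph = {"A": {"B", "C"}, "B": {"A", "C"}, "C": {"A", "B"}}
balanced = signaling_entropy_rate({"A": 1.0, "B": 1.0, "C": 1.0}, graph)
focused = signaling_entropy_rate({"A": 100.0, "B": 1.0, "C": 1.0}, graph)
```

With balanced expression every step is equally likely and the entropy rate equals log 2, its maximum for this network. When one gene dominates, most steps lead to that gene, the walk becomes more predictable, and the entropy rate falls, mirroring the contrast between promiscuous and lineage-focused signaling.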

Validation studies have demonstrated that signaling entropy strongly correlates with established pluripotency measures. In an analysis of 1,018 single-cell RNA-seq profiles of human embryonic stem cells (hESCs) and their derivatives, pluripotent hESCs exhibited the highest signaling entropy values, followed by multipotent progenitors (neural progenitors, definitive endoderm progenitors), with terminally differentiated cells (fibroblasts, trophoblasts, endothelial cells) showing the lowest values [8]. The differences were highly statistically significant (Wilcoxon rank-sum P<1e-50), and signaling entropy correlated strongly with an established pluripotency gene expression signature (Spearman correlation=0.91, P<1e-500) [8].

Alternative Computational Approaches for Potency Assessment

While signaling entropy represents a network-based approach, other computational methods have been developed to assess cellular potency from single-cell transcriptomic data. The single-cell entropy (SCENT) algorithm leverages signaling entropy to independently order single cells in pseudo-time without requiring feature selection or clustering, providing advantages over trajectory inference methods like Monocle, SCUBA, and Diffusion Pseudotime [8].

CytoTRACE is another computational framework that predicts differentiation potency based on the premise that less differentiated cells express more diverse genes than their more specialized counterparts. By analyzing the number of genes expressed per cell, CytoTRACE can reconstruct differentiation trajectories and identify progenitor states [9].
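The premise behind this approach can be sketched as a simple count of detected genes per cell. The code below illustrates only that raw proxy on hypothetical cells and is not the CytoTRACE algorithm itself; the cell names, gene names, and function names are assumptions.

```python
def genes_detected(cell_counts):
    """Number of genes with a non-zero count in one cell."""
    return sum(1 for count in cell_counts.values() if count > 0)

def rank_by_gene_count(cells):
    """Cell IDs ordered from most to fewest detected genes."""
    return sorted(cells, key=lambda cid: genes_detected(cells[cid]),
                  reverse=True)

# Hypothetical cells: gene -> count.
cells = {
    "differentiated": {"g1": 9, "g2": 0, "g3": 0, "g4": 0},
    "progenitor": {"g1": 2, "g2": 1, "g3": 4, "g4": 1},
}
ranking = rank_by_gene_count(cells)
```

Because the number of detected genes also depends on capture efficiency and sequencing depth, a raw count like this is sensitive to the platform differences discussed earlier.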

Pluripotency gene expression signatures offer a more direct approach by scoring cells based on the expression of established pluripotency markers like NANOG, POU5F1 (OCT4), and SOX2. While conceptually straightforward and widely used, this approach requires prior knowledge of relevant markers and may miss novel cell states or heterogeneous populations [8].
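A marker-based score of this kind can be as simple as averaging the expression of the signature genes in each cell. The sketch below is a minimal version using the three markers named above; the averaging scheme, function name, and expression values are illustrative assumptions rather than a published scoring method.

```python
PLURIPOTENCY_MARKERS = ("NANOG", "POU5F1", "SOX2")

def pluripotency_score(cell_expression, markers=PLURIPOTENCY_MARKERS):
    """Mean expression of the marker genes; undetected genes count as zero."""
    return sum(cell_expression.get(g, 0.0) for g in markers) / len(markers)

# Hypothetical normalized expression values.
hesc_like = {"NANOG": 3.0, "POU5F1": 6.0, "SOX2": 3.0, "COL1A1": 0.0}
fibroblast_like = {"COL1A1": 8.0}

hesc_score = pluripotency_score(hesc_like)
fibroblast_score = pluripotency_score(fibroblast_like)
```

The limitation noted above is visible in the code: a cell state that does not express the predefined markers scores zero regardless of its actual potency.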

Table 1: Comparison of Computational Methods for Assessing Cellular Potency

| Method | Underlying Principle | Key Advantages | Limitations |
|---|---|---|---|
| Signaling Entropy | Quantifies signaling promiscuity in PPI network | No feature selection required; captures biological context | Dependent on quality and completeness of PPI network |
| SCENT | Implements signaling entropy for trajectory inference | Independent of clustering; robust across cell types | Computationally intensive for very large datasets |
| CytoTRACE | Uses gene counts per cell as potency proxy | Conceptually simple; fast computation | May be confounded by technical variations in gene detection |
| Pluripotency Scores | Expression of established pluripotency markers | Easy to implement and interpret | Limited to predefined gene sets; may miss novel states |

Experimental Validation of Potency Metrics

Validation in Developmental Systems

Developmental systems provide ideal contexts for validating computational potency metrics due to their well-characterized differentiation hierarchies. In one comprehensive analysis, signaling entropy was computed for 3,256 non-malignant cells from melanoma microenvironments, including T-cells, B-cells, natural killer cells, macrophages, endothelial cells, and cancer-associated fibroblasts [8]. The results confirmed established biological knowledge: lymphocytes exhibited similar average signaling entropy values, while intra-tumoral macrophages showed marginally higher entropy. Crucially, endothelial cells and cancer-associated fibroblasts demonstrated the highest signaling entropy among these non-malignant cell types, consistent with their known phenotypic plasticity [8].

Time-course differentiation experiments further validate the utility of signaling entropy. When applied to scRNA-seq data from hESCs differentiating into definitive endoderm progenitors via mesoendoderm intermediates, signaling entropy values showed a substantial decrease only after 72 hours, aligning with known differentiation kinetics where definitive endoderm commitment occurs around 3-4 days post-induction [8]. Similarly, in developing mouse lung epithelium, signaling entropy decreased continuously until adulthood, reflecting gradual differentiation, and could discriminate between bipotent progenitors and alveolar cell types at embryonic day 18 [8].

Application to Cancer Stem Cell Populations

Cellular potency metrics have proven valuable for identifying and characterizing cancer stem cell populations, which drive tumor initiation and therapeutic resistance. In breast cancer, integrative analysis of scRNA-seq data revealed seven consensus cancer cell states (CCSs) recurring across patients [9]. When researchers applied potency metrics including signaling entropy (SCENT) and CytoTRACE to these states, they found that certain CCSs (hc2, hc3, hc7, and hc10) exhibited higher stemness scores than others [9]. These high-potency states showed enrichment in HER2+/triple-negative breast cancer patients and corresponded closely to luminal progenitor or basal cell phenotypes, suggesting potential cells of origin for these aggressive cancer subtypes [9].

Cross-Platform Validation Frameworks

The establishment of comprehensive reference datasets enables robust validation of potency metrics across platforms. Recently, researchers integrated six published human scRNA-seq datasets covering development from zygote to gastrula stages to create a unified reference atlas [10]. This integrated resource, comprising 3,304 early human embryonic cells, provides a standardized framework for benchmarking cellular potency metrics and authenticating stem cell-based embryo models [10]. The reference includes detailed lineage annotations validated against human and non-human primate datasets, allowing researchers to project query datasets onto this reference and obtain predicted cell identities with associated potency expectations.

The UniverSC tool provides a flexible cross-platform solution for scRNA-seq data processing, supporting over 40 different technologies through a unified workflow [11]. By serving as a wrapper for Cell Ranger (10x Genomics) while accommodating diverse barcode and UMI configurations, UniverSC enables consistent processing across datasets generated from different platforms. This approach mitigates technical variations that could confound potency assessments, as demonstrated by improved integration of mouse primary cell data from different platforms (higher Silhouette score: 0.43 vs. 0.36) compared to platform-specific processing [11].
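The silhouette score quoted above summarizes how tightly points with the same label group together relative to the nearest other group. The following is a minimal standard-library sketch using Euclidean distance on hypothetical two-dimensional embeddings; how the cited values were computed is not specified here, so the labels, coordinates, and function name are assumptions.

```python
from math import dist

def silhouette_score(points, labels):
    """Mean silhouette width over all points (Euclidean distance)."""
    clusters = {}
    for idx, label in enumerate(labels):
        clusters.setdefault(label, []).append(idx)
    if len(clusters) < 2:
        raise ValueError("need at least two clusters")
    widths = []
    for i, label in enumerate(labels):
        own = [j for j in clusters[label] if j != i]
        if not own:
            widths.append(0.0)  # common convention for singleton clusters
            continue
        # Mean distance to the point's own cluster.
        a = sum(dist(points[i], points[j]) for j in own) / len(own)
        # Mean distance to the nearest other cluster.
        b = min(
            sum(dist(points[i], points[j]) for j in members) / len(members)
            for other, members in clusters.items() if other != label
        )
        widths.append(0.0 if max(a, b) == 0 else (b - a) / max(a, b))
    return sum(widths) / len(widths)

embedding = [(0.0, 0.0), (0.0, 1.0), (10.0, 0.0), (10.0, 1.0)]
by_cell_type = silhouette_score(embedding, ["hsc", "hsc", "mono", "mono"])
mislabeled = silhouette_score(embedding, ["hsc", "mono", "hsc", "mono"])
```

When the labels are cell types, a higher score after integration indicates that cells of the same type from different platforms sit closer together than cells of different types.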

Experimental Protocols for scRNA-seq of Stem Cell Populations

Cell Isolation and Preparation

The accuracy of potency metrics depends critically on sample preparation quality. For hematopoietic stem/progenitor cell (HSPC) analysis, researchers have optimized a protocol using human umbilical cord blood. Mononuclear cells are first isolated via Ficoll-Paque density gradient centrifugation (30 minutes at 400×g, 4°C) [12]. Cells are then stained with antibody cocktails for fluorescence-activated cell sorting (FACS):

- Lineage markers cocktail (FITC-conjugated): CD235a, CD2, CD3, CD14, CD16, CD19, CD24, CD56, CD66b

- CD45 (PE-Cy7-conjugated)

- CD34 (PE-conjugated) or CD133 (APC-conjugated) [12]

Cells are stained in the dark at 4°C for 30 minutes, then washed and resuspended in RPMI-1640 with 2% FBS before sorting. The sorting strategy typically gates small events (2-15 μm), selects lineage-negative populations, then identifies CD34+Lin-CD45+ or CD133+Lin-CD45+ HSPCs [12]. This approach enables HSPC analysis even with limited cell numbers, providing high-quality input for scRNA-seq.
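The sequential gating logic can be written as a single boolean test per event. The sketch below assumes that each marker has already been thresholded into positive or negative, which in practice is done on fluorescence intensity in the cytometer software; the field names and example events are hypothetical.

```python
def is_hspc(event, stem_marker="CD34"):
    """Apply the size, Lin-, CD45+ and stem-marker+ gates to one event."""
    in_size_gate = 2.0 <= event["size_um"] <= 15.0
    return (in_size_gate
            and not event["Lin"]
            and event["CD45"]
            and event[stem_marker])

events = [
    {"size_um": 8.0, "Lin": False, "CD45": True, "CD34": True, "CD133": False},
    {"size_um": 8.0, "Lin": True, "CD45": True, "CD34": True, "CD133": False},
    {"size_um": 20.0, "Lin": False, "CD45": True, "CD34": True, "CD133": True},
    {"size_um": 6.0, "Lin": False, "CD45": True, "CD34": False, "CD133": True},
]

cd34_hspcs = [e for e in events if is_hspc(e, "CD34")]
cd133_hspcs = [e for e in events if is_hspc(e, "CD133")]
```

The second event fails the lineage gate and the third fails the size gate, so each sorting strategy retains exactly one of the four toy events.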

Library Preparation and Sequencing

For scRNA-seq library preparation, the sorted cells are processed immediately using the Chromium X Controller (10X Genomics) and Chromium Next GEM Chip G Single Cell Kit [12]. Libraries are constructed using the Chromium Next GEM Single Cell 3′ GEM, Library & Gel Bead Kit v3.1, with the Single Index Kit T Set A, following manufacturer guidelines. Sequencing is typically performed on Illumina NextSeq 1000/2000 systems with P2 flow cell chemistry (200 cycles) in paired-end mode (Read 1: 28 bp, Read 2: 90 bp), targeting approximately 25,000 reads per cell [12].
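The 28 bp Read 1 carries the cell barcode and the UMI, while Read 2 carries the transcript sequence. The sketch below assumes the 16 bp barcode plus 12 bp UMI layout of the 10x 3′ v3 chemistry family, a detail not stated in the protocol above, and adds a simple sequencing-depth calculation. The function names and the example read are hypothetical.

```python
BARCODE_LEN = 16  # assumed cell-barcode length in bp
UMI_LEN = 12      # assumed UMI length in bp

def split_read1(read1):
    """Split a 28 bp Read 1 into (cell barcode, UMI)."""
    expected = BARCODE_LEN + UMI_LEN
    if len(read1) != expected:
        raise ValueError(f"expected {expected} bp, got {len(read1)}")
    return read1[:BARCODE_LEN], read1[BARCODE_LEN:]

def reads_required(n_cells, reads_per_cell=25_000):
    """Total read pairs needed to reach the target depth per cell."""
    return n_cells * reads_per_cell

barcode, umi = split_read1("ACGTACGTACGTACGT" + "TTTGGGCCCAAA")
run_budget = reads_required(5_000)
```

At the stated target of approximately 25,000 reads per cell, a hypothetical capture of 5,000 cells would need about 125 million read pairs, which is useful when deciding how many libraries to pool on one flow cell.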

Quality Control and Data Processing

Rigorous quality control is essential for reliable potency assessment. The initial processing typically involves:

- Demultiplexing and FASTQ conversion using bcl2fastq within Cell Ranger mkfastq

- Alignment and feature counting using Cell Ranger count with reference genome GRCh38

- Filtering out cells with fewer than 200 or more than 2,500 transcripts, or with more than 5% mitochondrial transcripts [12]
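The filtering step in the list above can be expressed as a small predicate. This is a minimal sketch using the thresholds quoted from the protocol; the function name, argument names, and example cells are illustrative assumptions, and in practice the same filter would be applied inside an analysis toolkit.

```python
def passes_qc(n_transcripts, pct_mito,
              min_transcripts=200, max_transcripts=2_500, max_pct_mito=5.0):
    """Keep cells within the transcript range and at or below the mito cutoff."""
    in_range = min_transcripts <= n_transcripts <= max_transcripts
    return in_range and pct_mito <= max_pct_mito

# Hypothetical per-cell summaries: (cell ID, transcripts, % mitochondrial).
cell_stats = [
    ("cell_1", 1_500, 2.0),   # kept
    ("cell_2", 150, 2.0),     # too few transcripts
    ("cell_3", 3_000, 1.0),   # too many transcripts, possible doublet
    ("cell_4", 1_500, 7.5),   # high mitochondrial fraction
]
kept = [cid for cid, n, mito in cell_stats if passes_qc(n, mito)]
```

Fixed cutoffs like these are platform dependent. Because mitochondrial content and gene sensitivity differ between technologies, thresholds tuned on one platform may need to be revisited before being applied to another.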

For cross-platform compatibility, the UniverSC pipeline provides a unified processing framework, handling technology-specific barcode and UMI configurations while generating consistent output formats [11]. This standardized approach facilitates comparative analyses across different experimental setups.

Visualization of Cellular Potency Concepts

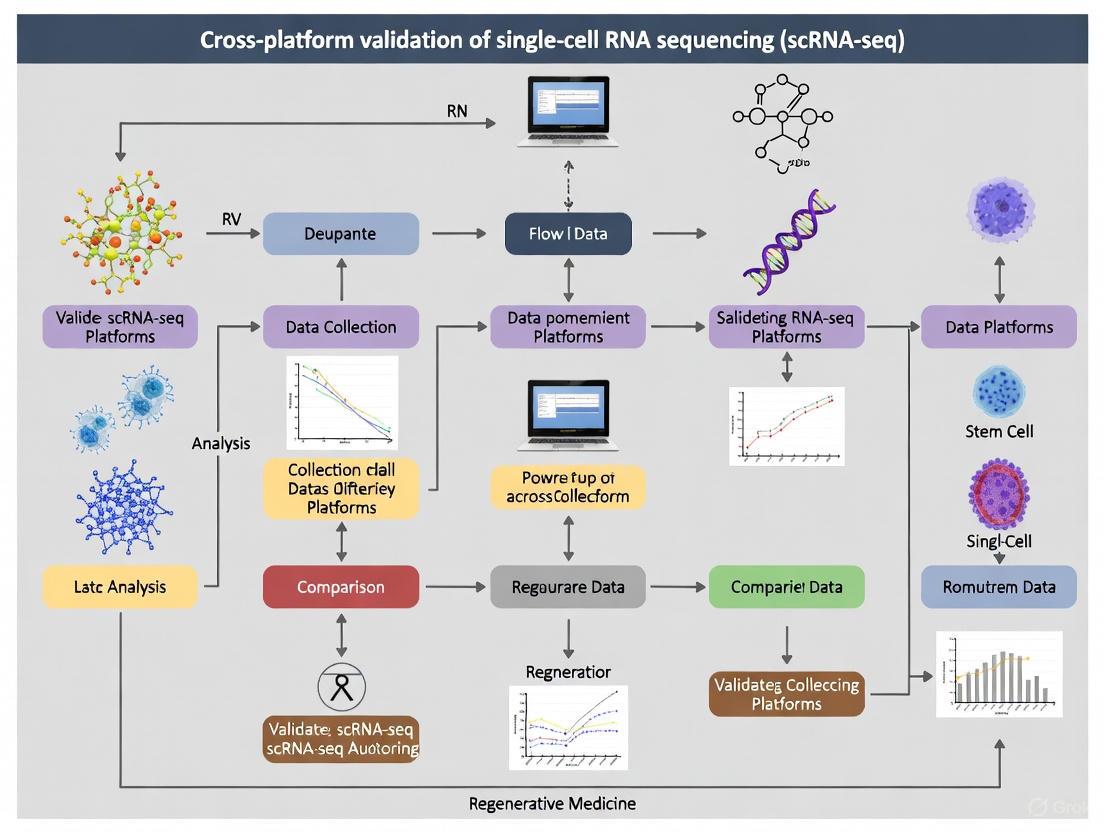

Signaling Entropy Calculation Workflow

Figure 1: Signaling entropy quantifies cellular potency by integrating scRNA-seq data with protein-protein interaction networks to calculate signaling promiscuity.

Cross-Platform Validation Framework

Figure 2: Cross-platform validation framework integrates data from multiple technologies using unified processing and reference benchmarks.

Essential Research Reagent Solutions

Table 2: Key Research Reagents for Stem Cell scRNA-seq Studies

| Reagent Category | Specific Examples | Research Application |
|---|---|---|
| Cell Surface Markers | CD34, CD133, CD45, Lineage cocktail | Isolation of specific stem/progenitor populations by FACS |
| scRNA-seq Kits | Chromium Next GEM Single Cell 3' Kit (10X Genomics) | Library preparation for single-cell transcriptomics |
| Analysis Pipelines | Cell Ranger, UniverSC, Seurat | Processing and analysis of scRNA-seq data |
| Reference Datasets | Human embryo atlas (zygote to gastrula) | Benchmarking and validation of cellular potency metrics |
| Protein Interaction Networks | STRING, BioGRID, Human Reference Interactome | Context for signaling entropy calculations |

Consensus Recommendations and Future Directions

The integration of computational potency metrics with experimental validation across platforms provides a robust framework for defining cellular states in stem cell research. Based on current evidence, signaling entropy offers particular utility as a generalizable, network-based approach that requires no prior feature selection and demonstrates strong correlation with established pluripotency measures [8]. The SCENT algorithm provides an implementation specifically optimized for single-cell data, enabling potency assessment and trajectory inference without clustering [8].

For cross-platform validation, researchers should leverage reference datasets such as the integrated human embryo atlas [10] and unified processing tools like UniverSC [11] to minimize technical variations. Experimental designs should incorporate FACS-sorted populations with well-defined markers [12] [13] and implement rigorous quality control thresholds during data processing.

Future developments will likely focus on multi-omic integration, combining transcriptomic, epigenetic, and proteomic data to refine potency assessments. The application of these frameworks to clinical samples, particularly cancer stem cell populations [9], holds promise for identifying therapeutic targets and predicting treatment responses. As single-cell technologies continue to evolve, establishing consensus metrics and validation standards will be crucial for advancing stem cell biology and translational applications.

The Impact of Batch Effects and Biological Heterogeneity on Data Interpretation

In the field of stem cell research, single-cell RNA sequencing (scRNA-seq) has become an indispensable tool for dissecting cellular heterogeneity, identifying novel subpopulations, and understanding differentiation trajectories. However, the integration of data from different experiments, laboratories, or technological platforms introduces technical variations known as batch effects, which can obscure true biological signals and lead to erroneous conclusions [14] [15]. This challenge is particularly acute in cross-platform validation studies where distinguishing subtle technical artifacts from genuine biological heterogeneity is critical for robust scientific discovery. Batch effects can manifest as systematic shifts in gene expression profiles stemming from differences in sample preparation, sequencing runs, instrumentation, or experimental conditions [14]. Simultaneously, biological heterogeneity—especially relevant in stem cell populations comprising diverse transitional states—introduces another layer of complexity in data interpretation. This article provides a comprehensive comparison of batch effect correction methodologies and their performance in addressing these challenges, with a specific focus on applications in stem cell scRNA-seq research.

Understanding Batch Effects and Biological Heterogeneity

Batch effects in scRNA-seq data arise from multiple technical sources, including differences in reagents, instruments, sequencing runs, and personnel [14]. These unwanted variations can obscure true biological signals and lead to incorrect inferences in downstream analyses. In stem cell research, where identifying subtle differences between transitional states is common, batch effects can be particularly problematic. For example, differences in enzyme batches used for cell dissociation or variations in ambient temperature during cell capture can introduce batch effects that might be misinterpreted as biologically relevant differences between stem cell populations [14].

Beyond technical factors, biological variation can also act as a batch effect when it is not the focus of investigation. In cross-platform validation studies, variation between donors, sample collection times, or environmental conditions can systematically overshadow the biological signals of interest [14]. This is especially relevant when integrating stem cell data from multiple sources or time points, where distinguishing between technical artifacts and genuine biological heterogeneity becomes paramount for valid interpretation.

The Dual Challenge: Removing Technical Noise While Preserving Biological Variance

The central challenge in batch effect correction lies in removing technical variations while preserving biological heterogeneity, especially subtle cell states and rare populations. Over-correction can remove genuine biological signals, while under-correction can lead to false discoveries based on technical artifacts rather than biology [16]. This balance is particularly crucial in stem cell biology, where rare transitional states or progenitor populations may hold keys to understanding differentiation processes and developing therapeutic applications.

Comparative Analysis of Batch Effect Correction Methods

Methodologies and Underlying Algorithms

Various computational approaches have been developed to address batch effects in scRNA-seq data, each with distinct theoretical foundations and methodological frameworks.

Table 1: Core Algorithms and Methodological Approaches

| Method | Underlying Algorithm | Key Mechanism | Output |

|---|---|---|---|

| Harmony [17] | Iterative clustering and correction | Uses PCA for dimensionality reduction, then iteratively removes batch effects by maximizing diversity of batches within clusters | Integrated low-dimensional embedding |

| Seurat Integration [14] [17] | Canonical Correlation Analysis (CCA) and Mutual Nearest Neighbors (MNN) | Identifies "anchors" between datasets using CCA and MNN, then corrects expression values based on these anchors | Corrected gene expression matrix |

| Scanorama [17] | Mutual Nearest Neighbors (MNN) in reduced dimension | Identifies MNNs across batches in PCA space and performs similarity-weighted panorama stitching | Integrated low-dimensional embedding |

| BBKNN [14] [17] | Batch Balanced K-Nearest Neighbors | Constructs a graph that prioritizes connections between similar cells across batches rather than within batches | Corrected k-neighbor graph |

| LIGER [17] | Integrative Non-negative Matrix Factorization (iNMF) | Decomposes gene expression into shared and dataset-specific factors, then performs quantile normalization | Factorized expression matrices |

| scVI [14] [17] | Variational Autoencoder (VAE) | Uses probabilistic modeling to learn a batch-invariant latent representation while accounting for count-based noise | Latent representation and denoised counts |

| scDML [16] | Deep Metric Learning | Uses initial clustering and nearest neighbor information with triplet loss to learn batch-invariant representations | Corrected low-dimensional embedding |

| scCRAFT [18] | Variational Autoencoder with Dual-Resolution Triplet Loss | Combines VAE reconstruction with domain adaptation and topology-preserving triplet loss | Batch-corrected latent embeddings |
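Several of the methods in Table 1 rely on mutual nearest neighbors. A minimal sketch of the pairing step is shown below: a cell in one batch and a cell in another form a pair only if each is among the other's k nearest cross-batch neighbors. The function name and toy coordinates are hypothetical, brute-force distances are used for clarity, and this is not the implementation used by Seurat or Scanorama.

```python
import numpy as np

def mutual_nearest_neighbors(batch_a, batch_b, k=3):
    """Return sorted (i, j) pairs where cell i of batch A and cell j of
    batch B are each among the other's k nearest cross-batch neighbors."""
    a = np.asarray(batch_a, dtype=float)
    b = np.asarray(batch_b, dtype=float)

    # Pairwise squared Euclidean distances, shape (n_a, n_b).
    dists = ((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=2)

    # Nearest B cells for every A cell, and nearest A cells for every B cell.
    nn_of_a = np.argsort(dists, axis=1)[:, :min(k, b.shape[0])]
    nn_of_b = np.argsort(dists.T, axis=1)[:, :min(k, a.shape[0])]

    pairs = []
    for i in range(a.shape[0]):
        for j in nn_of_a[i]:
            if i in nn_of_b[j]:  # the relationship must hold in both directions
                pairs.append((i, int(j)))
    return sorted(pairs)

# Toy example in a 2-D embedding: two shared cell states plus one
# batch-specific outlier in batch B that should stay unpaired.
toy_a = [[0.0, 0.0], [10.0, 10.0]]
toy_b = [[0.5, 0.0], [10.0, 10.5], [100.0, 100.0]]
toy_pairs = mutual_nearest_neighbors(toy_a, toy_b, k=1)
```

The outlier in batch B has a nearest neighbor in batch A, but that neighbor does not reciprocate, so no pair is formed. This asymmetry is what lets MNN-based methods avoid forcing batch-specific populations onto unrelated cells.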

Performance Benchmarking and Quantitative Comparisons

Independent benchmarking studies have evaluated batch correction methods across multiple datasets with different characteristics. These evaluations typically assess two key aspects: batch mixing (how well cells from different batches integrate) and bio-conservation (how well biological signals like cell type distinctions are preserved).

Table 2: Performance Benchmarking Across Methodologies

| Method | Batch Mixing Score | Bio-Conservation Score | Computational Efficiency | Handling of Rare Cell Types |

|---|---|---|---|---|

| Harmony [17] | High | High | Fastest among top performers | Moderate |

| Seurat 3 [17] | High | High | Memory-intensive for large datasets | Good |

| LIGER [17] | High | High | Moderate | Moderate |

| Scanorama [17] [18] | High | Moderate-High | Moderate | Good |

| BBKNN [14] [17] | Moderate | Moderate | Fast | Limited |

| scVI [17] [18] | Moderate-High | Moderate | Requires GPU for efficiency | Moderate |

| scDML [16] | High | High | Moderate | Excellent |

| scCRAFT [18] | Highest in benchmarks | Highest in benchmarks | Moderate (requires GPU) | Excellent |

A comprehensive benchmark study evaluating 14 methods across ten datasets with different characteristics recommended Harmony, LIGER, and Seurat 3 as the top performers, with Harmony having the advantage of significantly shorter runtime [17]. More recent evaluations incorporating newer methods like scDML and scCRAFT have shown these approaches consistently outperform earlier methods across multiple datasets, with scCRAFT demonstrating particularly robust performance in preserving rare cell types and handling complex integration tasks [16] [18].

Specialized Methods for Complex Scenarios

Recent methodological advances have addressed specific challenges in batch effect correction:

Preserving biological order: Methods like order-preserving batch correction utilize monotonic deep learning networks to maintain the original ranking of gene expression levels during correction, which helps preserve differential expression patterns and inter-gene correlations that might be lost by other methods [19].

Handling unbalanced batches and rare cell types: scDML and scCRAFT incorporate specialized strategies for preserving rare cell populations that might be lost in standard correction approaches. scDML uses deep metric learning guided by initial clusters, making it particularly effective at preserving subtle cell types [16]. scCRAFT employs a dual-resolution triplet loss that maintains within-batch topological relationships, providing robust performance even with highly unbalanced cell-type distributions across batches [18].
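Both methods build on a triplet loss, which can be illustrated in its generic form: an anchor cell is pulled toward a "positive" cell (for example, a similar cell from another batch) and pushed away from a "negative" cell by at least a margin. The sketch below is a plain NumPy version of that generic loss with hypothetical names and values. It does not reproduce the specific sampling schemes or the dual-resolution variant used by scDML or scCRAFT.

```python
import numpy as np

def triplet_loss(anchor, positive, negative, margin=1.0):
    """Mean hinge-style triplet loss on Euclidean distances.

    Each argument is an embedding vector or a batch of vectors with the
    same shape. The loss is zero once the negative is farther from the
    anchor than the positive by at least `margin`.
    """
    anchor, positive, negative = (
        np.asarray(x, dtype=float) for x in (anchor, positive, negative)
    )
    d_pos = np.linalg.norm(anchor - positive, axis=-1)
    d_neg = np.linalg.norm(anchor - negative, axis=-1)
    return float(np.mean(np.maximum(0.0, d_pos - d_neg + margin)))

# Well-separated triplet: the positive is close and the negative is far.
satisfied = triplet_loss([0.0, 0.0], [0.0, 1.0], [0.0, 5.0])
# Violated triplet: the roles of the close and far cells are swapped.
violated = triplet_loss([0.0, 0.0], [0.0, 5.0], [0.0, 1.0])
```

How positives and negatives are chosen largely determines what is preserved. Choosing negatives from different cell types within the same batch, for instance, is one way to keep rare populations from collapsing into abundant neighbors.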

Experimental Protocols for Cross-Platform Validation

Standardized Processing Pipelines

For cross-platform validation studies, consistent data processing is essential before batch correction. The UniverSC pipeline provides a universal tool that supports any unique molecular identifier (UMI)-based platform, serving as a wrapper for Cell Ranger (10x Genomics) but adaptable to multiple technologies [11]. This approach enables consistent processing across different platforms, establishing a foundation for more reliable batch integration.

A typical workflow involves:

- Raw Data Processing: Using UniverSC or platform-specific tools to generate gene-barcode matrices from FASTQ files

- Quality Control: Filtering cells based on metrics like total counts, percentage of mitochondrial genes, and number of detected genes

- Normalization: Applying methods like log-normalization, SCTransform, or pooling-based normalization (e.g., Scran) to adjust for technical biases [14]

- Feature Selection: Identifying highly variable genes for downstream analysis

- Batch Correction: Applying appropriate integration methods based on dataset characteristics

- Downstream Analysis: Clustering, visualization, and biological interpretation
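The quality control and normalization steps of this workflow can be sketched with NumPy alone. The example below filters cells on total counts, detected genes, and mitochondrial percentage, then applies library-size scaling followed by a log transform. The function name, the threshold values, and the "MT-" prefix used to flag mitochondrial genes are illustrative assumptions. Appropriate cutoffs depend on the platform and the cell type and should be chosen from the data.

```python
import numpy as np

def filter_and_normalize(counts, gene_names, min_counts=500, min_genes=200,
                         max_mito_pct=20.0, target_sum=1e4):
    """Filter low-quality cells, then log-normalize the remaining ones.

    counts: cells x genes matrix of raw UMI counts.
    Returns the normalized matrix and the boolean mask of kept cells.
    """
    counts = np.asarray(counts, dtype=float)
    # Assumption: human mitochondrial genes carry the "MT-" prefix.
    is_mito = np.array([g.upper().startswith("MT-") for g in gene_names])

    total = counts.sum(axis=1)
    n_genes = (counts > 0).sum(axis=1)
    mito_pct = 100.0 * counts[:, is_mito].sum(axis=1) / np.maximum(total, 1.0)

    keep = (total >= min_counts) & (n_genes >= min_genes) & (mito_pct <= max_mito_pct)
    kept = counts[keep]

    # Scale every cell to the same total, then apply log(1 + x).
    normalized = np.log1p(kept / kept.sum(axis=1, keepdims=True) * target_sum)
    return normalized, keep

# Toy example with deliberately tiny thresholds.
toy_genes = ["MT-CO1", "GAPDH", "POU5F1"]
toy_counts = [[10, 0, 0],   # all counts mitochondrial
              [5, 5, 0],    # half of the counts mitochondrial
              [0, 1, 9]]    # passes every filter
toy_norm, toy_keep = filter_and_normalize(
    toy_counts, toy_genes, min_counts=5, min_genes=2, max_mito_pct=20.0
)
```

Applying identical thresholds and the same normalization to every platform before integration keeps preprocessing differences from becoming an additional source of batch effect.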

Quality Control and Validation Metrics

Rigorous quality control is essential for reliable cross-platform validation. Key steps include:

Cell and Gene Filtering: Removing low-quality cells based on metrics like total UMI counts, percentage of mitochondrial reads, and number of detected genes. Visual inspection of capture sites (for plate-based methods) and data-driven filtering approaches help ensure analysis is restricted to high-quality single cells [15].

Assessment of Batch Correction Quality: Multiple metrics quantitatively evaluate correction effectiveness:

- kBET (k-nearest neighbor Batch Effect Test): Measures whether local batch label distributions match the global distribution [14] [17]

- LISI (Local Inverse Simpson's Index): Quantifies batch mixing (Batch LISI) and cell-type separation (Cell Type LISI) [14] [17]

- ASW (Average Silhouette Width): Evaluates cluster compactness and separation [17]

- ARI (Adjusted Rand Index): Measures clustering accuracy against known labels [16] [17]
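As an illustration of how a mixing metric behaves, the sketch below computes a simplified LISI-style score: for each cell, the inverse Simpson's index of the labels among its k nearest neighbors. It is an unweighted approximation with hypothetical names and toy data. The published LISI weights neighbors with a perplexity-based kernel, so absolute values from this sketch are not comparable to those reported in benchmarks.

```python
import numpy as np

def simple_lisi(embedding, labels, k=10):
    """Unweighted inverse Simpson's index over each cell's k nearest neighbors.

    With batch labels, values near 1 mean a neighborhood is dominated by a
    single batch and values near the number of batches mean good mixing.
    """
    x = np.asarray(embedding, dtype=float)
    labels = np.asarray(labels)
    n = x.shape[0]
    k = min(k, n - 1)

    # Brute-force squared distances; each cell is excluded from its own neighbors.
    dists = ((x[:, None, :] - x[None, :, :]) ** 2).sum(axis=2)
    np.fill_diagonal(dists, np.inf)
    neighbors = np.argsort(dists, axis=1)[:, :k]

    scores = np.empty(n)
    for i in range(n):
        _, counts = np.unique(labels[neighbors[i]], return_counts=True)
        props = counts / counts.sum()
        scores[i] = 1.0 / np.sum(props ** 2)
    return scores

# Toy 1-D embeddings: two batches far apart versus interleaved along a line.
batch_labels_sep = ["A"] * 4 + ["B"] * 4
separated = simple_lisi([[0], [1], [2], [3], [100], [101], [102], [103]],
                        batch_labels_sep, k=3)
batch_labels_mix = ["A", "B"] * 4
mixed = simple_lisi([[i] for i in range(8)], batch_labels_mix, k=4)
```

Running the same function with cell-type labels instead of batch labels gives the complementary view: there, values close to 1 are desirable because they indicate that cell types remain separated after correction.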

Visualization of Batch Correction Workflows

Generalized Workflow for scRNA-seq Batch Correction

The following diagram illustrates the standard analytical pipeline for batch correction in scRNA-seq studies, particularly relevant for cross-platform validation of stem cell data:

Advanced Batch Correction with Deep Metric Learning

More sophisticated methods like scDML employ specialized approaches that integrate clustering with batch correction, as shown in this workflow:

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 3: Key Research Reagent Solutions and Computational Tools for scRNA-seq Batch Correction

| Category | Item | Function/Purpose | Considerations for Stem Cell Research |

|---|---|---|---|

| Wet Lab Reagents | ERCC Spike-In Controls [15] | External RNA controls of known concentration to monitor technical variation | Limited utility as they don't experience all processing steps of endogenous RNA |

| | Unique Molecular Identifiers (UMIs) [15] | Molecular barcodes to correct for amplification bias and enable accurate molecule counting | Essential for quantitative analysis of stem cell heterogeneity |

| | Viability Stains | Assessment of cell viability before sequencing | Critical for stem cell samples sensitive to dissociation protocols |

| Computational Tools | UniverSC [11] | Universal pipeline for processing scRNA-seq data from any UMI-based platform | Enables consistent cross-platform analysis for validation studies |

| | Seurat [14] | Comprehensive R toolkit for single-cell analysis with integration methods | Widely adopted with extensive documentation and community support |

| | Scanpy [14] | Python-based single-cell analysis with multiple integration options | Enables scalable analysis of large stem cell datasets |

| | Harmony [17] | Fast, iterative batch integration algorithm | Recommended first choice due to speed and effectiveness |

| Quality Assessment | kBET [14] [17] | Statistical test for batch effect presence in local neighborhoods | Identifies regions where batch effects persist after correction |

| | LISI [14] [17] | Metric evaluating batch mixing and cell-type separation | Provides dual assessment of integration quality |

Batch effect correction remains a critical challenge in scRNA-seq studies, particularly for cross-platform validation of stem cell research findings where distinguishing technical artifacts from biological heterogeneity is paramount. Method selection should be guided by specific dataset characteristics, with Harmony, Seurat, and Scanorama representing robust, well-established options, while newer methods like scDML and scCRAFT show superior performance for preserving rare cell types and handling complex integration scenarios. For stem cell researchers pursuing cross-platform validation, a rigorous approach incorporating standardized processing, multiple correction methods, and comprehensive quality assessment using both quantitative metrics and biological validation is essential for generating robust, reproducible findings that advance our understanding of stem cell biology.

Foundational Principles for Rigorous Stem Cell Research and Clinical Translation

The field of stem cell research holds transformative potential for regenerative medicine, but realizing this potential demands unwavering commitment to foundational principles that ensure scientific rigor, ethical integrity, and patient safety. The International Society for Stem Cell Research (ISSCR) emphasizes that the primary societal mission of basic biomedical research and its clinical translation is to alleviate and prevent human suffering caused by illness and injury [20]. This collective endeavor depends on public support and contributions from scientists, clinicians, patients, research participants, industry members, regulators, and legislators across national boundaries [20]. Ethical principles and guidelines help secure the basis for this collective effort through an internationally coordinated framework that regulates research at all levels, including clinical trials and market access to proven interventions [20]. These foundations provide assurance that stem cell research is conducted with scientific and ethical integrity and that new therapies are evidence-based [21].

Adherence to these principles is particularly crucial in an era of rapid technological advancement. As the field progresses, balancing excitement over growing numbers of clinical trials with the requirement to rigorously evaluate each potential new intervention remains paramount [22]. Clinical applications and trials that occur far in advance of what is warranted by sound preclinical evidence jeopardize both patient safety and the future development of promising technologies [22]. This guide examines the core principles, standards, and methodologies that underpin rigorous stem cell research and its responsible translation to clinical applications, with particular emphasis on their application in cross-platform validation of single-cell RNA sequencing (scRNA-seq) findings.

Foundational Ethical Principles in Stem Cell Research

Core Ethical Frameworks

The ISSCR Guidelines build upon widely shared ethical principles in science, research with human subjects, and medicine, including the Nuremberg Code, Declaration of Helsinki, and other foundational documents [20]. These guidelines promote an ethical, practical, appropriate, and sustainable enterprise for stem cell research and the development of cell therapies that will improve human health [20]. Several core principles form the ethical bedrock:

Integrity of the Research Enterprise: The primary goals of stem cell research are to advance scientific understanding, generate evidence for addressing unmet medical and public health needs, and develop safe and efficacious therapies for patients [20]. This research must ensure that information obtained is trustworthy, reliable, accessible, and responsive to scientific uncertainties and priority health needs through independent peer review, oversight, replication, institutional oversight, and accountability at each research stage [20].

Primacy of Patient/Participant Welfare: Physicians and physician-researchers owe their primary duty of care to patients and/or research subjects, never excessively placing vulnerable patients or research subjects at risk [20]. Clinical testing should never allow promise for future patients to override the welfare of current research subjects [20].

Respect for Patients and Research Subjects: Researchers must empower potential human research participants to exercise valid informed consent where they have adequate decision-making capacity, offering accurate information about risks and the current state of evidence for novel stem cell-based interventions [20].

Transparency: Researchers should promote timely exchange of accurate scientific information, communicate with various public groups, and convey the scientific state of the art, including uncertainty about safety, reliability, or efficacy of potential applications [20].

Social and Distributive Justice: Fairness demands that benefits of clinical translation efforts should be distributed justly and globally, with particular emphasis on addressing unmet medical and public health needs [20]. Risks and burdens associated with clinical translation should not be borne by populations unlikely to benefit from the knowledge produced [20].

Navigating Ethical Challenges in Embryonic and Pluripotent Stem Cell Research

Stem cell and embryo research shows great promise for advancing understanding of human development and disease, addressing issues pertinent to the earliest stages of human development such as causes of miscarriage; epigenetic, genetic, and chromosomal disorders; and human reproduction [21]. The derivation of some types of stem cell lines necessitates the use of human embryos, and scientific research on human embryos and embryonic stem cell lines is viewed as ethically permissible in many countries when performed under rigorous scientific and ethical oversight [21].

Research activities involving human embryos and gametes raise significant ethical sensitivities [20]. Creating embryos for research, which is permitted in relatively few jurisdictions, is required to develop standard and novel methods involving IVF and to ensure that they are safe, efficient, and effective [21]. The 2025 update to the ISSCR Guidelines refines recommendations for stem cell-based embryo models (SCBEMs), retiring the classification of models as "integrated" or "non-integrated" and replacing it with the inclusive term "SCBEMs" [21]. These guidelines reiterate that human SCBEMs are in vitro models and must not be transplanted to the uterus of a living animal or human host, and include a new recommendation prohibiting ex vivo culture of SCBEMs to the point of potential viability [21].

Standards for Clinical Translation of Stem Cell-Based Interventions

Pathways to Clinical Application

Responsible translation of basic stem cell research into clinical applications requires addressing scientific, clinical, regulatory, ethical, and social issues [22]. The rapid advances in stem cell research and genome editing technologies have created high expectations for regenerative medicine and cell-based therapies, but new interventions should only advance to clinical trials when there is a compelling scientific rationale, plausible mechanism of action, and acceptable chance of success [22].

The safety and effectiveness of new interventions must be demonstrated in well-designed and expertly-conducted clinical trials with approval by regulators before being offered to patients or incorporated into standard clinical care [22]. The following table summarizes key regulatory categories for stem cell-based interventions:

Table: Regulatory Classification of Stem Cell-Based Products

| Product Category | Definition | Key Characteristics | Regulatory Pathway |

|---|---|---|---|

| Minimally Manipulated Cells/Tissues [22] | Cells/tissues undergoing minimal processing that does not alter original relevant characteristics | Processing does not change original function; homologous use only [22] | Generally subject to fewer regulatory requirements; oversight varies by jurisdiction [22] |

| Substantially Manipulated Cells/Tissues [22] | Cells subjected to processing that alters original structural/biological characteristics | Enzymatic digestion, culture expansion, genetic manipulation; may differ from original source tissue [22] | Regulated as drugs, biologics, advanced therapy medicinal products; requires rigorous preclinical/clinical testing [22] |

| Non-Homologous Use [22] | Cells/tissues repurposed to perform different basic function in recipient | Different function than cells/tissue originally performed; example: adipose cells for eye treatment [22] | Requires rigorous safety/efficacy evaluation as advanced therapy product; well-designed preclinical/clinical studies [22] |

Substantially manipulated stem cells, cells, and tissues are subjected to processing steps that alter their original structural or biological characteristics, such as isolation and purification processes, tissue culture and expansion, or genetic manipulation [22]. Because the composition of such products may differ from that of the original source tissue, their safety and efficacy must be determined for each indication using rigorous research methods [22].

Non-homologous use occurs when stem cells, cells, or tissues are repurposed to perform a different basic function in the recipient than they performed prior to removal, processing, and transplantation [22]. This poses serious risks, as demonstrated by reports of vision loss when adipose-derived stromal cells were used to treat macular degeneration [22].

Manufacturing and Quality Control Standards

Given the unique proliferative and regenerative nature of stem cells and their progeny, stem cell-based therapies present regulatory authorities with unique challenges [22]. Cell processing and manufacture of any product must be conducted with scrupulous, expert, and independent review and oversight to ensure integrity, function, and safety of cells destined for patient use [22].

Sourcing Material: Donors of cells for allogeneic use should give written and legally valid informed consent covering potential research/therapeutic uses, disclosure of incidental findings, potential for commercial application, and stem cell-specific aspects [22]. Donors and/or resulting cell banks should be screened/tested for infectious diseases and other risk factors per regulatory guidelines [22].

Quality Control in Manufacture: All reagents and processes should be subject to quality control systems and standard operating procedures to ensure reagent quality and protocol consistency [22]. Manufacturing should be performed under Good Manufacturing Practice (GMP) conditions when possible or mandated, though in some regions GMPs may be introduced in a phase-appropriate manner in early-stage clinical trials [22].

Processing and Manufacture Oversight: Oversight and review of cell processing and manufacturing protocols should be rigorous, taking into account how the cells are manipulated, their source and intended use, the nature of the clinical trial, and the research subjects who will be exposed to them [22]. Maintaining cells in culture imposes selective pressures different from those in vivo, potentially leading to genetic and epigenetic changes and to altered differentiation behavior and function [22].

Experimental Design for Cross-Platform Validation

Integrated scRNA-seq Analysis Workflow

Cross-platform validation of scRNA-seq findings is essential for ensuring reliability and reproducibility in stem cell research. The following diagram illustrates a robust experimental workflow for integrating single-cell and bulk RNA-seq data to validate stem cell findings:

Figure: Integrated scRNA-seq Analysis Workflow

This workflow demonstrates the comprehensive approach required for rigorous validation of stem cell research findings, particularly in investigating stemness-related heterogeneity. The process begins with a clear research question, proceeds through systematic data collection and processing, employs machine learning for stemness quantification, and culminates in biological interpretation and therapeutic target identification [23].

Key Methodological Approaches

Malignant Cell Identification: CopyKAT (Copy Number Karyotyping of Tumors) applies a Bayesian segmentation algorithm to detect large-scale chromosomal gains and losses at approximately 5 megabase resolution, using unsupervised clustering based on genome-wide CNV patterns to classify cells as diploid or aneuploid [23]. Aneuploidy, a hallmark of over 90% of human cancers, serves as the key distinguishing feature between malignant and non-malignant cells [23].

Stemness Index Calculation: The stemness index (mRNAsi) is derived using a one-class logistic regression (OCLR) model trained on human stem cell data from the Progenitor Cell Biology Consortium, quantifying similarity between tumor cells and stem cells as an indicator of cellular plasticity and potential tumor aggressiveness [23]. The model uses elastic net regularization (α = 0.5) to balance L1 and L2 penalties, with the regularization parameter (λ) optimized via 5-fold cross-validation [23].
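Once a weight vector has been trained, scoring new cells is comparatively simple. The sketch below assumes the scoring scheme commonly described for mRNAsi, which is not spelled out above: each cell's expression profile is compared with the model's gene weights by Spearman correlation, and the correlations are then rescaled to the range 0 to 1 across cells. The weight vector and expression values are hypothetical placeholders, and the training of the OCLR model itself is not shown.

```python
import numpy as np

def average_ranks(values):
    """Ranks starting at 1, with tied values sharing their average rank."""
    values = np.asarray(values, dtype=float)
    order = np.argsort(values, kind="mergesort")
    sorted_vals = values[order]
    ranks = np.empty(len(values))
    i = 0
    while i < len(values):
        j = i
        while j + 1 < len(values) and sorted_vals[j + 1] == sorted_vals[i]:
            j += 1
        ranks[order[i:j + 1]] = (i + j) / 2.0 + 1.0
        i = j + 1
    return ranks

def spearman(x, y):
    """Spearman correlation computed as the Pearson correlation of ranks."""
    return float(np.corrcoef(average_ranks(x), average_ranks(y))[0, 1])

def stemness_index(weights, expression):
    """Score each cell (row of `expression`) against the gene weights,
    then min-max scale the scores across cells to the range [0, 1]."""
    expression = np.asarray(expression, dtype=float)
    raw = np.array([spearman(weights, cell) for cell in expression])
    span = raw.max() - raw.min()
    if span == 0:
        return np.zeros_like(raw)
    return (raw - raw.min()) / span

# Toy example: three genes with hypothetical weights, three cells.
toy_weights = [0.1, 0.5, 0.9]
toy_cells = [[1.0, 2.0, 3.0],   # same ordering as the weights
             [3.0, 2.0, 1.0],   # reversed ordering
             [1.0, 3.0, 2.0]]   # partially concordant
toy_scores = stemness_index(toy_weights, toy_cells)
```

Because the final scaling is relative to the cells being scored, indices computed separately on different platforms are not directly comparable. Scoring all cells together, or scaling against a shared reference, avoids that pitfall in cross-platform comparisons.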

Differential Analysis: The CellChat algorithm infers cell-cell communication from communication probability scores derived from known ligand-receptor interactions [23]. The computeCommunProb function calculates the interaction probability for each pair of cell types, and interactions that pass the significance threshold (p < 0.05) are retained [23].

Essential Research Reagents and Tools

Core Reagent Solutions for Stem Cell Research

Rigorous stem cell research requires carefully selected reagents and tools that ensure reproducibility and reliability. The following table details essential research reagent solutions for foundational stem cell research, particularly focused on scRNA-seq applications:

Table: Essential Research Reagents for scRNA-seq Stem Cell Research

| Reagent/Tool Category | Specific Examples | Function and Application | Key Considerations |

|---|---|---|---|

| Cell Culture & Maintenance [22] [24] | Defined culture media, extracellular matrix substrates, growth factors | Maintain stem cell potency and direct differentiation; ensure reproducibility across experiments [22] | Quality control for consistency; avoid lot-to-lot variability; GMP-grade for clinical applications [22] |

| Cell Characterization [25] | Flow cytometry antibodies (CD73, CD90, CD105), differentiation induction kits | Verify stem cell identity per ISCT criteria; assess multipotent differentiation capability [25] | Standardized antibody panels; validate differentiation potential through trilineage assays [25] |

| Single-Cell RNA Sequencing [23] | Cell separation enzymes, viability dyes, barcoded beads, library prep kits | Enable single-cell transcriptome analysis; identify cell subpopulations and states [23] | Optimize cell dissociation to preserve viability/RNA quality; control for technical batch effects [23] |

| Bioinformatics Analysis [23] | Seurat, CellChat, CopyKAT, Harmony integration | Process scRNA-seq data; identify cell types; infer copy number variations; analyze cell communications [23] | Implement rigorous quality control filters; use appropriate normalization; correct for batch effects [23] |

| Genetic Manipulation [25] | CRISPR-Cas9 systems, viral vectors, transfection reagents | Engineer stem cells for mechanistic studies; enhance therapeutic properties [25] | Monitor off-target effects; ensure high efficiency without compromising cell viability/function [25] |

Quality Standards and Reporting Practices

The ISSCR Standards for Human Stem Cell Use in Research identify quality standards and outline basic core principles for laboratory use of both tissue and pluripotent human stem cells and in vitro model systems that rely on them [24]. These standards establish minimum characterization and reporting criteria for scientists, students, and technicians in basic research laboratories working with human stem cells [24]. Emphasis is placed on creating recommendations that, when taken together, ensure research reproducibility and reliability [24].

Manufacturing of cells outside the human body introduces additional risk of contamination with pathogens, and prolonged passage in cell culture carries potential for accumulating mutations and genomic and epigenetic instabilities that could lead to altered cell function or malignancy [22]. While many countries have established regulations governing culture, genetic alteration, and cell transfer into patients, optimized standard operating procedures for cell processing, characterization protocols, and release criteria remain to be refined for emerging technologies [22].

Signaling Pathways and Therapeutic Mechanisms

Key Mechanistic Pathways in Mesenchymal Stem Cell Therapy

Understanding the fundamental mechanisms through which stem cells exert their effects is crucial for rigorous research and successful clinical translation. Mesenchymal stem cells (MSCs) have emerged as powerful tools in regenerative medicine due to their ability to differentiate into mesenchymal lineages, low immunogenicity, and strong immunomodulatory properties [25]. The following diagram illustrates the primary therapeutic mechanisms of MSCs:

Figure: MSC Therapeutic Mechanisms

Unlike traditional cell therapies relying on engraftment, MSCs primarily function through paracrine signaling—secreting bioactive molecules like vascular endothelial growth factor (VEGF), transforming growth factor-beta (TGF-β), and exosomes that contribute to tissue repair, promote angiogenesis, and modulate immune responses in damaged or inflamed tissues [25]. Recent studies have identified mitochondrial transfer as a novel therapeutic mechanism where MSCs donate mitochondria to injured cells through tunneling nanotubes, restoring bioenergetic function in conditions characterized by mitochondrial dysfunction such as acute respiratory distress syndrome (ARDS) and myocardial ischemia [25].

Immunomodulatory Pathways

MSCs interact with both innate and adaptive immune systems to help restore immune balance. They inhibit T-cell proliferation through secretion of immunosuppressive agents such as prostaglandin E2 (PGE2), indoleamine 2,3-dioxygenase (IDO), and programmed death-ligand 1 (PD-L1), thereby tempering overactive immune responses [25]. Furthermore, MSCs guide macrophage polarization by converting pro-inflammatory M1 macrophages into anti-inflammatory M2 phenotypes through signaling molecules like interleukin-10 (IL-10) and transforming growth factor-beta (TGF-β) [25]. This shift plays a critical role in autoimmune conditions such as multiple sclerosis, where MSCs also promote expansion of regulatory T cells (Tregs) to enhance immune tolerance [25].

In neurological disorders, MSCs offer unique therapeutic advantages due to their capacity to cross the blood-brain barrier and release neuroprotective factors [25]. MSC-derived exosomes have been shown to slow motor neuron degeneration in animal models of amyotrophic lateral sclerosis (ALS) [25]. In cardiovascular medicine, MSC-secreted factors contribute to attenuation of adverse ventricular remodeling in heart failure, helping maintain cardiac function [25].

Foundational principles for rigorous stem cell research and clinical translation provide the essential framework through which the field can realize its transformative potential while maintaining scientific integrity and public trust. Adherence to ethical guidelines, manufacturing standards, and robust experimental design—particularly for cross-platform validation of scRNA-seq findings—ensures that stem cell research progresses responsibly from bench to bedside.

The ISSCR emphasizes that the collective effort of stem cell research depends on public support and contributions of many individuals working across institutions, professions, and national boundaries [20]. When this collective effort works well, the social mission of responsible basic research and clinical translation is achieved efficiently alongside the legitimate private interests of its various contributors [20]. By maintaining these foundational principles, the stem cell research community can continue to advance scientific understanding while developing safe and efficacious therapies that address unmet medical needs and improve human health.

Advanced Computational Tools and Integrative Analytical Frameworks

Leveraging Single-Cell Foundation Models for Enhanced Data Representation

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by providing a granular view of the transcriptome at single-cell resolution, particularly in stem cell research, where understanding cellular heterogeneity is crucial for unraveling differentiation pathways and regenerative mechanisms [26] [27]. However, stem cell scRNA-seq data present significant analytical challenges, including high sparsity, technical noise, batch effects, and complex heterogeneity patterns [26] [28]. Single-cell foundation models (scFMs) have emerged as computational tools designed to address these challenges by learning general biological knowledge from massive datasets during pretraining, enabling zero-shot learning and efficient adaptation to various downstream tasks [26] [27] [29].

The integration of scFMs into stem cell research offers new opportunities for cross-platform validation of findings. These models, trained on tens of millions of cells spanning diverse tissues, conditions, and donors, capture general principles of gene regulation and cellular states that can be applied to validate stem cell characteristics across different experimental platforms and laboratory environments [27] [29]. This section compares current scFMs, summarizes their performance across critical analytical tasks, and offers practical guidance for researchers seeking to leverage these tools for enhanced data representation in stem cell studies.

Understanding Single-Cell Foundation Models: Architectures and Approaches

Core Architectures and Training Paradigms

Single-cell foundation models adapt transformer architectures, originally developed for natural language processing, to analyze gene expression data by treating cells as "sentences" and genes as "words" [27]. These models employ self-supervised learning on vast single-cell corpora, typically using masked gene modeling objectives where the model learns to predict masked or missing gene expressions based on contextual information from other genes in the cell [26] [27] [29]. The fundamental premise is that exposure to millions of cells encompassing diverse biological conditions enables the model to learn transferable representations of gene interactions and cellular states [27].
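
The masked gene modeling objective can be illustrated with a minimal sketch. It hides a random subset of a cell's expression values, asks a predictor to reconstruct them from the visible genes, and scores only the hidden positions. The function names, the 25% masking fraction, and the mean-fill predictor are all assumptions made for illustration; the predictor is a trivial stand-in for a transformer and does not reproduce any published model.

```python
import random
from typing import List, Optional, Tuple

def mask_genes(values: List[float], mask_frac: float,
               rng: random.Random) -> Tuple[List[Optional[float]], List[int]]:
    """Hide a random subset of gene values (assumes at least two genes)."""
    n_mask = max(1, round(mask_frac * len(values)))
    n_mask = min(n_mask, len(values) - 1)  # keep at least one gene visible
    hidden = set(rng.sample(range(len(values)), n_mask))
    masked = [None if i in hidden else v for i, v in enumerate(values)]
    return masked, sorted(hidden)

def mean_fill_predictor(masked: List[Optional[float]]) -> List[float]:
    """Toy stand-in for a model: fill hidden genes with the mean of visible ones."""
    visible = [v for v in masked if v is not None]
    fill = sum(visible) / len(visible)
    return [fill if v is None else v for v in masked]

def masked_mse(pred: List[float], truth: List[float], hidden: List[int]) -> float:
    """Reconstruction loss computed over the hidden positions only."""
    return sum((pred[i] - truth[i]) ** 2 for i in hidden) / len(hidden)

# Illustrative expression vector for one cell (values are made up).
cell = [8.0, 4.0, 2.0, 0.0, 1.0, 0.0, 3.0, 6.0]
masked, hidden = mask_genes(cell, mask_frac=0.25, rng=random.Random(0))
loss = masked_mse(mean_fill_predictor(masked), cell, hidden)
```

During pretraining, a real model would minimize this kind of loss over millions of cells, so that the context provided by visible genes becomes informative about the hidden ones.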

These models primarily differ in their approaches to tokenization—how they convert continuous gene expression values into discrete inputs for the transformer architecture. The three predominant strategies include: (1) ranking-based approaches that order genes by expression levels within each cell [26] [30]; (2) value binning that discretizes expression values into categorical buckets [26] [27]; and (3) value projection that preserves continuous expression values through linear projections [26] [29]. Each approach presents distinct trade-offs between computational efficiency and information preservation.
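
The three tokenization strategies can be contrasted on a single toy cell. The sketch below is a simplified illustration under stated assumptions: the function names, the equal-width binning scheme, and the projection weights are hypothetical, and published models add steps (such as corpus-level normalization before ranking, or learned projection weights) that are omitted here.

```python
from math import ceil
from typing import Dict, List

def rank_tokens(expr: Dict[str, float]) -> List[str]:
    """Rank-based: list expressed genes from highest to lowest expression."""
    expressed = [(gene, value) for gene, value in expr.items() if value > 0]
    # Sort by descending value; break ties alphabetically for determinism.
    expressed.sort(key=lambda gv: (-gv[1], gv[0]))
    return [gene for gene, _ in expressed]

def bin_tokens(expr: Dict[str, float], n_bins: int = 4) -> Dict[str, int]:
    """Value binning: zero maps to bin 0, nonzero values to equal-width bins 1..n_bins."""
    max_value = max(expr.values())
    tokens = {}
    for gene, value in expr.items():
        if value <= 0 or max_value <= 0:
            tokens[gene] = 0
        else:
            tokens[gene] = min(n_bins, ceil(value / max_value * n_bins))
    return tokens

def project_value(value: float, weights: List[float], bias: List[float]) -> List[float]:
    """Value projection: map a continuous value to a vector via a linear layer."""
    return [w * value + b for w, b in zip(weights, bias)]

# Toy cell with illustrative (made-up) expression values.
cell = {"POU5F1": 8.0, "SOX2": 4.0, "NANOG": 2.0, "GATA6": 0.0}

ranked = rank_tokens(cell)
binned = bin_tokens(cell, n_bins=4)
projected = project_value(2.0, weights=[0.5, -1.0], bias=[0.0, 1.0])
```

Ranking discards magnitudes but is robust to scaling differences between platforms, binning keeps coarse magnitude at the cost of resolution, and projection keeps the full value but requires the model to learn from continuous inputs.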

Model Ecosystem and Key Characteristics

The scFM landscape has expanded rapidly, with multiple models demonstrating strengths across different applications. Key models include Geneformer (40M parameters, trained on 30M cells) [26], scGPT (50M parameters, trained on 33M cells) [26], UCE (650M parameters, trained on 36M cells) [26], scFoundation (100M parameters, trained on 50M cells) [26] [29], and more recent entrants like CellFM (800M parameters, trained on 100M human cells) [29] and the Teddy model family (up to 400M parameters, trained on 116M cells) [30]. These models vary in their architectural choices, pretraining datasets, and specialization, making them differentially suited for specific stem cell research applications.

Figure 1: Generalized workflow for single-cell foundation models, showing how raw scRNA-seq data undergoes tokenization, self-supervised pretraining, and generates embeddings for various downstream tasks relevant to stem cell research.

Comparative Performance Analysis of Leading scFMs

Benchmarking Framework and Evaluation Metrics

Comprehensive benchmarking studies have evaluated scFMs against traditional methods using diverse metrics spanning unsupervised, supervised, and knowledge-based approaches [26] [28]. Performance is typically assessed across multiple tasks including batch integration, cell type annotation, cancer cell identification, and drug sensitivity prediction [26]. Novel biology-informed metrics such as scGraph-OntoRWR (which measures consistency of captured cell type relationships with biological ontologies) and LCAD (Lowest Common Ancestor Distance, which measures ontological proximity between misclassified cell types) provide more meaningful biological validation than traditional computational metrics alone [26] [28].
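
To make the idea behind LCAD concrete, the sketch below computes a lowest-common-ancestor distance on a small hand-written hierarchy. It is one plausible reading of the metric, counting the edges from the true and predicted labels up to their lowest common ancestor; the exact definition used in the cited benchmarks may differ. The toy hierarchy and the `lcad` function are assumptions for illustration, and the real Cell Ontology is a directed acyclic graph rather than the simple tree assumed here.

```python
from typing import Dict, List

# Hypothetical child -> parent hierarchy (not the actual Cell Ontology).
PARENT: Dict[str, str] = {
    "hematopoietic cell": "cell",
    "neural cell": "cell",
    "HSC": "hematopoietic cell",
    "progenitor": "hematopoietic cell",
    "CMP": "progenitor",
    "CLP": "progenitor",
    "neuron": "neural cell",
}

def path_to_root(node: str, parent: Dict[str, str]) -> List[str]:
    """Return the node followed by each of its ancestors up to the root."""
    path = [node]
    while path[-1] in parent:
        path.append(parent[path[-1]])
    return path

def lcad(true_label: str, pred_label: str, parent: Dict[str, str]) -> int:
    """Edges from both labels up to their lowest common ancestor, summed."""
    steps_from_true = {n: d for d, n in enumerate(path_to_root(true_label, parent))}
    for steps_from_pred, node in enumerate(path_to_root(pred_label, parent)):
        if node in steps_from_true:
            return steps_from_true[node] + steps_from_pred
    raise ValueError("labels share no common ancestor")

near_miss = lcad("CMP", "CLP", PARENT)     # sibling progenitors
far_miss = lcad("CMP", "neuron", PARENT)   # different lineages
```

Under this reading, confusing two sibling progenitors is penalized less than confusing a progenitor with a neuron, which is the behavior that makes an ontology-aware metric more informative than plain accuracy.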

Benchmarking results consistently indicate that no single scFM universally outperforms all others across diverse tasks [26] [28]. Instead, model performance is highly dependent on task characteristics, dataset size, and biological context. This underscores the importance of task-specific model selection rather than seeking a universally superior solution—a critical consideration for stem cell researchers with specific analytical needs.

Performance Across Key Analytical Tasks

Table 1: Comparative performance of scFMs across critical tasks for stem cell research

| Model | Cell Type Annotation | Batch Integration | Perturbation Prediction | Stem Cell Specific Tasks |
|---|---|---|---|---|
| Geneformer | Intermediate [26] | Strong [26] | Strong [26] | Not specifically evaluated |
| scGPT | Strong [26] | Intermediate [26] | Variable [31] | Not specifically evaluated |
| scFoundation | Strong [26] | Intermediate [26] | Underperforms baselines [31] | Not specifically evaluated |
| UCE | Intermediate [26] | Strong [26] | Limited data | Not specifically evaluated |
| CellFM | State-of-the-art [29] | Not reported | Improved performance [29] | Not specifically evaluated |
| Traditional Methods | Variable [26] | Strong (e.g., Harmony) [26] | Often superior [31] | Established workflows |

For cell type annotation, scFMs generally provide robust performance, with models like scGPT and scFoundation demonstrating particular strength [26]. The biological relevance of these annotations is enhanced by the ability of scFMs to capture ontological relationships between cell types, as measured by the scGraph-OntoRWR metric [26] [28]. This capability is particularly valuable in stem cell research for identifying transitional states and differentiation trajectories.

In batch integration tasks, which are crucial for cross-platform validation, scFMs demonstrate competitive performance with specialized methods like Harmony and Seurat [26]. Geneformer and UCE show particular promise for integrating datasets across different technological platforms—a common challenge when comparing stem cell datasets generated using different scRNA-seq protocols [26].

For perturbation prediction, benchmarking results reveal important limitations in current scFMs. Surprisingly, simple baseline models—including a mean expression model and random forest regressors using Gene Ontology features—consistently outperform sophisticated foundation models like scGPT and scFoundation across multiple Perturb-seq datasets [31]. This suggests that current scFMs may not adequately capture causal perturbation relationships, an important consideration for stem cell researchers studying differentiation or reprogramming interventions.
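
A mean expression baseline of the kind mentioned above is simple enough to write in a few lines. The sketch below predicts the profile of an unseen perturbation as the gene-wise average of the training perturbation profiles. The exact baseline definition in the cited benchmark may differ, and the perturbation names and values are made up for illustration.

```python
from typing import Dict, List

def mean_baseline(train_profiles: Dict[str, List[float]]) -> List[float]:
    """Predict any unseen perturbation as the gene-wise mean of training profiles."""
    profiles = list(train_profiles.values())
    n_genes = len(profiles[0])
    return [sum(p[g] for p in profiles) / len(profiles) for g in range(n_genes)]

def mean_squared_error(pred: List[float], truth: List[float]) -> float:
    """Average squared difference across genes."""
    return sum((p - t) ** 2 for p, t in zip(pred, truth)) / len(truth)

# Hypothetical pseudo-bulk profiles (three genes) for two training perturbations.
train = {"KO_A": [1.0, 2.0, 3.0], "KO_B": [3.0, 2.0, 1.0]}
held_out_truth = [2.0, 3.0, 2.0]  # profile of a perturbation not seen in training

prediction = mean_baseline(train)
error = mean_squared_error(prediction, held_out_truth)
```

Any foundation model evaluated on held-out perturbations should at least beat this error; reporting both numbers side by side makes it clear whether the model has learned perturbation-specific effects.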

Experimental Protocols for scFM Evaluation

Standardized Benchmarking Methodology

Comprehensive scFM evaluation follows standardized protocols to ensure fair comparison across models [26]. The benchmarking pipeline typically involves: (1) extracting zero-shot gene and cell embeddings from pretrained models without additional fine-tuning; (2) applying these embeddings to specific downstream tasks using consistent evaluation datasets; and (3) assessing performance using multiple metrics tailored to each task [26] [28].

For cell-level tasks like batch integration and annotation, models are evaluated on diverse datasets containing multiple sources of variation including inter-patient, inter-platform, and inter-tissue differences [26] [28]. Performance is assessed using both traditional metrics (e.g., silhouette score, ARI) and novel biology-informed metrics (e.g., scGraph-OntoRWR, LCAD) that better capture biological relevance [26]. For perturbation prediction, models are evaluated using Perturb-seq datasets with held-out perturbations to assess generalization to unseen conditions [31].
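
As an illustration of the traditional metrics mentioned above, the adjusted Rand index (ARI) can be computed from a contingency table of reference labels against cluster assignments. The sketch below is a dependency-free implementation of the standard formula, written for clarity rather than speed; in practice a library implementation would normally be used. It assumes at least two cells.

```python
from collections import Counter
from math import comb
from typing import Hashable, Sequence

def adjusted_rand_index(labels_true: Sequence[Hashable],
                        labels_pred: Sequence[Hashable]) -> float:
    """Adjusted Rand index between two partitions of the same cells."""
    n = len(labels_true)
    # Pairs of cells placed together in both partitions.
    together_both = sum(comb(c, 2) for c in Counter(zip(labels_true, labels_pred)).values())
    # Pairs placed together within each partition separately.
    together_true = sum(comb(c, 2) for c in Counter(labels_true).values())
    together_pred = sum(comb(c, 2) for c in Counter(labels_pred).values())
    expected = together_true * together_pred / comb(n, 2)
    max_index = 0.5 * (together_true + together_pred)
    if max_index == expected:  # degenerate case, e.g. a single cluster in both
        return 1.0
    return (together_both - expected) / (max_index - expected)

# Cluster IDs are arbitrary, so a relabelled but identical partition scores 1.
perfect = adjusted_rand_index(["HSC", "HSC", "MPP", "MPP"], [1, 1, 0, 0])
# Clusters that split every reference cell type score below zero.
poor = adjusted_rand_index(["HSC", "HSC", "MPP", "MPP"], [0, 1, 0, 1])
```

Because ARI is invariant to how clusters are named, it can compare clusterings of embeddings from different models or platforms against the same reference annotation.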

Domain-Specific Validation for Stem Cell Research

While general scFM benchmarks provide valuable performance insights, stem cell research requires additional domain-specific validation. Recommended protocols include: (1) evaluating the identification of rare stem cell populations; (2) assessing the ability to reconstruct differentiation trajectories; (3) testing robustness to technical variations common in stem cell cultures; and (4) validating cross-platform consistency using paired datasets from different sequencing technologies [32] [12] [33].
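
One simple way to approach the cross-platform consistency check in item (4) is to compare marker gene rankings between paired datasets. The sketch below computes a Spearman rank correlation between pseudo-bulk marker expression from two platforms, which tolerates the different count scales platforms typically produce. The platform values are made up, and the choice of Spearman correlation on pseudo-bulk profiles is an assumption for illustration rather than a protocol taken from the cited studies. It assumes each profile contains at least two distinct values.

```python
from math import sqrt
from typing import List

def average_ranks(values: List[float]) -> List[float]:
    """Rank values from 1..n, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks

def pearson(x: List[float], y: List[float]) -> float:
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def spearman(x: List[float], y: List[float]) -> float:
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))

# Hypothetical pseudo-bulk expression of POU5F1, SOX2, GATA6, NANOG
# in the same cell population measured on two platforms with different scales.
platform_a = [5.1, 3.2, 0.4, 2.0]
platform_b = [410.0, 300.0, 12.0, 150.0]
consistency = spearman(platform_a, platform_b)
```

A correlation near 1 indicates that both platforms agree on the relative ordering of markers even though absolute counts differ; values well below 1 flag populations whose marker profile may be platform-dependent.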

For example, in hematopoietic stem cell research, scFMs should be validated for their ability to distinguish closely related progenitor states and correctly order cells along differentiation pathways [12]. Similarly, in pluripotent stem cell applications, models should be tested for accurate identification of pluripotency states and early lineage commitment markers [33]. These domain-specific validations are essential for determining which scFM is most appropriate for specific stem cell research applications.

Practical Implementation Guide

Model Selection Framework

Selecting the optimal scFM requires careful consideration of multiple factors. The following decision framework supports informed model selection:

- For large-scale atlas integration (>100,000 cells): scFoundation or CellFM provide strong performance due to their extensive pretraining and parameter counts [26] [29].

- For cell type annotation with limited computational resources: scGPT offers a favorable balance of performance and efficiency [26].

- For trajectory inference and differentiation analysis: Geneformer's rank-based approach may better capture expression dynamics [26] [30].

- For cross-species analysis: UCE incorporates evolutionary information through protein language models [26].

- For perturbation response prediction: Traditional machine learning methods with biological feature engineering may outperform current scFMs [31].
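
The decision framework above can be encoded as a small lookup so that a pipeline records which recommendation was applied. This is only a convenience sketch: the task keys and the function name are hypothetical, and the mapping simply restates the bullets above rather than adding new guidance.

```python
from typing import Dict, List

# Task keys are hypothetical labels; values restate the framework above.
RECOMMENDATIONS: Dict[str, List[str]] = {
    "atlas_integration": ["scFoundation", "CellFM"],        # >100,000 cells
    "annotation_low_compute": ["scGPT"],
    "trajectory_inference": ["Geneformer"],
    "cross_species": ["UCE"],
    "perturbation_prediction": ["traditional ML with biological features"],
}

def recommend_models(task: str) -> List[str]:
    """Return the candidate models for a task, or raise on an unknown task."""
    if task not in RECOMMENDATIONS:
        known = ", ".join(sorted(RECOMMENDATIONS))
        raise ValueError(f"unknown task {task!r}; expected one of: {known}")
    return RECOMMENDATIONS[task]

candidates = recommend_models("trajectory_inference")
```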

Additional practical considerations include computational resource requirements, documentation quality, and community support. Models like scGPT and Geneformer generally offer more accessible implementations for researchers without specialized computational expertise [26].

The Stem Cell Researcher's Toolkit

Table 2: Essential research reagents and computational tools for scFM applications in stem cell research

| Resource Category | Specific Tools/Platforms | Application in Stem Cell Research |
|---|---|---|
| Data Repositories | CELLxGENE [27], GEO [27] [29], Single-Cell Expression Atlas [27] | Sources of reference data for model training and validation |
| Processing Frameworks | Seurat [26], Scanpy [26], scVI [26] [32] | Standardized data preprocessing and baseline method implementation |
| Benchmarking Platforms | scBench [26], scHUB [28] | Performance evaluation across multiple tasks and datasets |
| Biological Networks | Gene Ontology [26] [31], STRING [33], KEGG [31] | Biological prior knowledge for interpretation and validation |
| Visualization Tools | UMAP [32] [12], t-SNE, Scanpy plotting functions | Visualization of high-dimensional embeddings and cellular relationships |

Emerging Trends and Development

The scFM field is evolving rapidly, with several promising directions emerging. Scale continues to be a key driver of improvement, with newer models like CellFM (800M parameters) and Teddy (up to 400M parameters) demonstrating that increased model size and training data correlate with enhanced performance on certain tasks [29] [30]. Multimodal integration represents another frontier, with efforts to incorporate epigenetic, spatial, and proteomic data alongside transcriptomic measurements [27] [30].

For stem cell research specifically, key development needs include: (1) models pretrained specifically on stem cell datasets to better capture the biology of pluripotency and early development; (2) improved perturbation modeling capabilities for predicting differentiation and reprogramming outcomes; and (3) enhanced interpretability methods to extract biological insights about stem cell regulatory networks from the models [12] [33].