Navigating Batch Effects in Stem Cell scRNA-seq: From Foundational Concepts to Advanced Integration Strategies


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on handling batch effects in single-cell RNA sequencing (scRNA-seq) of stem cell datasets. We first explore the sources and impact of technical variation, highlighting its critical implications for data interpretation. We then detail a landscape of computational correction methods, from established to cutting-edge deep learning models, offering practical application guidance. The guide further addresses common troubleshooting scenarios and optimization techniques to prevent overcorrection and preserve biological signals. Finally, we present a rigorous framework for method validation using advanced metrics and benchmark performance across different stem cell research contexts, empowering robust and reproducible analysis.

Understanding the Enemy: Defining Batch Effects and Their Impact on Stem Cell scRNA-seq Data

What Are Batch Effects? Technical vs. Biological Variation in Stem Cell Cultures

What is a Batch Effect?

A batch effect is systematic, non-biological variation introduced into experimental data by technical factors. Batch effects can lead to inaccurate conclusions when their causes are correlated with the experimental outcomes of interest [1].

In the context of stem cell single-cell RNA sequencing (scRNA-seq), batch effects can obscure true biological signals, such as cellular heterogeneity or differentiation states, and lead to incorrect biological inferences [2]. Batch effects are a critical challenge across high-throughput technologies, including microarrays, mass spectrometry, and scRNA-seq platforms [1].

What Causes Batch Effects in Stem Cell Cultures and scRNA-seq?

Batch effects originate from multiple sources throughout the experimental workflow. The table below categorizes common sources of this technical variation.

Table 1: Sources of Variation in Stem Cell Research

| Variation Type | Source Examples | Impact on Data |
|---|---|---|
| Technical (Batch Effects) | Different sequencing runs or instruments [1] [3] | Systematic shifts in gene expression profiles that are not due to biology [2]. |
| | Variations in reagent lots or manufacturing batches [1] [3] | Cells of the same type cluster by processing batch instead of biological condition [4]. |
| | Changes in sample preparation protocols or personnel [1] [3] | Compromised differential expression analysis and meta-analyses [3]. |
| | Time of day when the experiment was conducted [1] | |
| | Environmental conditions (temperature, humidity, atmospheric ozone) [1] [3] | |
| Biological | Genotypic differences between individual donors or cell lines [5] | Represents the true biological variation of interest, such as different cell types or disease states. |
| | Biological noise in gene expression between cells [5] | |

For stem cell cultures specifically, technical variation can be introduced by [6]:

- Differences in feeder cell conditions (e.g., mouse embryonic fibroblasts vs. human foreskin fibroblasts).

- Variations in extracellular matrix lots (e.g., basement membrane gel).

- Inconsistencies in media components and supplement batches (e.g., growth factors like FGF or LIF).

- Minor changes in dissociation protocols during passaging.

How Do I Detect Batch Effects in My scRNA-seq Data?

Before correction, you should assess whether your data contains significant batch effects. Several visualization and quantitative methods can help [4].

Visualization Techniques:

- Principal Component Analysis (PCA): Plot your data by the top principal components. If samples cluster by batch (e.g., sequencing run) rather than by biological source (e.g., cell type), it indicates a batch effect [3] [4].

- t-SNE or UMAP: Overlay batch labels on the plot. In the presence of batch effects, cells from different batches tend to form separate clusters instead of mixing based on biological similarities [4].

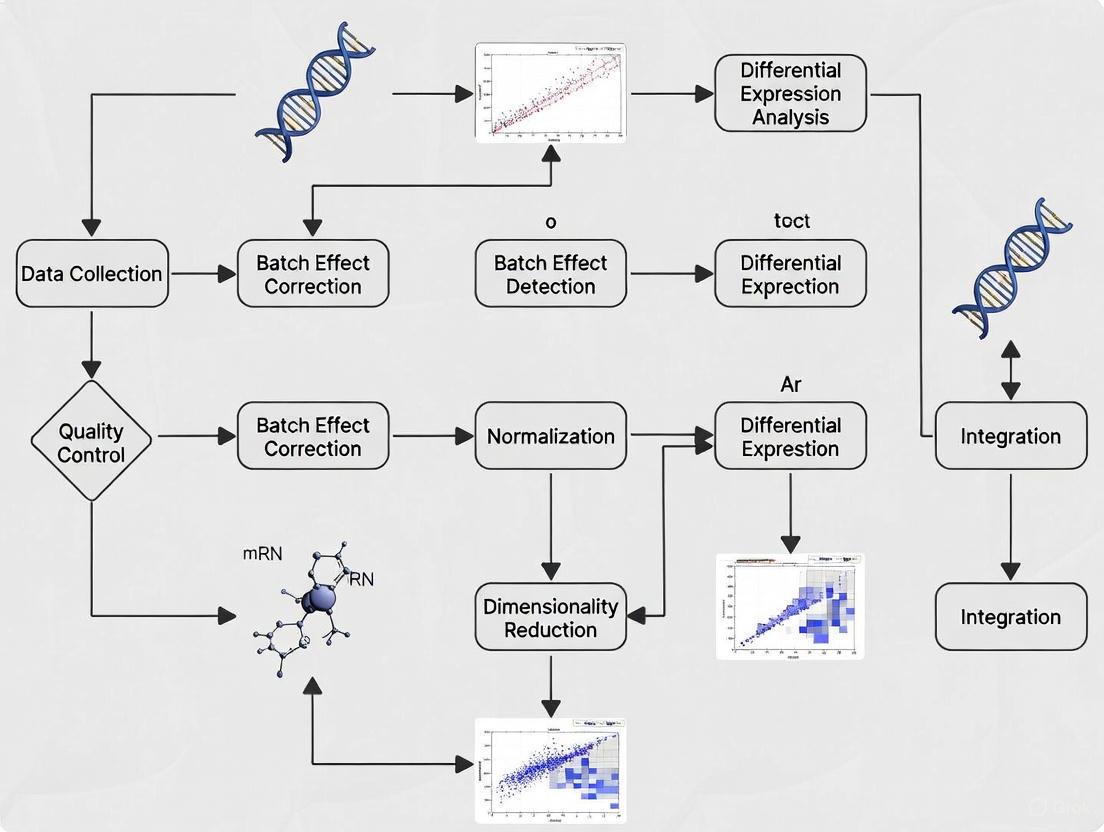

Diagram: Workflow for Batch Effect Detection and Correction

Quantitative Metrics:

- kBET (k-nearest neighbor Batch Effect Test): A statistical test that assesses whether the local neighborhood of a cell has a balanced mix of batches [2].

- LISI (Local Inverse Simpson's Index): Quantifies both batch mixing (Batch LISI) and cell type separation (Cell Type LISI). Higher batch LISI values indicate better mixing [2].
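
To make the Batch LISI idea concrete, the sketch below computes a simplified, unweighted inverse Simpson's index over each cell's k nearest neighbors. It is an illustration under stated assumptions, not the published LISI implementation, which weights neighbors by distance using a perplexity parameter. The function name `simple_lisi`, the toy coordinates, and `k=3` are hypothetical choices made for this example.

```python
import math

def simple_lisi(points, labels, k=3):
    """Unweighted inverse Simpson's index of `labels` among each point's k nearest neighbors."""
    scores = []
    for i, p in enumerate(points):
        # Brute-force Euclidean distances to every other point (fine for a toy example).
        dists = sorted((math.dist(p, q), j) for j, q in enumerate(points) if j != i)
        neighbor_labels = [labels[j] for _, j in dists[:k]]
        # Simpson's index: sum of squared label proportions within the neighborhood.
        simpson = sum((neighbor_labels.count(lab) / k) ** 2 for lab in set(neighbor_labels))
        scores.append(1.0 / simpson)
    return scores

# Two spatial groups of four cells each (hypothetical 2-D embedding coordinates).
points = [(0, 0), (0, 1), (1, 0), (1, 1), (10, 10), (10, 11), (11, 10), (11, 11)]
separated = ["A"] * 4 + ["B"] * 4   # each group contains a single batch
mixed = ["A", "B", "A", "B"] * 2    # each group contains both batches

separated_scores = simple_lisi(points, separated)
mixed_scores = simple_lisi(points, mixed)
print(min(separated_scores), max(separated_scores))
print(min(mixed_scores), max(mixed_scores))
```

With two batches, a score of 1 means a neighborhood contains a single batch, and scores approaching 2 mean both batches are equally represented. In this toy layout the separated labels score 1.0 for every cell, while the mixed labels score about 1.8.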

What Methods Can Correct for Batch Effects?

Various computational techniques have been developed to correct for batch effects. The choice of method depends on your data type and experimental design.

Table 2: Batch Effect Correction Tools for scRNA-seq Data

| Tool/Method | Description | Best For | Considerations |
|---|---|---|---|
| Harmony [2] [4] | Integrates datasets iteratively in low-dimensional space (e.g., PCA). | Large datasets; fast runtime [4]. | Preserves biological variation well [2]. |
| Seurat Integration [2] [4] | Uses Canonical Correlation Analysis (CCA) and Mutual Nearest Neighbors (MNN). | Datasets where preserving biological signal is the priority [2]. | Computationally intensive for large datasets [2]. |
| ComBat/ComBat-seq [3] | Empirical Bayes framework to adjust for batch effects. | Microarray data (ComBat) and RNA-seq count data (ComBat-seq) [3]. | Can be used with small batch sizes [1]. |
| scDML [7] | Deep metric learning using triplet loss, guided by initial clusters. | Preserving rare cell types; complex integrations. | Newer method showing high performance in benchmarks [7]. |
| BBKNN [2] | Batch Balanced K-Nearest Neighbors; fast and lightweight. | Large datasets requiring computational efficiency [2]. | Less effective for non-linear batch effects [2]. |

How Can I Prevent Batch Effects Through Experimental Design?

Prevention is the most effective strategy. Good experimental design can substantially reduce batch effects before data processing begins [2].

Key Strategies:

- Balance Your Design: Ensure that the biological conditions of interest (e.g., treatment vs. control) are equally represented across all processing batches [8].

- Include Technical Replicates: Process the same biological sample across different batches to quantify technical variation [9].

- Standardize Protocols: Use the same reagent lots, instruments, and personnel for a given study when possible [2].

- Randomize Processing: If samples cannot be processed simultaneously, randomize the order of processing across biological conditions.

- Use Controls: Include reference control samples or spike-in RNAs (e.g., ERCC spike-ins) in each batch to monitor technical performance [5].
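
The balancing and randomization strategies above can be combined when planning which samples go into which processing batch. The following is a minimal sketch, assuming each sample has a single known condition. The function name `assign_batches`, the sample identifiers, and the fixed seed are hypothetical and only serve the illustration.

```python
import random
from collections import Counter

def assign_batches(samples, n_batches, seed=0):
    """samples: list of (sample_id, condition). Returns {sample_id: batch_index}."""
    rng = random.Random(seed)  # fixed seed so the plan is reproducible
    by_condition = {}
    for sample_id, condition in samples:
        by_condition.setdefault(condition, []).append(sample_id)
    assignment = {}
    for condition, ids in sorted(by_condition.items()):
        rng.shuffle(ids)  # randomize the order within each condition
        for position, sample_id in enumerate(ids):
            # Deal samples round-robin so every batch receives each condition.
            assignment[sample_id] = position % n_batches
    return assignment

samples = [(f"ctrl_{i}", "control") for i in range(4)]
samples += [(f"trt_{i}", "treated") for i in range(4)]

assignment = assign_batches(samples, n_batches=2)
per_batch = Counter((assignment[sample_id], cond) for sample_id, cond in samples)
print(sorted(per_batch.items()))
```

Because samples are dealt round-robin within each condition, every batch receives the same number of control and treated samples whenever the group sizes are divisible by the number of batches. With uneven group sizes the lower-numbered batches receive the extra samples, which may need manual adjustment.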

What is the Difference Between Technical and Biological Replicates?

Understanding replicates is crucial for designing experiments that can account for batch effects.

Technical Replicates: Repeated measurements of the same biological sample. They demonstrate the variability of the protocol itself and address the reproducibility of the assay, but not the biological phenomenon [9].

- Example in stem cell research: Taking one batch of iPSCs from a single donor, splitting it, and preparing multiple RNA-seq libraries to measure library prep variability.

Biological Replicates: Measurements from biologically distinct samples. They capture random biological variation and indicate if an experimental effect is generalizable [9].

- Example in stem cell research: Using iPSCs derived from multiple different healthy donors to ensure observed effects are not specific to one genetic background.

What Are the Signs of Over-Correction?

Aggressive batch correction can sometimes remove genuine biological signals. Watch for these signs of over-correction [4]:

- Distinct Cell Types Merge: On UMAP/PCA plots, clearly distinct cell types are clustered together after correction.

- Complete Overlap of Samples: Samples from very different conditions or experiments show complete overlap, suggesting biological differences have been removed.

- Loss of Biological Markers: Cluster-specific markers identified after correction are dominated by genes with widespread high expression (e.g., ribosomal genes) rather than specific functional genes.

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Stem Cell scRNA-seq Studies

| Reagent/Material | Function | Example Use Case |
|---|---|---|
| Extracellular Matrix [6] | Provides attachment surface for feeder-free stem cell culture. | Coating plates for iPSC maintenance in defined conditions. |
| Pluripotency-Supporting Media [6] | Serum-free media formulations with essential growth factors. | Maintaining stem cells in an undifferentiated state across experiments. |
| Stem Cell Dissociation Reagent [6] | Enzymatic or non-enzymatic solution for detaching cells during passaging. | Creating single-cell suspensions for scRNA-seq without affecting viability. |
| ROCK Inhibitor (Y-27632) [6] | Improves survival of single stem cells after dissociation. | Adding to media after passaging or thawing to reduce apoptosis. |
| ERCC Spike-In Controls [5] | Exogenous RNA sequences added to samples in known quantities. | Quantifying technical noise and batch effects in sequencing data. |
| UMI Barcodes [5] | Unique Molecular Identifiers attached to each mRNA molecule. | Correcting for amplification bias and improving quantification accuracy. |

Diagram: Relationship Between Experimental Factors and Data Quality

FAQ on Batch Effects in Stem Cell Research

Q: Can batch effects be completely eliminated? A: While they can be significantly reduced, complete elimination is challenging. The goal is to minimize their impact so that biological signals remain the dominant source of variation in your data [1] [8].

Q: Should I correct for batch effects if my batches are balanced? A: Even with balanced designs, batch effects can still exist and should be assessed. However, in a perfectly balanced scenario, batch effects may be 'averaged out' when comparing biological conditions [8].

Q: How does sample imbalance affect batch correction? A: Sample imbalance (different cell type proportions across batches) substantially impacts integration results and biological interpretation. Methods like Harmony and scVI have shown better performance with imbalanced samples, but careful interpretation is always needed [4].

Q: Can I add new data to an already batch-corrected dataset? A: This is challenging. Corrected embeddings are typically tied to the specific datasets processed together. Integrating new data often requires re-running the entire batch correction process on the combined old and new data [2].

Q: In stem cell research, what are the most critical steps to minimize batch effects? A: Standardizing cell culture conditions (passaging techniques, media batches, and confluence at harvesting) and using consistent RNA library preparation protocols across all samples are most critical for minimizing batch effects in stem cell studies [6] [5].

FAQ: Understanding and Identifying Batch Effects

What are the main sources of batch effects in stem cell scRNA-seq?

Batch effects in stem cell scRNA-seq arise from both biological and technical sources. Key biological sources include variations between individual cell donors and differences in sample collection times or environmental conditions [2]. A prominent source specific to stem cell work is the inherent stochasticity of the iPSC reprogramming process itself, which can create strong batch (or donor) effects that prevent models trained on one batch from being applied to another [10]. Major technical sources include differences in sequencing platforms (e.g., Illumina vs. Ion Torrent), sample preparation protocols, reagents, instrumentation, and personnel handling samples across different laboratories or processing dates [2] [11].

How can I detect if my stem cell scRNA-seq data has batch effects?

You can use both visual and quantitative methods to detect batch effects.

- Visual Methods:

  - PCA Plot Examination: Perform Principal Component Analysis (PCA) on the raw data and color cells by their batch of origin. Separation of cells by batch in the top principal components, rather than by biological source (e.g., cell type), indicates a batch effect [11] [4].

  - t-SNE/UMAP Plot Examination: Visualize your data using t-SNE or UMAP. If cells from the same cell type or condition cluster separately based on their batch, it signals a batch effect that needs correction [11] [4].

- Quantitative Metrics: Several metrics provide a less biased assessment [11] [4]:

  - kBET (k-nearest neighbor Batch Effect Test): A statistical test assessing if the local batch proportion in a cell's neighborhood matches the global expectation.

  - LISI (Local Inverse Simpson's Index): Quantifies the diversity of batches (Batch LISI) and cell types (Cell Type LISI) in a local neighborhood. A higher Batch LISI indicates better batch mixing.
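
The kBET idea of comparing local and global batch proportions can be sketched with a chi-squared test on each cell's neighborhood. The code below is a simplified illustration, not the kBET package: it assumes exactly two batches (one degree of freedom, so the p-value can be computed with `math.erfc`), tests every cell rather than a subsample, and uses brute-force neighbor search. The function name, coordinates, `k`, and `alpha` are hypothetical.

```python
import math

def kbet_rejection_rate(points, batches, k=4, alpha=0.05):
    """Fraction of cells whose k-neighborhood batch mix deviates from the global mix."""
    names = sorted(set(batches))
    assert len(names) == 2, "this sketch handles exactly two batches"
    global_freq = [batches.count(b) / len(batches) for b in names]
    rejected = 0
    for i, p in enumerate(points):
        nearest = sorted((math.dist(p, q), j) for j, q in enumerate(points) if j != i)[:k]
        observed = [sum(batches[j] == b for _, j in nearest) for b in names]
        # Pearson chi-squared statistic: observed vs. expected counts in the neighborhood.
        chi2 = sum((o - k * f) ** 2 / (k * f) for o, f in zip(observed, global_freq))
        # Survival function of the chi-squared distribution with 1 degree of freedom.
        p_value = math.erfc(math.sqrt(chi2 / 2))
        rejected += p_value < alpha
    return rejected / len(points)

# Two spatial groups of five cells each (hypothetical embedding coordinates).
group = [(0, 0), (0, 1), (1, 0), (1, 1), (0.5, 0.5)]
points = group + [(x + 10, y + 10) for x, y in group]
separated = ["A"] * 5 + ["B"] * 5
mixed = ["A", "B", "A", "B", "A", "B", "A", "B", "A", "B"]

rate_separated = kbet_rejection_rate(points, separated)
rate_mixed = kbet_rejection_rate(points, mixed)
print(rate_separated, rate_mixed)
```

A rejection rate near 1 indicates that most neighborhoods are dominated by one batch, while a rate near 0 indicates that local batch composition matches the global composition. With such small neighborhoods the test has little power, so real analyses use larger `k` and many more cells.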

Table 1: Quantitative Metrics for Batch Effect Assessment

| Metric | What It Measures | Interpretation |
|---|---|---|
| kBET | Whether local batch mixing reflects the global expected proportion | Rejection of the null hypothesis indicates a significant batch effect. |
| Batch LISI | Diversity of batches in a cell's local neighborhood | Higher values indicate better mixing of batches. |
| Cell Type LISI | Purity of cell types in a cell's local neighborhood | Lower values indicate better separation of cell types. |
| ARI (Adjusted Rand Index) | Similarity between two clusterings (e.g., before/after correction) | Values closer to 1 indicate closer agreement between the two clusterings. |
| ASW (Average Silhouette Width) | Compactness and separation of clusters | Higher values indicate more compact and well-separated clusters. |
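
As a worked example of one metric from the table, the Adjusted Rand Index can be computed directly from the contingency counts of two label vectors. This is a minimal standard-library sketch; the function name and the toy label vectors are hypothetical, and established libraries provide tested implementations for real analyses.

```python
from collections import Counter
from math import comb

def adjusted_rand_index(labels_a, labels_b):
    """ARI between two clusterings of the same cells."""
    n = len(labels_a)
    # Pairs of cells that are grouped together in both clusterings.
    sum_cells = sum(comb(c, 2) for c in Counter(zip(labels_a, labels_b)).values())
    sum_a = sum(comb(c, 2) for c in Counter(labels_a).values())
    sum_b = sum(comb(c, 2) for c in Counter(labels_b).values())
    expected = sum_a * sum_b / comb(n, 2)  # agreement expected by chance
    max_index = (sum_a + sum_b) / 2
    if max_index == expected:
        return 1.0  # degenerate case, e.g. both clusterings are a single cluster
    return (sum_cells - expected) / (max_index - expected)

before = [0, 0, 1, 1]
relabeled = ["x", "x", "y", "y"]   # same partition, different cluster names
scrambled = [0, 1, 0, 1]           # every original cluster is split

ari_same = adjusted_rand_index(before, relabeled)
ari_diff = adjusted_rand_index(before, scrambled)
print(ari_same, ari_diff)
```

The index ignores cluster names and only compares which cells are grouped together, so the relabeled partition scores 1.0. The scrambled partition scores below zero (-0.5 here), meaning it agrees less than expected by chance.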

What are the best methods for correcting batch effects in stem cell data?

The "best" method can depend on your specific data, but several tools have been benchmarked for scRNA-seq integration [2] [4].

Table 2: Commonly Used scRNA-seq Batch Effect Correction Tools

| Tool | Core Methodology | Strengths | Considerations for Stem Cell Research |
|---|---|---|---|
| Harmony | Iterative clustering in PCA space with batch correction [2] [11]. | Fast, scalable, preserves biological variation [2]. | A recommended first choice due to good balance of speed and performance [4]. |
| Seurat Integration | Uses CCA and Mutual Nearest Neighbors (MNN) as "anchors" to align datasets [2] [11]. | High biological fidelity; integrated with a comprehensive analysis suite [2]. | Can be computationally intensive for large datasets; requires parameter tuning [2]. |
| scANVI | Deep generative model (variational autoencoder) that can use cell labels [2]. | Excels at modeling complex, non-linear batch effects [2]. | Requires familiarity with deep learning; may need GPU acceleration [2]. Preserves rare cell types well [7]. |
| BBKNN | Batch Balanced K-Nearest Neighbors; a fast graph-based method [2]. | Computationally efficient and lightweight [2]. | Less effective on highly complex batch effects; parameter sensitive [2]. |
| scDML | Deep metric learning using triplet loss, guided by initial clusters and neighbor information [7]. | Effectively preserves subtle and rare cell types, which is crucial in stem cell differentiation studies [7]. | A newer method that has shown strong performance in benchmarks against other popular tools [7]. |
| sysVI | Conditional VAE using VampPrior and cycle-consistency constraints [12] [13]. | Designed for integrating datasets with substantial batch effects (e.g., across species or protocols) [12] [13]. | Ideal for ambitious projects like integrating organoid models with primary tissue data [12] [13]. |
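
The mutual nearest neighbor "anchors" mentioned in the table can be illustrated with a brute-force search between two batches. This sketch only finds the pairs and is not Seurat's anchor-finding procedure, which also involves dimensionality reduction, anchor scoring, and filtering. The function names and coordinates are hypothetical.

```python
import math

def nearest(query, reference, k):
    """For each query point, the set of indices of its k nearest reference points."""
    return [
        {j for _, j in sorted((math.dist(q, r), j) for j, r in enumerate(reference))[:k]}
        for q in query
    ]

def mutual_nearest_neighbors(batch1, batch2, k=1):
    """Pairs (i, j) where batch1[i] and batch2[j] are in each other's k nearest neighbors."""
    nn_12 = nearest(batch1, batch2, k)
    nn_21 = nearest(batch2, batch1, k)
    return sorted((i, j) for i, js in enumerate(nn_12) for j in js if i in nn_21[j])

# Batch 2 is a shifted copy of two batch-1 cells; the third batch-1 cell
# represents a cell type that is absent from batch 2.
batch1 = [(0, 0), (5, 5), (20, 20)]
batch2 = [(0.5, 0.5), (5.5, 5.5)]

pairs = mutual_nearest_neighbors(batch1, batch2)
print(pairs)
```

The cell at `(20, 20)` finds a nearest neighbor in batch 2, but that neighbor does not choose it back, so no pair is formed. This mutuality requirement is what helps populations unique to one batch avoid being forced onto an unrelated population in the other.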

What are the signs of over-correction and how can I avoid it?

Over-correction occurs when batch effect removal also removes genuine biological signal. Key signs include [11] [4]:

- Distinct cell types are incorrectly clustered together on UMAP/t-SNE plots.

- A complete overlap of samples from very different biological conditions (e.g., control vs. treated), suggesting the loss of meaningful differential expression.

- Cluster-specific markers consist of genes with widespread high expression (e.g., ribosomal genes) instead of canonical cell-type-specific markers.

- A notable absence of expected differential expression hits for pathways known to be active in certain cell types or conditions.

To avoid over-correction, start with less aggressive correction methods and always validate that known biological signals are retained after integration. Be particularly cautious with methods that use strong adversarial learning or high Kullback–Leibler (KL) regularization, as these can indiscriminately remove both technical and biological variation [12] [13].
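
One of the warning signs above, marker lists dominated by ribosomal genes, lends itself to a quick automated check. The sketch below flags clusters whose marker list is mostly ribosomal protein genes. The marker lists, the 0.5 threshold, and the prefix heuristic (`RPS`/`RPL` for human gene symbols) are assumptions for illustration; a prefix match can also catch unrelated genes such as the RPS6KA kinases, so treat a flag as a prompt for manual review.

```python
def ribosomal_fraction(markers):
    """Share of marker genes whose symbol starts with a ribosomal-protein prefix."""
    prefixes = ("RPS", "RPL")  # heuristic for human gene symbols
    if not markers:
        return 0.0
    return sum(gene.upper().startswith(prefixes) for gene in markers) / len(markers)

# Hypothetical post-integration marker lists, for illustration only.
cluster_markers = {
    "cluster_0": ["POU5F1", "NANOG", "SOX2", "LIN28A"],
    "cluster_1": ["RPS6", "RPL13", "RPS27A", "ACTB"],
}

# Flag clusters where more than half of the markers look ribosomal (arbitrary cutoff).
flagged = [name for name, genes in cluster_markers.items() if ribosomal_fraction(genes) > 0.5]
print(flagged)
```

A flagged cluster is not proof of over-correction, but it is a reasonable cue to compare the cluster's markers before and after integration.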

My stem cell samples are imbalanced (different cell type proportions across batches). How does this affect integration?

Sample imbalance—where batches have different numbers of cells, different cell types present, or different cell type proportions—is common in stem cell research (e.g., due to varying differentiation efficiencies). This can substantially impact integration and downstream biological interpretation [4].

- Adversarial learning methods are particularly prone to mixing embeddings of unrelated cell types when their proportions are unbalanced across batches [12] [13]. For instance, a rare cell type in one batch might be incorrectly merged with a more abundant cell type from another batch.

- Guidelines: In imbalanced settings, it is recommended to use methods that do not rely on adversarial learning and to carefully inspect the integration results for the preservation of rare cell populations [4]. Methods like scDML that are explicitly designed to preserve rare cell types can be particularly valuable in these scenarios [7].

Experimental Protocol: A Standard Workflow for Batch Effect Correction

The following diagram outlines a standard computational workflow for detecting and correcting batch effects in scRNA-seq data.

Diagram: Standard Batch Effect Correction Workflow

Detailed Methodological Steps:

1. Normalization: Adjust raw counts for technical biases like sequencing depth. Common methods include LogNormalize (each cell's counts divided by that cell's total counts, multiplied by a scale factor, then log-transformed) and SCTransform (a regularized negative binomial model that also performs variance stabilization) [2].

2. Feature Selection: Identify Highly Variable Genes (HVGs) that drive biological heterogeneity. This focuses subsequent analysis on the most informative features and can help minimize the influence of batch-effect-associated genes [2].

3. Scaling and Linear Dimensionality Reduction: Scale the data so that each gene has mean 0 and variance 1 across cells. Then, perform Principal Component Analysis (PCA) to reduce dimensionality. The top PCs are used for batch correction by many methods [2] [11].

4. Batch Effect Detection & Decision: As described in the detection FAQ above, use PCA/UMAP and quantitative metrics (e.g., LISI, kBET) to assess the need for correction.

5. Batch Effect Correction: If a significant batch effect is detected, apply a chosen integration algorithm (see Table 2). The selection depends on dataset size, complexity of batch effects, and the need to preserve rare cell types.

6. Post-Correction Evaluation: It is critical to re-evaluate the data using the same visual and quantitative methods from Step 4. Check that batches are well-mixed and that biological separation (e.g., by known cell types) is maintained, guarding against over-correction.
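
The normalization and scaling steps in the workflow above can be sketched in a few lines. This is a minimal standard-library illustration of log-normalization and per-gene scaling, not the Seurat or Scanpy implementation. The function names, the toy count matrix, the scale factor of 10,000, and the use of the population standard deviation are assumptions, and the code presumes empty cells were already removed during QC.

```python
import math
from statistics import mean, pstdev

def log_normalize(counts, scale_factor=10_000):
    """counts: one list of gene counts per cell.
    Returns log1p(count / cell_total * scale_factor) for every entry."""
    normalized = []
    for cell in counts:
        total = sum(cell)  # total counts for this cell (assumed > 0 after QC)
        normalized.append([math.log1p(c / total * scale_factor) for c in cell])
    return normalized

def scale_genes(matrix):
    """Center each gene (column) to mean 0 and scale to unit variance across cells."""
    scaled_columns = []
    for column in zip(*matrix):
        mu, sd = mean(column), pstdev(column)
        # Genes with zero variance carry no information; set them to 0.
        scaled_columns.append([(v - mu) / sd if sd > 0 else 0.0 for v in column])
    return [list(row) for row in zip(*scaled_columns)]

# Rows are cells, columns are genes. Cell 2 is cell 1 sequenced twice as deeply.
counts = [[10, 0, 90], [20, 0, 180], [5, 5, 90]]

normalized = log_normalize(counts)
scaled = scale_genes(normalized)
print(normalized[0] == normalized[1])
```

The first two cells have identical relative expression and differ only in depth, so they become identical after normalization. This is the sense in which normalization removes sequencing-depth differences before any batch correction is attempted.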

Table 3: Key Software Tools and Resources

| Category | Item/Reagent Solution | Function / Explanation |
|---|---|---|
| Primary Analysis Suites | Seurat (R) / Scanpy (Python) | Comprehensive toolkits encompassing the entire scRNA-seq analysis workflow, including normalization, integration, clustering, and visualization [2]. |
| Batch Correction Algorithms | Harmony, scANVI, scDML, sysVI | Specific computational methods designed to remove unwanted technical variation while preserving biological signal. See Table 2 for details [2] [12] [7]. |
| Quantitative Metrics Packages | kBET, LISI | Software packages that calculate metrics to objectively evaluate the success of batch integration before and after correction [2] [11]. |
| Reference Materials | Quartet Project Reference Materials | Well-characterized reference samples (used in proteomics and other omics) that can be profiled alongside study samples across batches to monitor technical performance and aid in batch-effect correction [14]. |

Frequently Asked Questions

FAQ 1: How can I tell if my clustering results are unreliable due to batch effects?

Clustering results may be unreliable if the same analysis yields different cell groups each time it is run, a problem known as clustering inconsistency. This is often driven by underlying technical variation or batch effects that disrupt the true biological signal. Specifically, when you change the random seed in your clustering algorithm and this leads to the disappearance of previously detected clusters or the emergence of entirely new ones, it is a strong indicator of instability caused by unaddressed technical noise [15]. Tools like the single-cell Inconsistency Clustering Estimator (scICE) have been developed to quantitatively measure this consistency, helping to identify and exclude unreliable clustering outputs [15].

FAQ 2: Why are rare cell populations particularly vulnerable to batch effects, and how can I protect them in my analysis?

Rare cell populations are vulnerable for two main reasons. First, their low cell counts mean that technical variation can obscure them more easily. Second, aggressive batch correction methods might mistakenly mix them with more abundant, but biologically distinct, cell types to achieve a uniform batch distribution [12] [16]. To protect these populations, it is recommended to use batch correction methods that are known for high biological fidelity and to employ targeted approaches during analysis. Before correction, visually inspect your data to note the location of potential rare populations. After applying a method like Harmony or Seurat Integration, verify that these populations remain distinct and have not been improperly merged with other groups [16] [17].

FAQ 3: Is it better to use a batch-corrected matrix or include batch as a covariate in my differential expression model?

For known batch variables, the current best practice is to incorporate them directly as covariates in your regression model for differential expression analysis, rather than using a pre-corrected gene expression matrix. Studies have shown that using a batch-corrected matrix can lead to inflated false discovery rates (FDRs), while including batch as a covariate in a model like those in edgeR or DESeq2 provides more reliable results [18]. For latent batch effects (those not known or measured), surrogate variable analysis (SVA) methods have been shown to effectively control FDR while maintaining good power [18].
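
The reasoning behind modeling batch as a covariate can be illustrated with ordinary least squares on simulated, noise-free data. This is a linear stand-in chosen for simplicity, not the negative binomial models fitted by edgeR or DESeq2. The sample layout, effect sizes, and helper names (`solve`, `ols`) are hypothetical.

```python
def solve(a, b):
    """Solve the square system a x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]

def ols(design, y):
    """Least-squares coefficients from the normal equations (X^T X) beta = X^T y."""
    p = len(design[0])
    xtx = [[sum(row[i] * row[j] for row in design) for j in range(p)] for i in range(p)]
    xty = [sum(row[i] * v for row, v in zip(design, y)) for i in range(p)]
    return solve(xtx, xty)

# Hypothetical unbalanced design: (condition, batch) for each sample.
samples = [(0, 0), (0, 0), (0, 0), (1, 0), (0, 1), (1, 1), (1, 1), (1, 1)]
# Simulated expression: baseline 1, true condition effect 2, batch effect 5, no noise.
y = [1 + 2 * c + 5 * b for c, b in samples]

treated = [v for (c, _), v in zip(samples, y) if c == 1]
control = [v for (c, _), v in zip(samples, y) if c == 0]
naive_effect = sum(treated) / len(treated) - sum(control) / len(control)

# Design matrix columns: intercept, condition, batch.
design = [[1, c, b] for c, b in samples]
intercept, condition_effect, batch_effect = ols(design, y)
print(naive_effect, condition_effect, batch_effect)
```

Because most treated samples sit in the second batch, the naive difference in means (4.5) absorbs part of the batch effect, while the model with a batch term recovers the simulated condition effect of 2. The same logic applies when a batch term is added to the design of a count-based model.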

Troubleshooting Guides

Problem: Clustering Bias and Inconsistency

Issue: Your clustering results change dramatically with different random seeds, making cell type identification unreliable.

Diagnosis: This is a classic sign of clustering inconsistency, where technical variation (batch effects) interferes with the algorithm's ability to find stable, biologically real groupings [15].

Solutions:

- Evaluate Clustering Consistency: Use the scICE tool to efficiently assess the consistency of your clusters across multiple runs. It calculates an Inconsistency Coefficient (IC)—values close to 1 indicate reliable clusters, while higher values signal instability [15].

- Apply Appropriate Batch Correction: Implement a robust batch correction method before clustering. The table below summarizes top-performing methods recommended for their ability to integrate data while preserving biological variation [17].

Table: Benchmarking of Select Batch Correction Methods

| Method | Key Principle | Best For | Strengths | Limitations |
|---|---|---|---|---|
| Harmony [17] | Iterative clustering in PCA space | Large datasets, general use | Fast, scalable, good batch mixing | Limited native visualization tools |
| Seurat Integration [17] | Canonical Correlation Analysis (CCA) & Mutual Nearest Neighbors (MNN) | Datasets where biological signal is paramount | High biological fidelity, comprehensive workflow | Computationally intensive for large data [2] |
| LIGER [17] | Integrative Non-negative Matrix Factorization (NMF) | Separating technical from biological variation | Does not assume all inter-dataset variation is technical | Requires more parameter tuning |
| sysVI (VAMP+CYC) [12] | Variational Autoencoder with VampPrior & cycle-consistency | Challenging cases (e.g., cross-species, organoid-tissue) | Improves correction without removing biological signals | More complex, deep learning-based |

Experimental Protocol for Reliable Clustering:

- Quality Control: Filter out low-quality cells and genes using standard QC metrics (count depth, number of genes, mitochondrial fraction) [19].

- Normalization: Normalize data using a method like SCTransform (regularized negative binomial regression) to account for sequencing depth and technical covariates [2].

- Batch Correction: Apply a suitable method from the table above (e.g., Harmony) to integrate your datasets.

- Consistency Check: Run scICE on the corrected data across a range of clustering resolutions to identify the most stable and reliable cluster labels [15].

The following diagram illustrates the negative impact of batch effects on clustering and the corrective workflow.

Problem: Masking of Rare Cell Types

Issue: A suspected rare cell population visible in one dataset disappears or becomes merged with a common cell type after data integration.

Diagnosis: Aggressive batch correction can over-correct the data, forcing the distinct gene expression profile of a rare cell type to be "aligned" with a more prevalent one, especially if the rare type is absent or has unbalanced proportions in one of the batches [12] [16].

Solutions:

- Method Selection: Choose a batch correction method demonstrated to preserve biological heterogeneity. Seurat Integration is often noted for its high biological fidelity [2] [17].

- Leverage Newer Algorithms: Consider advanced methods like sysVI, which uses a cycle-consistency constraint and VampPrior to improve integration without sacrificing biological signals, making it suitable for challenging integrations like between organoids and primary tissue [12].

- Sub-clustering: Perform a two-stage analysis. First, identify and extract major cell populations post-correction. Then, perform a second round of batch correction and clustering only on the cells within a major population of interest. This "zoomed-in" approach can reveal hidden rare subtypes [15].

Problem: Compromised Differential Expression (DE) Analysis

Issue: Differential expression analysis yields an unexpectedly high number of false positives or fails to identify known marker genes.

Diagnosis: Batch effects are a major confounder in DE analysis. If not properly accounted for, the systematic technical differences between sample groups can be misinterpreted as biological differences, inflating false positives. Conversely, overly strong correction can remove genuine biological signals [18].

Solutions:

- For Known Batches: The most effective approach is to include the batch as a covariate in the statistical model used for DE testing (e.g., in edgeR or DESeq2), rather than using a pre-corrected expression matrix [18].

- For Latent Batches: When batch factors are unknown, use a method like Surrogate Variable Analysis (SVA) to estimate and account for these hidden sources of variation within your DE model [18].

- Use Improved Correction Tools: For analyses that require a corrected matrix, consider newer methods like ComBat-ref. This method builds on ComBat-seq but selects the batch with the smallest dispersion as a reference, which has been shown to improve the sensitivity and specificity of subsequent DE analysis compared to earlier methods [20].

Table: Impact of Batch Effect Correction on Differential Expression Analysis

| Scenario | Impact on True Positives | Impact on False Positives | Recommended Strategy |
|---|---|---|---|
| No Correction | Low (Power loss) | High (Inflation) | Do not ignore batch; account for it explicitly. |
| Using Corrected Matrix | Variable | Can be high (Inflation) | Avoid; use covariate instead [18]. |
| Batch as Covariate in Model | High | Well-controlled | Best practice for known batches [18]. |
| ComBat-ref Workflow | High (Retains power) | Well-controlled | Good alternative when a corrected matrix is needed [20]. |

The relationship between batch effects, correction strategies, and the integrity of differential expression analysis is summarized below.

The Scientist's Toolkit

Table: Essential Computational Tools & Reagents for scRNA-seq Batch Correction

| Tool / Resource | Function / Description | Use Case |
|---|---|---|
| Harmony | Iterative batch correction algorithm in PCA space. | Fast, general-purpose integration of multiple datasets [17]. |
| Seurat | Comprehensive R toolkit for single-cell analysis, includes CCA/MNN-based integration. | When high biological fidelity and a full analysis workflow are needed [2] [17]. |
| scICE | Evaluates clustering consistency using the Inconsistency Coefficient (IC). | Quantifying the reliability of clustering results post-correction [15]. |
| sysVI | A cVAE-based method using VampPrior and cycle-consistency. | Integrating datasets with substantial batch effects (e.g., cross-species) [12]. |
| ComBat-ref | A refined batch effect correction method for count data using a reference batch. | Preparing data for differential expression analysis with high statistical power [20]. |
| Unique Molecular Identifiers (UMIs) | Molecular barcodes that label individual mRNA molecules. | Correcting for amplification bias and improving quantification accuracy [16] [19]. |
| SCTransform | A variance-stabilizing normalization method based on a regularized negative binomial model. | Normalizing data and removing technical variation due to sequencing depth [2]. |

Core Case Study: Experimental Investigation of Technical Variation

Background and Experimental Design

This case study is founded on research specifically designed to disentangle technical variability from biological variation in single-cell RNA-sequencing (scRNA-seq) of human induced pluripotent stem cell (iPSC) lines [5]. The experimental design involved collecting scRNA-seq data from iPSC lines of three genetically distinct Yoruba (YRI) individuals. Critically, the researchers performed three independent C1 microfluidic plate collections per individual, with each replicate accompanied by processing of a matching bulk sample using the same reagents [5]. This robust design enabled precise estimation of error and variability associated with technical processing independently from biological variation across individuals.

Detailed Methodology

Table: Experimental Protocol for Controlled Replicate Study

| Step | Description | Key Parameters |
|---|---|---|
| Cell Lines | iPSC lines from three YRI individuals (NA19098, NA19239, etc.) | Genetically distinct backgrounds |
| Replicate Design | Three independent C1 collections per individual | Technical replicates processed separately |
| Quality Control | Visual inspection of C1 plates + data-driven filtering | Flagged empty wells (21) and multiple-cell captures (54) |
| Sequencing | Fluidigm C1 platform with UMIs and ERCC spike-in controls | Average 6.3 ± 2.1 million reads per sample |
| Data Processing | Alignment, UMI counting, QC filtering | 564 high-quality single cells retained from initial collection |

The methodology incorporated both unique molecular identifiers (UMIs) to account for amplification bias and ERCC spike-in controls of known abundance [5]. Visual inspection of C1 microfluidic plates constituted a crucial quality control step, with 21 samples flagged as containing no cell and 54 samples containing more than one cell [5].

Key Findings and Quantitative Results

The study revealed several critical findings regarding technical variation in scRNA-seq experiments:

Table: Key Quantitative Findings from Controlled Replicate Study

| Finding | Metric | Implication |

|---|---|---|

| Read-to-Molecule Correlation | Endogenous genes: r = 0.92; ERCC spikes: r = 0.99 | UMIs essential for accurate quantification |

| Sufficient Sequencing Depth | ~1.5 million reads/cell (~50,000 molecules) | Enabled detection of >6,000 genes |

| Bulk Correlation | Pearson coefficient = 0.8 for bottom 50% expressed genes | Single-cell expression profiles recapitulated bulk data |

| Sample Quality | 564 high-quality samples retained from initial collection | Stringent QC necessary for reliable data |

The research demonstrated that while gene-specific reads and molecule counts were highly correlated for ERCC spike-in data (r = 0.99), this correlation was lower for endogenous genes (r = 0.92), particularly for genes expressed at lower levels [5]. This underscores the importance of using UMIs in single-cell gene expression studies.
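
The distinction between read counts and molecule counts can be illustrated with a minimal sketch. It assumes reads have already been aligned and reduced to hypothetical (cell, gene, UMI) tuples, and it ignores UMI sequencing errors, which production pipelines usually collapse:

```python
from collections import defaultdict

def count_reads_and_molecules(reads):
    """Count reads and unique molecules per (cell, gene).

    reads: iterable of (cell, gene, umi) tuples, one tuple per aligned read.
    """
    read_counts = defaultdict(int)
    umis_seen = defaultdict(set)
    for cell, gene, umi in reads:
        read_counts[(cell, gene)] += 1        # every read counts once
        umis_seen[(cell, gene)].add(umi)      # duplicates of a UMI collapse
    molecule_counts = {key: len(umis) for key, umis in umis_seen.items()}
    return dict(read_counts), molecule_counts

# Hypothetical toy data: one NANOG molecule amplified three times,
# plus a second NANOG molecule and a single POU5F1 molecule.
toy_reads = [
    ("cell1", "NANOG", "AACG"),
    ("cell1", "NANOG", "AACG"),
    ("cell1", "NANOG", "AACG"),
    ("cell1", "NANOG", "TTGA"),
    ("cell1", "POU5F1", "CCAT"),
]
read_counts, molecule_counts = count_reads_and_molecules(toy_reads)
```

In this toy input, NANOG receives four reads but only two molecules, which is the kind of amplification-driven inflation that UMI counting removes.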

Troubleshooting Guide: Common Experimental Challenges

iPSC Culture and Differentiation Issues

Problem: Excessive differentiation (>20%) in cultures

- Solution: Ensure complete cell culture medium is less than 2 weeks old; remove differentiated areas prior to passaging; avoid having culture plates out of incubator for >15 minutes; ensure even cell aggregate size during passaging [21].

Problem: Poor differentiation efficiency

- Solution: Use H9 or H7 ESC line as control; adjust cell density or extend induction time for difficult-to-differentiate iPSC lines [22].

Problem: Low cell attachment after plating

- Solution: Plate 2-3 times higher number of cell aggregates initially; reduce incubation time with passaging reagents; use correct plate type for coating matrix [21].

scRNA-seq Quality Control Challenges

Problem: High mitochondrial read percentage

- Solution: Calculate the fraction of counts from mitochondrial genes and filter cells with excessive mitochondrial content, which indicates broken membranes and cell death [23]. Filtering via median absolute deviations (MAD) is recommended, marking a cell as an outlier if its value differs from the median by more than 5 MADs [23].
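
A minimal sketch of the MAD rule described above, assuming a per-cell vector of mitochondrial percentages is already available. The function name and the toy values are illustrative, not taken from any specific package:

```python
import numpy as np

def is_mad_outlier(values, n_mads=5.0):
    """Flag values lying more than n_mads median absolute deviations from the median."""
    values = np.asarray(values, dtype=float)
    median = np.median(values)
    mad = np.median(np.abs(values - median))
    # Note: if mad is 0, every value that differs from the median is flagged.
    return np.abs(values - median) > n_mads * mad

# Hypothetical mitochondrial percentages for eight cells; the last one is damaged.
pct_mito = np.array([2.0, 3.0, 2.5, 3.5, 4.0, 2.8, 3.2, 45.0])
outliers = is_mad_outlier(pct_mito)
```

Because the threshold is derived from the data, it adapts to cell types with naturally higher mitochondrial content better than a fixed cutoff would.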

Problem: Low number of detected genes per cell

- Solution: Establish minimum thresholds based on experimental system; typically filter cells with <500-1000 UMIs; consider cell type complexity when setting thresholds [24].

Problem: Doublet detection

- Solution: While not always included in standard QC, tools like Scrublet can identify doublets; however, exercise caution as they may remove cells with intermediate phenotypes [24].

Computational Methods for Batch Effect Management

Advanced Integration Strategies

Substantial batch effects arising from different biological systems (e.g., species, organoids vs primary tissue) or technologies (e.g., single-cell vs single-nuclei RNA-seq) present particular challenges. Current research demonstrates that conventional cVAE-based methods struggle with these substantial batch effects [12] [13].

Table: Comparison of Batch Effect Correction Methods

| Method | Mechanism | Advantages | Limitations |

|---|---|---|---|

| KL Regularization | Adjusts how much embeddings deviate from Gaussian distribution | Standard in cVAE architecture; easy to implement | Removes biological and technical variation indiscriminately |

| Adversarial Learning | Aligns batch distributions in latent space | Actively pushes together cells from different batches | May mix embeddings of unrelated cell types |

| sysVI (VAMP + CYC) | Combines VampPrior and cycle-consistency constraints | Preserves biological signals while improving integration | More complex implementation |

| GLUE | Uses adversarial learning with graph-based framework | Among best-performing in benchmarks | Can mix cell types with unbalanced proportions |

The recently proposed sysVI method employs VampPrior and cycle-consistency constraints to improve integration across challenging datasets while preserving biological signals [12] [13]. This approach specifically addresses the limitations of existing methods that either remove biological information (KL regularization) or artificially mix cell types (adversarial learning).

Research Reagent Solutions

Table: Essential Research Reagents for iPSC scRNA-seq Studies

| Reagent Category | Specific Examples | Function | Considerations |

|---|---|---|---|

| Culture Media | mTeSR Plus, Essential 8 Medium, StemFlex Medium | Supports pluripotent stem cell growth | Monitor expiration; prepare fresh aliquots |

| Passaging Reagents | ReLeSR, Gentle Cell Dissociation Reagent, EDTA | Dissociates cells while maintaining viability | Optimize incubation time for specific cell lines |

| Matrices | Geltrex, Matrigel, Vitronectin XF, Laminin-521 | Provides surface for cell attachment | Use tissue culture-treated plates appropriately |

| Inhibitors | ROCK inhibitor Y-27632, RevitaCell Supplement | Enhances cell survival after passaging/thawing | Use at 10μM for overnight treatment |

| QC Tools | ERCC spike-in controls, UMIs | Monitors technical variation | Include in library preparation |

| Cryopreservation | CRYOSTEM, DMSO with FBS | Long-term cell storage | Use controlled-rate freezing |

FAQs: Addressing Common Researcher Questions

Q: How can I determine if my scRNA-seq data has substantial batch effects? A: For each cell type, compare the distances between samples within a single system (dataset) with the distances between samples from different systems. If the between-system distances are markedly larger than the within-system distances, the batch effects are substantial and call for specialized integration methods [12].

Q: What are the minimum QC thresholds for scRNA-seq data? A: While thresholds vary by experiment, general guidelines include: minimum 500-1000 UMIs/cell, detection of 300+ genes/cell, and mitochondrial ratio below 20% [23] [24]. However, these should be adjusted based on biological expectations.
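
As an illustration only, the general guidelines above can be written as a simple per-cell filter. The default thresholds below are the ones quoted in the answer and should be treated as starting points, and the cell metrics are hypothetical:

```python
def passes_qc(n_umis, n_genes, pct_mito,
              min_umis=500, min_genes=300, max_pct_mito=20.0):
    """Return True if a cell meets all three guideline thresholds."""
    return (n_umis >= min_umis
            and n_genes >= min_genes
            and pct_mito < max_pct_mito)

# Hypothetical per-cell QC metrics.
cells = {
    "cell_A": {"n_umis": 4200, "n_genes": 1800, "pct_mito": 4.1},
    "cell_B": {"n_umis": 310, "n_genes": 150, "pct_mito": 6.0},    # too few UMIs/genes
    "cell_C": {"n_umis": 2500, "n_genes": 900, "pct_mito": 35.0},  # high mitochondrial ratio
}
kept = [name for name, metrics in cells.items() if passes_qc(**metrics)]
```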

Q: How many replicates are sufficient for technical variation studies? A: The case study utilized three independent C1 collections per cell line, providing robust estimation of technical variability [5]. The exact number depends on cost constraints and desired statistical power.

Q: Can I combine data from different scRNA-seq platforms? A: Yes, but this creates substantial batch effects requiring advanced integration methods like sysVI. Performance should be carefully evaluated using metrics like iLISI and NMI [13].

Q: How does the use of UMIs improve data quality? A: UMIs account for amplification bias by counting molecules rather than reads, substantially reducing technical variability and providing more accurate gene expression estimates [5].

The Correction Toolbox: A Practical Guide to Batch Effect Integration Methods

In single-cell RNA sequencing (scRNA-seq) research, particularly in stem cell studies, batch effects present a significant challenge. These technical variations, introduced by different machines, handling personnel, or reagent lots, can obscure true biological signals and lead to spurious conclusions [25] [26]. Effective batch effect correction is crucial for integrating datasets and revealing accurate cellular heterogeneity, differentiation trajectories, and novel cell states. This guide demystifies three major computational approaches for batch correction: Mutual Nearest Neighbors (MNN), Deep Learning, and Matrix Factorization, providing troubleshooting and implementation FAQs specifically for stem cell scRNA-seq datasets.

Understanding the Core Methodologies

What is the Mutual Nearest Neighbors (MNN) Approach?

Mutual Nearest Neighbors (MNN) is a powerful strategy for identifying and correcting batch effects by finding pairs of cells across different batches that are biologically similar.

Core Principle: The fundamental assumption is that at least some cell types are shared across batches. MNN identifies "anchor" cell pairs in which a cell in one batch is the nearest neighbor of a cell in another batch, and vice versa. The expression differences between these mutual neighbors are treated as technical batch effects, which can then be corrected [27] [17].

Key Implementation: The original MNNCorrect operates in high-dimensional gene expression space, but this can be computationally intensive. Subsequent methods like fastMNN and Scanorama perform the MNN search in a lower-dimensional subspace (e.g., PCA) to improve speed and efficiency [17]. Seurat's integration method (V3+) also uses a related concept, finding "integration anchors" in a subspace created by Canonical Correlation Analysis (CCA) [17].
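
The mutual-neighbor idea can be sketched in a few lines of NumPy. This is a simplified illustration, not the MNNCorrect or fastMNN implementation: it uses brute-force distances and applies a single global correction vector, whereas published methods compute locally weighted corrections. All names and data are hypothetical:

```python
import numpy as np

def mutual_nearest_neighbors(batch_a, batch_b, k=1):
    """Return (i, j) index pairs where a_i and b_j are in each other's k nearest neighbors."""
    # Pairwise squared Euclidean distances, shape (n_a, n_b).
    d = ((batch_a[:, None, :] - batch_b[None, :, :]) ** 2).sum(axis=2)
    nn_a_to_b = np.argsort(d, axis=1)[:, :k]    # for each cell in A: nearest cells in B
    nn_b_to_a = np.argsort(d, axis=0)[:k, :].T  # for each cell in B: nearest cells in A
    pairs = []
    for i in range(batch_a.shape[0]):
        for j in nn_a_to_b[i]:
            if i in nn_b_to_a[j]:
                pairs.append((i, int(j)))
    return pairs

def mean_correction_vector(batch_a, batch_b, pairs):
    """Average expression difference (A minus B) across the MNN pairs."""
    diffs = np.array([batch_a[i] - batch_b[j] for i, j in pairs])
    return diffs.mean(axis=0)

# Toy embedding: batch B is batch A shifted by a technical offset,
# plus one population that exists only in batch B.
batch_a = np.array([[0.0, 0.0], [10.0, 10.0]])
batch_b = np.array([[1.0, 0.5], [11.0, 10.5], [50.0, 50.0]])

pairs = mutual_nearest_neighbors(batch_a, batch_b, k=1)
corrected_b = batch_b + mean_correction_vector(batch_a, batch_b, pairs)
```

The batch-specific population at (50, 50) forms no mutual pair, so it does not contribute to the estimated batch vector. This is why MNN approaches tolerate differences in cell type composition between batches.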

Diagram: Workflow of a standard MNN-based correction method.

How Do Deep Learning Models Correct Batch Effects?

Deep learning approaches use neural networks to learn complex, non-linear representations of scRNA-seq data that are invariant to technical batches.

Core Principle: These models, such as autoencoders, learn to compress gene expression data into a low-dimensional "bottleneck" layer (the embedding) and then reconstruct the data from this layer. The network is trained so that this embedding contains all biological information but is stripped of batch-specific technical noise [28].

Key Variants:

- Variational Autoencoders (VAEs): Used in methods like scGen and scVI, they learn a distribution of the latent space, which can be beneficial for modeling uncertainty and generating data [28] [17].

- Residual Neural Networks: Used in deepMNN, this approach stacks residual blocks to transform the data, using a loss function that minimizes distances between MNN pairs in a PCA subspace while preserving the original data structure through regularization [27].

- Deep Metric Learning: Used in scDML, this method uses "triplet loss" to learn an embedding where cells of the same type are pulled closer together and cells of different types are pushed apart, effectively removing batch effects [7].
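
To make the triplet loss idea concrete, here is a generic sketch in NumPy. It shows the standard margin-based formulation and is not claimed to match scDML's exact loss or training procedure; the embeddings are hypothetical:

```python
import numpy as np

def triplet_loss(anchor, positive, negative, margin=1.0):
    """Margin-based triplet loss on Euclidean distances.

    The loss is zero once the negative is at least `margin` farther
    from the anchor than the positive is.
    """
    d_pos = np.linalg.norm(anchor - positive)
    d_neg = np.linalg.norm(anchor - negative)
    return float(max(0.0, d_pos - d_neg + margin))

anchor = np.array([0.0, 0.0])          # e.g., a pluripotent cell in batch 1
same_type = np.array([0.0, 1.0])       # same cell type, different batch
other_type = np.array([3.0, 4.0])      # different cell type

loss_good = triplet_loss(anchor, same_type, other_type)  # well-separated embedding
loss_bad = triplet_loss(anchor, other_type, same_type)   # roles swapped: penalized
```

Choosing positives from the same cell type in a different batch is what ties the metric-learning objective to batch removal: the embedding is rewarded for ignoring batch while keeping types apart.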

What is Matrix Factorization's Role in Batch Integration?

Matrix factorization techniques decompose the high-dimensional gene expression matrix into lower-dimensional factors that represent biological and technical sources of variation.

Core Principle: Methods like LIGER use integrative non-negative matrix factorization (iNMF) to factorize multiple datasets simultaneously. This generates two sets of factors: shared factors (representing common biological features across batches) and dataset-specific factors (representing batch-specific technical variations) [17]. Batch correction is achieved by using only the shared factors for downstream analysis.

Key Implementation: LIGER does not force a complete alignment of batches. It aims to distinguish biological and technical variations, which can be advantageous when batches contain legitimate biological differences alongside technical artifacts [17].
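
For readers who prefer a formula, the iNMF objective is commonly written as below. The symbols are introduced here for illustration and are not defined elsewhere in this guide: $E_i$ is the cells-by-genes expression matrix of dataset $i$, $H_i$ holds the per-cell factor loadings, $W$ the shared factors, $V_i$ the dataset-specific factors, and $\lambda$ a tuning parameter that penalizes the dataset-specific component.

$$
\min_{W,\, V_i,\, H_i \,\ge\, 0} \; \sum_{i=1}^{k} \left\lVert E_i - H_i \left( W + V_i \right) \right\rVert_F^2 \;+\; \lambda \sum_{i=1}^{k} \left\lVert H_i V_i \right\rVert_F^2
$$

Under this formulation, a larger $\lambda$ shrinks the dataset-specific terms and pushes more of the signal into the shared factors, which corresponds to stronger alignment of batches.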

Performance Comparison and Selection Guide

The table below summarizes a comprehensive benchmark of these methods across key performance criteria, based on large-scale evaluation studies [17] [7].

Table 1: Benchmarking Batch Effect Correction Methods

| Method (Example) | Method Category | Key Strength | Preservation of Rare Cell Types | Scalability to Large Datasets | Handling of Multiple Batches | Runtime Efficiency |

|---|---|---|---|---|---|---|

| Harmony [17] | Mixed (PCA + clustering) | Fast, good overall performance | Good | Excellent | Excellent | Excellent |

| Scanorama [17] | MNN | Effective integration, handles multiple batches | Good | Very Good | Excellent | Very Good |

| Seurat V3/V4 [27] [17] | MNN (CCA-based) | Popular, well-integrated workflow | Good | Good | Excellent | Good |

| LIGER [17] | Matrix Factorization | Distinguishes biological vs. technical variation | Fair | Good | Excellent | Good |

| scGen [17] | Deep Learning (VAE) | Supervised, requires cell type labels | Fair | Fair | Requires reference | Fair |

| deepMNN [27] | Deep Learning (ResNet) | Powerful non-linear correction, uses MNN loss | Very Good (per authors) | Excellent (per authors) | Excellent | Excellent (per authors) |

| scDML [7] | Deep Learning (Metric) | Excellent at preserving rare cell types | Excellent | Excellent | Excellent | Very Good |

Troubleshooting FAQs for Stem Cell Researchers

General Integration Challenges

Q: After integration, my stem cell populations are overly mixed and I can no longer distinguish between pluripotent and early differentiated states. What went wrong?

A: This indicates potential over-correction, where the batch effect method has removed biological variation along with technical noise.

- Solution 1: Adjust the method's parameters. For instance, in methods like Harmony or LIGER, reduce the strength of the correction or integration parameter.

- Solution 2: Switch to a method known for better biological conservation. Benchmark studies suggest scDML is particularly strong at preserving subtle cell types [7], while LIGER is designed to retain biological variation distinct from batch effects [17].

- Solution 3: Validate with known marker genes. Check the expression of well-established stem cell markers (e.g., POU5F1/OCT4, NANOG) in the integrated data to ensure their expression patterns are consistent with biology.

Q: My batches are not integrating well; they remain separate in the UMAP visualization. Is the method failing?

A: This indicates under-correction.

- Solution 1: Ensure your pre-processing is consistent. Use the same normalization and highly variable gene selection method across all batches before integration [29].

- Solution 2: Check for severe batch effects. If the batches were generated with vastly different technologies, they might not be suitable for integration. Consider using a method designed for strong correction, like a deep learning approach (e.g., deepMNN [27]).

- Solution 3: Verify the method's compatibility. Some older MNN methods were designed for two batches. Use methods that explicitly handle multiple batches, such as Harmony, Scanorama, Seurat V4, or scDML [27] [17] [7].

Method-Specific Issues

Q: When using an MNN-based method (e.g., MNNCorrect, fastMNN), the integration result changes depending on the order I input the batches. Why?

A: This is a known limitation of some early MNN implementations, which correct batches in a pairwise, sequential manner. The result can be influenced by which batch is used as the reference.

- Solution: Use more advanced methods that perform batch integration in a single, collective step. Harmony, Scanorama, and deepMNN are explicitly designed to correct multiple batches simultaneously without order dependence [27] [17].

Q: I am using a deep learning model like scVI, but the training is unstable or the results are poor. How can I improve this?

A: Deep learning models are sensitive to hyperparameters and data quality.

- Solution 1: Increase training data size. Deep learning models typically require a substantial number of cells to learn effectively. If your dataset is small, consider using a simpler method.

- Solution 2: Check for over-denoising. Some VAEs can "fill in" too many dropouts (zero counts), potentially distorting the biology. If you suspect this, try a different method like scDML or deepMNN which use different loss functions [27] [7].

- Solution 3: Ensure consistent pre-processing. As with all methods, normalize and scale the data uniformly across batches.

Essential Research Reagent Solutions

The following table lists key computational "reagents" – the algorithms and packages that are essential for implementing these batch correction strategies.

Table 2: Key Computational Tools for Batch Effect Correction

| Tool Name | Method Category | Primary Function | Programming Language | Key Application Context |

|---|---|---|---|---|

| fastMNN [17] | MNN | Fast batch correction using MNN in PCA space. | R | Efficient integration of datasets with identical cell types. |

| Seurat [29] [17] | MNN (CCA & PCA) | A comprehensive toolkit for single-cell analysis, including integration. | R | General-purpose scRNA-seq analysis with robust integration capabilities. |

| Scanorama [17] | MNN | Panoramic stitching of batches for scalable integration. | Python | Integrating large numbers of diverse batches. |

| Harmony [17] | Mixed | Iterative clustering and correction for efficient integration. | R | Fast and effective integration, recommended as a first try. |

| LIGER [17] | Matrix Factorization | Integrative NMF to factorize shared and dataset-specific factors. | R | When seeking to distinguish biological from technical variation. |

| scVI [17] | Deep Learning (VAE) | Probabilistic modeling and batch correction using a VAE. | Python | Complex integration tasks and downstream analysis with uncertainty. |

| scGen [17] | Deep Learning (VAE) | Supervised batch correction and perturbation response prediction. | Python | When cell type labels are available and can be used for guidance. |

| deepMNN [27] | Deep Learning (ResNet) | Batch correction using residual networks guided by MNN pairs. | Python (PyTorch) | Large-scale data integration with high performance. |

| scDML [7] | Deep Learning (Metric) | Batch alignment and rare cell type preservation via metric learning. | Python (PyTorch) | Projects where preserving subtle cell states (e.g., stem cell progenitors) is critical. |

Recommended Experimental Protocol for Stem Cell Datasets

Based on benchmark studies and methodological advances, here is a recommended step-by-step protocol for benchmarking batch correction methods on your stem cell scRNA-seq data:

- Pre-processing: Normalize (e.g., log(TPM+1) or SCTransform) and scale the data for each batch separately. Identify highly variable genes consistently across batches [27] [29].

- Initial Run with Harmony: Given its speed and robust performance in benchmarks [17], run Harmony on your pre-processed data using default parameters.

- Visual Inspection: Generate UMAP plots colored by batch and by cell type (if known). Assess if batches are mixed and if biologically distinct stem cell states remain separate.

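- Quantitative Evaluation (If Ground Truth is Available): With known cell type labels, compute batch-mixing metrics (e.g., iLISI, kBET) alongside biological conservation metrics (e.g., ARI, NMI).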

- Iterate and Compare: If results from Harmony are unsatisfactory (e.g., over-mixing of states or under-mixing of batches), test other methods. A strong candidate is scDML for its exceptional ability to preserve rare cell types [7], or Scanorama for robust multi-batch integration [17].

- Biological Validation: The final check must always be biological. Ensure that key marker genes for your stem cell system show expected expression patterns in the integrated data and that differentiation trajectories appear plausible.

Diagram: Summary of the recommended workflow.

Frequently Asked Questions

Q1: Based on recent benchmarks, which integration methods consistently perform best for complex single-cell datasets?

Several independent benchmarking studies have identified a consistent group of top-performing methods for single-cell RNA-seq data integration. According to a large-scale benchmark evaluating 68 method and preprocessing combinations across 85 batches, scANVI, Scanorama, scVI, and scGen performed particularly well on complex integration tasks [30]. Another major benchmark focusing on atlas-level data integration found that Harmony, scVI, and Scanorama achieved the best balance between batch effect removal and biological conservation [30]. For cross-species integration specifically, which presents particularly substantial batch effects, scANVI, scVI, and SeuratV4 methods achieved the best balance between species-mixing and biology conservation [31].

Q2: What are the key limitations of popular integration methods I should be aware of?

Each method has specific limitations that may affect your choice depending on your data characteristics and computational resources:

- Seurat Integration: Can be computationally intensive and memory-intensive for large datasets, requiring careful parameter tuning [2].

- scVI/scANVI: Demand significant computational resources and familiarity with deep learning frameworks; scANVI requires GPU acceleration for efficiency [2].

- Harmony: Has limited native visualization tools and requires integration with other packages for comprehensive visualization [2].

- LIGER: Requires choosing a reference dataset (typically the set with the largest number of cells), which may introduce biases [7].

Q3: My dataset has substantial batch effects across different species and technologies. Which method is most suitable?

For substantial batch effects such as cross-species, organoid-tissue, or single-cell/single-nuclei integrations, recent research recommends sysVI, a cVAE-based method employing VampPrior and cycle-consistency constraints [13]. This approach specifically addresses the limitations of standard cVAE models that struggle with substantial batch effects. When integrating whole-body atlases between species with challenging gene homology annotation, SAMap has demonstrated superior performance despite being computationally intensive [31].

Q4: What metrics should I use to evaluate the success of batch correction in my stem cell dataset?

A comprehensive evaluation should include both batch effect removal and biological conservation metrics:

Table: Key Metrics for Evaluating Batch Correction Performance

| Metric Category | Specific Metrics | What It Measures |

|---|---|---|

| Batch Effect Removal | kBET (k-nearest neighbor Batch Effect Test) [2] | Whether batch proportions in local neighborhoods match expected proportions |

| Batch Effect Removal | iLISI (graph integration local inverse Simpson's Index) [13] [30] | Batch mixing in local neighborhoods |

| Batch Effect Removal | ASW_batch (Average Silhouette Width) [7] | Batch separation using silhouette widths |

| Biological Conservation | ARI (Adjusted Rand Index) [7] [31] | Similarity between clustering results and known cell type annotations |

| Biological Conservation | NMI (Normalized Mutual Information) [13] [7] | Mutual information between clustering and known annotations |

| Biological Conservation | ASW_celltype (Average Silhouette Width) [7] | Cell type separation using silhouette widths |

| Biological Conservation | ALCS (Accuracy Loss of Cell type Self-projection) [31] | Preservation of cell type distinguishability after integration |
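
To build intuition for the local batch-mixing metrics, the sketch below computes an inverse Simpson's index over each cell's k nearest neighbors. It is a deliberately simplified stand-in: the published LISI uses perplexity-based neighborhood weights, and benchmark implementations of iLISI are graph-based and rescaled. The function name and toy embeddings are hypothetical:

```python
import numpy as np

def inverse_simpson_batch_mixing(embedding, batches, k=2):
    """Mean inverse Simpson's index of batch labels among each cell's k nearest neighbors.

    Returns 1.0 when neighborhoods contain a single batch and approaches
    the number of batches when neighborhoods are evenly mixed.
    """
    embedding = np.asarray(embedding, dtype=float)
    batches = np.asarray(batches)
    d = ((embedding[:, None, :] - embedding[None, :, :]) ** 2).sum(axis=2)
    np.fill_diagonal(d, np.inf)  # a cell is not its own neighbor
    scores = []
    for i in range(len(embedding)):
        neighbors = np.argsort(d[i])[:k]
        _, counts = np.unique(batches[neighbors], return_counts=True)
        p = counts / counts.sum()
        scores.append(1.0 / np.sum(p ** 2))
    return float(np.mean(scores))

batches = ["A"] * 4 + ["B"] * 4
# Well-integrated toy embedding: each batch-A cell sits next to a batch-B cell.
mixed = [(x, 0.0) for x in range(4)] + [(x, 0.5) for x in range(4)]
# Unintegrated toy embedding: the two batches are far apart.
separated = [(x, 0.0) for x in range(4)] + [(x + 100, 0.0) for x in range(4)]

score_mixed = inverse_simpson_batch_mixing(mixed, batches, k=2)
score_separated = inverse_simpson_batch_mixing(separated, batches, k=2)
```

A high score alone does not indicate success, since an over-corrected embedding also mixes batches well. It should always be read together with a biological conservation metric.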

Q5: How does Harmony's approach differ from Seurat's, and when would I choose one over the other?

Harmony and Seurat employ fundamentally different integration strategies:

Table: Comparison of Harmony and Seurat Integration Methods

| Characteristic | Harmony | Seurat Integration |

|---|---|---|

| Core Methodology | Iterative clustering and correction in low-dimensional embedding space [2] | Canonical Correlation Analysis (CCA) and Mutual Nearest Neighbors (MNN) [2] |

| Primary Output | Corrected embedding [32] | Corrected count matrix or embedding [32] |

| Computational Efficiency | Fast and scalable to millions of cells [2] | Computationally intensive for large datasets [2] |

| Strengths | Excellent batch mixing while preserving biological variation [32] [2] | High biological fidelity and seamless integration with Seurat's comprehensive toolkit [2] |

| Ideal Use Case | Large-scale atlas projects with multiple batches [30] | Studies requiring careful cell type distinction and full Seurat workflow integration [2] |

Troubleshooting Guides

Poor Integration Results After Running Batch Correction

Problem: After running batch correction, your stem cell datasets still show strong batch separation, or biological variation has been over-corrected.

Solutions:

- Increase integration strength: For methods like Harmony, adjust parameters to increase integration strength. For scVI-based methods, consider sysVI which adds cycle-consistency constraints for challenging integrations [13].

- Check feature selection: Highly variable gene selection significantly improves performance of data integration methods. Re-evaluate your HVG selection strategy [30].

- Try multiple methods: If one method fails, switch to an alternative top-performing approach. Benchmarks show that performance can vary by dataset characteristics [30] [31].

- Validate with appropriate metrics: Use both batch removal (iLISI, kBET) and biology conservation (ALCS, ARI) metrics to ensure you're not over-correcting [13] [31].
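
As an example of a biology conservation check, the Adjusted Rand Index can be computed directly from its pair-counting definition. The sketch below uses only the standard library, and the labels are hypothetical; in practice an established implementation from a metrics library would normally be used:

```python
from collections import Counter
from math import comb

def adjusted_rand_index(labels_true, labels_pred):
    """Adjusted Rand Index between known annotations and cluster assignments."""
    n = len(labels_true)
    contingency = Counter(zip(labels_true, labels_pred))
    sum_cells = sum(comb(c, 2) for c in contingency.values())
    sum_rows = sum(comb(c, 2) for c in Counter(labels_true).values())
    sum_cols = sum(comb(c, 2) for c in Counter(labels_pred).values())
    expected = sum_rows * sum_cols / comb(n, 2)
    max_index = 0.5 * (sum_rows + sum_cols)
    if max_index == expected:  # degenerate case: both labelings are trivial
        return 1.0
    return (sum_cells - expected) / (max_index - expected)

cell_types = ["pluripotent"] * 3 + ["differentiated"] * 3
clusters_preserved = [1, 1, 1, 0, 0, 0]      # clustering still separates the two states
clusters_overcorrected = [0, 0, 0, 0, 0, 0]  # states merged into a single cluster

ari_preserved = adjusted_rand_index(cell_types, clusters_preserved)
ari_overcorrected = adjusted_rand_index(cell_types, clusters_overcorrected)
```

A drop in ARI after integration, relative to clustering the uncorrected data, is one quantitative warning sign that biologically distinct states have been merged.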

Excessive Mixing of Different Cell Types

Problem: After batch correction, distinct cell types in your stem cell dataset are becoming improperly mixed together.

Solutions:

- Reduce integration strength: Over-correction can mix biologically distinct populations. Decrease integration strength parameters in your chosen method.

- Use scDML for rare cell type preservation: For datasets where preserving subtle cell types is crucial, consider scDML which uses deep metric learning to preserve rare populations while removing batch effects [7].

- Apply biology-conscious methods: Methods like scANVI that can incorporate cell type annotations may better preserve biological variation [30] [31].

- Check for unbalanced cell types: Adversarial methods may incorrectly mix cell types with unbalanced proportions across batches. Consider using reference-based approaches instead [13].

Computational Performance and Memory Issues

Problem: Batch correction methods are running too slowly or exceeding available memory with your large stem cell dataset.

Solutions:

- For very large datasets (>1M cells): Use Scanorama or scVI which scale well to large datasets [30].

- For memory-constrained environments: BBKNN is fast and lightweight, though may be less effective for strong batch effects [2].

- Leverage GPU acceleration: Methods like scVI and scANVI can utilize GPU acceleration to significantly speed up computation [2].

- Subsample strategically: For method testing and parameter optimization, use subsampled data before running on full datasets.
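
One way to subsample without dropping small batches entirely is to sample within each batch and enforce a minimum number of cells. The following is a sketch under that assumption. The function name, the fraction, and the minimum are illustrative choices, not recommendations from the cited benchmarks:

```python
import random
from collections import defaultdict

def subsample_per_batch(cell_ids, batch_labels, fraction=0.1, min_cells=50, seed=0):
    """Randomly keep a fraction of cells per batch, with a per-batch minimum."""
    rng = random.Random(seed)  # fixed seed so the subset is reproducible
    by_batch = defaultdict(list)
    for cell, batch in zip(cell_ids, batch_labels):
        by_batch[batch].append(cell)
    sampled = []
    for cells in by_batch.values():
        n_keep = min(len(cells), max(min_cells, int(len(cells) * fraction)))
        sampled.extend(rng.sample(cells, n_keep))
    return sampled

# Hypothetical dataset: one large batch and one small batch.
cell_ids = [f"b1_{i}" for i in range(1000)] + [f"b2_{i}" for i in range(100)]
batch_labels = ["batch1"] * 1000 + ["batch2"] * 100
subset = subsample_per_batch(cell_ids, batch_labels)
```

If cell type annotations exist, stratifying by cell type as well helps keep rare populations in the test subset. Random sampling by batch alone can still lose them.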

Experimental Protocols

Standardized Workflow for Method Evaluation and Selection

Diagram: Comprehensive workflow for evaluating and selecting batch correction methods.

Step-by-Step Protocol for Comparative Method Benchmarking

Research Reagent Solutions & Computational Tools:

- Single-cell analysis toolkit: Seurat (R) or Scanpy (Python) for foundational data processing [2]

- Batch correction methods: Install Harmony, scVI/scANVI, Seurat, LIGER following official documentation [33]

- Evaluation framework: scIB Python module for standardized metric calculation [30]

- Visualization tools: Uniform Manifold Approximation and Projection (UMAP) for visual assessment [7]

Procedure:

- Data Preprocessing: Normalize your single-cell data using standard log-normalization or SCTransform, then select 2000-5000 highly variable genes [30] [2].

- Method Configuration: Set up each integration method with appropriate parameters:

- Harmony: Use default parameters initially, then adjust the theta (diversity clustering penalty) and lambda (ridge regression penalty) parameters if needed

- scVI: Train with default architecture (128 hidden nodes, 2 layers, 10-dimensional latent space)

- Seurat: Use 2000 integration features and 30 dimensions for CCA

- LIGER: Use suggested parameters with 20-30 factors [31]

- Integration Execution: Run each method on your stem cell dataset, saving both corrected embeddings and any corrected count matrices.

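- Comprehensive Evaluation: Calculate batch effect removal metrics (e.g., kBET, iLISI, ASW_batch) and biological conservation metrics (e.g., ARI, NMI, ASW_celltype, ALCS) for each method.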

- Visual Inspection: Generate UMAP plots colored by batch and cell type to visually assess integration quality.

- Method Selection: Choose the method that best balances batch removal and biological preservation for your specific research question.
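
The final selection step can be made explicit by combining the two metric families into one score. The sketch below assumes each family has already been averaged and scaled to the range 0 to 1. The 60/40 weighting toward biological conservation follows a convention used in large integration benchmarks and is an assumption that should be adapted to the research question. The method names and scores are hypothetical:

```python
def overall_score(batch_score, bio_score, bio_weight=0.6):
    """Weighted mean of biological conservation and batch removal scores (both in [0, 1])."""
    return bio_weight * bio_score + (1.0 - bio_weight) * batch_score

# Hypothetical aggregated scores for two candidate methods.
results = {
    "method_A": {"batch": 0.90, "bio": 0.60},  # mixes batches well, blurs cell states
    "method_B": {"batch": 0.70, "bio": 0.85},  # mixes less, preserves cell states
}
ranked = sorted(
    results,
    key=lambda m: overall_score(results[m]["batch"], results[m]["bio"]),
    reverse=True,
)
```

For studies focused on subtle transitional states, raising `bio_weight` makes the ranking favor methods that preserve those states even at the cost of some residual batch structure.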

Performance Reference Tables

Table: Benchmarking Results Across Integration Tasks (Based on [30])

| Method | Simple Integration Tasks | Complex Atlas Tasks | Scalability to >1M Cells | Recommended Preprocessing |

|---|---|---|---|---|

| Harmony | Excellent | Good | Yes [2] | HVG selection [30] |

| scVI | Good | Excellent | Yes [30] | Raw counts [30] |

| Scanorama | Good | Excellent | Yes [30] | HVG selection [30] |

| Seurat | Excellent | Good | Limited [2] | HVG selection & scaling [30] |

| LIGER | Good | Good | Moderate | Raw counts without scaling [30] |

Table: Cross-Species Integration Performance (Based on [31])

| Method | Species-Mixing Score | Biology Conservation | Annotation Transfer Accuracy | Recommended For |

|---|---|---|---|---|

| scANVI | High | High | High | When cell annotations are available |

| scVI | High | Medium-High | Medium-High | Unsupervised integration |

| SeuratV4 | Medium-High | Medium-High | Medium-High | General cross-species use |

| SAMap | Not quantified [31] | High | High | Distant species with poor homology |

Advanced Technical Considerations

Handling Substantial Batch Effects in Stem Cell Research

For challenging stem cell integrations involving substantial batch effects (e.g., different protocols, time points, or differentiation systems), consider these advanced approaches:

Diagram: Strategy for handling substantial batch effects in stem cell datasets.

Key Technical Considerations:

- Systems-Level Integration: For organoid-primary tissue comparisons or cross-species stem cell analysis, employ sysVI which specifically addresses limitations in standard cVAE models through VampPrior and cycle-consistency constraints [13].

- Gene Homology Mapping: For cross-species stem cell comparisons, optimize gene homology mapping by including in-paralogs for evolutionarily distant species, not just one-to-one orthologs [31].

- Avoid Over-Correction: Use the ALCS metric to detect whether integration is blurring biologically distinct stem cell states, which is particularly important for capturing subtle transitional states in differentiation processes [31].

- Iterative Approach: For building stem cell atlases, plan for an iterative integration process where new datasets can be added without requiring complete reprocessing of existing data [2].

FAQ: Core Concepts and Method Selection

Q1: What distinguishes "substantial" batch effects from milder technical variations? Substantial batch effects arise from major biological or technical confounders, such as integrating data across different species, between in vitro models (like organoids) and primary tissue, or from fundamentally different sequencing protocols (e.g., single-cell vs. single-nuclei RNA-seq). These effects are characterized by significantly greater variation between these systems than the variation observed between samples within the same system. In contrast, milder batch effects typically stem from technical replicates or samples processed in different laboratories but with similar underlying biology [12].
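
The within-system versus between-system comparison can be sketched as follows for a single cell type. It assumes each sample has been summarized by a centroid in a shared embedding, such as mean PCA coordinates. The sample names, coordinates, and function name are hypothetical:

```python
import numpy as np
from itertools import combinations

def within_vs_between_system_distance(centroids, system_of):
    """Mean distance between sample centroids within the same system vs across systems.

    centroids: dict mapping sample name -> centroid vector for one cell type
    system_of: dict mapping sample name -> system label (e.g., "organoid", "tissue")
    """
    within, between = [], []
    for s1, s2 in combinations(sorted(centroids), 2):
        dist = float(np.linalg.norm(np.asarray(centroids[s1]) - np.asarray(centroids[s2])))
        if system_of[s1] == system_of[s2]:
            within.append(dist)
        else:
            between.append(dist)
    return float(np.mean(within)), float(np.mean(between))

# Hypothetical centroids of one cell type in four samples from two systems.
centroids = {
    "organoid_1": [0.0, 0.0],
    "organoid_2": [0.0, 1.0],
    "tissue_1": [5.0, 0.0],
    "tissue_2": [5.0, 1.0],
}
system_of = {
    "organoid_1": "organoid", "organoid_2": "organoid",
    "tissue_1": "tissue", "tissue_2": "tissue",
}
within, between = within_vs_between_system_distance(centroids, system_of)
```

In this toy case the between-system distance is about five times the within-system distance, the pattern that characterizes a substantial batch effect. Repeating the comparison per cell type shows whether the effect is uniform or specific to some populations.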

Q2: When should I choose sysVI over scDML, and vice versa? The choice depends on your data characteristics and analysis goals. sysVI is a conditional Variational Autoencoder (cVAE)-based method that combines a VampPrior and latent cycle-consistency loss. It is particularly effective when you need to preserve fine-grained biological variation and perform downstream analysis on cell states and conditions after integration [34] [12]. scDML utilizes deep metric learning guided by initial clusters and nearest neighbor information. It excels in scenarios with rare cell types and when the goal is high clustering accuracy, as it is specifically designed to prevent the loss of subtle cell populations during integration [7] [35].

Q3: What are the definitive signs of over-correction in my integrated data? Over-correction occurs when batch effect removal also erases meaningful biological variation. Key signs include:

- Distinct cell types are clustered together in dimensionality reduction plots (e.g., UMAP) without a biological justification.

- A complete overlap of samples from very different biological conditions (e.g., healthy and diseased cells becoming indistinguishable).

- Cluster-specific marker genes are dominated by housekeeping genes (e.g., ribosomal genes) that lack cell-type specificity [4].
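The third sign can be screened for with a crude heuristic: count how many of a cluster's top marker genes look like ribosomal protein genes. The sketch below assumes human gene symbols with the usual RPS/RPL prefixes. The function names, the example marker lists, and the 0.5 threshold are hypothetical choices for illustration, not values from [4].

```python
import re

# Assumption: human symbols, ribosomal protein genes named RPS<n>/RPL<n>.
RIBOSOMAL = re.compile(r"^RP[SL]\d", re.IGNORECASE)

def ribosomal_fraction(markers):
    """Fraction of marker genes whose symbol looks like a ribosomal protein gene."""
    if not markers:
        return 0.0
    return sum(bool(RIBOSOMAL.match(g)) for g in markers) / len(markers)

def flag_suspect_clusters(markers_by_cluster, threshold=0.5):
    """Clusters whose top markers are mostly ribosomal genes."""
    return [
        cluster
        for cluster, markers in markers_by_cluster.items()
        if ribosomal_fraction(markers) >= threshold
    ]

# Hypothetical top markers per cluster after integration.
top_markers = {
    "cluster_0": ["POU5F1", "NANOG", "SOX2", "LIN28A"],
    "cluster_1": ["RPS27", "RPL13", "RPL10", "RPS6"],
}
suspects = flag_suspect_clusters(top_markers)
```

A flagged cluster is only a prompt for closer inspection. Some genuine cell states do show elevated ribosomal expression, so the result should be read alongside the other two signs.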

Troubleshooting Guides

sysVI-Specific Workflow and Troubleshooting

Experimental Protocol for sysVI

- Data Preparation: Start with normalized (to a fixed count per cell) and log-transformed data. Subset to highly variable genes (HVGs). For substantial batch effects, it is recommended to select HVGs per system and then take the intersection across systems to obtain ~2000 shared HVGs [34].

- Setup in scvi-tools: Use SysVI.setup_anndata() to specify the batch_key (which should represent the "system," e.g., species or technology) and any additional categorical covariates (e.g., ["batch"] within a system) [34].

- Model Initialization: Initialize the model with model = SysVI(adata). If you have many categorical covariates, set embed_categorical_covariates=True to reduce memory usage [34].

- Model Training: Train the model using model.train(). To use the recommended configuration, employ the VampPrior and latent cycle-consistency by setting plan_kwargs={"z_distance_cycle_weight": 5}. The number of epochs should be sufficient for the loss to stabilize (e.g., 200) [34].

- Obtain Embeddings: After training, generate the integrated latent representation using embed = model.get_latent_representation(adata) [34].
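The protocol above can be sketched as two small helpers. This is a minimal sketch under assumptions: the import path scvi.external.SysVI and the categorical_covariate_keys argument reflect our understanding of recent scvi-tools releases and should be checked against the installed version. The helper names, the default obs column names ("system", "batch"), and the toy gene lists are hypothetical.

```python
def shared_hvgs(hvgs_per_system):
    """Intersect per-system HVG lists, keeping the order of the first system."""
    lists = list(hvgs_per_system.values())
    common = set(lists[0]).intersection(*lists[1:])
    return [gene for gene in lists[0] if gene in common]

def run_sysvi(adata, system_key="system", covariate_keys=("batch",),
              max_epochs=200, cycle_weight=5.0):
    """Set up, train, and embed with sysVI; returns (model, latent embedding).

    Expects normalized, log-transformed data already subset to shared HVGs.
    """
    # Lazy import so shared_hvgs() works without scvi-tools installed.
    from scvi.external import SysVI  # assumed import path

    SysVI.setup_anndata(
        adata,
        batch_key=system_key,  # the "system", e.g. species or technology
        categorical_covariate_keys=list(covariate_keys),
    )
    model = SysVI(adata, embed_categorical_covariates=True)
    model.train(
        max_epochs=max_epochs,
        plan_kwargs={"z_distance_cycle_weight": cycle_weight},
    )
    return model, model.get_latent_representation(adata)

# Toy example of the HVG intersection step (hypothetical gene lists).
hvgs = shared_hvgs({
    "primary": ["Sox2", "Nanog", "Pou5f1", "Klf4"],
    "organoid": ["Nanog", "Klf4", "Sox2", "Myc"],
})
```

With a prepared AnnData object, a call such as model, embed = run_sysvi(adata[:, hvgs].copy()) would follow the four steps in order. The returned embedding can then be stored in adata.obsm for neighbor graph construction and UMAP.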

Troubleshooting Common sysVI Issues

| Problem | Possible Cause | Solution |

|---|---|---|

| Insufficient integration (batches still separate) | Cycle-consistency loss weight may be too low. | Increase z_distance_cycle_weight in plan_kwargs (a range of 2-10 is typical, but values up to 50 can be tested for strong effects) [34]. |

| Loss of biological signal (cell types blurring) | Cycle-consistency or KL loss weight is too high. | Decrease z_distance_cycle_weight or the kl_weight in plan_kwargs [34]. |

| Training instability or poor results | High sensitivity to random seed. | Run multiple models (e.g., 3) with different random seeds (scvi.settings.seed) and select the best performer [34]. |

| High memory usage | Many one-hot encoded categorical covariates. | Initialize the model with embed_categorical_covariates=True to embed categorical covariates instead of one-hot encoding them [34]. |

scDML-Specific Workflow and Troubleshooting

Experimental Protocol for scDML

- Preprocessing: Perform standard preprocessing (normalization, log1p transformation), identify highly variable genes, and scale the data. Conduct PCA to obtain an initial embedding [7].

- Initial Clustering: Perform graph-based clustering at a high resolution on the preprocessed data. This initial over-clustering is crucial to ensure all subtle and rare cell types are captured before integration [7].

- Cluster Merging and MNN: The algorithm uses k-nearest neighbor (KNN) and mutual nearest neighbor (MNN) information to build a similarity matrix between cell clusters. It then applies a merging criterion to optimize the final number of clusters, guided by the known or expected number of cell types [7].

- Deep Metric Learning: scDML uses the initial cluster information and MNN pairs to guide a deep metric learning model with triplet loss. This learns a low-dimensional embedding that pulls cells of the same type (from the same or different batches) closer together while pushing cells of different types apart, effectively removing batch effects [7] [35].
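To make the last two steps more concrete, the sketch below shows mutual nearest neighbor detection between two batches and a hinge-style triplet loss in plain NumPy. It is a simplified, cell-level illustration under our own assumptions, not the scDML implementation: scDML works with cluster-level similarities and trains a neural network, and the function names, margin, and toy data here are hypothetical.

```python
import numpy as np

def knn_indices(query, ref, k):
    """Indices of the k nearest ref points for each query point (Euclidean)."""
    d = np.linalg.norm(query[:, None, :] - ref[None, :, :], axis=2)
    return np.argsort(d, axis=1)[:, :k]

def mutual_nearest_neighbors(x_a, x_b, k=3):
    """Pairs (i, j): b_j is among a_i's k-NN in B and a_i is among b_j's k-NN in A."""
    a_to_b = knn_indices(x_a, x_b, k)
    b_to_a = knn_indices(x_b, x_a, k)
    return [
        (i, int(j))
        for i in range(len(x_a))
        for j in a_to_b[i]
        if i in b_to_a[j]
    ]

def triplet_loss(anchor, positive, negative, margin=1.0):
    """Mean of max(0, d(anchor, positive) - d(anchor, negative) + margin)."""
    d_pos = np.linalg.norm(anchor - positive, axis=1)
    d_neg = np.linalg.norm(anchor - negative, axis=1)
    return float(np.mean(np.maximum(0.0, d_pos - d_neg + margin)))

# Toy data: two cell types (5 cells each) in two batches; batch B is shifted.
rng = np.random.default_rng(0)
centers = np.array([[0.0, 0.0], [10.0, 10.0]])
batch_a = np.vstack([c + rng.normal(scale=0.1, size=(5, 2)) for c in centers])
batch_b = np.vstack([c + rng.normal(scale=0.1, size=(5, 2)) for c in centers])
batch_b = batch_b + np.array([1.0, 0.0])
pairs = mutual_nearest_neighbors(batch_a, batch_b, k=3)

# Hand-checkable triplet: d_pos = 5, d_neg = 1, margin = 1.
toy_loss = triplet_loss(
    np.array([[0.0, 0.0]]), np.array([[3.0, 4.0]]), np.array([[0.0, 1.0]])
)
```

In this toy setting every MNN pair links cells of the same type across batches, which is what makes such pairs usable as anchor-positive examples. The loss is zero once each anchor is closer to its positive than to its negative by at least the margin.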

Troubleshooting Common scDML Issues

| Problem | Possible Cause | Solution |

|---|---|---|

| Rare cell types are lost | Initial clustering resolution was too low. | Increase the resolution parameter in the initial graph-based clustering step to generate more, smaller clusters [7]. |

| Poor clustering accuracy | The final cluster number may be misspecified. | Ensure the cut-off for the hierarchical merging of clusters is set appropriately. Using the known number of true cell types as a guide is recommended for evaluation [7]. |

| Incomplete batch mixing | Triplet loss may not be effectively aligning batches. | The method relies on MNNs and triplet selection; ensure the initial clustering and MNN detection are of high quality. Benchmarking has shown scDML generally outperforms other methods in mixing while preserving biology [7] [35]. |

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Tools for Advanced Batch Correction

| Tool / Resource | Function | Relevance to sysVI/scDML |

|---|---|---|

| scvi-tools [34] [36] | A Python package for deep generative modeling of single-cell data. | Provides the implementation of the sysVI model. Essential for the entire sysVI workflow. |

| Scanpy [7] | A scalable Python toolkit for single-cell gene expression data analysis. | Used for standard data preprocessing (normalization, HVG selection, PCA) before applying either sysVI or scDML. |

| scDML Python Package [7] | The official implementation of the scDML algorithm. | Required to run the scDML method. It is built on PyTorch and integrates with Scanpy for preprocessing. |

| Harmony [4] [33] | A fast and versatile integration method. | A popular alternative for comparison. Benchmarking studies can use it as a baseline to evaluate the performance gain from sysVI or scDML. |

| Seurat (in R) [33] [29] | A comprehensive R toolkit for single-cell genomics. | Its integration functions (e.g., CCA) are common benchmarks. Useful for comparative analysis and for users familiar with the R ecosystem. |

Workflow and Model Architecture Diagrams

sysVI Architecture and Workflow

scDML Architecture and Workflow

Performance Benchmarking and Quantitative Comparison

Table: Benchmarking Scores of sysVI, scDML, and Other Methods on Simulated Data

This table summarizes the performance of various integration methods on a simulated dataset with 4 cell types across 4 batches, as reported in benchmarking studies. The scores are normalized, with higher values indicating better performance.

| Method | Batch Correction (iLISI) | Bio Conservation (NMI) | Bio Conservation (ARI) | Composite Score | Key Strength |

|---|---|---|---|---|---|

| scDML [7] [35] | 0.78 | 1.00 | 1.00 | 0.92 | Superior cell type preservation & clustering |

| sysVI (VAMP+CYC) [12] | 0.85 | 0.96 | 0.95 | 0.89 | Strong integration & biological fidelity |

| Scanorama [7] [35] | 0.75 | 0.91 | 0.90 | 0.83 | Good all-round performance |

| scVI [7] [35] | 0.65 | 0.87 | 0.85 | 0.76 | Scalable baseline |

| Harmony [7] [4] | 0.80 | 0.82 | 0.80 | 0.79 | Fast batch mixing |

| LIGER [7] | 0.82 | 0.75 | 0.72 | 0.74 | Requires a reference dataset |

Key Takeaway: Both scDML and sysVI are top-tier methods, but they excel in slightly different areas. scDML achieves perfect clustering metrics (ARI/NMI=1.0) in the provided simulation, highlighting its strength in recovering true cell types. sysVI also demonstrates high biological preservation while achieving excellent batch mixing, making it a robust choice for complex integrations [7] [35] [12].

Frequently Asked Questions

Q1: I have a very large dataset (over 500,000 cells). Which methods are both effective and computationally efficient? For large datasets, computational runtime and memory usage are critical. Harmony is highly recommended as a first choice due to its significantly shorter runtime, which was a key finding in a major benchmark study [17]. Other scalable methods identified in benchmarks include LIGER and Scanorama [17] [7]. A newer method, scDML, also demonstrates scalability to large datasets with lower peak memory usage [7].

Q2: After integrating my data, my rare cell types have disappeared. What can I do? Most methods first remove batch effects and then cluster cells, which can lead to the loss of subtle biological signals, including rare cell types [7]. To address this, consider using scDML, a method specifically designed to preserve rare cell types by leveraging deep metric learning and initial high-resolution clustering to protect these populations during the integration process [7].

Q3: How can I objectively evaluate if my batch correction was successful? Successful batch correction should achieve two goals: good mixing of cells from different batches and preservation of distinct biological cell types. Do not rely on visual inspection alone, as it can be subjective [17]. Instead, use quantitative metrics. The table below summarizes key benchmarking metrics recommended for evaluating the performance of batch correction tools [17] [37].

| Metric Name | What It Measures | Interpretation |

|---|---|---|

| kBET (k-nearest neighbour batch-effect test) | Batch mixing on a local level by comparing local vs. global batch label distributions [17] [37]. | A low rejection rate indicates good local batch mixing. |

| LISI (Local Inverse Simpson's Index) | The effective number of batches in a cell's local neighbourhood [7] [37]. | A higher score indicates better batch mixing. |

| ASW (Average Silhouette Width) | How well cell type clusters are separated (ASWcelltype) or batches are mixed (ASWbatch) [17] [7]. | High ASWcelltype and low ASWbatch are desirable. |

| ARI (Adjusted Rand Index) | The similarity between the clustering results and the known cell type labels [17] [7]. | A higher score indicates better preservation of biological clusters. |
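Two of these metrics are simple enough to sketch directly. The code below is a minimal NumPy illustration under stated simplifications: the LISI variant uses uniform weights over a fixed number of nearest neighbours, whereas the published LISI uses perplexity-based weights, and the brute-force distance matrix is only suitable for small examples. Function names and toy data are hypothetical. For real analyses, an established implementation is preferable.

```python
from math import comb

import numpy as np

def simple_lisi(embedding, labels, k=15):
    """Inverse Simpson's index of labels among each cell's k nearest neighbours."""
    x = np.asarray(embedding, dtype=float)
    _, codes = np.unique(labels, return_inverse=True)
    d = np.linalg.norm(x[:, None, :] - x[None, :, :], axis=2)
    np.fill_diagonal(d, np.inf)  # exclude the cell itself
    nn = np.argsort(d, axis=1)[:, :k]
    scores = np.empty(len(x))
    for i, idx in enumerate(nn):
        p = np.bincount(codes[idx], minlength=codes.max() + 1) / k
        scores[i] = 1.0 / np.sum(p ** 2)
    return scores

def adjusted_rand_index(labels_true, labels_pred):
    """ARI computed from the contingency table of two labelings."""
    _, t = np.unique(labels_true, return_inverse=True)
    _, p = np.unique(labels_pred, return_inverse=True)
    table = np.zeros((t.max() + 1, p.max() + 1), dtype=int)
    np.add.at(table, (t, p), 1)
    sum_cells = sum(comb(int(n), 2) for n in table.ravel())
    sum_rows = sum(comb(int(n), 2) for n in table.sum(axis=1))
    sum_cols = sum(comb(int(n), 2) for n in table.sum(axis=0))
    expected = sum_rows * sum_cols / comb(len(t), 2)
    max_index = 0.5 * (sum_rows + sum_cols)
    if max_index == expected:
        return 1.0
    return (sum_cells - expected) / (max_index - expected)

# Toy 1-D embeddings with 40 cells from two batches.
batches_mixed = np.tile(["A", "B"], 20)       # alternating batches
mixed = simple_lisi(np.arange(40.0).reshape(-1, 1), batches_mixed, k=4)

batches_split = np.repeat(["A", "B"], 20)     # batches far apart
coords_split = np.concatenate([np.arange(20.0), 100.0 + np.arange(20.0)])
separated = simple_lisi(coords_split.reshape(-1, 1), batches_split, k=4)

ari_same = adjusted_rand_index([0, 0, 1, 1], [1, 1, 0, 0])
```

With two batches, scores near 2 indicate that both batches are about equally represented around each cell, while scores of 1 indicate neighbourhoods drawn from a single batch. The ARI is invariant to how clusters are named, so the relabeled example still counts as a perfect match.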

Q4: What is the most recommended method to try first on a new dataset? Based on a comprehensive benchmark of 14 methods, Harmony is recommended as the first method to try due to its fast runtime and strong performance across various scenarios [17]. Seurat 3 and LIGER are also listed as top-tier viable alternatives [17].

Troubleshooting Guides

Problem: Slow Runtime or Inability to Process Large Data

- Possible Cause: The batch correction method is not optimized for the scale of your data. Some algorithms are computationally demanding in terms of CPU time and memory [17].

- Solution:

  - Switch to a method known for its speed and scalability, such as Harmony or Scanorama [17].

  - Ensure you are following the method's recommended preprocessing steps, which often include dimensionality reduction (e.g., PCA) to improve speed [17].

  - Check if the method can operate in a lower-dimensional space, as this can significantly reduce computational demands [17].
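A back-of-the-envelope calculation shows why working in a reduced space helps. The sketch below estimates the memory of a dense float32 matrix before and after dimensionality reduction and includes a small exact PCA via SVD for illustration. The cell, gene, and component counts are assumed example values, and the function names are hypothetical. At the scale of hundreds of thousands of cells, a truncated or randomized PCA from an established toolkit would be used instead of a full SVD.

```python
import numpy as np

def dense_gb(n_cells, n_features, bytes_per_value=4):
    """Memory of a dense matrix in gigabytes (10^9 bytes); float32 by default."""
    return n_cells * n_features * bytes_per_value / 1e9

def pca_embed(x, n_components=50):
    """Exact PCA scores via SVD of the centered matrix (small data only)."""
    x = np.asarray(x, dtype=float)
    centered = x - x.mean(axis=0)
    u, s, _ = np.linalg.svd(centered, full_matrices=False)
    n = min(n_components, len(s))
    return u[:, :n] * s[:n]

# Assumed example sizes: 500,000 cells, 2,000 HVGs, 50 principal components.
full_gb = dense_gb(500_000, 2_000)    # expression matrix restricted to HVGs
reduced_gb = dense_gb(500_000, 50)    # PCA embedding

# Small demonstration that the embedding has one row per cell.
rng = np.random.default_rng(0)
toy = rng.normal(size=(200, 1000))
toy_embedding = pca_embed(toy, n_components=20)
```

Under these assumptions the dense HVG matrix takes about 4 GB while the 50-component embedding takes about 0.1 GB, a 40-fold reduction before any integration method is run.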

Problem: Poor Integration Results (Batch Effect Not Removed or Biological Signals Lost)

- Possible Cause 1: The method assumes all differences are technical and over-corrects, removing true biological variation [7].

- Solution 1: Use a method like LIGER, which is designed to distinguish technical variation from biological variation, or scDML, which aims to preserve cell type purity [17] [7].

- Possible Cause 2: The method is not suited for the specific complexity of your dataset (e.g., batches with non-identical cell types) [17].

- Solution 2: Consult the following flowchart to select a method based on your dataset's characteristics. A benchmark study found that performance can vary depending on the scenario, such as whether batches have identical or non-identical cell types [17].

Flowchart for selecting a batch correction method based on dataset characteristics.

Research Reagent Solutions

The following table details key computational tools and their functions in the analysis of single-cell RNA-sequencing data, particularly for batch correction.

| Tool / Resource | Function in Analysis |

|---|---|

| Seurat | A comprehensive R toolkit for single-cell genomics, widely used for normalization, scaling, highly variable gene (HVG) selection, and its own CCA-based integration method [17]. |

| Scanpy | A popular Python-based framework for analyzing single-cell gene expression data, used for preprocessing (normalization, PCA) and providing an ecosystem for various integration methods [7]. |

| Harmony | An algorithm that iteratively clusters cells and corrects batch effects in a reduced PCA space, known for its short runtime [17]. |

| scDML | A deep metric learning model that uses triplet loss to remove batch effects while preserving the clustering structure and rare cell types [7]. |

| kBET/LISI Metrics | Quantitative metrics used to objectively evaluate the success of batch correction by measuring the mixing of batches and preservation of cell types [17] [37]. |

Beyond Basic Correction: Troubleshooting Overcorrection and Optimizing for Biological Fidelity