Navigating the Toolbox: A Comprehensive Guide to Differential Expression Analysis for Stem Cell Research

Abstract

Differential expression (DE) analysis is a cornerstone of stem cell research, enabling the identification of key genes driving development, reprogramming, and disease modeling. This article provides a comprehensive guide for researchers and drug development professionals, synthesizing current evidence to navigate the complex landscape of DE tools. We cover foundational concepts of bulk and single-cell RNA-seq, methodological guidance for applying top-performing tools like DESeq2, edgeR, and pseudobulk methods, and critical troubleshooting strategies to combat false discoveries. By comparing tool performance based on benchmark studies and validating findings through functional enrichment, we offer an actionable framework for robust DE analysis that yields biologically accurate insights into stem cell mechanisms.

Laying the Groundwork: From Bulk RNA-seq to Single-Cell Transcriptomics in Stem Cell Systems

In stem cell biology, cellular heterogeneity is a fundamental characteristic, whether in a population of pluripotent stem cells capable of forming all three germ layers or tissue-specific stem cells found in adult tissues [1]. Traditional bulk RNA sequencing masks these critical cell-to-cell differences by measuring average gene expression across thousands of cells, potentially obscuring rare stem cell populations and dynamic transition states [1] [2]. Single-cell RNA sequencing (scRNA-seq) technologies overcome this limitation by quantifying transcriptomes in individual cells, revealing the intricate diversity within stem cell populations and providing unprecedented insights into developmental processes, lineage commitment, and stem cell fate decisions [3] [1]. This capability is particularly valuable for identifying novel stem cell markers, understanding regulatory networks, and tracing differentiation trajectories [3] [1].

Core Technological Principles of RNA-seq in Stem Cell Analysis

From Bulk to Single-Cell Resolution

The fundamental principle underlying RNA-seq quantification is the conversion of RNA molecules into a cDNA library followed by high-throughput sequencing. In bulk RNA-seq, this process is applied to the entire population of cells, yielding averaged expression values that represent the population but conceal cellular heterogeneity [1]. In contrast, scRNA-seq employs sophisticated barcoding strategies to tag individual cells and their transcripts before pooling for sequencing, enabling computational deconvolution of the data back to single-cell resolution [3] [4].

Key technological innovations have been crucial for adapting RNA-seq to stem cell research. Unique Molecular Identifiers (UMIs) are random nucleotide sequences incorporated during reverse transcription that tag individual mRNA molecules, allowing bioinformatic correction for amplification bias and enabling precise digital counting of transcripts [3] [2]. Cell barcodes are sequences unique to each cell that permit millions of sequencing reads to be assigned to their cell of origin [3] [4]. These technologies work in concert to generate accurate gene expression profiles for each individual cell within a heterogeneous stem cell population.
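
The digital counting that UMIs and cell barcodes make possible can be illustrated with a minimal Python sketch. It assumes reads have already been aligned and reduced to (cell barcode, UMI, gene) tuples; the function name `count_umis` and the toy barcodes are hypothetical, and the sketch does not merge UMIs that differ only by sequencing errors, which dedicated tools handle.

```python
from collections import defaultdict

def count_umis(reads):
    """Collapse reads into per-cell, per-gene molecule counts.

    `reads` is an iterable of (cell_barcode, umi, gene) tuples, one per
    aligned read. Reads sharing all three fields are treated as PCR
    duplicates of a single mRNA molecule and counted once.
    """
    seen = defaultdict(set)  # (cell, gene) -> set of distinct UMIs
    for cell_barcode, umi, gene in reads:
        seen[(cell_barcode, gene)].add(umi)
    counts = defaultdict(dict)
    for (cell_barcode, gene), umis in seen.items():
        counts[cell_barcode][gene] = len(umis)
    return dict(counts)

# Toy input: barcodes, UMIs, and read tuples are made up for illustration.
reads = [
    ("AAAC", "GGTA", "POU5F1"),
    ("AAAC", "GGTA", "POU5F1"),  # PCR duplicate of the read above
    ("AAAC", "CTTG", "POU5F1"),
    ("AAAC", "GGTA", "SOX2"),
    ("TTTG", "ACCA", "SOX2"),
]
umi_counts = count_umis(reads)
```

Three reads map to POU5F1 in cell `AAAC`, but only two distinct UMIs are present, so the gene is counted as two molecules rather than three.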

Platform-Specific Methodologies for Stem Cell Applications

Table 1: Comparison of scRNA-seq Platform Characteristics Relevant to Stem Cell Research

| Platform/Method | Cell Separation Principle | Cell Capture Efficiency | Transcript Capture Efficiency | Key Applications in Stem Cell Research |
|---|---|---|---|---|
| Fluidigm C1 | Size-specific microfluidic chambers | ~1,000 cells per run | ~6,606 genes/cell (percentage not specified) | Staining and imaging prior to sequencing; requires known cell size [3] |
| DropSeq | Droplet-based microfluidics | ~5% of cells per run (approx. 7,000 cells) | ~10.7% of cell's transcripts | Cost-effective studies of heterogeneous populations [3] |
| 10X Genomics Chromium | Droplet-based microfluidics | ~65% of cells per run (approx. 1,000 cells) | ~14% of cell's transcripts | High-efficiency capture of rare stem cell populations [3] |
| SCI-Seq | Combinatorial indexing of methanol-fixed cells | 5%-10% of cells | ~10%-15% of cell's transcripts | Massive-scale experiments (up to 500,000 cells) [3] |
| Smart-seq2 | Micromanipulation or FACS | Lower throughput, full-length transcripts | High sensitivity for full-length coverage | Alternative splicing analysis, allele-specific expression [2] |

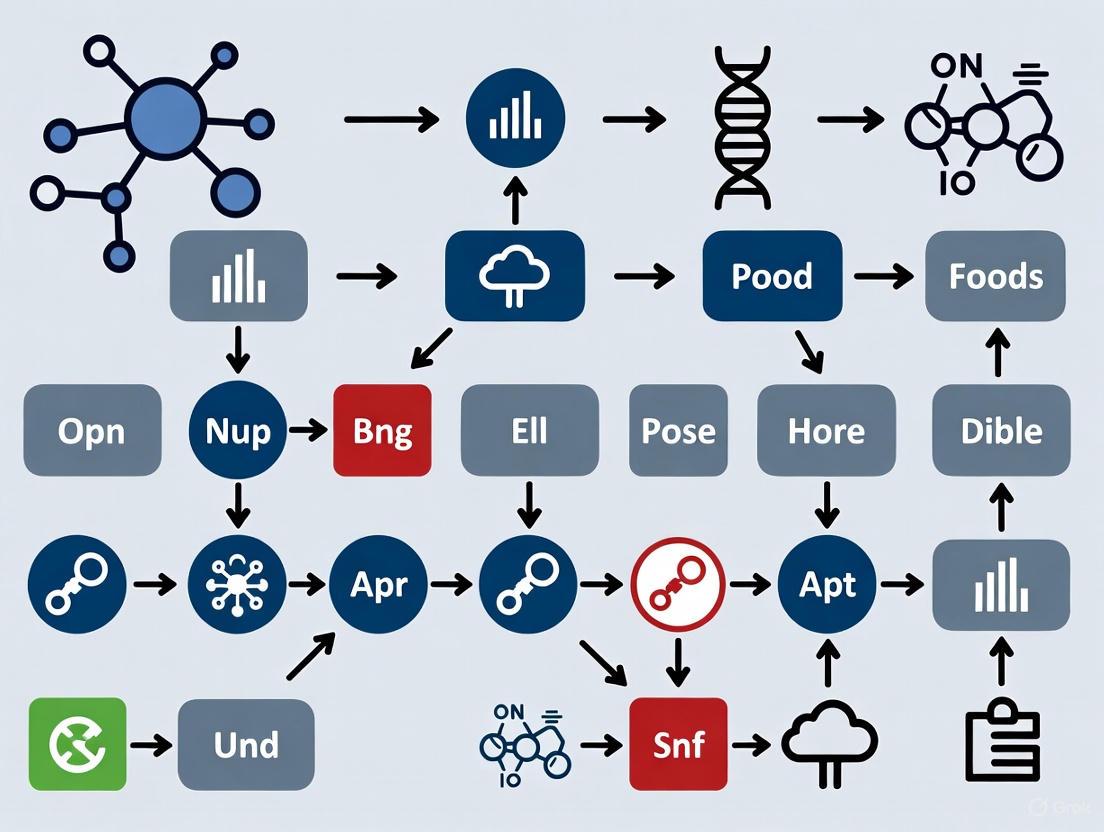

Figure 1: scRNA-seq Workflow from Cell Isolation to Data Analysis

Critical Experimental Considerations for Stem Cell RNA-seq

Sample Preparation and Quality Control

The initial steps of sample preparation are particularly critical for stem cell research. Creating high-quality single-cell suspensions while preserving cell viability and RNA integrity is essential [4]. For embryonic and tissue-specific stem cells, this often requires optimized dissociation protocols that minimize cellular stress and preserve transcriptional states [4]. Stem cells are particularly sensitive to handling, making gentle dissociation and rapid processing crucial for obtaining biologically relevant data.

Quality control metrics must be tailored to stem cell populations. Key parameters include:

- Transcripts per cell: Cells with very low counts may be dead or damaged, while abnormally high counts may indicate doublets (multiple cells captured together) [3].

- Mitochondrial gene percentage: Elevated levels often indicate stressed or dying cells, a critical consideration for sensitive stem cell populations [3].

- Housekeeping gene expression: Confirms general RNA quality and capture efficiency [3].

For stem cell applications, these thresholds must be established carefully. As noted in the literature, "If all cells with a transcript count higher than 2 SDs from the mean are removed from the analysis, it could lead to the elimination of all cancer cells, mistaking them for doublets because of their high transcriptional activity" [3]. Similarly, highly transcriptionally active stem cells might be mistakenly excluded with inappropriate thresholds.
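
A minimal Python sketch of the per-cell QC metrics listed above follows. It assumes each cell is represented as a gene-to-count mapping and that mitochondrial genes carry the human `MT-` symbol prefix; the function names and all counts are hypothetical. Thresholds are deliberately left to the caller, in line with the caution above about fixed cutoffs.

```python
def qc_metrics(cell_counts, mito_prefix="MT-"):
    """Return (total_counts, genes_detected, pct_mito) for one cell.

    `cell_counts` maps gene symbol -> UMI count. The "MT-" prefix matches
    human mitochondrial gene symbols; other species need another prefix.
    """
    total = sum(cell_counts.values())
    detected = sum(1 for c in cell_counts.values() if c > 0)
    mito = sum(c for g, c in cell_counts.items() if g.startswith(mito_prefix))
    pct_mito = 100.0 * mito / total if total else 0.0
    return total, detected, pct_mito

def passes_qc(cell_counts, min_counts, max_counts, max_pct_mito):
    """Apply caller-supplied thresholds; no defaults are assumed on purpose."""
    total, _, pct_mito = qc_metrics(cell_counts)
    return min_counts <= total <= max_counts and pct_mito <= max_pct_mito

# Toy cells with invented counts.
healthy = {"POU5F1": 40, "SOX2": 30, "NANOG": 25, "MT-CO1": 5}
stressed = {"POU5F1": 10, "SOX2": 0, "MT-CO1": 30, "MT-ND1": 10}
```

Calling `passes_qc(healthy, 50, 1000, 20)` keeps the first cell, while the second is rejected by the same thresholds because 80% of its counts are mitochondrial. The numeric cutoffs in that call are placeholders, not recommendations.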

Unique Challenges in Stem Cell Transcriptomics

Stem cell populations present specific challenges for RNA-seq quantification. The low RNA content in some quiescent stem cell populations combined with the stochastic nature of gene expression in individual cells leads to technical artifacts like "drop-out" events, where transcripts are detected in some cells but not others despite being expressed [5]. This zero-inflation problem is particularly relevant when studying rare transcriptional events in stem cell populations. Additionally, the dynamic nature of stem cell differentiation requires methods that can reconstruct continuous processes from snapshots of static data [1].

Bioinformatics Pipelines for Stem Cell Data Analysis

Essential Computational Steps

The computational analysis of scRNA-seq data involves multiple steps to transform raw sequencing data into biologically meaningful information. The standard pipeline includes:

- Quality Control and Filtering: Removal of low-quality cells and artifacts [3]

- Normalization: Accounting for technical variability between cells [3]

- Feature Selection: Identifying highly variable genes most relevant to biological variation [3]

- Dimensionality Reduction: Using methods like PCA and t-SNE/UMAP to visualize high-dimensional data [3]

- Clustering: Identifying distinct cell populations and states [3]

- Differential Expression: Identifying genes that define clusters or vary between conditions [5]
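
The normalization step in the list above can be sketched as library-size scaling followed by a log transform. This is only one of several normalization strategies; the function name `normalize_log1p` and the scale factor of 10,000 are assumptions chosen for illustration.

```python
import math

def normalize_log1p(counts, scale=10_000):
    """Library-size normalize one cell and apply log(1 + x).

    `counts` is a list of raw UMI counts (one per gene). Each count is
    divided by the cell's total, multiplied by `scale`, then log-transformed.
    """
    total = sum(counts)
    if total == 0:
        return [0.0 for _ in counts]
    return [math.log1p(c / total * scale) for c in counts]

# Two toy cells with the same composition but a 10-fold depth difference.
shallow = [1, 3, 0, 6]
deep = [10, 30, 0, 60]
norm_shallow = normalize_log1p(shallow)
norm_deep = normalize_log1p(deep)
```

After normalization the two cells have matching profiles, which is the intended effect: the 10-fold difference in sequencing depth no longer looks like a difference in expression.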

For stem cell research, specialized algorithms have been developed to address specific biological questions. Pseudotime analysis tools (e.g., Monocle) order cells along differentiation trajectories, reconstructing dynamic processes from static snapshots [1]. Gene-gene co-expression network analysis can reveal regulatory relationships critical for stem cell identity and fate decisions [6].

Differential Expression Tools for Stem Cell Research

Table 2: Comparison of Differential Expression Analysis Methods for Stem Cell Data

| Method | Underlying Model | Key Features | Performance with Stem Cell Data |
|---|---|---|---|
| DESeq2 | Negative binomial model with shrinkage estimation | Designed for bulk RNA-seq but applicable to scRNA-seq | High precision but lower true positive rates; suitable for well-defined populations [5] |
| edgeR | Negative binomial models with empirical Bayes estimation | Robust for bulk and single-cell data | Similar performance to DESeq2; effective for identifying markers [5] |
| MAST | Two-part hierarchical model | Specifically addresses dropout events in scRNA-seq | Improved performance for heterogeneous stem cell populations with abundant zeros [5] |
| SCDE | Mixture probabilistic model | Combines Poisson (dropouts) and negative binomial (amplified genes) | Effective for capturing bimodality in partially differentiated populations [5] |
| Monocle2 | Census count normalization with negative binomial | Designed for trajectory and time-series analysis | Particularly valuable for reconstructing stem cell differentiation paths [5] |
| scDD | Bayesian framework | Detects differential distribution (mean and modality) | Identifies heterogeneous responses in stem cell populations [5] |

A comprehensive benchmarking study evaluating eleven differential expression tools revealed important trade-offs for stem cell researchers. "In general, agreement among the tools in calling DE genes is not high. There is a trade-off between true-positive rates and the precision of calling DE genes. Methods with higher true positive rates tend to show low precision due to their introducing false positives, whereas methods with high precision show low true positive rates due to identifying few DE genes" [5]. This underscores the importance of selecting analytical methods based on specific research questions and experimental designs.
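
The trade-off described in this quotation can be made concrete with a small Python sketch that scores two hypothetical call sets against a known truth set. The gene labels, the call sets, and the function name `precision_and_tpr` are invented for illustration and do not come from the benchmark in [5].

```python
def precision_and_tpr(called, truth):
    """Compare a set of called DE genes with a set of true DE genes."""
    called, truth = set(called), set(truth)
    tp = len(called & truth)
    precision = tp / len(called) if called else 0.0
    tpr = tp / len(truth) if truth else 0.0
    return precision, tpr

# Invented gene labels g1..g9; g1..g5 are "truly" DE in this toy example.
truth = {"g1", "g2", "g3", "g4", "g5"}
liberal_calls = {"g1", "g2", "g3", "g4", "g6", "g7", "g8", "g9"}
conservative_calls = {"g1", "g2"}

liberal = precision_and_tpr(liberal_calls, truth)
conservative = precision_and_tpr(conservative_calls, truth)
```

Here the liberal call set recovers four of the five true genes but half of its calls are false positives, while the conservative set makes no false calls and misses three true genes.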

Applications in Stem Cell Biology

Delineating Developmental Trajectories

scRNA-seq has proven particularly powerful for reconstructing developmental processes. By profiling individual cells across different timepoints during differentiation, researchers can infer "pseudotime" trajectories that reveal the sequence of transcriptional changes as stem cells mature into specialized cell types [1]. For example, in a comprehensive human embryo reference dataset integrating six published studies, "Slingshot trajectory inference based on the 2D UMAP embeddings revealed three main trajectories related to the epiblast, hypoblast and TE lineage development starting from the zygote" [7]. This approach identified 367, 326, and 254 transcription factor genes showing modulated expression along the epiblast, hypoblast, and TE trajectories, respectively [7].

Identifying Novel Stem Cell Populations and States

The unbiased nature of scRNA-seq enables discovery of previously unrecognized cell types and states within supposedly homogeneous stem cell populations. This capability has been instrumental in identifying rare stem cell subtypes, transitional states during differentiation, and context-dependent functional states [1] [2]. In one study, "single-cell RNA-seq can identify numerous sub-populations of cells that would be missed if bulk RNA-seq were performed instead" [1]. These findings have reshaped our understanding of stem cell heterogeneity and its functional implications.

Research Reagent Solutions for Stem Cell RNA-seq

Table 3: Essential Research Reagents and Platforms for Stem Cell RNA-seq

| Reagent/Platform | Function | Application Notes for Stem Cell Research |
|---|---|---|
| Chromium X Series (10X Genomics) | Microfluidic partitioning system | Enables high-throughput profiling (80K-960K cells per kit); ideal for heterogeneous stem cell populations [4] |
| Fluidigm C1 | Automated microfluidic cell capture | Allows staining and imaging prior to sequencing; suitable for smaller-scale studies of defined populations [3] |
| Gel Beads with Barcoded Oligonucleotides | Cellular barcoding and mRNA capture | Each bead contains cell barcode and unique molecular identifiers (UMIs) for digital counting [3] [4] |
| Smart-seq2 Reagents | Full-length cDNA preparation | Provides full transcript coverage; optimal for alternative splicing analysis in stem cells [2] |
| Cell Ranger Pipeline | Data processing and alignment | Transforms barcoded sequencing data into expression matrices; compatible with various sequencing platforms [4] |
| SingleCellExperiment Class | Data structure for R/Bioconductor | Standardized container for scRNA-seq data; enables interoperability between analysis packages [8] |

Future Perspectives and Methodological Advancements

The field of scRNA-seq continues to evolve rapidly, with over 1,000 analysis tools now available [8]. Recent trends show a shift in focus from ordering cells on continuous trajectories to integrating multiple samples and leveraging reference datasets [8]. Emerging computational methods specifically address stem cell research needs, including tools for identifying rare subpopulations, reconstructing complex differentiation pathways, and integrating multi-omics data from the same cells.

The development of benchmarking frameworks using synthetic spike-in controls and in silico mixtures provides robust evaluation of analytical performance [9], helping stem cell researchers select optimal methods for their specific applications. As these technologies become more accessible and analytical methods more sophisticated, RNA-seq will continue to deepen our understanding of stem cell biology and accelerate translational applications in regenerative medicine.

Figure 2: Bioinformatics Analysis Pipeline for Stem Cell RNA-seq Data

For stem cell researchers, unlocking the secrets of cellular identity, differentiation, and function hinges on accurately measuring gene expression. The choice of sequencing technology—bulk RNA sequencing or single-cell RNA sequencing—fundamentally shapes the questions you can answer. Bulk RNA-seq provides a population-wide average, while single-cell RNA-seq reveals the intricate tapestry of individual cellular transcriptomes. This guide provides an objective comparison of these technologies, focusing on their performance in differential expression analysis for stem cell research, to help you select the optimal tool for your specific scientific inquiry.

Technology at a Glance: Core Principles and Workflows

Understanding the fundamental differences in how bulk and single-cell RNA sequencing data are generated is crucial for selecting the appropriate method.

Bulk RNA Sequencing: The Population Average

Bulk RNA sequencing analyzes the collective RNA from a population of thousands to millions of cells. The biological sample is digested to extract total RNA, which is then converted into cDNA and prepared into a sequencing library. The resulting data represents a composite gene expression profile, providing an average expression level for each gene across all cells in the sample [10] [11]. This approach is analogous to hearing the roar of a crowd without distinguishing individual voices.

Single-Cell RNA Sequencing: The Individual Voice

Single-cell RNA sequencing measures the whole transcriptome of individual cells. A critical first step is the generation of a viable single-cell suspension. Cells are then individually partitioned, often using microfluidics as in the 10x Genomics Chromium system, where each cell is enclosed in a droplet with a unique barcode. This barcode tags every mRNA transcript from a single cell, allowing bioinformaticians to trace its origin after sequencing. This process captures the heterogeneity present within a cell population [10] [12] [13].

The following diagram illustrates the fundamental workflow differences between these two approaches.

Head-to-Head Comparison: Key Technical Differences

The table below summarizes the critical distinctions between bulk and single-cell RNA sequencing, which directly influence their suitability for various research scenarios in stem cell biology.

Table 1: Key Characteristics of Bulk vs. Single-Cell RNA Sequencing

| Feature | Bulk RNA Sequencing | Single-Cell RNA Sequencing |
|---|---|---|
| Resolution | Population average [10] [11] | Individual cell level [10] [11] |
| Cell Heterogeneity Detection | Limited; masks differences [11] | High; reveals distinct subpopulations and rare cells [10] [11] |
| Rare Cell Type Detection | Not possible; signal diluted [11] | Possible; can identify very rare cell types [11] |
| Typical Cost per Sample | Lower (~1/10th of scRNA-seq) [11] | Higher [11] |
| Data Complexity | Lower; simpler analysis [11] | Higher; requires specialized computational tools [11] |
| Gene Detection Sensitivity | Higher per sample; more genes detected [11] | Lower per cell; fewer genes detected per cell due to sparsity [11] |
| Primary Challenge | Cannot resolve cellular heterogeneity [10] [11] | Data sparsity, technical noise, and complex data analysis [5] [11] |

Differential Expression Analysis: Performance and Protocols

Differential expression (DE) analysis identifies genes whose expression levels differ between conditions by more than would be expected from chance. The nature of the data from bulk and single-cell technologies demands different analytical strategies and tools.
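
Because a DE analysis tests thousands of genes at once, per-gene p-values are usually adjusted for multiple testing before significance is called. The sketch below implements the Benjamini-Hochberg procedure, a widely used false discovery rate correction, in plain Python. The p-values are invented, and the choice of this particular procedure is an assumption made for illustration rather than something the benchmarks cited here prescribe.

```python
def benjamini_hochberg(pvalues):
    """Return BH-adjusted p-values in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted

# Invented p-values for four genes.
pvals = [0.01, 0.04, 0.03, 0.20]
padj = benjamini_hochberg(pvals)
```

With these inputs only the first gene stays below an adjusted threshold of 0.05, even though three raw p-values were below 0.05.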

Analytical Challenges in Single-Cell Data

Single-cell RNA-seq data is characterized by its high sparsity, meaning a large proportion of data points are zero counts, stemming from both biological and technical factors [5]. Furthermore, the data exhibits multimodality—the expression of a gene may follow multiple distinct distributions across different cell subpopulations [5]. These characteristics violate the assumptions of many traditional DE tools designed for bulk data, necessitating the development of specialized methods.

Benchmarking Differential Expression Tools

A comprehensive benchmark of 46 DE workflows for single-cell data with multiple batches evaluated methods based on their F-score and Area Under the Precision-Recall Curve (AUPR) [14]. The performance of different methods is highly dependent on data characteristics like sequencing depth and the strength of batch effects.

Table 2: High-Performing Differential Expression Methods Under Different Conditions

| Experimental Condition | Recommended Methods | Key Findings |
|---|---|---|
| Moderate Sequencing Depth & Large Batch Effects | MAST with batch covariate (MASTCov), ZINB-WaVE weights with edgeR (ZWedgeR_Cov), limmatrend [14] | Covariate modeling that includes batch as a factor improves performance substantially. Using pre-corrected (batch-effect-corrected) data rarely helps [14]. |
| Low Sequencing Depth | limmatrend, DESeq2, Fixed Effects Model on log-normalized data (LogN_FEM), Wilcoxon test [14] | Methods based on zero-inflated models (e.g., ZINB-WaVE) deteriorate in performance. The relative performance of non-parametric methods like the Wilcoxon test improves [14]. |
| General Recommendation | limmatrend, MAST, DESeq2, and their covariate models [14] | These methods consistently show good performance across a range of depths. Covariate modeling is beneficial when batch effects are substantial [14]. |
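
To show what covariate modeling with batch as a factor does, the following Python sketch estimates a condition effect on log-normalized expression while adjusting for batch. It is a simplified stand-in for the fixed-effects idea, not the implementation of any workflow benchmarked in [14]; the function name and all values are hypothetical.

```python
from collections import defaultdict

def condition_effect_with_batch(y, condition, batch):
    """Estimate the condition coefficient in y ~ condition + batch.

    Uses within-batch demeaning (Frisch-Waugh-Lovell): centering y and the
    0/1 condition indicator inside each batch and regressing one on the
    other gives the same slope as ordinary least squares with batch
    indicator covariates.
    """
    groups = defaultdict(list)
    for i, b in enumerate(batch):
        groups[b].append(i)
    y_c = [0.0] * len(y)
    x_c = [0.0] * len(y)
    for idx in groups.values():
        y_mean = sum(y[i] for i in idx) / len(idx)
        x_mean = sum(condition[i] for i in idx) / len(idx)
        for i in idx:
            y_c[i] = y[i] - y_mean
            x_c[i] = condition[i] - x_mean
    sxx = sum(x * x for x in x_c)
    if sxx == 0:
        raise ValueError("condition does not vary within any batch")
    return sum(x * v for x, v in zip(x_c, y_c)) / sxx

# Toy log-normalized expression: true condition effect 1.0, batch B shifted by +5.
y = [1.0, 1.0, 2.0, 6.0, 7.0, 7.0]
condition = [0, 0, 1, 0, 1, 1]
batch = ["A", "A", "A", "B", "B", "B"]
adjusted = condition_effect_with_batch(y, condition, batch)
# Naive estimate that ignores batch: difference of overall group means.
naive = (sum(v for v, c in zip(y, condition) if c) / 3
         - sum(v for v, c in zip(y, condition) if not c) / 3)
```

In this toy design the treated cells are over-represented in the shifted batch, so the naive difference of means is inflated to about 2.67, whereas the batch-adjusted estimate recovers the effect of 1.0.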

For bulk RNA-seq data, established tools like DESeq2 and edgeR remain the gold standards [5]. They model count data using negative binomial distributions and are highly robust for analyzing population-level expression differences.
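
The negative binomial model is commonly parameterized so that a gene's variance is `mu + alpha * mu**2`, where `mu` is the mean count and `alpha` is the dispersion. The Python sketch below estimates `alpha` for one gene by the method of moments. This is a deliberately crude illustration: DESeq2 and edgeR estimate dispersion with shrinkage and empirical Bayes approaches, which are not reproduced here, and the function name and counts are invented.

```python
from statistics import mean, variance

def moment_dispersion(counts):
    """Method-of-moments dispersion under Var = mu + alpha * mu**2.

    Returns 0.0 when the sample variance does not exceed the mean
    (no overdispersion relative to Poisson).
    """
    mu = mean(counts)
    if mu == 0:
        return 0.0
    s2 = variance(counts)  # unbiased sample variance
    return max(0.0, (s2 - mu) / mu**2)

# Invented replicate counts for one gene.
overdispersed = [5, 20, 35, 60]
poisson_like = [9, 10, 10, 11]
```

The first gene has a variance far above its mean and yields a dispersion of roughly 0.58, while the second varies less than a Poisson count would and is assigned zero dispersion.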

Experimental Protocol for a Single-Cell DE Study

A typical workflow for a differential expression study in stem cell biology using scRNA-seq involves the following steps:

- Single-Cell Isolation and Library Preparation: Generate a high-quality single-cell suspension from your stem cell population or tissue. Partition cells using a platform like 10x Genomics Chromium to barcode transcripts. Prepare sequencing libraries following the manufacturer's protocol [12] [13].

- Sequencing and Data Generation: Sequence the libraries on an Illumina sequencer to an appropriate depth (e.g., 50,000 reads per cell). Convert BCL files to FASTQ and then to a count matrix using pipelines like Cell Ranger [12].

- Quality Control and Preprocessing: Filter the data to remove low-quality cells, doublets, and empty droplets. Remove genes that are sparsely expressed. Normalize the data to account for differences in sequencing depth between cells [12].

- Cell Type Identification and Clustering: Perform dimensionality reduction (PCA, UMAP) and cluster cells. Use known marker genes to annotate cell types, such as distinguishing pluripotent stem cells from differentiating progeny [12] [15].

- Differential Expression Analysis: Isolate the cell population of interest across conditions (e.g., treated vs. control pluripotent stem cells). Use a high-performing method identified in Table 2, such as MAST with appropriate batch covariates, to identify statistically significant DE genes [14].

- Validation: Confirm key findings using an orthogonal method, such as fluorescence in situ hybridization (FISH) or qPCR [12].
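
When the comparison spans several biological samples, one option for the differential expression step is the pseudobulk approach: raw counts are summed across the cells of each sample within a cell type, and the resulting sample-level profiles are analyzed with a bulk tool such as DESeq2 or edgeR. The aggregation can be sketched in Python as below; the function name, sample identifiers, cell-type labels, and counts are all hypothetical.

```python
from collections import defaultdict

def pseudobulk(cells):
    """Sum per-cell counts into one profile per (sample, cell type).

    `cells` is an iterable of (sample_id, cell_type, counts) where `counts`
    maps gene -> raw count. Raw counts are summed, not normalized values.
    """
    profiles = defaultdict(lambda: defaultdict(int))
    for sample_id, cell_type, counts in cells:
        for gene, count in counts.items():
            profiles[(sample_id, cell_type)][gene] += count
    return {key: dict(genes) for key, genes in profiles.items()}

# Invented sample IDs, cell-type labels, and counts.
cells = [
    ("ctrl_1", "PSC", {"NANOG": 3, "SOX2": 1}),
    ("ctrl_1", "PSC", {"NANOG": 2}),
    ("ctrl_1", "progenitor", {"SOX2": 4}),
    ("treated_1", "PSC", {"NANOG": 1, "SOX2": 5}),
]
bulk = pseudobulk(cells)
```

Aggregating this way makes the biological sample, rather than the individual cell, the unit of replication in the downstream test.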

Application in Stem Cell Research: Use Cases and Data

The choice between bulk and single-cell sequencing is dictated by the biological question. The table below outlines classic scenarios in stem cell research where each technology excels.

Table 3: Matching Technology to Research Goals in Stem Cell Biology

| Research Goal | Recommended Technology | Exemplary Application |
|---|---|---|
| Identifying Rare Stem Cell Subpopulations | Single-Cell RNA-seq | Identification of a rare cluster of mouse embryonic stem cells highly expressing Zscan4, a population with greater differentiation potential [11]. |
| Dissecting Lineage Differentiation Trajectories | Single-Cell RNA-seq | Reconstruction of developmental hierarchies during stem cell differentiation, revealing branching points and transient cell states [10] [16]. |
| Benchmarking In-Vitro Cell Differentiation | Single-Cell RNA-seq | Projecting in-vitro-derived stem cell populations onto integrated atlases of primary cells (e.g., using Stemformatics) to assess transcriptional similarity and maturity [15]. |
| Transcriptional Profiling of Homogeneous Populations | Bulk RNA-seq | Measuring the average gene expression response of a homogeneous cultured stem cell line to a specific growth factor or small molecule. |
| Biomarker Discovery from Bulk Tissue | Bulk RNA-seq | Identifying a prognostic gene expression signature from bulk tumor samples, which may be dominated by a specific cell population [13]. |
| Large-Scale Cohort Studies | Bulk RNA-seq | Profiling hundreds of samples from biobanks or clinical trials in a cost-effective manner to discover associations with clinical outcomes [10]. |

Success in stem cell transcriptomics relies on a suite of experimental and bioinformatic tools.

Table 4: Essential Research Reagent Solutions and Resources

| Item | Function / Application | Example / Note |
|---|---|---|
| Chromium X Series Instrument | High-throughput single cell partitioning instrument for barcoding cells. | 10x Genomics platform [13]. |
| GEM-X Flex / Universal Assays | Single cell RNA-seq reagent kits for library preparation on partitioned cells. | 10x Genomics assay kits [10]. |
| Stemformatics.org | Data portal for finding, viewing, and benchmarking stem cell transcriptional profiles against curated public data. | Integrated atlases for pluripotent and myeloid cells [15]. |
| Cell Ranger | Software pipeline for demultiplexing, barcode processing, and counting from 10x Genomics single cell data. | Standard analysis suite [13]. |
| MAST (Model-based Analysis of Single-Cell Transcriptomics) | R package for differential expression analysis of scRNA-seq data using a hierarchical generalized linear model. | Recommended for scRNA-seq DE analysis, handles dropouts [14] [5] [17]. |
| DESeq2 / edgeR | R/Bioconductor packages for differential expression analysis of bulk RNA-seq count data. | Gold-standard for bulk DE analysis [14] [5]. |
| FastQC | Quality control tool for high-throughput sequence data. | Checks raw sequencing data quality pre-alignment [12]. |
| UMI-tools | Software for handling Unique Molecular Identifiers in scRNA-seq data to correct for PCR amplification bias. | Critical for accurate transcript quantification [12]. |

Decision Framework and Future Outlook

Choosing the right technology requires a strategic balance between your research question, budget, and technical expertise. The following decision diagram provides a logical pathway for selecting the most appropriate sequencing method.

Future trends point towards multi-omics approaches that combine scRNA-seq with other modalities like ATAC-seq (for chromatin accessibility) to provide a more comprehensive view of cellular state. Furthermore, spatial transcriptomics is emerging as a powerful technology that overlays gene expression data onto tissue morphology, directly addressing the loss of spatial context in standard scRNA-seq [12] [13]. As costs continue to decrease and methods for integrating bulk and single-cell data mature, researchers will be increasingly empowered to design studies that leverage the strengths of both resolutions.

Single-cell RNA sequencing (scRNA-seq) has revolutionized stem cell research by enabling the dissection of cellular heterogeneity and the identification of rare cell populations, which are fundamental to understanding differentiation, reprogramming, and disease mechanisms. However, the analysis of scRNA-seq data presents unique computational challenges that distinguish it from bulk RNA-seq approaches. Three primary characteristics define these challenges: dropouts, where a gene is observed at a moderate expression level in one cell but is not detected in another cell of the same type; cellular heterogeneity, reflecting the diverse transcriptional states within a population; and data multimodality, where gene expression values follow complex, multiple distributions across cells [18] [5]. These factors collectively contribute to the high-dimensionality and sparsity of scRNA-seq data, posing significant hurdles for accurate differential expression (DE) analysis. For stem cell researchers aiming to identify key transcriptional drivers of cell fate decisions, choosing appropriate computational tools is paramount. This guide provides an objective comparison of DE analysis methods, evaluating their performance in addressing these inherent data challenges to inform robust biological discovery.

Decoding the Data: Fundamental scRNA-seq Challenges

The Dropout Phenomenon: Technical Noise versus Biological Signal

Dropout events refer to the phenomenon where a gene is highly expressed in one cell but undetected in another similar cell, primarily caused by the low starting quantities of mRNA in individual cells and inefficiencies in cDNA library preparation [18] [5]. In a typical scRNA-seq dataset, over 97% of the count matrix can be zeros [18], creating a zero-inflated data structure that complicates analysis. While traditionally viewed as a problem requiring imputation or correction, recent approaches have demonstrated that dropout patterns themselves carry biological information. Genes functioning in the same pathway often exhibit similar dropout patterns across cell types, providing an alternative signal for cell population identification [18]. This paradigm shift enables methods like co-occurrence clustering, which binarizes expression data and identifies cell types based on shared patterns of gene detection rather than quantitative expression levels alone [18].
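
The binarization idea can be illustrated with a short Python sketch that converts counts to detection calls, measures sparsity, and compares the detection patterns of gene pairs. It is not the co-occurrence clustering algorithm of [18]; the Jaccard similarity, the function names, and the toy matrix are assumptions chosen to show why shared detection patterns carry signal.

```python
def binarize(matrix):
    """Turn a genes x cells count matrix into 0/1 detection calls."""
    return [[1 if c > 0 else 0 for c in row] for row in matrix]

def zero_fraction(matrix):
    """Fraction of entries in the matrix that are exactly zero."""
    n = sum(len(row) for row in matrix)
    zeros = sum(1 for row in matrix for c in row if c == 0)
    return zeros / n if n else 0.0

def jaccard(a, b):
    """Jaccard similarity of two binary detection vectors."""
    both = sum(1 for x, y in zip(a, b) if x and y)
    either = sum(1 for x, y in zip(a, b) if x or y)
    return both / either if either else 0.0

# Toy matrix: rows are genes, columns are six cells (values invented).
counts = [
    [4, 0, 2, 0, 0, 0],  # gene_a
    [1, 0, 3, 0, 0, 0],  # gene_b: detected in the same cells as gene_a
    [0, 5, 0, 0, 1, 0],  # gene_c: detected in different cells
]
detected = binarize(counts)
```

In this example `gene_a` and `gene_b` have identical detection patterns despite different count magnitudes, while `gene_c` shares no detecting cells with either of them.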

Cellular Heterogeneity: Unraveling Population Diversity

Stem cell populations often contain cells at various stages of differentiation, creating substantial transcriptional diversity. This heterogeneity manifests in scRNA-seq data as multimodal expression distributions, where genes show distinct expression patterns across different subpopulations [5]. Unlike bulk RNA-seq, which averages expression across thousands of cells, scRNA-seq captures this cellular diversity, requiring analytical approaches that can identify and model multiple cell states simultaneously. This characteristic is particularly relevant for stem cell researchers investigating lineage commitment, where identifying transitional states is crucial for understanding differentiation trajectories.

Data Multimodality: Complex Distributions Across Cells

The combination of biological heterogeneity and technical artifacts creates data multimodality, where expression values do not follow a single continuous distribution but instead cluster into multiple modes [5]. This complexity challenges conventional DE tools that assume unimodal distributions. As shown in Figure 1, multimodal distributions require specialized statistical approaches that can capture these patterns rather than simply comparing mean expression levels between conditions.

Comparative Analysis of scRNA-seq Differential Expression Tools

Methodologies and Statistical Approaches

Differential expression tools for scRNA-seq employ diverse statistical frameworks to address data sparsity, heterogeneity, and multimodality:

- Two-Part Models: MAST (Model-based Analysis of Single-cell Transcriptomics) and SCDE (Single-Cell Differential Expression) each separate gene detection from expression level. MAST fits a two-part hurdle model, with a logistic regression for whether a gene is detected in a cell and a Gaussian linear model for its log-expression in the cells where it is detected. SCDE fits a two-component mixture in which dropout events are modeled with a Poisson distribution and amplified gene expression with a negative binomial distribution [5]. Both approaches explicitly account for the excess zeros in the data.

- Distribution-Based Methods: scDD (scRNA-seq Differential Distributions) employs a Bayesian framework to identify genes with differential distributions beyond just mean expression, categorizing differences into four modalities: differential proportion, mean, magnitude, or shape [5]. This approach is particularly suited for detecting heterogeneous responses in stem cell populations.

- Nonparametric Approaches: Tools like SigEMD and EMDomics use Earth Mover's Distance (EMD) to measure dissimilarities between entire expression distributions without assuming specific parametric forms, making them robust to multimodal data [5].

- Zero-Inflated Models: DEsingle utilizes a zero-inflated negative binomial (ZINB) regression model to estimate the proportion of real zeros versus dropout zeros and classifies DE genes into three categories based on the type of difference detected [5].
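
To make the distance used by the nonparametric approaches above more tangible, here is a minimal Python sketch of the Earth Mover's Distance between two one-dimensional expression samples. It covers only the distance itself; the significance testing and preprocessing performed by SigEMD or EMDomics are not shown, and the function name and values are hypothetical.

```python
def emd_1d(x, y):
    """Earth Mover's Distance between two 1-D samples.

    Computed as the area between the two empirical CDFs, which equals the
    1-D EMD (Wasserstein-1 distance) when every observation has equal weight.
    """
    if not x or not y:
        raise ValueError("both samples must be non-empty")
    xs, ys = sorted(x), sorted(y)
    points = sorted(set(xs) | set(ys))
    i = j = 0
    total = 0.0
    for left, right in zip(points, points[1:]):
        # Advance the CDF counters past every value <= left.
        while i < len(xs) and xs[i] <= left:
            i += 1
        while j < len(ys) and ys[j] <= left:
            j += 1
        total += abs(i / len(xs) - j / len(ys)) * (right - left)
    return total

# Invented log-expression values: same mean, different shape.
unimodal = [2.0, 2.0, 2.0, 2.0]
bimodal = [0.0, 0.0, 4.0, 4.0]
distance = emd_1d(unimodal, bimodal)
```

Both samples have a mean of 2.0, so a test that compares means alone would see no difference, yet the distance between the two distributions is clearly non-zero.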

Table 1: Overview of scRNA-seq Differential Expression Tools

| Tool | Statistical Model | Input Data | Key Features | Stem Cell Application |
|---|---|---|---|---|
| MAST | Two-part generalized linear model | Normalized expression | Models dropout rate and conditional expression; handles covariates | Identifying lineage-specific markers in heterogeneous cultures |
| scDD | Bayesian modeling of distributions | Normalized expression | Detects differential distribution patterns; identifies multimodal genes | Finding subpopulation-specific responses to differentiation cues |
| D3E | Non-parametric or analytic models | Read counts | Designed for heterogeneous data without preprocessing; analyzes raw counts | Detecting early fate bias in apparently homogeneous stem cells |
| DEsingle | Zero-inflated negative binomial | Read counts | Classifies DE into three types; estimates real vs. dropout zeros | Distinguishing technical artifacts from biological zeros in rare populations |
| SigEMD | Earth Mover's Distance | Normalized expression | Non-parametric; compares entire expression distributions | Identifying genes with complex expression changes during maturation |

Experimental Protocols for Benchmarking Studies

Comprehensive evaluations of DE tools follow standardized workflows to ensure fair comparisons. A typical benchmarking protocol involves:

- Data Simulation: Generating synthetic scRNA-seq data with known differential expression status using parameters estimated from real datasets (e.g., immortalized B-cell samples) [19]. This creates a gold standard for evaluating true positive and false positive rates.

- Real Data Validation: Applying tools to experimentally validated datasets with established marker genes, such as human peripheral blood mononuclear cells (PBMCs) or stem cell differentiation time courses [5] [20].

- Performance Metrics Calculation: Assessing tools based on:

- Accuracy: Area under the receiver operating characteristic curve (AUROC) and area under the precision-recall curve (AUPRC)

- Precision and Recall: Trade-off between true positive rates and false discovery rates

- Runtime and Scalability: Computational efficiency with increasing cell numbers

- Biological Relevance: Gene set enrichment analysis of detected DE genes [5]
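
As a rough illustration of how such metrics can be computed once the simulated ground truth is known, the sketch below derives precision, recall, and AUROC for one tool. The gene identifiers, scores, and function names are hypothetical, and the AUROC uses a simple pairwise definition rather than any particular benchmarking package.

```python
def precision_recall(called, truth):
    """Precision and recall of a set of called DE genes against the truth."""
    called, truth = set(called), set(truth)
    true_positives = len(called & truth)
    precision = true_positives / len(called) if called else 0.0
    recall = true_positives / len(truth) if truth else 0.0
    return precision, recall


def auroc(scores, labels):
    """Probability that a true DE gene outranks a non-DE gene (ties count 0.5)."""
    pos = [s for s, y in zip(scores, labels) if y]
    neg = [s for s, y in zip(scores, labels) if not y]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical simulation: g1, g2, g4, g5 are truly DE; the tool calls g1-g3.
precision, recall = precision_recall(["g1", "g2", "g3"], ["g1", "g2", "g4", "g5"])

# Hypothetical per-gene scores (higher = stronger evidence) with 1 = truly DE.
area = auroc([0.9, 0.8, 0.3, 0.1], [1, 0, 1, 0])
print(precision, recall, area)
```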

The following workflow diagram illustrates the standard experimental protocol for benchmarking DE tools:

Figure 1: Experimental workflow for benchmarking DE analysis tools

Performance Comparison Across Tool Categories

Evaluation studies reveal significant differences in tool performance across various data characteristics relevant to stem cell research:

Table 2: Performance Comparison of DE Tools Across Data Challenges

| Tool | Dropout Handling | Heterogeneity Detection | Multimodality Sensitivity | Stem Cell Data Recommendation |
|---|---|---|---|---|
| MAST | High (explicit dropout model) | Medium | Low | Recommended when covariate adjustment is needed |
| scDD | Medium | High (designed for heterogeneity) | High (detects distribution changes) | Ideal for identifying subpopulation markers |
| D3E | Medium | High | Medium | Suitable for analyzing raw count data without normalization |
| DEsingle | High (models zero inflation) | Medium | Medium | Preferred for distinguishing biological vs. technical zeros |
| SigEMD | Low | High | High | Best for detecting complex distributional changes |
| DESeq2 | Low (designed for bulk) | Low | Low | Not recommended for heterogeneous single-cell data |

Benchmarking analyses consistently show a trade-off between true positive rates and precision across methods [5]. Tools with higher true positive rates typically show lower precision because they admit more false positives, while methods with high precision tend to have lower true positive rates because they call fewer DE genes. Notably, methods specifically designed for scRNA-seq data do not always outperform bulk RNA-seq methods adapted for single-cell analysis [5]. Agreement between tools in calling DE genes is generally low, which makes it important to select a method based on the specific biological question and data characteristics.

Visualization Strategies for scRNA-seq Data Interpretation

Effective visualization is crucial for interpreting scRNA-seq analysis results, particularly for exploring cellular heterogeneity and expression patterns:

- UMAP (Uniform Manifold Approximation and Projection): Visualizes both local and global relationships, preserving population structure across scales to identify distinct cell types or states [21]. This is particularly valuable for observing stem cell subpopulations.

- t-SNE (t-Distributed Stochastic Neighbor Embedding): Emphasizes local cellular relationships and highlights fine population structure, but may not preserve global geometry [22] [21].

- Violin Plots: Show expression distribution of marker genes across clusters, combining statistical summary with distribution shape to reveal bimodal or skewed expression patterns [21] [23].

- Feature Plots: Display expression patterns of genes on dimensionality reduction plots (UMAP/t-SNE) to visualize co-expression or mutual exclusivity in different cell types [23].

- Volcano Plots: Visualize differentially expressed genes by plotting statistical significance (-log₁₀(p-value)) against magnitude of change (log₂ fold change), highlighting genes with large and significant expression differences [23].
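
The volcano plot coordinates described above can be derived from a DE result table in a few lines. The sketch below is a minimal example with hypothetical gene names, values, and cut-offs (p < 0.05 and an absolute log₂ fold change of at least 1); in practice adjusted p-values are usually used, and the resulting points would be passed to a plotting library.

```python
import math


def volcano_points(results, alpha=0.05, min_abs_lfc=1.0):
    """Turn (gene, log2 fold change, p-value) rows into volcano plot points.

    Returns (gene, x, y, status) where x is the log2 fold change and
    y is -log10(p-value). Thresholds are illustrative assumptions.
    """
    points = []
    for gene, lfc, pval in results:
        y = -math.log10(max(pval, 1e-300))  # guard against p-values of zero
        if pval < alpha and lfc >= min_abs_lfc:
            status = "up"
        elif pval < alpha and lfc <= -min_abs_lfc:
            status = "down"
        else:
            status = "ns"
        points.append((gene, lfc, y, status))
    return points


# Hypothetical DE results for three genes.
table = [("geneA", 2.0, 0.001), ("geneB", -1.5, 0.01), ("geneC", 0.2, 0.6)]
for point in volcano_points(table):
    print(point)
```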

Table 3: Essential Visualization Techniques for scRNA-seq Analysis

| Visualization | Primary Purpose | Strengths | Limitations | Stem Cell Application |
|---|---|---|---|---|
| UMAP | Cell population identification | Preserves global and local structure; faster computation | Distance interpretation requires caution | Mapping differentiation trajectories |
| t-SNE | Fine cluster examination | Excellent local structure preservation; emphasizes clusters | Loses global structure; computationally intensive | Identifying rare transitional states |
| Violin Plot | Expression distribution analysis | Shows full distribution shape and summary statistics | Limited to one gene at a time | Comparing marker expression across conditions |
| Volcano Plot | DE result overview | Quickly identifies significant large-effect genes | Does not show expression patterns across cells | Prioritizing candidate genes for validation |
| Dot Plot | Multi-gene, multi-cluster summary | Compact visualization of expression and detection rate | Loses individual cell resolution | Screening multiple stem cell markers simultaneously |

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful scRNA-seq analysis in stem cell research requires both computational tools and appropriate analytical frameworks:

Table 4: Essential Research Reagent Solutions for scRNA-seq Analysis

| Resource Category | Specific Tools/Frameworks | Function | Application Context |
|---|---|---|---|
| Differential Expression Tools | MAST, scDD, DEsingle | Identify statistically significant expression changes | Finding lineage-specific markers; response genes |
| Clustering Algorithms | Seurat, SC3, PhenoGraph | Identify cell populations without prior labels | Discovering novel stem cell states |
| Data Integration Platforms | scVI, Scanpy, Seurat | Batch correction and multi-sample analysis | Integrating data from multiple differentiation experiments |
| Visualization Packages | SCope, C-DIAM Multi-Omics Studio | Interactive exploration of single-cell data | Communicating findings; exploratory analysis |
| Pathway Analysis Tools | GSEA, Reactome, WikiPathways | Biological interpretation of DE results | Understanding functional implications of gene sets |

The unique characteristics of scRNA-seq data (dropouts, heterogeneity, and multimodality) demand specialized analytical approaches tailored to specific research questions in stem cell biology. No single differential expression method outperforms all others across all scenarios, so tool selection must be strategic. For identifying subpopulation-specific markers in heterogeneous stem cell cultures, distribution-based methods like scDD offer superior sensitivity to multimodal expression patterns. When analyzing rare cell populations, or when distinguishing technical dropouts from biological zeros is crucial, zero-inflated models like DEsingle provide more accurate characterization. For studies requiring covariate adjustment or analyzing focused gene sets, MAST's two-part model maintains robust performance. Stem cell researchers should prioritize tools that explicitly address the data challenges most relevant to their experimental systems, validate findings across multiple analytical approaches when possible, and maintain rigorous visualization practices so that biological insights rest on appropriate computational frameworks.

In stem cell research, the journey from raw sequencing data to a gene count matrix is a critical foundation for downstream discoveries. This process, involving the alignment of sequencing reads to a reference and the quantification of gene expression, directly impacts the reliability of identifying differentially expressed genes in crucial systems, from hematopoietic stem cells (HSCs) to pluripotent stem cells [24] [15]. With numerous bioinformatic pipelines available, researchers face the challenge of selecting the most appropriate tools for their specific experimental context. This guide provides an objective comparison of common alignment and quantification pipelines, framing their performance within the rigorous demands of stem cell research, where accurately identifying subtle transcriptional changes can illuminate disease mechanisms and potential therapeutic targets [24] [25].

Performance Comparison of scRNA-seq Alignment Tools

A benchmark study evaluating five common alignment tools on 10X Genomics datasets revealed significant differences in runtime, cell detection, and gene quantification [26]. The table below summarizes the key findings.

Table 1: Performance comparison of common scRNA-seq alignment tools on 10X Genomics data

| Tool | Alignment Approach | Runtime | Barcode Correction | Key Strengths | Potential Limitations |
|---|---|---|---|---|---|
| Cell Ranger 6 | Classical alignment (STAR) | Moderate | Whitelist-based | High precision; standard for 10X data | Resource-intensive; kit-dependent |
| STARsolo | Classical alignment | Fast (vs Cell Ranger) | Whitelist-based | High precision; faster and less memory-hungry than Cell Ranger | Can still be memory intensive |
| Kallisto | Pseudo-alignment | Fastest | Whitelist-based (post-alignment) | Extremely fast; high number of reported cells | Overrepresentation of cells with low gene content; potential mapping artefacts |
| Alevin | Selective alignment (pseudo) | Moderate (improved with fry) | Putative whitelist (knee point) | Accurate cell calling; rare low-content cells | Historically slower; requires parameter tuning |
| Alevin-fry | Custom pseudo-alignment | Fast | Putative whitelist | Memory-efficient; fast processing | Relatively new; less extensively benchmarked |

Striking differences were observed in the overall runtime, with Kallisto being the fastest [26]. However, speed must be balanced with accuracy; Kallisto reported the highest number of cells but also an overrepresentation of cells with low gene content and unknown cell type, whereas Alevin rarely reported such low-content cells [26]. Furthermore, the set of expressed genes varied, with Kallisto detecting additional genes from the Vmn and Olfr families that are likely mapping artefacts [26].

The choice of gene annotation also significantly influences results. Using a filtered annotation (containing only protein-coding, lncRNA, and immunoglobulin genes) versus a full Ensembl annotation (which includes pseudogenes) affects mitochondrial content calculation and gene composition, which can alter downstream interpretation [26].
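
The effect of annotation choice on mitochondrial content can be seen with a small calculation. The sketch below is illustrative only: the counts are invented, `PSEUDO1` is a placeholder for a pseudogene present only in the full annotation, and the `MT-` prefix assumes human gene symbols.

```python
def percent_mito(counts, mito_prefix="MT-", keep=None):
    """Percentage of a cell's counts assigned to mitochondrial genes.

    `keep` optionally restricts the calculation to genes in a filtered
    annotation; genes outside it are dropped before computing the total.
    """
    if keep is not None:
        counts = {g: c for g, c in counts.items() if g in keep}
    total = sum(counts.values())
    mito = sum(c for g, c in counts.items() if g.startswith(mito_prefix))
    return 100.0 * mito / total if total else 0.0


# Hypothetical counts for a single cell.
cell = {"MT-CO1": 10, "POU5F1": 50, "PSEUDO1": 40}

full = percent_mito(cell)                                 # full annotation
filtered = percent_mito(cell, keep={"MT-CO1", "POU5F1"})  # pseudogene removed
print(full, filtered)
```

Because removing genes shrinks the denominator, the same cell can fall on different sides of a fixed mitochondrial quality-control cut-off depending on the annotation used.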

Differential Expression Analysis Workflows for Single-Cell Data

When integrating multiple scRNA-seq batches for differential expression (DE) analysis, a comprehensive benchmark of 46 workflows provides critical insights [14]. The performance of these methods is substantially impacted by batch effects, sequencing depth, and data sparsity.

Table 2: Performance of differential expression analysis strategies across different data conditions

| Analysis Strategy | High Sequencing Depth | Low Sequencing Depth | Small Batch Effects | Large Batch Effects | Key Tools |
|---|---|---|---|---|---|
| Covariate Modeling | Good | Good | Can slightly deteriorate | Substantial improvement | MASTCov, ZWedgeRCov, DESeq2Cov, limmatrend_Cov |
| Batch-Effect Corrected (BEC) Data | Rarely improves analysis | Rarely improves analysis | Rarely improves analysis | Rarely improves analysis | scVI (some improvement when paired with limmatrend) |
| Meta-analysis | Does not improve on naïve DE | Improved performance for low depth | Does not improve on naïve DE | Does not improve on naïve DE | LogN_FEM, FEM |
| Pseudobulk Methods | Good for small effects | Good for small effects | Good | Worst for large effects | edgeR, DESeq2 (on pseudobulk counts) |
| Naïve DE Analysis | Good with the right tool | Good with the right tool | Good | Poor | limmatrend, Wilcoxon test, DESeq2, MAST |

For single-cell DE analysis with multiple batches, the benchmark suggests that using batch-corrected data rarely improves, and can even deteriorate, the analysis [14]. In contrast, including batch as a covariate in the statistical model often improves performance, especially when batch effects are large [14]. At low sequencing depths, methods like Wilcoxon test on log-normalized data and fixed effects model (FEM) meta-analysis perform well, whereas single-cell-specific methods based on zero-inflation models (e.g., MAST) may deteriorate in performance [14].
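
The benefit of modeling batch as a covariate can be illustrated on a single gene with ordinary least squares. The sketch below is a toy example, not one of the benchmarked workflows: the expression values are noise-free and invented (intercept 5, condition effect 1, batch effect 2), and the helper `ols` is a hypothetical minimal solver written for this illustration.

```python
def ols(X, y):
    """Least-squares coefficients via the normal equations (Gauss-Jordan)."""
    p = len(X[0])
    # Augmented matrix [X'X | X'y].
    A = [[sum(r[i] * r[j] for r in X) for j in range(p)]
         + [sum(r[i] * yi for r, yi in zip(X, y))] for i in range(p)]
    for c in range(p):
        pivot = max(range(c, p), key=lambda r: abs(A[r][c]))
        A[c], A[pivot] = A[pivot], A[c]
        d = A[c][c]
        A[c] = [v / d for v in A[c]]
        for r in range(p):
            if r != c:
                f = A[r][c]
                A[r] = [vr - f * vc for vr, vc in zip(A[r], A[c])]
    return [A[i][p] for i in range(p)]


# (condition, batch) for eight cells; both batches contain both conditions,
# but treated cells are over-represented in batch 1.
design = [(0, 0)] * 3 + [(1, 0)] + [(0, 1)] + [(1, 1)] * 3
y = [5 + 1 * cond + 2 * batch for cond, batch in design]

treated = [v for v, (c, _) in zip(y, design) if c == 1]
control = [v for v, (c, _) in zip(y, design) if c == 0]
naive_effect = sum(treated) / len(treated) - sum(control) / len(control)

# Design matrix columns: intercept, condition, batch.
coef = ols([[1, c, b] for c, b in design], y)
adjusted_effect = coef[1]
print(naive_effect, adjusted_effect)
```

The naive comparison absorbs part of the batch shift into the condition effect (2.0 instead of the true 1.0), whereas the model with a batch term recovers the simulated effect.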

Experimental Protocols for Benchmarking

Benchmarking scRNA-seq Alignment Tools

The comparative analysis of scRNA-seq alignment tools was conducted using three published datasets for human and mouse, sequenced with different versions of the 10X Genomics protocol [26]. The methodology can be summarized as follows:

- Tools Compared: Cell Ranger version 6, STARsolo, Kallisto, Alevin, and Alevin-fry.

- Evaluation Metrics: Differences were evaluated in whitelisting (cell calling), gene quantification, overall performance (runtime and memory), and the impact on downstream analysis (clustering and detection of differentially expressed genes).

- Annotation Sets: The study compared the effects of using a filtered gene annotation (as recommended by 10X Genomics) versus a complete Ensembl annotation, including pseudogenes and other biotypes, on mitochondrial content and gene composition [26].

Benchmarking Differential Expression Workflows

The benchmark of 46 DE workflows employed both model-based simulation using the splatter R package and model-free simulation using real scRNA-seq data to incorporate realistic and complex batch effects [14]. The core protocol included:

- Workflow Components: Ten batch-effect correction methods (e.g., ZINB-WaVE, MNN, scMerge, Seurat, ComBat), covariate models, three meta-analysis methods (wFisher, FEM, REM), and seven DE methods (including DESeq2, edgeR, limmatrend, MAST, and the Wilcoxon test) were combined.

- Experimental Design: Focus was on a "balanced" design where each batch contained both sample conditions to be compared, enabling batch effects to be accommodated in the DE model.

- Performance Metrics: For simulated data, the F-score and area under the precision-recall curve (AUPR) were used, with an emphasis on F0.5-scores and partial AUPR for recall rates <0.5 to weigh precision higher than recall [14].
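
The F-score with a tunable weight can be written out directly. The sketch below uses the standard F-beta definition, with beta = 0.5 weighting precision more heavily than recall; the precision and recall values are hypothetical.

```python
def f_beta(precision, recall, beta=1.0):
    """F-beta score; beta < 1 weights precision more heavily than recall."""
    if precision == 0 and recall == 0:
        return 0.0
    b2 = beta * beta
    return (1 + b2) * precision * recall / (b2 * precision + recall)


# Two hypothetical workflows with mirrored precision and recall.
precise = f_beta(0.8, 0.4, beta=0.5)    # high precision, low recall
sensitive = f_beta(0.4, 0.8, beta=0.5)  # low precision, high recall
print(precise, sensitive, f_beta(0.8, 0.4))
```

Both workflows share the same F1-score, but the F0.5-score ranks the high-precision workflow above the high-recall one.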

Visualizing the Bioinformatics Workflow

The following diagram illustrates the standard pathway from FASTQ files to a count matrix, highlighting the key decision points for tool selection.

For researchers embarking on scRNA-seq analysis, the following resources and tools are indispensable.

Table 3: Key resources for scRNA-seq data analysis in stem cell research

| Resource Category | Specific Examples | Function/Purpose |
|---|---|---|
| Reference Atlases | Stemformatics Myeloid Cell Atlas [15] | Benchmark in-vitro-derived stem cells against primary human myeloid cell references. |
| Quality Control Tools | FASTQC, MultiQC, fastp, Trim Galore [27] | Assess and improve raw read quality; remove adapter sequences and low-quality bases. |
| Alignment & Quantification | STAR, Kallisto (bustools), Alevin-fry, Cell Ranger [26] [28] | Map reads to a reference genome/transcriptome and generate gene-cell count matrices. |
| Doublet Detection | Scrublet (Python), DoubletFinder (R) [29] | Identify and remove artifacts from multiple cells sharing the same barcode. |
| Batch Effect Correction | Seurat, SCTransform, scVI, ComBat [14] [29] | Remove technical variation between samples processed in different batches. |
| Differential Expression | limmatrend, MAST (with covariate), Wilcoxon test [14] | Identify statistically significant gene expression changes between conditions. |

Selecting an optimal pipeline from FASTQ to count matrix is a decisive step in stem cell transcriptomics. Evidence suggests that pseudo-aligners like Kallisto and Alevin-fry offer remarkable speed, while traditional aligners like STARsolo and Cell Ranger provide high precision [26]. For differential expression analysis across multiple batches, modeling batch as a covariate in the DE model consistently outperforms analyzing batch-corrected data [14]. As stem cell research continues to leverage scRNA-seq to unravel the molecular underpinnings of development and disease, making informed choices during data preprocessing will ensure that downstream biological conclusions are built upon a robust and accurate foundation.

Selecting and Applying Differential Expression Tools for Robust Stem Cell Insights

The emergence of single-cell RNA sequencing (scRNA-seq) has fundamentally transformed stem cell research by enabling the dissection of cellular heterogeneity at unprecedented resolution. Unlike bulk RNA-seq, which measures average gene expression across cell populations, scRNA-seq captures the transcriptomic landscape of individual cells, revealing rare cell types, dynamic transitions, and complex lineage relationships that are fundamental to stem cell biology [30]. However, this technological advancement presents substantial analytical challenges, including high levels of technical noise, excessive zeros (dropouts), and complex multimodality that demand specialized statistical approaches [31] [32].

The selection of an appropriate differential expression (DE) methodology is particularly critical in stem cell studies, where accurately identifying subtle transcriptional differences between closely related cellular states can determine success in identifying novel progenitors, understanding differentiation pathways, or discovering disease-relevant cellular subpopulations. This article provides a systematic taxonomy and comparative assessment of the three predominant methodological frameworks for single-cell differential expression analysis: parametric, non-parametric, and bulk-derived approaches. By synthesizing recent benchmarking studies and experimental validations, we aim to equip researchers with evidence-based guidance for selecting optimal analytical strategies tailored to specific research questions and experimental designs in stem cell biology.

Parametric Methods

Parametric methods operate on strong assumptions about the underlying distribution of single-cell data. These approaches specify a probabilistic model for the gene expression counts and estimate the parameters of this distribution from the data.

- Negative Binomial (NB) Models: Originally developed for bulk RNA-seq, these models account for overdispersion (variance exceeding the mean) common in count-based sequencing data. Tools like edgeR and DESeq2 have been adapted for single-cell analysis, though they may struggle with the excessive zero inflation characteristic of scRNA-seq [32].

- Zero-Inflated Negative Binomial (ZINB) Models: These extend NB models by incorporating an additional component that explicitly models the excess zeros in scRNA-seq data. Methods like ZINB-WaVE use this framework to better capture the bimodal nature of single-cell expression distributions, where zeros may represent both technical dropouts and biological absence of expression [31] [32].

- Hurdle Models: These two-component models separately handle the probability of a zero (binary component) and the mean of positive expression values (continuous component). MAST (Model-based Analysis of Single-cell Transcriptomics) employs a hurdle model with a logistic regression component for zeros and a Gaussian linear model for log-transformed non-zero expressions [32].
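
The two components of a hurdle model can be summarized per group before any regression is fitted. The sketch below is a simplified illustration, not the MAST implementation: it reports the detection rate (the discrete part) and the mean of log₂(count + 1) among expressing cells (the continuous part) for hypothetical counts.

```python
import math


def hurdle_summary(values):
    """Return (detection rate, mean log2(count + 1) among non-zero cells)."""
    positive = [math.log2(v + 1) for v in values if v > 0]
    detection_rate = len(positive) / len(values)
    mean_positive = sum(positive) / len(positive) if positive else 0.0
    return detection_rate, mean_positive


# Hypothetical counts of one gene in two groups of four cells.
group_a = [0, 0, 3, 3]
group_b = [0, 3, 3, 3]

print(hurdle_summary(group_a))  # half of the cells express the gene
print(hurdle_summary(group_b))  # three quarters express it, at the same level
```

Here the groups differ only in how often the gene is detected, not in its level when detected, a distinction that a single mean-based comparison would blur.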

Non-Parametric Methods

Non-parametric methods make fewer assumptions about the underlying data distribution, instead relying on rank-based statistics or resampling techniques.

- Rank-Based Approaches: Methods like the Wilcoxon rank-sum test compare the ranks of expression values between groups rather than the raw values themselves, making them robust to outliers and non-normality.

- Resampling Methods: Bootstrap and permutation tests estimate the sampling distribution of test statistics empirically rather than assuming a theoretical distribution, providing flexibility for complex data structures.
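
A permutation test is straightforward to sketch with the standard library. The example below estimates a two-sided p-value for the difference in group means by shuffling group labels; the function name, the number of permutations, the seed, and the expression values are all illustrative assumptions.

```python
import random


def permutation_pvalue(a, b, n_perm=2000, seed=0):
    """Two-sided permutation p-value for the difference in group means."""
    rng = random.Random(seed)  # fixed seed for reproducibility
    observed = abs(sum(a) / len(a) - sum(b) / len(b))
    pooled = list(a) + list(b)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)
        perm_a, perm_b = pooled[:len(a)], pooled[len(a):]
        diff = abs(sum(perm_a) / len(perm_a) - sum(perm_b) / len(perm_b))
        if diff >= observed - 1e-12:  # tolerance for floating-point ties
            hits += 1
    # Add-one correction keeps the estimate strictly above zero.
    return (hits + 1) / (n_perm + 1)


# Hypothetical normalized expression of one gene in two cell groups.
progenitors = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
differentiated = [11.0, 12.0, 13.0, 14.0, 15.0, 16.0]
p_separated = permutation_pvalue(progenitors, differentiated)
p_identical = permutation_pvalue(progenitors, progenitors)
print(p_separated, p_identical)
```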

Bulk-Derived Methods

Bulk-derived methods encompass statistical approaches originally developed for bulk RNA-seq analysis that have been subsequently applied to single-cell data, often with modifications to address single-cell-specific characteristics.

- Bulk RNA-seq Adaptations: Tools like DESeq2, edgeR, and limma-voom were designed for bulk data but remain in use for single-cell analysis, particularly for analyses aggregated to pseudo-bulk counts or for high-coverage full-length scRNA-seq protocols [33] [32].

- Compositional Methods: Approaches like ALDEx2 that address the compositional nature of sequencing data (where counts are relative rather than absolute) can be applied to both bulk and single-cell data, though they may not fully capture the zero-inflation specific to scRNA-seq [32].
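
Pseudo-bulk aggregation, mentioned above, amounts to summing counts over all cells that share a sample and cell type before handing the result to a bulk tool. The sketch below shows one minimal way to do this; the input layout, identifiers, and counts are hypothetical.

```python
from collections import defaultdict


def pseudobulk(cells):
    """Sum gene counts over cells sharing the same (sample, cell type).

    `cells` is an iterable of (sample_id, cell_type, {gene: count}) tuples.
    """
    totals = defaultdict(lambda: defaultdict(int))
    for sample, cell_type, counts in cells:
        for gene, count in counts.items():
            totals[(sample, cell_type)][gene] += count
    return {key: dict(genes) for key, genes in totals.items()}


# Hypothetical cells from two donors.
cells = [
    ("donor1", "HSC", {"geneA": 2, "geneB": 0}),
    ("donor1", "HSC", {"geneA": 1, "geneB": 4}),
    ("donor2", "HSC", {"geneA": 5}),
]
profiles = pseudobulk(cells)
print(profiles)
```

Each resulting profile represents one biological replicate, so the downstream test compares samples rather than treating individual cells as independent observations.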

Table 1: Core Methodological Categories for Single-Cell Differential Expression Analysis

| Category | Underlying Assumptions | Representative Tools | Key Strengths | Principal Limitations |
|---|---|---|---|---|
| Parametric | Assumes data follows specific probability distributions (e.g., NB, ZINB) | MAST, ZINB-WaVE, DESeq2 | Statistical efficiency when assumptions are met; direct probabilistic interpretation | Potential bias when distributional assumptions are violated |
| Non-Parametric | Minimal assumptions about data distribution | Wilcoxon rank-sum, Scater | Robustness to outliers and distributional misspecification | Generally lower statistical power; may overlook data characteristics |
| Bulk-Derived | Adapts bulk RNA-seq assumptions, often ignoring zero-inflation | DESeq2, edgeR, limma | Leverages established, validated frameworks | Poor handling of scRNA-seq excess zeros; potentially high false positive rates |

Comparative Performance Benchmarking: Insights from Systematic Evaluations

Distributional Assumptions and Goodness-of-Fit

The suitability of distributional assumptions fundamentally impacts methodological performance. A comprehensive benchmark evaluating statistical methods across single-cell, bulk RNA-seq, and metagenomics data revealed important insights about how well different models capture the characteristics of real scRNA-seq data [32].

The Negative Binomial distribution demonstrated the lowest root mean square error (RMSE) for mean count estimation in both 16S and whole metagenome shotgun sequencing data, which share sparsity characteristics with scRNA-seq data, followed by the Zero-Inflated Negative Binomial distribution [32]. Both distributions showed symmetric error distributions around zero, indicating no systematic bias in mean estimation. Conversely, the Zero-Inflated Gaussian distribution consistently underestimated observed means, while the Dirichlet-Multinomial distribution overestimated low mean counts and underestimated high mean counts [32].

For zero probability estimation, hurdle models provided the most accurate estimates of observed zero proportions in sparse data, while NB and ZINB distributions tended to overestimate zero probabilities for features with low observed zero counts [32]. This finding highlights the critical importance of selecting methods whose underlying distributions align with the specific characteristics of the experimental data.
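
One simple way to check how well a count model accounts for zeros is to compare the observed zero fraction with the zero probability the model implies. The sketch below does this for a negative binomial with mean `mu` and size (inverse dispersion) `size`, for which P(0) = (size / (size + mu))^size, and for a Poisson with the same mean. The counts and the size parameter are hypothetical, and this is not the procedure used in the cited benchmark.

```python
import math


def nb_zero_prob(mu, size):
    """Zero probability of a negative binomial with mean mu and size parameter."""
    return (size / (size + mu)) ** size


def poisson_zero_prob(mu):
    """Zero probability of a Poisson distribution with mean mu."""
    return math.exp(-mu)


# Hypothetical counts for one gene across ten cells.
counts = [0, 0, 0, 0, 1, 1, 2, 3, 5, 8]
mu = sum(counts) / len(counts)
observed_zero_fraction = counts.count(0) / len(counts)

print(observed_zero_fraction)
print(nb_zero_prob(mu, size=1.0))  # assumed size parameter, for illustration
print(poisson_zero_prob(mu))
```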

Performance Across Experimental Scenarios

Method performance varies substantially across different experimental conditions and data types. A systematic evaluation of simulation methods for scRNA-seq data examined 12 methods across 35 experimental datasets, assessing their ability to maintain biological signals—a critical consideration for differential expression analysis [31].

Table 2: Comparative Performance of Selected Methods Across Evaluation Criteria

| Method | Type | Data Property Estimation | Biological Signal Retention | Scalability | Applicability |
|---|---|---|---|---|---|
| ZINB-WaVE | Parametric | High | Medium | Low | General purpose |
| SPARSim | Parametric | High | Medium | High | General purpose |
| SymSim | Parametric | High | Medium | Medium | General purpose |
| scDesign | Parametric | Medium | High | Medium | Power calculation |
| zingeR | Parametric | Medium | High | Medium | DE evaluation |
| SPsimSeq | Semi-parametric | Medium | Low | Low | General purpose |

The benchmark revealed that no single method outperformed all others across all evaluation criteria, indicating that optimal method selection depends on specific research goals and data characteristics [31]. Methods excelling in data property estimation (e.g., ZINB-WaVE, SPARSim, SymSim) accurately captured technical characteristics of scRNA-seq data, while others designed for specific purposes like power calculation (scDesign) or DE evaluation (zingeR) performed better at retaining biological signals despite lower accuracy in estimating overall data properties [31].

Integrated Approaches for Enhanced Robustness

Given the limitations of individual methods, integrated approaches that combine multiple algorithms may offer improved robustness. DElite is an R package that leverages four state-of-the-art DE tools (edgeR, limma, DESeq2, and dearseq) and provides a statistically combined output [33]. This approach demonstrated improved performance for detecting DE genes in small datasets, which are common in stem cell research where sample availability may be limited [33].

The package implements six different statistical methods for combining p-values (Lancaster's, Fisher's, Stouffer's, Wilkinson's, Bonferroni-Holm's, Tippett's) and returns the intersection of genes identified as DE by all four tools, attributing the least significant p-value (Max-P) to enhance robustness [33]. Validation on both synthetic and real-world RNA-sequencing data supported the improved performance of these combination approaches, particularly for small datasets with limited statistical power [33].
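
To show what combining p-values involves, the sketch below implements three of the named rules (Fisher's, Stouffer's, and Max-P) using only the Python standard library. It is not DElite's code; the function names and the four example p-values, standing in for one gene tested by four tools, are hypothetical, and all p-values are assumed to lie strictly between 0 and 1.

```python
import math
from statistics import NormalDist


def fisher_combine(pvalues):
    """Fisher's method: -2 * sum(ln p) follows chi-square with 2k df."""
    k = len(pvalues)
    half_stat = -sum(math.log(p) for p in pvalues)  # half the test statistic
    # Closed-form chi-square survival function for even degrees of freedom.
    term, total = 1.0, 1.0
    for i in range(1, k):
        term *= half_stat / i
        total += term
    return math.exp(-half_stat) * total


def stouffer_combine(pvalues):
    """Stouffer's method: average the z-scores of one-sided p-values."""
    normal = NormalDist()
    z = sum(normal.inv_cdf(1 - p) for p in pvalues) / math.sqrt(len(pvalues))
    return 1 - normal.cdf(z)


def max_p(pvalues):
    """Max-P: report the least significant p-value among the tools."""
    return max(pvalues)


# Hypothetical p-values for one gene from four DE tools.
gene_pvalues = [0.01, 0.03, 0.02, 0.04]
print(fisher_combine(gene_pvalues))
print(stouffer_combine(gene_pvalues))
print(max_p(gene_pvalues))
```

Max-P is the most conservative of the three, since a gene is only as significant as its weakest result, whereas Fisher's and Stouffer's rules pool evidence across tools.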

Practical Applications in Stem Cell Research

Analytical Considerations for Stem Cell Data

Stem cell datasets present specific analytical challenges that influence method selection. These datasets often exhibit:

- Continuous differentiation trajectories rather than discrete cell populations, requiring methods that can handle graded expression changes [7]

- Rare cell populations such as stem and progenitor cells, demanding high sensitivity to detect subtle expression differences

- Complex lineage relationships where cells share partial expression programs, complicating traditional group comparisons

- Technical variability introduced by low RNA content in certain stem cell states and sensitive protocols

The construction of a comprehensive human embryo reference through integration of six published scRNA-seq datasets demonstrates the importance of appropriate analytical frameworks for stem cell applications [7]. This resource, covering development from zygote to gastrula, enables precise annotation of cell identities in stem cell-based embryo models—a critical validation step that depends on accurate differential expression analysis between in vivo and in vitro systems [7].

Experimental Design and Workflow Considerations

Proper experimental design and analysis workflows are essential for generating biologically meaningful results in stem cell studies.

Feature selection significantly impacts downstream analysis quality. A recent benchmark evaluating feature selection methods for scRNA-seq integration found that using highly variable genes generally produces high-quality integrations and improves query mapping, label transfer, and detection of unseen populations [34]. The number of selected features, batch-aware feature selection, and lineage-specific feature selection all meaningfully affect performance, with integration models interacting differently with feature selection strategies [34].
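
A very simple form of highly variable gene selection ranks genes by dispersion (variance divided by mean) and keeps the top few. The sketch below is a deliberately minimal illustration with hypothetical gene names and counts; established toolkits model the mean-variance trend more carefully, and this is not the procedure evaluated in the cited benchmark.

```python
from statistics import mean, pvariance


def top_variable_genes(expr, n_top):
    """Rank genes by dispersion (variance / mean) and return the top n_top.

    `expr` maps each gene to its list of counts across cells.
    """
    scores = {}
    for gene, values in expr.items():
        m = mean(values)
        scores[gene] = pvariance(values) / m if m > 0 else 0.0
    return sorted(scores, key=scores.get, reverse=True)[:n_top]


# Hypothetical counts for three genes across four cells.
expr = {
    "geneA": [0, 0, 10, 10],  # switches between states: high dispersion
    "geneB": [5, 5, 5, 5],    # constant: zero dispersion
    "geneC": [4, 6, 4, 6],    # mild variation
}
selected = top_variable_genes(expr, n_top=2)
print(selected)
```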

Table 3: Key Research Reagent Solutions for Single-Cell Stem Cell Studies

| Resource Category | Specific Tools/Databases | Primary Function | Relevance to Stem Cell Research |
|---|---|---|---|
| Reference Databases | StemMapper, Human Embryo Reference | Curated gene expression references | Provides benchmark for stem cell identity and differentiation status |
| Analysis Platforms | Nygen, BBrowserX, Partek Flow | Integrated analysis environments | Accessible DE analysis for non-bioinformaticians |
| Experimental Design | SPARSim, SymSim | Data simulation | Power calculation and experimental optimization |
| Method Integration | DElite | Combined statistical approaches | Enhanced robustness for small stem cell datasets |

StemMapper represents a particularly valuable resource for the stem cell research community. This manually curated database contains over 960 transcriptomes covering a broad range of human and mouse stem cell types, with standardized processing and stringent quality control to minimize artifacts [35]. Its user-friendly interface enables fast querying, comparison, and interactive visualization of quality-controlled stem cell gene expression data, facilitating the identification of novel marker genes and lineage signatures [35].

The expanding methodological landscape for single-cell differential expression analysis offers both opportunities and challenges for stem cell researchers. No single method universally outperforms others across all experimental scenarios, underscoring the importance of selective method application based on specific research questions, data characteristics, and analytical requirements.

Parametric methods provide statistical efficiency when their distributional assumptions are satisfied, while non-parametric approaches offer robustness to violations of these assumptions. Bulk-derived methods, though suboptimal for many single-cell applications, may remain useful for specific contexts such as high-coverage data or pseudo-bulk analyses. For critical applications in stem cell research, particularly with limited sample sizes, integrated approaches that combine multiple algorithms may provide enhanced robustness.

As single-cell technologies continue evolving, with emerging approaches like long-read sequencing enabling isoform-resolution analysis [36] [37], methodological frameworks must similarly advance. The development of specialized reference atlases for stem cell biology [35] [7] and continued benchmarking efforts [31] [32] [34] will be essential for guiding method selection and advancing our understanding of stem cell biology through single-cell transcriptomics.

Differential expression (DE) analysis represents a fundamental methodology in genomic research, enabling researchers to identify genes whose expression changes significantly between different biological conditions. With the advent of single-cell RNA sequencing (scRNA-seq) technologies, the field has witnessed a paradigm shift from bulk tissue analysis to cellular-resolution transcriptomics. This transition has created both unprecedented opportunities and significant analytical challenges, as scRNA-seq data exhibit unique characteristics including high sparsity, substantial technical noise, and complex heterogeneity [38] [5]. In stem cell research, where understanding cellular heterogeneity and lineage specification is paramount, these challenges are particularly acute. The scientific community has responded by developing numerous computational methods for DE analysis, ranging from adaptations of established bulk RNA-seq tools to novel algorithms designed specifically for single-cell data.

Among the plethora of available methods, DESeq2, edgeR, and limma have maintained their prominence despite being originally developed for bulk RNA-seq, while pseudobulk approaches have emerged as particularly powerful strategies for analyzing multi-sample, multi-condition scRNA-seq experiments. This guide provides a comprehensive comparison of these top-performing methods based on extensive benchmarking studies, with special consideration for applications in stem cell research. We examine their underlying statistical frameworks, relative performance metrics, and practical implementation requirements to equip researchers with the evidence needed to select appropriate tools for their specific experimental questions.

Performance Benchmarking: Quantitative Comparisons Across Methodologies

Comprehensive Performance Metrics from Recent Benchmarking Studies

Rigorous benchmarking studies have evaluated differential expression methods across multiple dimensions, including detection accuracy, false discovery control, computational efficiency, and robustness to experimental designs with limited replication. The tables below summarize key findings from these investigations, providing quantitative comparisons essential for method selection.

Table 1: Overall performance characteristics of major DE method categories based on benchmarking studies

| Method Category | Representative Tools | Key Strengths | Key Limitations | Recommended Context |
|---|---|---|---|---|
| Pseudobulk Methods | edgeR, DESeq2, limma with aggregation | Excellent false discovery control, handles biological replicates appropriately, minimal bias toward highly expressed genes | May miss subtle subpopulation differences, requires sufficient biological replicates | Multi-sample, multi-condition experiments with defined biological replicates |
| Bulk RNA-seq Methods (single-cell application) | edgeR, DESeq2, limma | Robust statistical models, extensive community validation, well-documented | May not fully address single-cell specific characteristics like zero inflation | Well-powered studies with adequate cell numbers per population |
| Single-cell Specific Methods | MAST, Wilcoxon, t-test | Can capture cell-to-cell variability, no aggregation required | Prone to pseudoreplication bias, inflated false discovery rates | Preliminary analyses, detection of strong effects in homogeneous populations |
| Mixed Models | MASTRE, NEBULA-LN, muscatMM | Accounts for within-sample correlation, nuanced modeling | Computational intensity, implementation complexity | When subject-level effects need explicit modeling as random effects |

Table 2: Performance metrics from benchmarking studies of differential expression methods

| Method | AUROC Range | Sensitivity | Specificity | F1-Score | Computational Efficiency |
|---|---|---|---|---|---|
| Pseudobulk-edgeR | 0.82-0.91 | High | High | 0.79-0.87 | Moderate |
| Pseudobulk-DESeq2 | 0.80-0.89 | High | High | 0.77-0.85 | Moderate |
| Pseudobulk-limma | 0.79-0.88 | Moderate-High | High | 0.76-0.84 | High |
| edgeR (single-cell) | 0.75-0.84 | Moderate-High | Moderate | 0.70-0.79 | Moderate |
| DESeq2 (single-cell) | 0.73-0.82 | Moderate | Moderate-High | 0.69-0.78 | Moderate |
| MAST | 0.68-0.79 | Moderate | Moderate | 0.65-0.74 | Low-Moderate |
| Wilcoxon | 0.65-0.76 | High | Low-Moderate | 0.62-0.72 | High |
| t-test | 0.62-0.74 | Moderate | Low-Moderate | 0.60-0.70 | High |

The performance advantages of pseudobulk approaches are particularly pronounced in studies involving multiple biological replicates, where they effectively control false discoveries by properly accounting for between-replicate variation [39] [40]. One landmark study evaluating 18 differential state (DS) analysis methods found that pseudobulk methods and mixed models that incorporate subjects as random effects significantly outperformed naïve single-cell methods that treat all cells as independent observations [39]. The naïve methods achieved higher sensitivity, but at the cost of substantially more false positives, which compromises their reliability for downstream biological interpretation.

The Critical Importance of Biological Replicates in Experimental Design

Benchmarking studies have consistently demonstrated that proper accounting of biological replicates represents perhaps the most important factor in obtaining accurate differential expression results. Methods that fail to incorporate this hierarchical structure of multi-sample scRNA-seq data—where cells from the same biological sample show more similar expression patterns than cells across different samples—are vulnerable to pseudoreplication bias [39] [40].
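To make the cost of pseudoreplication concrete, the sketch below applies the standard design-effect formula for equally sized clusters, n_eff = N / (1 + (m - 1) * ICC), where m is the number of cells per sample and ICC is the within-sample correlation. The function name and the example values (3 samples, 1,000 cells each, ICC = 0.1) are illustrative assumptions, not figures from the cited benchmarks.

```python
def effective_sample_size(n_samples: int, cells_per_sample: int, icc: float) -> float:
    """Effective number of independent observations for equally sized clusters.

    Uses the design effect 1 + (m - 1) * ICC, where m is the cluster size.
    """
    total_cells = n_samples * cells_per_sample
    design_effect = 1.0 + (cells_per_sample - 1) * icc
    return total_cells / design_effect


# Hypothetical design: 3 samples per condition, 1,000 cells each, ICC = 0.1.
n_eff = effective_sample_size(n_samples=3, cells_per_sample=1000, icc=0.1)
print(f"3000 cells carry the information of about {n_eff:.1f} independent observations")
```

Under these assumed values, thousands of cells carry roughly the information of a few dozen independent observations, which is why tests that treat every cell as a replicate tend to produce overconfident p-values.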

This phenomenon was starkly illustrated in a comprehensive benchmarking study that compared fourteen DE methods across eighteen "gold standard" datasets where both scRNA-seq and bulk RNA-seq data were available from the same biological samples [40]. The investigation revealed that all six top-performing methods shared a common characteristic: they aggregated cells within biological replicates to form pseudobulks before applying statistical tests. The performance advantage of pseudobulk methods was maintained across multiple concordance metrics, including alignment with bulk RNA-seq results, prediction of protein abundance changes, and biological relevance of enriched Gene Ontology terms [40] [41].

A particularly insightful finding emerged from analysis of bias patterns: single-cell DE methods systematically identified highly expressed genes as differentially expressed even when their expression remained unchanged between conditions [40]. This bias was experimentally validated using datasets containing synthetic mRNA spike-ins, where single-cell methods incorrectly flagged many abundant spike-ins as differentially expressed, while pseudobulk methods appropriately recognized their constant expression across conditions [40]. This systematic tendency toward false discoveries among highly expressed genes poses particular challenges in stem cell research, where accurately identifying subtle expression changes in regulatory genes is critical for understanding differentiation processes.

Methodological Deep Dive: Statistical Frameworks and Implementation

Pseudobulk Implementation Workflows

The pseudobulk approach transforms single-cell data into a structure compatible with established bulk RNA-seq analysis methods by aggregating gene expression counts across cells within biological replicates. The typical workflow involves:

- Quality Control and Filtering: Remove poor-quality cells, doublets, empty droplets, and dead cells based on established quality metrics [39].

- Cell Type Identification: Assign cell identities using clustering and annotation approaches.

- Aggregation: For each biological sample and cell type, sum the raw gene expression counts across all cells to create pseudobulk samples [42].

- Differential Expression Analysis: Apply bulk RNA-seq methods (edgeR, DESeq2, or limma) to the pseudobulk expression matrix [40] [42].

This aggregation strategy effectively addresses the within-sample correlation structure inherent in multi-sample scRNA-seq experiments and dramatically reduces the impact of zero inflation, particularly for lowly expressed genes [40] [42]. The resulting data structure more closely matches the assumptions of the statistical models underlying bulk RNA-seq methods, leading to improved calibration of test statistics and more accurate error rate control.
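The aggregation step can be sketched in a few lines of plain Python. This is a minimal illustration, assuming each cell is represented as a (sample, cell type, raw counts) tuple; the function name, the data layout, and the toy donor and gene labels are hypothetical, and real analyses would typically operate on sparse matrices.

```python
from collections import defaultdict


def pseudobulk(cells, min_cells=10):
    """Sum raw counts per (sample, cell_type) and drop groups with too few cells.

    `cells` is an iterable of (sample_id, cell_type, {gene: raw_count}) tuples.
    """
    sums = defaultdict(lambda: defaultdict(int))
    n_cells = defaultdict(int)
    for sample_id, cell_type, counts in cells:
        key = (sample_id, cell_type)
        n_cells[key] += 1
        group = sums[key]  # ensures the group exists even if the cell has no counts
        for gene, count in counts.items():
            group[gene] += count
    return {key: dict(genes) for key, genes in sums.items() if n_cells[key] >= min_cells}


# Toy data (made-up values); min_cells is lowered to 2 so the example stays small.
toy_cells = [
    ("donor1", "HSC", {"GATA2": 3, "CD34": 1}),
    ("donor1", "HSC", {"GATA2": 2}),
    ("donor2", "HSC", {"GATA2": 1, "CD34": 4}),
    ("donor1", "MPP", {"CD34": 2}),
]
pb = pseudobulk(toy_cells, min_cells=2)
print(pb)
```

Each surviving (sample, cell type) entry becomes one column of the pseudobulk matrix that is passed to the bulk RNA-seq method.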

Figure 1: Pseudobulk analysis workflow for differential expression analysis of single-cell data.

Statistical Foundations of Leading Methods

DESeq2 employs a negative binomial generalized linear model (GLM) with shrinkage estimation for dispersion and fold changes. It accounts for sequencing depth differences through median-of-ratios size factors, which enter the model fitted to the raw counts. The regularized log (rlog) and variance-stabilizing (VST) transformations that the package also provides are intended for visualization and clustering rather than for testing. For hypothesis testing, DESeq2 offers both Wald tests and likelihood ratio tests (LRT), with the latter particularly useful for complex experimental designs [43]. The method's sophisticated approach to dispersion estimation enables robust performance even with limited replication.
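As an illustration of how sequencing-depth size factors can be derived, the sketch below implements the median-of-ratios idea in plain Python: each sample's counts are compared with a per-gene geometric mean, and the median ratio is taken as the size factor. It is a simplified stand-in written for this guide, not DESeq2's own code; the function name and toy counts are assumptions.

```python
import math
from statistics import median


def size_factors(counts):
    """Median-of-ratios size factors.

    `counts` is a list of genes, each a list of raw counts (one per sample).
    """
    n_samples = len(counts[0])
    # Genes with a zero in any sample have no finite log geometric mean and are skipped.
    usable = [row for row in counts if all(c > 0 for c in row)]
    log_geo_means = [sum(math.log(c) for c in row) / n_samples for row in usable]
    factors = []
    for j in range(n_samples):
        log_ratios = [math.log(row[j]) - lgm for row, lgm in zip(usable, log_geo_means)]
        factors.append(math.exp(median(log_ratios)))
    return factors


# Toy matrix: sample 2 was sequenced twice as deeply as sample 1.
toy_counts = [[10, 20], [100, 200], [5, 10], [0, 7]]
sf = size_factors(toy_counts)
print(sf)
```

In this toy case the second sample's size factor is twice the first, so dividing counts by the size factors puts both samples on a common scale.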

edgeR similarly utilizes a negative binomial model but employs a quantile-adjusted conditional maximum likelihood (qCML) or GLM approach for estimation. The method incorporates empirical Bayes moderation to share information across genes, stabilizing dispersion estimates particularly for genes with low counts [39] [43]. edgeR's Trimmed Mean of M-values (TMM) normalization effectively handles composition biases between samples. Benchmarking studies have noted that edgeR often detects more differentially expressed genes compared to DESeq2, though with generally good overlap in identified genes [43].

limma (Linear Models for Microarray Data) was originally developed for microarray analysis but has been adapted for RNA-seq data through the voom transformation, which converts count data to approximately normally distributed log2 counts per million (logCPM) with precision weights. This transformation enables application of limma's established empirical Bayes moderation framework for estimating gene-wise variability [38] [39]. The method excels in complex experimental designs with multiple factors and provides particularly strong performance when sample sizes are limited.
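The logCPM part of the voom transformation is simple enough to write out. The sketch below applies the offset form log2((count + 0.5) / (library size + 1) * 10^6); it covers only this transformation and does not estimate the precision weights, which require fitting the mean-variance trend. The function name and example numbers are assumptions.

```python
import math


def voom_log_cpm(count: float, lib_size: float) -> float:
    """log2 counts per million with the 0.5 / 1 offsets used by the voom transformation."""
    return math.log2((count + 0.5) / (lib_size + 1.0) * 1e6)


# A hypothetical gene with 10 reads in a pseudobulk library of 1,000,000 reads.
example = voom_log_cpm(10, 1_000_000)
print(round(example, 3))
```

The offsets keep the value finite for zero counts, which is one reason the transformation behaves better on aggregated pseudobulk counts than on sparse per-cell counts.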

Table 3: Statistical models and normalization strategies of leading DE methods

| Method | Primary Statistical Model | Normalization Approach | Hypothesis Tests Available | Data Requirements |
|---|---|---|---|---|
| DESeq2 | Negative binomial GLM | Median of ratios | Wald test, LRT | ≥2 biological replicates per condition |
| edgeR | Negative binomial GLM | TMM | Exact test, QLF, LRT | ≥2 biological replicates per condition |
| limma | Linear model with empirical Bayes moderation | TMM + voom transformation | Moderated t-test, F-test | ≥3 biological replicates per condition recommended |
| MAST | Two-part hurdle model | CPM + log2 transformation | LRT | Can work with single replicates but with limited reliability |

Experimental Design and Protocol Considerations

Best Practices for Experimental Design in Stem Cell Research

The performance of differential expression methods depends heavily on appropriate experimental design, particularly in stem cell research where biological materials may be limited or exhibit inherent variability. Based on benchmarking evidence, several key principles emerge:

Biological Replication: The most critical factor for reliable DE analysis is adequate biological replication. Studies with only technical replication (multiple cells from the same biological sample) are highly susceptible to pseudoreplication bias: cells are treated as independent replicates, so ordinary sample-to-sample variability is mistaken for a condition effect [40]. Benchmarking studies recommend a minimum of 3-5 biological replicates per condition for robust detection of differentially expressed genes, with more replicates needed for detecting subtle expression changes [39].

Cell Number Considerations: While increasing the number of cells per sample improves power for rare cell population detection, it does not compensate for insufficient biological replication. In fact, analyzing large numbers of cells without proper accounting of biological replicates can exacerbate false discoveries [40]. For pseudobulk approaches, sufficient cells per sample-cell type combination are needed for reliable aggregation—typically at least 10-20 cells per combination, though more are preferable.

Batch Effect Management: In stem cell research where experiments may be conducted across multiple differentiation batches or sequencing runs, incorporating batch factors into the analysis model is essential. The inclusion of sample-level covariates in the design matrix (e.g., ~batch + condition rather than ~condition) significantly improves performance across all methods [43].
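To show what a design such as ~batch + condition expands to, the sketch below builds a treatment-coded design matrix by hand: an intercept column plus one indicator column per non-reference level. The helper name, the sample metadata, and the column naming are illustrative assumptions; in practice the R packages construct this matrix from the formula.

```python
def design_matrix(samples, factors):
    """Treatment-coded design matrix with an intercept.

    `samples` is a list of dicts of sample-level covariates.
    `factors` is a list of (covariate_name, reference_level) pairs.
    """
    columns = ["(Intercept)"]
    encoders = []
    for name, ref in factors:
        # One indicator column for every level other than the reference.
        for level in sorted({s[name] for s in samples} - {ref}):
            columns.append(f"{name}{level}")
            encoders.append((name, level))
    rows = [[1] + [int(s[name] == level) for name, level in encoders] for s in samples]
    return columns, rows


# Hypothetical pseudobulk samples from two differentiation batches.
toy_samples = [
    {"batch": "A", "condition": "control"},
    {"batch": "A", "condition": "differentiated"},
    {"batch": "B", "condition": "control"},
    {"batch": "B", "condition": "differentiated"},
]
cols, rows = design_matrix(toy_samples, [("batch", "A"), ("condition", "control")])
print(cols)
for row in rows:
    print(row)
```

The condition coefficient is then estimated after adjusting for the batch column, which is only possible when every batch contains samples from more than one condition.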

Implementation Protocols for Robust Differential Expression Analysis

Based on consensus findings from multiple benchmarking studies, the following protocol represents current best practices for differential expression analysis in stem cell single-cell RNA-seq studies:

Step 1: Data Preprocessing and Quality Control

- Begin with raw count data rather than normalized values to preserve statistical properties of counting processes [42] [43].

- Filter low-quality cells based on metrics including total counts, detected features, and mitochondrial percentage.

- Remove genes expressed in only a minimal number of cells (e.g., <10 cells) to reduce multiple testing burden.
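The filtering steps above can be sketched as follows. The cell-level thresholds (minimum counts, minimum detected genes, maximum mitochondrial fraction) and the "MT-" gene prefix are assumptions that should be tuned per dataset and species; only the 10-cell gene filter comes from the text. The toy example uses much smaller thresholds so it fits on a few lines.

```python
from collections import Counter


def filter_cells(cells, min_counts=500, min_genes=200, max_mito_frac=0.2, mito_prefix="MT-"):
    """Keep cells that pass total-count, detected-gene, and mitochondrial-fraction cutoffs.

    `cells` is a list of {gene: raw_count} dicts, one per cell.
    """
    kept = []
    for counts in cells:
        total = sum(counts.values())
        detected = sum(1 for c in counts.values() if c > 0)
        mito = sum(c for g, c in counts.items() if g.startswith(mito_prefix))
        if total >= min_counts and detected >= min_genes and mito <= max_mito_frac * total:
            kept.append(counts)
    return kept


def genes_to_keep(cells, min_cells=10):
    """Return genes with a nonzero count in at least `min_cells` cells."""
    n_expressing = Counter(g for counts in cells for g, c in counts.items() if c > 0)
    return {g for g, n in n_expressing.items() if n >= min_cells}


# Toy cells with made-up counts; thresholds are lowered for illustration only.
toy = [
    {"POU5F1": 8, "SOX2": 5, "MT-CO1": 1},
    {"POU5F1": 1, "MT-CO1": 9},            # mostly mitochondrial reads
    {"SOX2": 2},                            # too few counts
    {"POU5F1": 6, "NANOG": 4, "SOX2": 0},
]
kept_cells = filter_cells(toy, min_counts=5, min_genes=2, max_mito_frac=0.2)
kept_genes = genes_to_keep(kept_cells, min_cells=2)
print(len(kept_cells), kept_genes)
```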

Step 2: Cell Type Identification and Stratification

- Perform clustering and cell type annotation using established methods.

- Stratify analysis by cell type to identify cell-type-specific differential expression.

Step 3: Pseudobulk Aggregation

- For each biological sample and cell type combination, sum raw counts across cells to create pseudobulk samples [42].

- Retain only sample-cell type combinations with sufficient cell numbers (≥10-20 cells).

Step 4: Method-Specific Normalization and Modeling

- For DESeq2: Use the DESeqDataSetFromMatrix() function with an appropriate design formula, followed by DESeq() for estimation and results() for extraction. Apply independent filtering to remove low-count genes.

- For edgeR: Create a DGEList object, apply calcNormFactors() for TMM normalization, estimate dispersions with estimateDisp(), and fit models using glmQLFit() and glmQLFTest() for quasi-likelihood F-tests.

- For limma: Convert counts to logCPM with the voom() transformation, which simultaneously normalizes data and estimates precision weights, then apply lmFit(), eBayes(), and topTable() for differential expression testing.

Step 5: Result Interpretation and Validation

- Apply multiple testing correction (e.g., Benjamini-Hochberg FDR control).

- Consider fold change thresholds alongside statistical significance to prioritize biologically meaningful changes.

- Validate key findings using orthogonal methods when possible.
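The first two points of this step can be sketched as below: a Benjamini-Hochberg adjustment followed by a combined significance and effect-size filter. The cutoffs (adjusted p < 0.05, absolute log2 fold change of at least 1), the gene labels, and the p-values are illustrative assumptions, not recommendations from the cited benchmarks.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    n = len(p_values)
    order = sorted(range(n), key=lambda i: p_values[i])
    adjusted = [0.0] * n
    running_min = 1.0
    # Walk from the largest p-value down so each adjusted value is monotone.
    for rank in range(n, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * n / rank)
        adjusted[i] = running_min
    return adjusted


# Hypothetical results for four genes (made-up p-values and log2 fold changes).
genes = ["gene_a", "gene_b", "gene_c", "gene_d"]
p_vals = [0.01, 0.04, 0.03, 0.005]
log2_fc = [2.0, 1.5, 0.2, -0.5]

padj = benjamini_hochberg(p_vals)
hits = [g for g, q, lfc in zip(genes, padj, log2_fc) if q < 0.05 and abs(lfc) >= 1.0]
print(hits)
```

Applying the fold change filter after the adjustment keeps the multiple testing correction valid over the full set of tested genes.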