Pseudobulk RNA-seq Analysis: A Powerful Framework for Comparative Stem Cell Transcriptomics

Abstract

This article provides a comprehensive guide to pseudobulk analysis, a powerful computational approach for comparing transcriptomes across distinct stem cell populations. Tailored for researchers and drug development professionals, we explore the foundational principles that justify its use over mean-centric single-cell methods, detailing robust methodological pipelines from cell sorting and aggregation to statistical testing. The content addresses critical troubleshooting aspects for low-input samples and data integration, and establishes a framework for validation against bulk RNA-seq and functional interpretation. By synthesizing insights from hematopoietic, mesenchymal, and neural stem cell studies, this resource empowers scientists to leverage pseudobulk analysis for uncovering biologically significant differences in stem cell biology and therapeutic potential.

Why Pseudobulk? Establishing the Conceptual Foundation for Stem Cell Comparison

Bridging Single-Cell Resolution and Population-Level Comparisons

Single-cell RNA sequencing (scRNA-seq) has revolutionized our ability to profile heterogeneous cell populations, including stem cell populations, at unprecedented resolution. However, a fundamental challenge emerges when researchers need to make sample-level inferences across multiple biological replicates rather than simply comparing clusters of cells. This is where pseudobulk analysis becomes indispensable—it bridges the gap between single-cell resolution and population-level comparisons by aggregating single-cell data into sample-level representations that account for biological variability between donors, patients, or experimental replicates.

The term "differential state" analysis describes this approach, where a given subset of cells (termed subpopulation) is followed across a set of samples and experimental conditions to identify subpopulation-specific responses [1]. In stem cell research, this enables investigators to uncover how specific stem cell populations respond to perturbations, differentiate over time, or vary between disease states while properly accounting for sample-to-sample variability. Unlike methods that treat individual cells as independent observations—which can lead to inflated significance values due to failure to account for biological replication—pseudobulk approaches align the statistical framework with the experimental design [2] [3].

Performance Comparison of Pseudobulk Methodologies

Quantitative Benchmarking of Analysis Approaches

Comprehensive evaluations have compared various computational frameworks for differential state analysis. These benchmarks assess methods across multiple performance dimensions including statistical power, false discovery control, and computational efficiency.

Table 1: Performance Comparison of Single-Cell Analysis Methods

| Method Type | Examples | Precision | Recall | Specificity | Use Case Strengths |
|---|---|---|---|---|---|
| Pseudobulk (Sum Counts + edgeR/DESeq2) | muscat, edgeR, DESeq2 | High | High | High | Multi-sample, multi-condition designs [1] [2] [3] |
| Pseudobulk (Mean Normalization) | Seurat, Scanpy | Moderate | Moderate | Moderate | Rapid exploratory analysis [2] |
| Cell-Level Mixed Models | MAST, scDD | Variable | Variable | Variable | Single-sample designs [1] |
| Reference-free Deconvolution | - | Low | Low | Low | Exploration when reference unavailable [4] |

Performance assessments consistently demonstrate that pseudobulk approaches based on count aggregation coupled with established bulk RNA-seq tools (edgeR, DESeq2, limma-voom) outperform methods designed specifically for single-cell data when analyzing multi-sample experiments [1] [2]. One evaluation found that pseudobulk methods showed superior specificity and precision compared to alternatives, with the sum-of-counts approach generally outperforming mean normalization strategies [2].

Specialized Methodologies for Enhanced Analysis

Beyond standard pseudobulk implementations, specialized computational methods have emerged to address specific challenges in single-cell data analysis:

- muscat: Provides a comprehensive framework for multi-sample multi-condition scRNA-seq data, enabling detection of subpopulation-specific responses to experimental conditions [1]

- SCORPION: Reconstructs gene regulatory networks suitable for population-level comparisons by leveraging coarse-grained single-cell data, outperforming 12 existing network reconstruction techniques in benchmarking studies [5]

- Heterogeneous Simulation: Enables realistic benchmarking of cell-type deconvolution methods by preserving biological variance more accurately than homogeneous simulation approaches [4]

Table 2: Advanced Computational Tools for Specialized Applications

| Tool | Methodology | Application | Performance Advantage |
|---|---|---|---|
| SCORPION | Message-passing algorithm with coarse-grained data | Gene regulatory network reconstruction | 18.75% higher precision and recall than other methods [5] |
| Heterogeneous Simulation | Constrains cells to biological samples | Deconvolution benchmarking | Produces variance matching real bulk data [4] |
| PARAFAC2-RISE | Tensor decomposition | Multi-condition single-cell analysis | Integrates data across experimental conditions [6] |
| scPoli | Data integration | Atlas-level organoid comparison | Accounts for batch effects while preserving biology [7] |

Experimental Protocols and Workflows

Standardized Pseudobulk Analysis Pipeline

A robust pseudobulk analysis workflow consists of several methodical steps that transform single-cell data into biologically meaningful sample-level comparisons:

Data Preprocessing and Quality Control: Filter cells based on quality metrics (mitochondrial content, number of features) and remove potential doublets. Ensure presence of raw counts in addition to normalized values [3].

Cell Type Annotation: Identify cell populations using clustering and marker gene expression. This can be performed via manual annotation or automated algorithms, resulting in a metadata column (e.g., "annotated") specifying cell type identities [1] [3].

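Pseudobulk Matrix Generation: Aggregate expression by biological replicate and cell type using one of two primary approaches: summing raw counts across cells, or averaging normalized expression values. Benchmarks generally favor the sum-of-counts approach [2].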

Differential Expression Analysis: Process aggregated data using established bulk RNA-seq tools (edgeR, DESeq2, limma-voom) with appropriate experimental design formulas [1] [3].

Interpretation and Validation: Conduct pathway analysis, visualize results, and experimentally validate key findings.

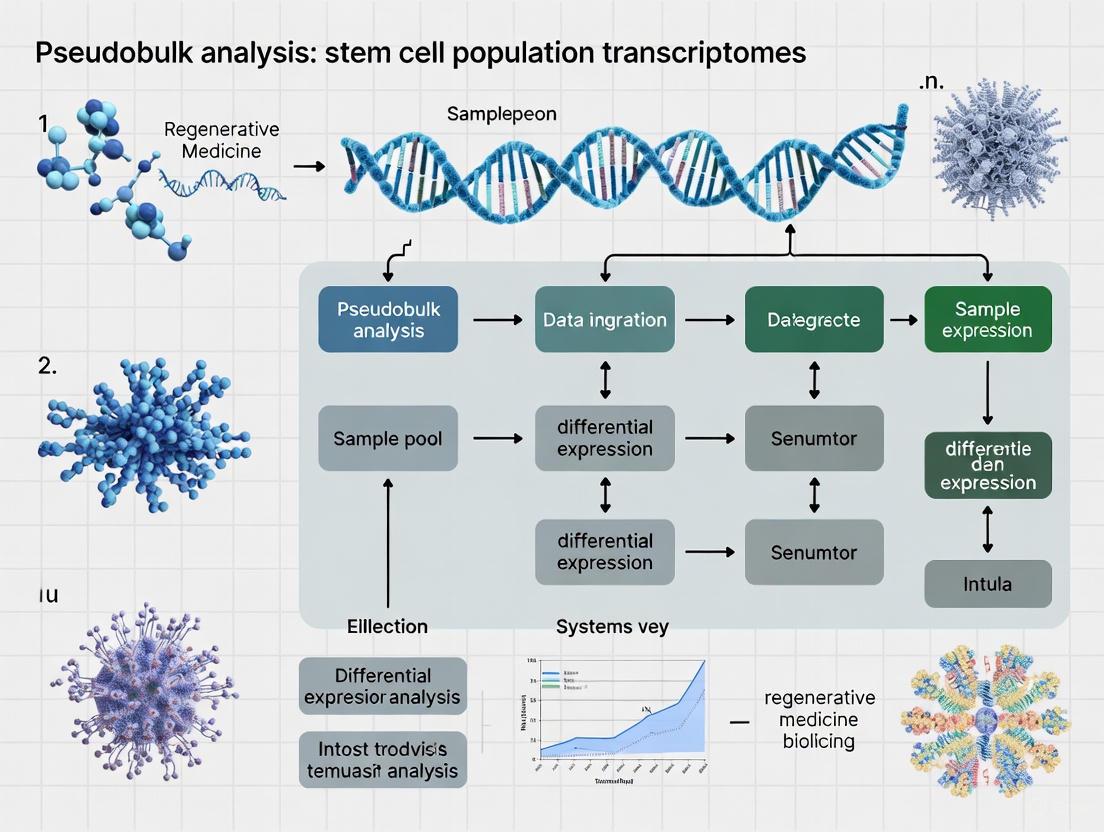

Figure 1: Pseudobulk analysis workflow from single-cell data to biological insights
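The aggregation step of this workflow can be sketched in a few lines. The example below is a minimal illustration using only the Python standard library, not the implementation used by muscat or Decoupler; the function name `pseudobulk_sum`, the toy gene counts, and the donor labels are all hypothetical. It sums raw counts over every cell that shares a (sample, cell type) pair and records how many cells contributed to each profile.

```python
from collections import defaultdict


def pseudobulk_sum(counts, sample_ids, cell_types):
    """Sum raw counts over all cells sharing a (sample, cell type) pair.

    counts:      list of per-cell dicts {gene: raw count}
    sample_ids:  one sample/donor label per cell
    cell_types:  one cell-type label per cell
    Returns ({(sample, cell_type): {gene: summed count}},
             {(sample, cell_type): number of cells}).
    """
    if not (len(counts) == len(sample_ids) == len(cell_types)):
        raise ValueError("need one sample label and one cell-type label per cell")
    profiles = defaultdict(lambda: defaultdict(int))
    n_cells = defaultdict(int)
    for cell, sample, ctype in zip(counts, sample_ids, cell_types):
        key = (sample, ctype)
        n_cells[key] += 1
        for gene, value in cell.items():
            profiles[key][gene] += value
    return {k: dict(v) for k, v in profiles.items()}, dict(n_cells)


# Hypothetical toy data: four cells from two donors, all annotated as "HSC".
cells = [
    {"CD34": 3, "GATA1": 0},
    {"CD34": 1, "GATA1": 2},
    {"CD34": 5, "GATA1": 1},
    {"CD34": 0, "GATA1": 4},
]
samples = ["donor1", "donor1", "donor2", "donor2"]
types = ["HSC", "HSC", "HSC", "HSC"]

profiles, n_cells = pseudobulk_sum(cells, samples, types)
print(profiles[("donor1", "HSC")])  # {'CD34': 4, 'GATA1': 2}
```

Each resulting profile plays the role of one bulk RNA-seq sample, so the statistical unit becomes the donor rather than the cell.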

Experimental Design Considerations for Stem Cell Research

When applying pseudobulk analysis to stem cell populations, several experimental factors require special consideration:

- Replication Strategy: Include sufficient biological replicates (recommended: ≥3 per condition) to estimate sample-to-sample variability accurately [1]

- Cell Numbers: Ensure adequate cell coverage (minimum 50-100 cells per cell type per sample) to generate robust pseudobulk estimates [2]

- Metadata Collection: Document critical experimental factors (donor information, passage number, differentiation batch) to include as covariates in statistical models [3]

- Reference Atlas Integration: For organoid and stem cell differentiation studies, leverage reference atlases like the Human Endoderm-Derived Organoid Cell Atlas (HEOCA) to assess protocol fidelity and identify off-target cells [7]
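The replication and cell-number recommendations above can be checked before any statistical testing. The sketch below is one possible way to do that, assuming a floor of 50 cells per cell type per sample and 3 replicates per condition as quoted in the list; the function name `usable_cell_types` and the sample labels are hypothetical.

```python
def usable_cell_types(n_cells, condition_of, min_cells=50, min_reps=3):
    """Return cell types that have enough well-covered samples in every condition.

    n_cells:      {(sample, cell_type): number of cells}
    condition_of: {sample: condition label}
    A sample counts as a replicate for a cell type only if it contributes
    at least `min_cells` cells of that type.
    """
    conditions = set(condition_of.values())
    reps = {}  # (cell_type, condition) -> number of samples passing min_cells
    for (sample, ctype), n in n_cells.items():
        if n >= min_cells:
            key = (ctype, condition_of[sample])
            reps[key] = reps.get(key, 0) + 1
    cell_types = {ctype for (_, ctype) in n_cells}
    return sorted(
        ct for ct in cell_types
        if all(reps.get((ct, cond), 0) >= min_reps for cond in conditions)
    )


# Hypothetical design: three control and three treated samples.
condition_of = {"s1": "ctrl", "s2": "ctrl", "s3": "ctrl",
                "s4": "treated", "s5": "treated", "s6": "treated"}
n_cells = {(s, "HSC"): 120 for s in condition_of}
n_cells.update({(s, "MPP"): 80 for s in condition_of})
n_cells[("s6", "MPP")] = 20  # below the 50-cell floor

print(usable_cell_types(n_cells, condition_of))  # ['HSC']
```

Here the MPP population is dropped because only two treated samples contribute enough cells, which leaves too few replicates to estimate sample-to-sample variability in that condition.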

Signaling Pathways and Biological Applications

Key Signaling Pathways in Stem Cell Biology

Pseudobulk analysis has been instrumental in characterizing pathway activity across stem cell populations:

- PI3K-AKT-mTOR Signaling: Investigation of PI3K, AKT, and mTOR inhibitors in high-grade serous ovarian cancer revealed feedback loops involving receptor tyrosine kinase activation, mediated by CAV1 upregulation [8]

- Cell Cycle Regulation: CDK inhibitors induce distinct transcriptional shifts across cell models, detectable through pseudobulk analysis of cell type-specific responses [8]

- Epigenetic Regulation: BET and HDAC inhibitors demonstrate consistent cluster-specific effects across multiple stem cell models, suggesting conserved mechanisms of action [8]

Figure 2: Drug resistance pathway identified through pseudobulk pharmacotranscriptomics

Applications in Stem Cell and Organoid Research

The pseudobulk framework has enabled key advances in stem cell research:

- Organoid Fidelity Assessment: Integration of nearly one million cells from 218 organoid samples enabled systematic comparison of PSC-, FSC-, and ASC-derived organoids with their in vivo counterparts, revealing PSC-derived organoids exhibit lower on-target percentages (23.28-83.63%) compared to ASC-derived organoids (98.14%) [7]

- Developmental Trajectory Analysis: Pseudobulk approaches enable quantification of cell type-specific expression changes along differentiation timecourses while accounting for biological variability between different differentiations [7]

- Disease Modeling: Identification of subpopulation-specific responses to inflammatory stimuli like lipopolysaccharide treatment in brain cortex cells, revealing cell-type-specific defense mechanisms [1]

Table 3: Essential Research Reagents and Computational Tools for Pseudobulk Analysis

| Resource | Type | Function | Application Context |
|---|---|---|---|
| Decoupler [3] | Computational Tool | Generates pseudobulk expression matrices from single-cell data | Aggregation by cell type and sample |
| edgeR/DESeq2 [1] [3] | Statistical Package | Differential expression analysis of pseudobulk data | Identifying cell-type-specific responses |
| muscat [1] | R Package | Comprehensive DS analysis for multi-condition experiments | Complex experimental designs with multiple conditions |
| SCORPION [5] | R Package | Gene regulatory network reconstruction | Comparing regulatory networks across populations |
| Cell Hashing Antibodies [8] | Wet-bench Reagent | Sample multiplexing for scRNA-seq | Increasing throughput and reducing batch effects |
| HEOCA [7] | Reference Atlas | Integrated organoid transcriptomes | Assessing organoid fidelity and maturation |
| scPoli [7] | Computational Method | Data integration across datasets | Harmonizing cell annotations across studies |

Pseudobulk analysis represents a powerful statistical framework that bridges single-cell resolution with population-level comparisons in stem cell research. By properly accounting for biological replication through aggregation of single-cell data, these methods enable robust identification of cell-type-specific responses across conditions while controlling false discovery rates. The continuing development of specialized tools—from muscat for multi-condition analysis to SCORPION for network reconstruction—is expanding the applications of pseudobulk approaches in characterizing stem cell populations, evaluating organoid models, and identifying disease-relevant mechanisms. As single-cell technologies continue to evolve, pseudobulk methodologies will remain essential for extracting biologically meaningful insights from complex experimental designs in stem cell biology and regenerative medicine.

Overcoming the Limitations of Mean-Centric Single-Cell Analysis

Single-cell RNA sequencing (scRNA-seq) has revolutionized our ability to study cellular heterogeneity, particularly in complex stem cell populations. However, a persistent challenge in the field has been the proper identification of differentially expressed (DE) genes between conditions while accounting for biological replication. Traditional methods that treat individual cells as independent observations—a mean-centric approach—fundamentally misunderstand the statistical nature of scRNA-seq data generation, leading to inflated false discovery rates and reduced biological accuracy [9] [10]. This analysis guide objectively compares the performance of pseudobulk approaches against alternative methodologies, providing researchers with evidence-based recommendations for analyzing stem cell population transcriptomes.

Performance Benchmarking: Pseudobulk vs. Alternative Methods

Quantitative Performance Metrics

Extensive benchmarking studies consistently demonstrate that pseudobulk methods outperform single-cell-specific approaches across multiple performance metrics when analyzing biological replicates.

Table 1: Comparative Performance of Differential Expression Methods

| Method | Type I Error Control | Power | Computational Speed | Bias Toward Highly Expressed Genes | Reference Performance Metric |
|---|---|---|---|---|---|
| Pseudobulk (DESeq2/edgeR) | Excellent | High | Fast | Minimal | MCC: 0.8-0.95 [11] |
| Mixed Models (GLMMs) | Good | High to Moderate | Slow | Moderate | Type I Error: Near nominal [12] |
| Single-cell Methods (MAST, scVI) | Poor | Variable | Moderate to Slow | Substantial | AUCC: Lower than pseudobulk [9] |
| Naive Methods (t-test/Wilcoxon) | Very Poor | High (false positives) | Fast | Severe | Type I Error: Highly inflated [10] |

A landmark study by Squair et al. (2021) evaluated 14 DE methods across 18 gold-standard datasets where the ground truth was known from matched bulk RNA-seq data. Their analysis revealed that "pseudobulk methods outperformed generic and specialized single-cell DE methods" with highly significant differences in performance [9]. The area under the concordance curve (AUCC) between bulk and scRNA-seq results was substantially higher for pseudobulk approaches, indicating superior biological accuracy.

Matthews Correlation Coefficient Analysis

Murphy and Skene (2022) employed the Matthews Correlation Coefficient (MCC) as a balanced performance measure that considers both type I (false positive) and type II (false negative) error rates. Their analysis demonstrated that "pseudobulk approaches achieve highest performance across individuals and cells variations," with one exception at very small sample sizes (5 individuals and 10 cells) where sum pseudobulk performed worse than the Tobit method [11]. The MCC values for pseudobulk methods typically ranged between 0.8-0.95 across simulation scenarios, significantly outperforming pseudoreplication approaches.
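For readers unfamiliar with the metric, the MCC is computed from the four cells of a confusion matrix as (TP·TN − FP·FN) / sqrt((TP+FP)(TP+FN)(TN+FP)(TN+FN)). The short sketch below implements that standard formula; the gene counts in the example are invented for illustration and are not taken from [11].

```python
import math


def mcc(tp, fp, tn, fn):
    """Matthews Correlation Coefficient from confusion-matrix counts.

    Returns 0.0 when any marginal total is zero, a common convention
    for the otherwise undefined case.
    """
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denom == 0:
        return 0.0
    return (tp * tn - fp * fn) / denom


# Hypothetical benchmark: 1,000 simulated genes, 100 of them truly DE.
# The method recovers 90 true positives and makes 10 false calls.
print(round(mcc(tp=90, fp=10, tn=890, fn=10), 3))  # 0.889
```

Because the numerator penalizes false positives and false negatives together, a method cannot score well on MCC simply by calling many genes significant.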

Table 2: Specialized Method Performance in Atlas-Level Scenarios

| Method Category | Use Case | Recommended Tool | Performance | Runtime |
|---|---|---|---|---|
| Pseudobulk | Individual datasets | DESeq2, edgeR | Excellent | Fast [10] |
| Mixed Models | Complex experimental designs | DREAM | Good | Moderate [10] |
| Permutation-based | Atlas-level analyses | distinct | Excellent | Poor [10] |
| Hierarchical Bootstrap | Adaptive to data structure | Custom implementation | Good | Moderate [10] |

Experimental Protocols for Method Validation

Benchmarking Framework Using Ground Truth Datasets

The most reliable performance assessments come from studies using experimental ground truth rather than simulated data. The following protocol exemplifies rigorous method validation:

Dataset Curation: Identify matched bulk and scRNA-seq datasets profiling the same population of purified cells, exposed to the same perturbations, and sequenced in the same laboratories [9]. Eighteen such "gold standard" datasets were identified in the literature for comprehensive benchmarking.

Method Selection: Include representative methods from major analytical approaches: pseudobulk (DESeq2, edgeR, limma-voom), mixed models (MAST with random effects, GLMM Tweedie), and single-cell methods (Wilcoxon, t-test, scVI) [9] [10].

Concordance Assessment: Calculate the area under the concordance curve (AUCC) between DE results from bulk versus scRNA-seq datasets. This quantifies how well each scRNA-seq method recapitulates known biological truth [9].

Bias Evaluation: Assess systematic biases by analyzing false positive rates across expression levels, using spike-in controls where available to identify genes falsely called as differentially expressed [9].

Functional Validation: Compare Gene Ontology term enrichment analyses between bulk and scRNA-seq DE results to determine which methods produce biologically interpretable findings [9].
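The concordance assessment step can be illustrated with a small function. This is a sketch under stated assumptions rather than the code used in [9]: it assumes both gene lists are already ranked from most to least significant, that the curve counts genes shared between the top-i lists for i = 1..k, and that the area is normalized by its maximum possible value k(k+1)/2. The function name `aucc` and the gene symbols are hypothetical.

```python
def aucc(bulk_ranked, sc_ranked, k=500):
    """Area under the concordance curve between two ranked gene lists.

    bulk_ranked, sc_ranked: gene names ordered from most to least significant.
    For each i in 1..k, count genes shared by the two top-i lists, sum the
    counts, and divide by the maximum possible sum k * (k + 1) / 2.
    """
    k = min(k, len(bulk_ranked), len(sc_ranked))
    if k == 0:
        raise ValueError("both rankings must contain at least one gene")
    area = 0
    for i in range(1, k + 1):
        area += len(set(bulk_ranked[:i]) & set(sc_ranked[:i]))
    return area / (k * (k + 1) / 2)


# Hypothetical rankings from a bulk analysis and a single-cell analysis.
bulk = ["A", "B", "C", "D"]
single_cell = ["A", "C", "B", "E"]
print(aucc(bulk, single_cell, k=4))  # 0.8
```

A value of 1 means the two analyses agree on the top-ranked genes at every list size, and a value of 0 means they share none of them.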

Simulation Studies with Controlled Parameters

For use cases where experimental ground truth is unavailable, well-designed simulation studies provide valuable insights:

Data Generation: Use modified simulation approaches like hierarchicell that properly account for the hierarchical structure of scRNA-seq data, with both differentially expressed and non-differentially expressed genes [11].

Fair Comparisons: Ensure all methods are tested on identical simulated datasets by setting appropriate random number generator seeds [11].

Performance Metrics: Calculate both type I error rates and power simultaneously using balanced metrics like MCC, rather than evaluating these error rates in isolation [11].

Scenario Testing: Evaluate method performance across varying experimental designs, including balanced/unbalanced cell numbers per sample, different proportions of differentially expressed genes, and varying numbers of biological replicates [11] [10].
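A toy version of such a simulation shows why seeding and hierarchical structure matter. The sketch below is a deliberately simplified stand-in for tools like hierarchicell: it assumes Gaussian expression values instead of counts, a donor random effect plus cell-level noise (ICC of 0.5 with the default settings), and sample-level means in place of summed counts. All function names are hypothetical. Every simulated gene is null, so any rejection is a false positive.

```python
import random
import statistics


def pooled_t(a, b):
    """Two-sample t statistic with pooled variance (equal-variance Student t)."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * statistics.variance(a)
                  + (nb - 1) * statistics.variance(b)) / (na + nb - 2)
    return (statistics.mean(a) - statistics.mean(b)) / (pooled_var * (1 / na + 1 / nb)) ** 0.5


def simulate_null_gene(rng, n_donors=5, n_cells=50, sd_donor=1.0, sd_cell=1.0):
    """Simulate one gene with NO true group difference.

    Returns two groups; each group is a list of donors; each donor is a list
    of per-cell values that share the donor's random effect.
    """
    groups = []
    for _ in range(2):
        donors = []
        for _ in range(n_donors):
            donor_effect = rng.gauss(0.0, sd_donor)
            donors.append([donor_effect + rng.gauss(0.0, sd_cell)
                           for _ in range(n_cells)])
        groups.append(donors)
    return groups


def null_rejection_rates(seed, n_genes=200):
    """Fraction of null genes called significant by each strategy."""
    rng = random.Random(seed)  # fixed seed: both strategies see identical data
    naive_hits = 0
    sample_hits = 0
    for _ in range(n_genes):
        group_a, group_b = simulate_null_gene(rng)
        # Naive: every cell is treated as an independent observation.
        cells_a = [x for donor in group_a for x in donor]
        cells_b = [x for donor in group_b for x in donor]
        if abs(pooled_t(cells_a, cells_b)) > 1.96:   # approx. 5% two-sided, large df
            naive_hits += 1
        # Sample-level: one value per donor, so n equals the number of donors.
        means_a = [statistics.mean(donor) for donor in group_a]
        means_b = [statistics.mean(donor) for donor in group_b]
        if abs(pooled_t(means_a, means_b)) > 2.306:  # 5% two-sided, df = 8
            sample_hits += 1
    return naive_hits / n_genes, sample_hits / n_genes


naive_rate, sample_rate = null_rejection_rates(seed=1)
print(f"naive cell-level: {naive_rate:.2f}, sample-level: {sample_rate:.2f}")
```

Under these assumptions the cell-level test should reject far more than the nominal 5% of null genes, while the sample-level test should stay close to it. Rerunning with the same seed reproduces the same dataset, which is what makes comparisons between methods fair.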

Theoretical Foundation: The Statistical Principles of Valid Single-Cell Analysis

The superiority of pseudobulk methods stems from their appropriate handling of the hierarchical structure of scRNA-seq data, which arises from a two-stage sampling design: first, biological specimens are sampled, then multiple cells are profiled from each specimen [10].

This hierarchical structure induces dependencies among cells from the same biological replicate, quantified by the intraclass correlation coefficient (ICC). As Zimmerman et al. noted, "failing to account for the within-individual correlation in scRNA-seq data produces grossly inflated false positives" [12]. The variance of the difference-in-means estimator is inflated by a factor of $1+(m-1)\rho$, where $m$ is the number of cells per sample and $\rho$ is the ICC. With typical values of $m=100$ and $\rho=0.5$, the factor is $1 + 99 \times 0.5 = 50.5$, so the variance is inflated roughly 50-fold, and naive methods dramatically overstate statistical significance [10].
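The inflation factor is easy to compute for any design. The sketch below evaluates it and also reports the corresponding effective number of independent observations (total cells divided by the inflation factor), a standard notion from clustered-sampling theory that is added here for illustration and is not part of the cited analysis. The function names are hypothetical.

```python
def design_effect(cells_per_sample, icc):
    """Variance inflation factor 1 + (m - 1) * rho for clustered observations."""
    return 1 + (cells_per_sample - 1) * icc


def effective_cells(n_samples, cells_per_sample, icc):
    """Number of independent observations the clustered cells are worth."""
    total_cells = n_samples * cells_per_sample
    return total_cells / design_effect(cells_per_sample, icc)


print(design_effect(100, 0.5))                  # 50.5
print(round(effective_cells(6, 100, 0.5), 1))   # 11.9
```

In this example, 600 cells from 6 samples carry about as much independent information as 12 observations, which is why a test that assumes 600 independent cells overstates significance.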

The Researcher's Toolkit: Essential Solutions for Single-Cell Differential Expression

Table 3: Research Reagent Solutions for Single-Cell Differential Expression Analysis

| Tool/Resource | Function | Application Context | Implementation |
|---|---|---|---|
| DESeq2 | Negative binomial generalized linear model | Pseudobulk analysis | R package, standard workflow |
| edgeR | Negative binomial models with robust dispersion estimation | Pseudobulk analysis | R package, quasi-likelihood framework |
| limma-voom | Linear modeling of log-counts with precision weights | Pseudobulk analysis | R package, voom transformation |
| DREAM | Mixed model extension of limma-voom | Complex designs with repeated measures | R package, accounts for subject effects |
| MAST | Hurdle model with random effects | Single-cell specific modeling | R package, accounts for zero inflation |
| NEBULA | Fast negative binomial mixed model | Large multi-subject datasets | R package, approximate likelihood |
| muscat | Multi-condition multi-sample analysis | Comprehensive differential state testing | R Bioconductor package |
| aggregateBioVar | Pseudobulk creation per cell type | Preparing data for bulk tools | R Bioconductor package |

Advanced Considerations for Stem Cell Research

Addressing the Four Curses of Single-Cell Analysis

Recent research has identified four fundamental challenges—"curses"—that plague single-cell DE analysis [13]:

The Curse of Zeros: scRNA-seq data contains abundant zeros, which may represent genuine biological absence or technical dropouts. Pseudobulk methods naturally handle this by reducing zeros through aggregation, while maintaining sensitivity to biologically meaningful absence patterns in stem cell subpopulations.

The Curse of Normalization: Library size normalization methods developed for bulk RNA-seq may be inappropriate for UMI-based scRNA-seq data, as they convert absolute counts to relative abundances. Pseudobulk approaches applied to raw UMI counts preserve absolute quantification while properly accounting for sequencing depth.

The Curse of Donor Effects: Biological variability between donors or samples must be modeled explicitly. Methods that fail to account for this inherent variation produce false discoveries. As Squair et al. demonstrated, single-cell methods "are biased and prone to false discoveries" with the most widely used methods discovering "hundreds of differentially expressed genes in the absence of biological differences" [9].

The Curse of Cumulative Biases: The sequential application of normalization, imputation, and transformation steps can compound biases. Pseudobulk methods minimize this risk through their simpler analytical framework.

Offset Pseudobulk: A Theoretical Advance

A recent theoretical breakthrough demonstrates that "a count-based pseudobulk equipped with a proper offset variable has the same statistical properties as GLMMs in terms of both point estimates and standard errors" [14]. This offset-pseudobulk approach provides the statistical rigor of mixed models with substantially faster computation (>10× speedup) and improved numerical stability, particularly for low-expression transcripts [14].

The evidence from multiple comprehensive benchmarks consistently supports pseudobulk methods as superior for differential expression analysis in single-cell studies, including stem cell population transcriptomics. These approaches demonstrate excellent control of false discoveries, high power to detect true biological signals, computational efficiency, and minimal bias toward highly expressed genes. For most experimental scenarios involving biological replicates, pseudobulk methods implemented with established bulk RNA-seq tools (DESeq2, edgeR, limma-voom) provide the most robust and biologically accurate results. For atlas-level studies with extremely large sample sizes, permutation-based methods offer excellent performance despite computational costs, while DREAM presents a viable compromise for complex designs requiring mixed models. By adopting these evidence-based analytical approaches, researchers can overcome the limitations of mean-centric single-cell analysis and generate more reliable, reproducible insights into stem cell biology.

This guide objectively compares the performance of pseudobulk analysis against other computational strategies for single-cell RNA sequencing (scRNA-seq) data when comparing stem cell populations, their differentiation states, and responses to culture conditions. Pseudobulk analysis, which involves aggregating single-cell transcriptomes into grouped samples, is a cornerstone technique in stem cell research for its robustness in specific experimental designs [15].

Table of Contents

- Introduction to Pseudobulk Analysis in Stem Cell Research

- Performance Comparison: Pseudobulk vs. Other Differential Expression Methods

- Key Applications and Experimental Protocols

- Visualizing Experimental Workflows and Signaling Pathways

- The Scientist's Toolkit: Essential Research Reagents

Single-cell RNA sequencing has revolutionized our ability to study heterogeneous systems, such as stem cell populations and their differentiation intermediates. However, the inherent technical noise and sparsity of scRNA-seq data pose challenges for robust statistical comparisons between groups. Pseudobulk analysis addresses this by summing gene expression counts across cells belonging to the same sample or group (e.g., a specific cell type from one donor or culture condition), creating a "pseudobulk" profile that resembles traditional bulk RNA-seq data [15]. This approach is particularly powerful in stem cell research for benchmarking culture conditions, identifying molecular signatures of potency, and validating differentiation protocols.

Performance Comparison: Pseudobulk vs. Other Differential Expression Methods

The choice of analytical method depends heavily on experimental design, data quality, and the biological question. A comprehensive benchmark of 46 differential expression workflows for single-cell data with multiple batches provides critical insights into method selection [15].

Table 1: Benchmarking Differential Expression Workflows for Single-Cell Data with Batch Effects [15]

| Method Category | Example Methods | Performance with Small Batch Effects | Performance with Large Batch Effects | Performance with Low Sequencing Depth | Recommended Use Case in Stem Cell Research |
|---|---|---|---|---|---|
| Pseudobulk | DESeq2, edgeR on aggregated counts | Good precision-recall (pAUPR) [15] | Lowest F-scores; worsens with more batches [15] | Not the top performer [15] | Well-controlled studies with minimal technical variation; small batch numbers. |
| Covariate Modeling | MASTCov, limmatrendCov | Slight deterioration vs. naïve methods [15] | Among highest performers; robustly improves analysis [15] | Benefit diminishes at very low depth [15] | Default choice for studies with significant technical or donor variation. |
| Batch-Corrected Data | scVI + limmatrend | Rarely improves DE analysis [15] | scVI considerably improves limmatrend [15] | scVI improvement is lost [15] | Specific tool combinations (e.g., scVI) can be effective. |
| Naïve Workflows | Raw_Wilcox, limmatrend | Good performance [15] | Performance drops [15] | Wilcoxon test and LogN_FEM performance enhanced [15] | Preliminary analysis or datasets with no batch effects. |

Key Insight: The benchmark concluded that the use of batch-corrected data rarely improves differential expression analysis, whereas covariate modeling (using uncorrected data with a batch covariate) consistently improves analysis for large batch effects [15]. Pseudobulk methods performed well for small batch effects but were the worst-performing for large batch effects [15].

Key Applications and Experimental Protocols

Application 1: Comparing Closely Related Stem Cell Populations

Objective: To identify transcriptomic differences between highly similar stem cell populations, such as CD34+ and CD133+ hematopoietic stem and progenitor cells (HSPCs), which is crucial for isolating cells with specific regenerative potentials.

Experimental Protocol (as described in Frontiers in Cell and Developmental Biology, 2025) [16] [17]:

- Cell Source: Obtain human umbilical cord blood (UCB) from healthy newborns with appropriate ethical approval.

- Cell Isolation: Isolate mononuclear cells (MNCs) via density gradient centrifugation using Ficoll-Paque.

- Fluorescence-Activated Cell Sorting (FACS):

  - Stain MNCs with a cocktail of fluorescently labeled antibodies: Lineage markers (Lin-FITC), CD45 (PE-Cy7), CD34 (PE), and CD133 (APC).

  - Sort two distinct populations using a high-speed sorter (e.g., MoFlo Astrios EQ):

    - Population A: CD34+Lin−CD45+

    - Population B: CD133+Lin−CD45+

- Single-Cell RNA Sequencing:

  - Process sorted cells directly using the Chromium X Controller (10X Genomics).

  - Prepare libraries using the Chromium Next GEM Single Cell 3' Kit v3.1.

  - Sequence on an Illumina NextSeq 1000/2000 platform, targeting 25,000 reads per cell.

- Data Analysis:

  - Process raw data with Cell Ranger to generate gene-cell matrices.

  - Perform quality control, filtering out cells with <200 or >2,500 detected genes, or with >5% mitochondrial reads.

  - For population comparison, the two datasets can be merged and treated as a "pseudobulk" sample for each population to assess overall correlation and identify subtle, consistent differences [16] [17].

Supporting Data: This optimized scRNA-seq protocol applied to CD34+ and CD133+ HSPCs revealed that the two populations do not differ significantly in their overall gene expression, evidenced by a very strong positive linear relationship (R = 0.99) when analyzed in an integrated pseudobulk manner [16] [17].
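The quality-control thresholds and the pseudobulk correlation described in this protocol can be sketched as follows. This is an illustrative outline only: the source does not state how the correlation was computed, so the log counts-per-million transformation is an assumption, and the function names and the toy pseudobulk profiles are hypothetical.

```python
import math


def passes_qc(n_genes, pct_mito, min_genes=200, max_genes=2500, max_mito=5.0):
    """Keep a cell only if it is inside every threshold quoted in the protocol."""
    return min_genes <= n_genes <= max_genes and pct_mito <= max_mito


def log_cpm(profile):
    """log1p of counts per million for a {gene: summed count} pseudobulk profile."""
    total = sum(profile.values())
    if total == 0:
        raise ValueError("empty pseudobulk profile")
    return {gene: math.log1p(count / total * 1e6) for gene, count in profile.items()}


def pearson(profile_a, profile_b):
    """Pearson correlation of two log-CPM profiles over their shared genes."""
    a, b = log_cpm(profile_a), log_cpm(profile_b)
    genes = sorted(set(a) & set(b))
    x = [a[g] for g in genes]
    y = [b[g] for g in genes]
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    cov = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    var_x = sum((xi - mean_x) ** 2 for xi in x)
    var_y = sum((yi - mean_y) ** 2 for yi in y)
    return cov / math.sqrt(var_x * var_y)


# Hypothetical cells: (detected genes, percent mitochondrial reads).
print([passes_qc(g, m) for g, m in [(150, 2.0), (1800, 3.1), (1800, 7.5), (3000, 1.0)]])
# [False, True, False, False]

# Hypothetical pseudobulk profiles for the two sorted populations.
cd34_pb = {"CD34": 900, "PROM1": 300, "GATA1": 40, "MPO": 10}
cd133_pb = {"CD34": 1700, "PROM1": 700, "GATA1": 70, "MPO": 25}
print(round(pearson(cd34_pb, cd133_pb), 3))
```

Normalizing each profile to counts per million before correlating keeps a difference in the number of sorted cells from being mistaken for a difference in expression.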

Application 2: Mapping Early Differentiation Trajectories

Objective: To reconstruct a continuous map of the earliest differentiation decisions of hematopoietic stem cells (HSCs) across the human lifetime, identifying key genes and branching points.

Experimental Protocol (as described in Nature Communications, 2025) [18]:

- Sample Collection: Collect bone marrow-derived CD34+ HSPCs from 15 healthy donors across age groups (young, middle-aged, old).

- Targeted Single-Cell Multi-Omics: Use BD Rhapsody technology for combined Transcriptomic/AbSeq analysis.

  - Targeted mRNA Panel: Quantify expression of a rationally selected panel of 596 genes related to HSPCs, leukemia, and immune modulation.

  - Surface Proteome Panel: Simultaneously quantify 46 surface antigens using oligonucleotide-labeled antibodies.

- Bioinformatic Reconstruction:

  - After quality control and batch-effect correction, subset the data to the most immature HSPC clusters (HSC/MPP, MPP/MK-Ery, MPP/LMPP).

  - Perform pseudotime analysis (e.g., with tradeSeq) on these ~21,000 cells to model differentiation trajectories.

  - Use pseudobulk DESeq analysis on defined subpopulations (e.g., HSC-1 vs. HSC-2) to find differentially expressed genes driving early fate decisions [18].

Supporting Data: This approach identified four major differentiation trajectories from HSPCs, consistent upon aging, with an early branching point into megakaryocyte-erythroid progenitors [18]. Young donors exhibited a more productive differentiation from HSPCs to committed progenitors of all lineages [18]. Key genes like DLK1 and ADGRG6 showed continuous changes in expression at the earliest branching points, and CD273/PD-L2 was identified as a novel marker for a quiescent, immature HSPC subfraction with immune-modulatory function [18].

Application 3: Integrating Data Across Conditions and Studies

Objective: To leverage publicly available data for comparative analysis of gene regulation across diverse tissues and cell types, overcoming the limitations of individual studies.

Experimental Protocol (The Compass Framework) [19]:

- Data Curation (CompassDB):

  - Collect high-quality, uniformly processed, publicly available single-cell multi-omics data (measuring both chromatin accessibility and gene expression).

  - Compile a resource of over 2.8 million cells from hundreds of human and mouse cell types [19].

- Analysis and Visualization (CompassR):

  - Use the companion open-source R package, CompassR, to query the database.

  - The framework enables the identification of cis-regulatory element (CRE)-gene linkages that are specific to a tissue type or conserved across tissues.

  - While not exclusively pseudobulk, the framework's reliance on large, aggregated data resources embodies the principle of increasing power and generalizability through data integration, a core strength of pseudobulk approaches in a meta-analysis context.

Supporting Data: The Compass framework demonstrates that comparative analysis across a large number of tissues can distinguish whether a gene is regulated by a specific CRE in just one tissue or across multiple tissues, providing a powerful resource for the stem cell community to contextualize their findings [19].

Visualizing Experimental Workflows and Signaling Pathways

Workflow for Hematopoietic Stem Cell Population Comparison

The diagram below illustrates the integrated experimental and computational workflow for comparing stem cell populations, as used in the HSPC study [16] [17].

WNT/YAP Signaling in Alveolar Differentiation

The diagram below models the key signaling pathway involved in the differentiation and maturation of human pluripotent stem cell (hPSC)-derived alveolar organoids, as described in the cited research [20].

The Scientist's Toolkit: Essential Research Reagents

The following table details key reagents and their functions used in the featured stem cell research protocols.

Table 2: Essential Research Reagents for Stem Cell Isolation and Differentiation

| Research Reagent | Specific Example / Clone | Function in Experimental Protocol |

|---|---|---|

| FACS Antibody Panel | CD34 (clone 581), CD133 (clone CD133), CD45 (clone HI30), Lineage Cocktail (CD235a, CD2, CD3, etc.) [17] | Isolation of highly purified hematopoietic stem and progenitor cell (HSPC) populations for downstream transcriptomic analysis. |

| Cell Culture Supplement | CHIR99021 [20] | A small molecule GSK-3 inhibitor that activates WNT signaling, crucial for directing differentiation towards lung and alveolar progenitors. |

| Cell Culture Supplement | Y-27632 (Rho Kinase Inhibitor) [20] | Enhances the survival and recovery of stem cells and organoids after passaging or cryopreservation. |

| Cell Culture Supplement | Activin A [20] | A TGF-β family growth factor used in the first step of differentiation to induce definitive endoderm from pluripotent stem cells. |

| Cell Culture Supplement | Noggin, FGF4, SB431542 [20] | A combination of factors used to pattern definitive endoderm into anterior foregut endoderm, a precursor to lung lineages. |

| Extracellular Matrix | Matrigel [20] | A basement membrane extract used to support the 3D culture and growth of organoids, providing crucial structural and biochemical cues. |

| scRNA-seq Kit | Chromium Next GEM Single Cell 3' Kit (10X Genomics) [17] | For preparing barcoded single-cell RNA sequencing libraries from sorted cell populations. |

This guide provides an objective performance comparison of a pseudobulk analysis strategy against conventional single-cell RNA sequencing (scRNA-seq) approaches for analyzing hematopoietic stem and progenitor cells (HSPCs). The evaluation focuses on an experimental workflow designed to compare two closely related HSPC populations: CD34+Lin−CD45+ and CD133+Lin−CD45+ cells isolated from human umbilical cord blood (UCB) [16] [17] [21].

The core finding demonstrates that while standard scRNA-seq clustering reveals subtle differences between these populations, the pseudobulk approach confirms an exceptionally strong positive linear relationship (R = 0.99) in their transcriptomes [17] [21]. This indicates that despite historical postulations that CD133+ HSPCs might be enriched for more primitive stem cells, their overall gene expression profiles at the population level are remarkably similar [21]. The pseudobulk method proved particularly valuable for drawing robust biological conclusions from limited cell numbers, a common challenge in rare stem cell research [16].

Experimental Workflow & Protocol

The following diagram illustrates the integrated experimental and computational workflow used for the pseudobulk analysis of HSPCs.

Detailed Experimental Methodology

Cell Isolation and Sorting

- Source Material: Human umbilical cord blood (UCB) was obtained from healthy newborns with appropriate ethical approvals [17] [21].

- Mononuclear Cell Isolation: UCB was diluted with phosphate-buffered saline (PBS) and carefully layered over Ficoll-Paque, followed by centrifugation for 30 minutes at 400 × g at 4°C. The mononuclear cell (MNC) phase was collected and washed for further analysis [17].

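- Fluorescence-Activated Cell Sorting (FACS): Cells were stained with antibody cocktails including FITC-conjugated lineage antibodies (CD235a, CD2, CD3, CD14, CD16, CD19, CD24, CD56, CD66b), PE-conjugated anti-CD34, APC-conjugated anti-CD133, and PE-Cy7-conjugated anti-CD45 [17] [21].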

- Cell Populations Sorted: Small events (2-15 μm) were gated as a "lymphocyte-like" population (P1); Lin− events were then selected and analyzed for CD45 together with either CD34 or CD133. The final CD34+Lin−CD45+ and CD133+Lin−CD45+ HSPC populations were sorted on a MoFlo Astrios EQ cell sorter [17].

Single-Cell RNA Sequencing

- Platform: Chromium X Controller (10X Genomics) with Chromium Next GEM Chip G Single Cell Kit [17] [21].

- Library Preparation: Chromium Next GEM Single Cell 3′ GEM, Library & Gel Bead Kit v3.1, and Single Index Kit T Set A were used according to manufacturer's guidelines [17].

- Sequencing: Libraries were pooled and sequenced on an Illumina NextSeq 1000/2000 using P2 flow cell chemistry (200 cycles) in paired-end mode (read 1: 28 bp; read 2: 90 bp), targeting 25,000 reads per cell [17].

Bioinformatic Analysis

- Primary Processing: Raw sequencing files (BCL) were demultiplexed using the Cell Ranger mkfastq pipeline (Cell Ranger version 7.2.0). Reads were mapped to the human genome GRCh38 (version 2020-A) [17] [21].

- Quality Control: Cells with fewer than 200 or more than 2,500 transcripts, and those with >5% mitochondrial transcripts were excluded from analysis [17].

- Clustering and Visualization: Downstream analysis was performed using Seurat (version 5.0.1). Cell subpopulations were identified and visualized using uniform manifold approximation and projection (UMAP) [16] [17].

- Pseudobulk Integration: Individual datasets from CD34+ and CD133+ HSPCs were merged and treated as "pseudobulk" for integrated analysis, emphasizing their combined transcriptional relationship [16].
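The quality-control thresholds listed above can be written as a simple per-cell filter. The following is a minimal sketch in Python, not the Seurat code used in the study; the `Cell` record, its field names, and the example barcodes are hypothetical, while the thresholds are the ones quoted above.

```python
from dataclasses import dataclass


@dataclass
class Cell:
    barcode: str          # hypothetical cell identifier
    n_transcripts: int    # transcripts detected in the cell
    pct_mito: float       # percentage of mitochondrial transcripts


def passes_qc(cell, min_tx=200, max_tx=2500, max_pct_mito=5.0):
    """Keep cells with 200-2,500 transcripts and at most 5% mitochondrial transcripts."""
    return min_tx <= cell.n_transcripts <= max_tx and cell.pct_mito <= max_pct_mito


# Made-up example cells, one per failure mode plus one that passes.
cells = [
    Cell("AAAC", 150, 2.0),    # too few transcripts
    Cell("AAAG", 1200, 3.1),   # within all thresholds
    Cell("AAAT", 3000, 1.0),   # too many transcripts
    Cell("AACA", 900, 7.5),    # mitochondrial fraction too high
]

kept = [c.barcode for c in cells if passes_qc(c)]
print(kept)
```

Only cells that pass this filter would contribute to the merged "pseudobulk" profile, so the thresholds directly determine how many cells remain per population.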

Performance Comparison & Data Analysis

Key Findings from scRNA-seq and Pseudobulk Analysis

Table 1: Comparative Analysis of scRNA-seq vs. Pseudobulk Approaches for HSPC Characterization

| Analysis Parameter | Standard scRNA-seq Clustering | Pseudobulk Integration |

|---|---|---|

| Population Relationship | Reveals subtle subpopulation differences via UMAP clustering [16] | Shows near-identical transcriptomes (R=0.99) [17] [21] |

| Biological Interpretation | Suggests potential heterogeneity within and between populations [16] | Indicates CD34+ and CD133+ HSPCs are highly similar at population level [21] |

| Sensitivity to Rare Cells | Can identify rare subpopulations but requires sufficient cell numbers [16] | Robust approach for limited cell numbers common in HSPC research [16] |

| Technical Requirements | Demanding QC standards: cell viability, mitochondrial reads, transcript counts [17] | Same technical requirements but more forgiving for population-level conclusions [16] |

| Data Integration | Maintains single-cell resolution for heterogeneity assessment [17] | Enables merging of datasets as combined "pseudobulk" profile [16] |

Table 2: Key Quantitative Metrics from HSPC scRNA-seq Experiment

| Experimental Metric | Specification | Impact on Data Quality |

|---|---|---|

| Cells After QC | >200 and <2,500 transcripts; <5% mitochondrial reads [17] | Ensures analysis of high-quality, viable cells |

| Sequencing Depth | 25,000 reads per cell [17] | Provides sufficient coverage for transcript detection |

| Cell Size Gating | 2-15 μm "lymphocyte-like" events [17] | Enriches for target HSPC population |

| Marker Co-expression | CD34+Lin−CD45+ and CD133+Lin−CD45+ [17] [21] | Defines purified HSPC populations without differentiated cells |

| Correlation Strength | R=0.99 between CD34+ and CD133+ populations [17] [21] | Quantifies remarkable transcriptome similarity |

Biological Interpretation of Results

The relationship between the HSPC populations and their developmental context can be visualized through the following biological pathway diagram.

The pseudobulk analysis demonstrated that CD34+ and CD133+ HSPCs share remarkably similar transcriptional programs, challenging the hypothesis that CD133+ marks a distinctly more primitive stem cell population [17] [21]. This finding aligns with emerging understanding of hematopoiesis as a continuous process of differentiation trajectories rather than strictly discrete progenitor populations [18] [22].

The high correlation (R=0.99) between these populations suggests they occupy overlapping functional states in the hematopoietic hierarchy, with both populations capable of giving rise to similar progenitor lineages [21]. This refined understanding could simplify experimental design for studying early hematopoietic differentiation events.
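A population-level correlation of this kind can be illustrated with a short sketch. The example below uses synthetic counts, not the study's data, and assumes one plausible recipe: sum counts over cells, convert to counts per million, log-transform, and compute Pearson's R. The normalization actually used in the cited work is not specified here, so treat this choice as an assumption.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic stand-ins for two sorted populations (cells x genes count matrices)
# that share the same underlying expression program.
n_genes = 500
base_rate = rng.gamma(shape=0.5, scale=2.0, size=n_genes)
counts_cd34 = rng.poisson(base_rate, size=(300, n_genes))
counts_cd133 = rng.poisson(base_rate, size=(250, n_genes))


def pseudobulk_log_cpm(counts):
    """Sum counts over cells, scale to counts per million, and log-transform."""
    summed = counts.sum(axis=0)
    cpm = summed / summed.sum() * 1e6
    return np.log1p(cpm)


profile_cd34 = pseudobulk_log_cpm(counts_cd34)
profile_cd133 = pseudobulk_log_cpm(counts_cd133)

# Pearson correlation between the two population-level profiles.
r = np.corrcoef(profile_cd34, profile_cd133)[0, 1]
print(f"Pearson R = {r:.3f}")
```

Because aggregation averages out cell-to-cell noise, two populations drawn from the same expression program correlate very strongly at this level even when individual cells look different.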

The Scientist's Toolkit

Essential Research Reagents and Solutions

Table 3: Key Research Reagents for HSPC scRNA-seq Studies

| Reagent / Solution | Specific Example | Function in Experimental Workflow |

|---|---|---|

| Cell Separation Medium | Ficoll-Paque | Density gradient separation of mononuclear cells from whole UCB [17] |

| Lineage Depletion Cocktail | FITC-conjugated antibodies against CD235a, CD2, CD3, CD14, CD16, CD19, CD24, CD56, CD66b | Negative selection to remove committed lineage cells [17] [21] |

| HSPC Positive Selection Antibodies | PE-conjugated anti-CD34, APC-conjugated anti-CD133, PE-Cy7-conjugated anti-CD45 | Fluorescence-activated cell sorting of target HSPC populations [17] |

| Single-Cell Library Prep Kit | Chromium Next GEM Single Cell 3' GEM, Library & Gel Bead Kit v3.1 | Generation of barcoded single-cell sequencing libraries [17] |

| Sequencing Platform | Illumina NextSeq 1000/2000 with P2 flow cell | High-throughput sequencing of single-cell libraries [17] |

| Bioinformatic Tools | Cell Ranger (v7.2.0), Seurat (v5.0.1) | Processing, integration, and analysis of single-cell data [17] |

Comparative Advantages and Limitations

Performance Assessment

The pseudobulk integration approach demonstrated particular strength in addressing specific biological questions about population-level transcriptome similarities, outperforming conventional clustering analysis in this specific application [16] [17]. However, standard scRNA-seq clustering remains superior for identifying rare subpopulations and understanding cellular heterogeneity [17].

The exceptional correlation (R=0.99) between CD34+ and CD133+ HSPCs highlights how pseudobulk analysis can reveal fundamental biological relationships that might be obscured by over-interpreting subtle clustering differences in UMAP visualizations [16] [21].

Technical Considerations

The success of this integrated approach depended critically on rigorous quality control throughout the experimental workflow, including:

- Careful FACS gating strategies to ensure population purity [17]

- Stringent QC thresholds during bioinformatic processing [17]

- Appropriate normalization before pseudobulk integration [16]

This methodological rigor provides a template for similar comparative studies of closely related stem cell populations, particularly when working with limited cell numbers from precious primary samples like human UCB [16].

From Cells to Insights: A Step-by-Step Pseudobulk Analysis Pipeline

Cell sorting is a foundational technique in stem cell research, enabling the isolation of pure populations of hematopoietic stem cells (HSCs) and mesenchymal stem cells (MSCs) for downstream applications ranging from transcriptomic analysis to therapeutic implantation. The selection of an appropriate sorting strategy directly impacts experimental outcomes, including cell yield, purity, viability, and the reliability of omics data. This guide provides an objective comparison of the primary cell sorting methodologies—magnetic-activated cell sorting (MACS) and fluorescence-activated cell sorting (FACS)—within the context of modern stem cell research, with particular emphasis on how sorting choices influence subsequent pseudobulk transcriptome analysis.

The critical challenge in stem cell isolation lies in the inherent rarity of these populations; HSCs constitute less than 0.01% of bone marrow cells, necessitating robust pre-enrichment or high-resolution sorting strategies [23] [24]. Furthermore, emerging evidence indicates that the sorting method itself can significantly alter the molecular profile of cells, a crucial consideration for functional studies and therapeutic development [23] [25]. This guide synthesizes experimental data to help researchers navigate the trade-offs between throughput, purity, yield, and molecular fidelity when designing stem cell sorting protocols.

Technology Comparison: MACS vs. FACS

Performance Metrics and Experimental Data

The choice between MACS and FACS involves balancing multiple performance parameters. The following table summarizes quantitative data from direct comparison studies, providing an objective basis for selection.

Table 1: Quantitative Performance Comparison of MACS and FACS

| Performance Metric | MACS | FACS | Experimental Context |

|---|---|---|---|

| Cell Loss | 7-9% [26] | ~70% [26] | Separation of ALPL+ stromal vascular fraction (SVF) cells |

| Processing Speed (Single Sample) | 4-6x faster for low proportion targets [26] | Slower | ALPL+ SVF cells at low starting proportions (<25%) |

| Purity | Requires optimization for accuracy at high target proportions [26] | High accuracy across all proportions [26] | Defined mixtures of ALPL+ and ALPL- cells |

| Throughput | High; processes multiple samples in parallel [26] | Lower; processes samples sequentially [26] | Multiple samples of SVF cells |

| Post-Sort Viability | >83% [26] | >83% [26] | Human SVF cells and A375 melanoma cells |

| Therapeutic Potential | N/A | Enables selection based on extracellular vesicle (EV) secretion [25] | MSC selection for myocardial infarction treatment |

Technical Workflow Comparison

The fundamental difference between MACS and FACS lies in their separation mechanisms, which dictates their respective workflows, advantages, and limitations.

Table 2: Technical Foundations of MACS and FACS

| Feature | Magnetic-Activated Cell Sorting (MACS) | Fluorescence-Activated Cell Sorting (FACS) |

|---|---|---|

| Separation Principle | Magnetic labeling and column-based separation in a magnetic field [27] [28] | Electrostatic deflection of fluorescently-labeled droplets [29] |

| Labeling | Antibody-conjugated magnetic beads (direct or indirect) [27] | Antibody-conjugated fluorochromes [24] |

| Key Output | Enriched cell population based on a single marker (typically) | Multiparametric, high-purity sort based on multiple markers simultaneously [24] |

| Throughput | Very high; can process >10⁶ cells/second [28] | Lower; limited by droplet generation frequency and event rate [29] |

| Critical Settings | Antibody/bead concentration, cell concentration, flow rate [26] [28] | Drop-charge delay, nozzle size, laser alignment, sort mode [29] |

| Instrument Complexity | Relatively low; benchtop equipment | High; requires specialized, expensive machinery and expert operators [26] |

Figure 1: Comparative Workflows of MACS and FACS. MACS relies on magnetic separation in a column, while FACS utilizes fluorescence detection and electrostatic droplet deflection for higher-resolution sorting.

Experimental Protocols for Stem Cell Isolation

Mouse Hematopoietic Stem Cell Isolation via FACS

The isolation of pure HSCs is critical for studying hematopoiesis. The following protocol is standardized for adult C57Bl/6 mouse bone marrow.

Table 3: Key Research Reagent Solutions for Mouse HSC Sorting

| Reagent | Function | Example Clone/Catalog |

|---|---|---|

| Lineage Cocktail (FITC) | Labels mature hematopoietic cells for exclusion | CD3 (145-2C11), CD11b (M1/70), CD45R (RA3-6B2), Gr-1 (RB6-8C5), Ter119 (Ter119) [24] |

| Anti-c-Kit (PE) | Identifies progenitor cells | 2B8 [24] |

| Anti-Sca-1 (APC) | Identifies stem and progenitor cells | E13-161.7 [24] |

| Anti-CD150 (PE-Cy7) | Enriches for LT-HSCs (SLAM code) | TC15-12F12.2 [24] |

| Anti-CD48 (APC) | Enriches for LT-HSCs (SLAM code) | HM48-1 [24] |

| Anti-EPCR (PE) | Further enriches for HSCs (ESLAM phenotype) | RMEPCR1560 [24] |

| Fc Block (anti-CD16/32) | Prevents non-specific antibody binding | - |

Step-by-Step Protocol:

- Bone Marrow Harvesting: Isolate femora and tibiae from adult C57Bl/6 mice. Flush the marrow cavities using a syringe with a 21-26G needle filled with 1-3 mL of PBS (without Mg²⁺ or Ca²⁺) supplemented with 5 mM EDTA and 1% fetal calf serum [24].

- Single-Cell Suspension: Gently triturate the flushed marrow to create a single-cell suspension. Pass the suspension through a 40-70 μm cell strainer to remove aggregates and debris. Perform red blood cell lysis if necessary.

- Cell Staining: Resuspend up to 10⁸ cells in a staining buffer (PBS with 0.5-2% BSA and 2 mM EDTA). Incubate with an Fc block for 10-15 minutes on ice to reduce non-specific binding. Add the pre-titrated antibody cocktail (see Tables 1-3 in [24] for panel configurations) and incubate for 20-30 minutes on ice, protected from light.

- Viability Staining: Include a viability dye (e.g., DAPI or propidium iodide) to exclude dead cells during sorting.

- Flow Cytometry Setup and Sorting: Resuspend stained cells in sorting buffer and filter through a 35-40 μm cell strainer immediately before sorting. Use a high-purity sort mode (e.g., "Single-Cell" or "Purify" mode) and a nozzle size of 70-100 μm. Confirm the instrument's drop-delay calibration using reference beads for optimal recovery (Rmax) [29].

- Gating Strategy:

- Gate 1 (Live Cells): Exclude debris based on FSC-A/SSC-A and select viable, nucleated cells (DAPI-negative).

- Gate 2 (Singlets): Select single cells using FSC-H vs. FSC-A to exclude doublets.

- Gate 3 (Lineage Negative): Select the Lin- (FITC-negative) population.

- Gate 4 (LSK Population): From Lin- cells, select the Sca-1⁺c-Kit⁺ population.

- Gate 5 (HSC Enrichment): For LT-HSCs, further select CD150⁺CD48⁻ cells from the LSK population (LSK/SLAM phenotype) [24].
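The gating hierarchy above is a chain of nested filters, each applied to the output of the previous one. The sketch below illustrates that logic in Python on six made-up events. All field names, intensity values, and cutoffs are hypothetical, since real gates are drawn interactively on the cytometer for each experiment.

```python
# Each dict is one recorded event; values are arbitrary illustrative intensities.
events = [
    {"id": 1, "dapi": 0.1, "fsc_a": 100.0, "fsc_h": 98.0, "lin": 0.2,
     "sca1": 5.0, "ckit": 6.0, "cd150": 4.0, "cd48": 0.1},   # passes every gate
    {"id": 2, "dapi": 3.0, "fsc_a": 90.0, "fsc_h": 88.0, "lin": 0.1,
     "sca1": 4.0, "ckit": 5.0, "cd150": 3.0, "cd48": 0.2},   # dead (DAPI-positive)
    {"id": 3, "dapi": 0.2, "fsc_a": 200.0, "fsc_h": 120.0, "lin": 0.1,
     "sca1": 4.0, "ckit": 5.0, "cd150": 3.0, "cd48": 0.2},   # doublet
    {"id": 4, "dapi": 0.1, "fsc_a": 100.0, "fsc_h": 99.0, "lin": 4.0,
     "sca1": 0.2, "ckit": 0.3, "cd150": 0.1, "cd48": 2.0},   # lineage-positive
    {"id": 5, "dapi": 0.1, "fsc_a": 95.0, "fsc_h": 94.0, "lin": 0.1,
     "sca1": 0.3, "ckit": 5.0, "cd150": 0.2, "cd48": 0.3},   # Sca-1-negative
    {"id": 6, "dapi": 0.3, "fsc_a": 105.0, "fsc_h": 103.0, "lin": 0.3,
     "sca1": 4.0, "ckit": 5.0, "cd150": 0.2, "cd48": 3.0},   # LSK but CD48-positive
]

POSITIVE = 1.0  # hypothetical cutoff separating negative from positive signal

# Gates in the order given in the protocol (debris exclusion is omitted for brevity).
gates = [
    ("Live", lambda e: e["dapi"] < POSITIVE),
    ("Singlets", lambda e: 0.9 <= e["fsc_h"] / e["fsc_a"] <= 1.1),
    ("Lin-", lambda e: e["lin"] < POSITIVE),
    ("LSK", lambda e: e["sca1"] > POSITIVE and e["ckit"] > POSITIVE),
    ("CD150+CD48-", lambda e: e["cd150"] > POSITIVE and e["cd48"] < POSITIVE),
]

population = events
counts_per_gate = {}
for name, gate in gates:
    # Each gate only sees events that survived all previous gates.
    population = [e for e in population if gate(e)]
    counts_per_gate[name] = len(population)
    print(f"{name}: {len(population)} events")
```

Tracking the event count after each gate, as done here, is also a convenient way to record per-replicate yield before downstream aggregation.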

Mesenchymal Stem Cell Isolation via MACS

This protocol adapts CD90-based MACS for isolating rabbit synovial fluid MSCs (rbSF-MSCs) as a translational model [27].

Step-by-Step Protocol:

- Cell Source and Culture: Collect synovial fluid from the knee joint cavity of a New Zealand white rabbit. Filter the fluid through a 40 μm nylon cell strainer to remove debris. Culture the filtered cells in complete medium (DMEM with 10% FBS and 1% Penicillin-Streptomycin) at 37°C and 5% CO₂. Replenish medium after 48 hours to remove non-adherent cells [27].

- Cell Preparation: Once cultures reach ~80% confluency, detach cells using 0.25% Trypsin-EDTA. Inactivate the trypsin with culture medium, pass the cell suspension through a 40 μm strainer, and centrifuge at 600 × g for 10 minutes. Resuspend the cell pellet in MACS running buffer (PBS, pH 7.2, 0.5% BSA, 2 mM EDTA) and perform a cell count [27].

- Magnetic Labeling: For 10⁷ total cells, centrifuge the suspension at 300 × g for 10 minutes. Completely aspirate the supernatant. Resuspend the pellet in 80 μL of buffer. Add 20 μL of anti-CD90 microbeads. Mix well and incubate for 15 minutes in the dark at 4°C. Add 1-2 mL of buffer to wash, then centrifuge and aspirate the supernatant [27].

- Magnetic Separation: Place an MS column in the magnetic field of the separator. Rinse the column with 500 μL of buffer. Apply the cell suspension to the column. Collect the flow-through containing unlabeled (CD90-negative) cells. Wash the column 2-3 times with 500 μL of buffer, collecting the wash each time. Remove the column from the magnet, place it over a collection tube, add 1 mL of buffer, and immediately flush out the magnetically labeled (CD90-positive) cells using the plunger [27].

- Post-Sort Culture and Validation: Culture the sorted CD90⁺ cells. Validate the sorted population by flow cytometry for positive expression of MSC markers (CD44, CD105) and negative expression of hematopoietic markers (CD45, CD34). Confirm trilineage differentiation potential (osteogenic, adipogenic, chondrogenic) in vitro [27].

Connecting Sorting Strategies to Pseudobulk Transcriptomics

The choice of cell sorting technology has profound implications for downstream transcriptomic analysis, particularly the emerging gold standard of pseudobulk analysis [9].

The Critical Role of Biological Replicates in Pseudobulk Analysis

Pseudobulk methods aggregate gene expression counts from all cells within individual biological replicates before performing differential expression (DE) analysis. This approach has been demonstrated to significantly outperform methods that analyze individual cells in isolation, as it more accurately recapitulates bulk RNA-seq results—the established ground truth [9]. The superiority of pseudobulk methods stems from their ability to properly account for the inherent variation between biological replicates. Methods that ignore this variation by pooling all cells are biased and prone to false discoveries, often identifying hundreds of differentially expressed genes even in the absence of true biological differences [9].
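The false-discovery problem described above can be reproduced in a small simulation. The sketch below is an illustration under simplifying assumptions, not the benchmark from [9]: expression is Gaussian rather than count-based, there is no true condition effect, each donor carries its own random offset, and a pooled-variance t statistic stands in for a full differential expression model.

```python
import numpy as np

rng = np.random.default_rng(1)
n_genes, n_donors, n_cells = 1000, 3, 200   # donors per condition, cells per donor

# Null scenario: no condition effect, but every donor has its own random offset.
donor_offset = rng.normal(0.0, 1.0, size=(n_genes, 2, n_donors, 1))
cell_noise = rng.normal(0.0, 1.0, size=(n_genes, 2, n_donors, n_cells))
expr = donor_offset + cell_noise            # genes x condition x donor x cell


def two_sample_t(a, b):
    """Pooled-variance two-sample t statistic computed along the last axis."""
    na, nb = a.shape[-1], b.shape[-1]
    pooled_var = ((na - 1) * a.var(axis=-1, ddof=1)
                  + (nb - 1) * b.var(axis=-1, ddof=1)) / (na + nb - 2)
    return (a.mean(axis=-1) - b.mean(axis=-1)) / np.sqrt(pooled_var * (1 / na + 1 / nb))


# (a) Pseudoreplication: all 600 cells per condition treated as independent.
cells_a = expr[:, 0].reshape(n_genes, -1)
cells_b = expr[:, 1].reshape(n_genes, -1)
t_cells = two_sample_t(cells_a, cells_b)
fpr_cells = np.mean(np.abs(t_cells) > 1.96)        # approximate 5% cutoff, df = 1198

# (b) Pseudobulk: one mean per donor, so the test compares 3 vs 3 donors.
donor_means = expr.mean(axis=-1)                   # genes x condition x donor
t_pb = two_sample_t(donor_means[:, 0], donor_means[:, 1])
fpr_pseudobulk = np.mean(np.abs(t_pb) > 2.776)     # 5% cutoff for df = 4

print(f"False positive rate, cells as replicates: {fpr_cells:.2f}")
print(f"False positive rate, pseudobulk:          {fpr_pseudobulk:.2f}")
```

In this setup the cell-level test rejects the null for most genes even though no gene truly differs, whereas the donor-level test stays near the nominal 5% rate.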

How Cell Sorting Influences Transcriptomic Fidelity

The sorting method can introduce technical artifacts that confound pseudobulk analysis:

- MACS and Replicate Integrity: MACS is highly efficient for pre-enriching target populations prior to FACS. For example, pre-enrichment of mouse HSCs can increase stem cell frequency more than 30-fold, drastically reducing sort time and potential cell stress in subsequent FACS steps [23] [30]. This is crucial for preserving the biological integrity of each replicate. However, different pre-enrichment strategies (e.g., lineage depletion vs. c-Kit selection) vary in speed, yield, and degree of enrichment, and this choice can significantly impact the number and levels of metabolites detected in HSCs [23] [30]. While not directly measured for transcriptomics, this indicates a clear effect on molecular profiling.

- FACS Settings and Data Quality: The accuracy of FACS is paramount. A miscalibrated drop-delay does not primarily affect purity but drastically reduces recovery, defined as the number of target particles sorted relative to the number originally intended to be sorted [29]. Low recovery can lead to under-sampling of certain biological replicates, reducing statistical power and potentially introducing bias in pseudobulk comparisons. Furthermore, FACS itself can induce stress responses that alter gene expression. Therefore, sorting protocols must be optimized for speed and gentleness, especially for sensitive applications like metabolomics [23] and single-cell RNA-seq.

Figure 2: The Impact of Cell Sorting on Pseudobulk Analysis. The quality of the initial cell sort directly influences the validity of the downstream pseudobulk transcriptomic analysis. High purity, recovery, and careful handling of replicates are prerequisites for robust differential expression (DE) results.

Advanced and Emerging Sorting Technologies

Nanovial Technology for Functional Sorting

A major limitation of conventional sorting is its reliance on surface markers, which may not correlate with a cell's functional or therapeutic state. A novel nanovial technology addresses this by enabling the sorting of cells based on their secretory function, specifically the secretion of extracellular vesicles (EVs) [25].

In this platform, single cells are loaded into cavity-containing hydrogel particles (nanovials) that are functionalized with antibodies to capture secreted EVs on their surface. The captured EVs are then fluorescently labeled, and the entire nanovial (with its living cell) is sorted via FACS based on the fluorescence intensity, which corresponds to the level of EV secretion [25]. This method has been used to isolate MSCs with high EV secretion, which demonstrated distinct transcriptional profiles and superior therapeutic efficacy in a mouse model of myocardial infarction compared to low-secreting MSCs [25]. This represents a paradigm shift from phenotypic to functional sorting for cell therapy optimization.

Single-Cell vs. Single-Nucleus RNA-Seq Considerations

For tissues where cell dissociation is challenging (e.g., heart, brain), single-nucleus RNA-sequencing (DroNc-seq) provides an alternative to single-cell RNA-sequencing (Drop-seq) [31]. While Drop-seq profiles total cellular RNA, DroNc-seq profiles nuclear RNA. Key differences include:

- Gene Detection: Drop-seq typically detects more genes and transcripts per cell [31].

- Read Composition: DroNc-seq captures a much higher fraction of intronic reads (up to 50%), reflecting unprocessed nuclear transcripts. Incorporating these intronic reads is essential for improving gene detection rates in DroNc-seq [31].

- Gene Bias: Systematic differences exist, with DroNc-seq detecting more long non-coding RNAs and Drop-seq detecting more mitochondrial and ribosomal transcripts [31].

Despite these differences, both techniques can effectively identify cell types and reconstruct differentiation trajectories when analyzed with appropriate bioinformatic pipelines, including pseudobulk methods [31].

In single-cell transcriptomic studies of stem cell populations, pseudobulk analysis has emerged as a powerful statistical approach for comparing transcriptomes across conditions, donors, or time points. This method involves aggregating single-cell data from groups of cells—typically from the same cell type, sample, or experimental condition—to create composite "pseudobulk" profiles that resemble traditional bulk RNA-seq data. The pseudobulk approach effectively mitigates pseudoreplication bias by accounting for the non-independence of cells originating from the same individual, thereby controlling false positive rates in differential expression analysis [12] [32]. For stem cell researchers investigating population-level responses to differentiation cues, therapeutic compounds, or disease states, pseudobulk profiling provides a robust framework for identifying consistent transcriptional programs while accommodating the inherent technical and biological variability of single-cell data.

The fundamental strength of pseudobulk analysis lies in its compatibility with established bulk RNA-seq tools like DESeq2 and edgeR, which have well-validated statistical properties for detecting differentially expressed genes [33] [32]. When studying stem cell populations, this approach enables researchers to leverage sophisticated experimental designs—including paired samples, complex time courses, and multi-factorial perturbations—while maintaining proper statistical control over type I error rates. As the scale of single-cell studies continues to expand, particularly in clinical contexts involving multiple donors, pseudobulk methods provide a scalable solution for identifying reproducible transcriptional signatures that distinguish stem cell states, lineages, and response patterns.

Performance Comparison of Pseudobulk Methodologies

Benchmarking Against Single-Cell Specific Methods

Comprehensive benchmarking studies have demonstrated that pseudobulk approaches consistently outperform methods that treat individual cells as independent observations. When evaluated using balanced performance metrics like the Matthews Correlation Coefficient (MCC), pseudobulk methods achieve superior classification accuracy for distinguishing differentially expressed from non-differentially expressed genes [32]. This advantage is particularly pronounced as the number of cells per individual increases, where pseudoreplication methods show increasingly poor performance due to overestimation of statistical power [32].
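For reference, the MCC is computed from the four cells of a confusion matrix as (TP·TN − FP·FN) / √((TP+FP)(TP+FN)(TN+FP)(TN+FN)). The short sketch below shows the calculation in Python; the confusion counts are invented for illustration and do not come from the cited benchmark.

```python
import math


def matthews_corrcoef(tp, fp, tn, fn):
    """Matthews Correlation Coefficient from confusion-matrix counts."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    # By convention, return 0 when any marginal total is zero.
    return 0.0 if denom == 0 else (tp * tn - fp * fn) / denom


# Hypothetical method A: few false positives among 1,000 tested genes.
mcc_a = matthews_corrcoef(tp=80, fp=20, tn=880, fn=20)
# Hypothetical method B: slightly more true positives but many false positives.
mcc_b = matthews_corrcoef(tp=95, fp=400, tn=500, fn=5)

print(f"Method A MCC = {mcc_a:.2f}")
print(f"Method B MCC = {mcc_b:.2f}")
```

Because MCC uses all four counts, a method that gains sensitivity only by calling many false positives scores lower, which is why it is a balanced metric for comparing differential expression methods.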

Table 1: Performance Comparison of Differential Expression Methods

| Method Type | Specific Method | Type I Error Control | Statistical Power | MCC Score | Recommended Use Case |

|---|---|---|---|---|---|

| Pseudobulk | Pseudobulk-Mean | Conservative | High | 0.81-0.89 | Balanced cell numbers across samples |

| Pseudobulk | Pseudobulk-Sum (with normalization) | Conservative | High | 0.79-0.87 | Large sample sizes with normalization |

| Mixed Models | Two-part hurdle RE | Appropriate | Moderate | 0.45-0.62 | Complex hypothesis testing |

| Mixed Models | GLMM Tweedie | Appropriate | Low-Moderate | 0.35-0.55 | Small sample sizes |

| Pseudoreplication | Modified t-test | Inflated | High (false positives) | 0.20-0.45 | Not recommended |

| Pseudoreplication | Tobit models | Inflated | Moderate | 0.30-0.50 | Not recommended |

A critical advantage of pseudobulk methods is their robust performance across balanced and imbalanced experimental designs. While mixed models theoretically offer slight advantages with severely unbalanced cell numbers per individual, pseudobulk approaches with mean aggregation demonstrate comparable or superior performance in practical applications, even with imbalanced cell counts [12] [32]. This resilience makes pseudobulk methods particularly valuable for stem cell research, where cell numbers often vary substantially across experimental conditions due to differences in proliferation, survival, or differentiation efficiency.

Performance in Reproducibility and Meta-Analysis

The reproducibility of differential expression findings across independent studies represents a significant challenge in single-cell transcriptomics. Pseudobulk methods demonstrate superior performance in meta-analysis contexts, particularly for complex systems like neurodegenerative diseases where individual studies often yield inconsistent results [33]. When applied to stem cell datasets, this reproducible performance is crucial for distinguishing biologically meaningful transcriptional programs from study-specific artifacts.

Table 2: Reproducibility of Differential Expression Findings Across Studies

| Disease Context | Number of Studies | Reproducibility with Standard Methods | Reproducibility with Pseudobulk | AUC for Cross-Dataset Prediction |

|---|---|---|---|---|

| Alzheimer's Disease | 17 | <15% genes reproducible | 68% (with meta-analysis) | 0.68 → 0.89 |

| Parkinson's Disease | 6 | ~40% genes reproducible | 85% (with meta-analysis) | 0.77 → 0.92 |

| COVID-19 | 16 | ~60% genes reproducible | 90% (with meta-analysis) | 0.75 → 0.94 |

| Huntington's Disease | 4 | ~35% genes reproducible | 82% (with meta-analysis) | 0.85 → 0.95 |

The SumRank meta-analysis method, which prioritizes genes showing consistent differential expression patterns across multiple datasets, significantly enhances the discovery of reproducible biomarkers when combined with pseudobulk profiling [33]. For stem cell researchers integrating data from multiple experiments, laboratories, or platforms, this approach provides a robust statistical framework for identifying conserved transcriptional networks underlying stem cell identity, lineage commitment, and pathological dysfunction.

Experimental Protocols for Pseudobulk Construction

Standard Workflow for Pseudobulk Profile Generation

The construction of pseudobulk profiles from single-cell libraries follows a systematic workflow that transforms raw single-cell data into aggregated expression matrices suitable for differential expression analysis. The following protocol outlines the key steps for generating pseudobulk data from single-cell RNA-seq counts:

Step 1: Quality Control and Filtering

Begin with standard quality control of single-cell data, removing low-quality cells based on metrics including total counts, detected features, and mitochondrial percentage. Filter out genes expressed in only a minimal number of cells (typically <10 cells) to reduce noise in subsequent aggregation steps.

Step 2: Cell Type Identification and Annotation

Using clustering and marker gene analysis, assign each cell to a specific cell type or state. In stem cell research, this may involve distinguishing between pluripotent states, progenitor populations, and differentiated lineages using established marker genes.

Step 3: Define Aggregation Groups

Determine the appropriate grouping scheme based on the experimental design. Common approaches include:

- Sample-level aggregation: Combine all cells of the same type from each biological sample (e.g., individual donor, culture, or treatment condition)

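- Condition-level aggregation: Pool cells of the same type across replicates within the same experimental condition; because this discards between-replicate variation, it is suited to descriptive summaries rather than to differential expression testing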

- Custom groupings: Define groups based on experimental factors such as time points, differentiation stages, or spatial locations

Step 4: Count Aggregation

For each group, sum the raw UMI counts across all cells within the group for each gene. This creates a pseudobulk expression matrix where rows represent genes and columns represent aggregated groups. The mathematical representation is:

\[ PB_{g,s} = \sum_{c \in C_s} X_{g,c} \]

Where \( PB_{g,s} \) is the pseudobulk count for gene \( g \) in sample \( s \), \( C_s \) is the set of all cells belonging to sample \( s \), and \( X_{g,c} \) is the count of gene \( g \) in cell \( c \).
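The summation above can be sketched in a few lines of plain Python. The function name `pseudobulk_sum`, the dictionary-based data layout, and the donor and cell-type labels are illustrative assumptions; packages such as muscat perform the same aggregation on sparse matrices.

```python
from collections import defaultdict


def pseudobulk_sum(counts, cell_to_group):
    """Sum raw UMI counts per gene within each aggregation group.

    counts: dict mapping cell_id -> dict mapping gene -> raw UMI count.
    cell_to_group: dict mapping cell_id -> group key, e.g. (sample, cell_type).
    Returns a dict mapping group key -> dict mapping gene -> summed count.
    """
    pb = defaultdict(lambda: defaultdict(int))
    for cell_id, gene_counts in counts.items():
        group = cell_to_group[cell_id]
        for gene, x in gene_counts.items():
            pb[group][gene] += x
    return {group: dict(genes) for group, genes in pb.items()}


# Toy usage: three cells from two hypothetical donors, one cell type.
toy_counts = {
    "c1": {"GATA2": 1, "CD34": 0},
    "c2": {"GATA2": 2, "CD34": 3},
    "c3": {"GATA2": 5},
}
toy_groups = {
    "c1": ("donor1", "HSC"),
    "c2": ("donor1", "HSC"),
    "c3": ("donor2", "HSC"),
}
pb_matrix = pseudobulk_sum(toy_counts, toy_groups)
print(pb_matrix)
```

Keying the groups by a (sample, cell type) tuple keeps each biological replicate as its own column, which is what later sample-level testing relies on.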

Step 5: Normalization. Apply standard bulk RNA-seq normalization methods to the pseudobulk count matrix. Options include:

- DESeq2's median of ratios method for subsequent analysis with DESeq2

- Trimmed Mean of M-values (TMM) for analysis with edgeR or limma

- Counts Per Million (CPM) with appropriate normalization factors for general use
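To make two of these options concrete, the following sketch computes median-of-ratios size factors and plain CPM values in standard-library Python. It is a simplified illustration of the idea behind DESeq2's size factors, not a reimplementation of the package; the function names and toy counts are assumptions, and TMM is not shown.

```python
import math
from statistics import median


def median_of_ratios_size_factors(pb):
    """pb: dict mapping sample -> dict mapping gene -> pseudobulk count.

    For each gene with nonzero counts in every sample, take the geometric
    mean across samples; a sample's size factor is the median ratio of its
    counts to those geometric means. Raises statistics.StatisticsError if
    no gene is nonzero in all samples.
    """
    samples = list(pb)
    shared_genes = set.intersection(*(set(pb[s]) for s in samples))
    log_geo_mean = {}
    for gene in shared_genes:
        values = [pb[s][gene] for s in samples]
        if all(v > 0 for v in values):
            log_geo_mean[gene] = sum(math.log(v) for v in values) / len(values)
    return {
        s: math.exp(median(math.log(pb[s][g]) - lg for g, lg in log_geo_mean.items()))
        for s in samples
    }


def cpm(sample_counts):
    """Counts per million for one sample (no additional normalization factor)."""
    total = sum(sample_counts.values())
    return {gene: 1e6 * v / total for gene, v in sample_counts.items()}


# Toy usage: the second sample has exactly twice the depth of the first.
toy_pb = {
    "s1": {"A": 10, "B": 20, "C": 30},
    "s2": {"A": 20, "B": 40, "C": 60},
}
size_factors = median_of_ratios_size_factors(toy_pb)
print(size_factors)
print(cpm(toy_pb["s1"]))
```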

Step 6: Differential Expression Analysis. Use established bulk RNA-seq tools such as DESeq2, edgeR, or limma-voom to identify differentially expressed genes between conditions while accounting for biological replication at the appropriate level.

Advanced Applications in Complex Experimental Designs

For sophisticated stem cell studies involving time-course experiments or multi-factor designs, pseudobulk analysis can be extended to accommodate these complexities:

Longitudinal Analysis of Differentiation Trajectories: When studying stem cell differentiation over time, construct pseudobulk profiles at each time point for each cell type or transitional state. These can be analyzed using appropriate time-series methods such as spline models or likelihood ratio tests within the DESeq2 framework to identify genes with dynamic expression patterns.

Multi-Factor Experimental Designs: For studies examining multiple experimental factors (e.g., treatment, genotype, and differentiation stage), construct pseudobulk profiles for each unique combination of factors. This enables the use of factorial designs to test for main effects and interactions using established bulk RNA-seq methodologies.
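A factorial design of this kind can be sketched as a treatment-coded design matrix with one row per pseudobulk profile. The factor names, their levels, and the replicate labels below are hypothetical; in R, a formula such as `~ treatment * genotype` would typically build an equivalent matrix.

```python
from itertools import product

# Hypothetical factor levels; the first level of each factor is the reference.
TREATMENTS = ["control", "treated"]
GENOTYPES = ["wildtype", "mutant"]


def design_row(treatment, genotype):
    """Treatment-coded row: [intercept, treatment, genotype, interaction]."""
    t = TREATMENTS.index(treatment)
    g = GENOTYPES.index(genotype)
    return [1, t, g, t * g]


# One pseudobulk profile per (replicate, treatment, genotype) combination.
samples = [
    (f"rep{r}", t, g)
    for r in (1, 2, 3)
    for t, g in product(TREATMENTS, GENOTYPES)
]
design = {s: design_row(s[1], s[2]) for s in samples}

for sample, row in design.items():
    print(sample, row)
```

The interaction column is nonzero only for treated mutant profiles, so its coefficient captures how the treatment effect differs between genotypes.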

Integration with Other Data Modalities: Pseudobulk profiles can facilitate integrated analysis of single-cell transcriptomic data with other data types. For example, aggregate accessibility scores from single-cell ATAC-seq can be correlated with pseudobulk expression profiles to identify putative regulatory relationships, or protein abundance measurements can be integrated with transcriptomic pseudobulk data for multi-omics analysis.

Table 3: Essential Research Reagents and Computational Tools for Pseudobulk Analysis

| Category | Item | Specification/Function | Application in Stem Cell Research |
|---|---|---|---|
| Wet Lab Reagents | 10X Chromium Single Cell Kit | 3' or 5' gene expression with UMIs | High-throughput single-cell transcriptomics of stem cell populations |
| Wet Lab Reagents | Enzymatic Dissociation Reagents | Tissue/cell dissociation with viability preservation | Preparation of single-cell suspensions from stem cell cultures or tissues |
| Wet Lab Reagents | Cell Surface Marker Antibodies | FACS sorting for specific stem cell populations | Isolation of defined stem cell subsets before scRNA-seq |
| Computational Tools | Seurat R Package | Single-cell data preprocessing and clustering | Cell type identification and quality control |
| Computational Tools | DESeq2 R Package | Differential expression analysis of pseudobulk counts | Statistical testing for transcriptional changes |
| Computational Tools | Scater/SingleCellExperiment | Data structures for single-cell data | Container for single-cell counts and metadata |
| Computational Tools | muscat R Package | Specialized methods for multi-sample scRNA-seq | Streamlined pseudobulk differential expression |
| Computational Tools | Isosceles | Long-read single-cell isoform quantification | Alternative splicing analysis in stem cell populations [34] |
| Reference Resources | Stem Cell Atlas References | Curated marker genes for stem cell states | Annotation of stem cell populations and transitional states |
| Reference Resources | Gene Set Collections | Pluripotency, differentiation, and lineage programs | Functional interpretation of differential expression results |

Critical Considerations for Experimental Design

Addressing Technical and Biological Variability

Successful pseudobulk analysis requires careful consideration of variability at multiple levels. Biological replication remains essential, as pseudobulk profiles derived from multiple independent samples (donors, cultures, or experiments) enable statistically robust comparisons between conditions. Technical variability introduced during sample processing can be accounted for through batch correction methods or inclusion of batch terms in statistical models [13].

The selection of aggregation units should align with the experimental question. For studies focused on cell-type-specific responses, aggregation should be performed within each cell type and sample. When studying population-level behaviors or when cell numbers are limited, aggregation across related cell types or states may be appropriate, though this may obscure subtle cell-type-specific effects.

Mitigating Analysis Pitfalls

Several potential pitfalls require attention in pseudobulk analysis:

Library Size Normalization: Unlike bulk RNA-seq, single-cell data with UMI counts provides absolute molecular counts. Standard size-factor-based normalization methods that assume most genes are unchanged across conditions may be inappropriate for stem cell studies where global transcriptional changes often occur during state transitions [13]. Consider alternative approaches such as spike-in normalization or methods that preserve absolute abundance information when comparing across conditions with potentially different total transcriptional output.
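A small worked example shows why this matters. In the sketch below, a hypothetical activated state has exactly twice the transcript counts of a quiescent state for every gene, yet library-size scaling (CPM here) returns identical profiles, so the global shift becomes invisible. The gene names and counts are invented for illustration only.

```python
def cpm(sample_counts):
    """Counts per million: scales each profile to a common library size."""
    total = sum(sample_counts.values())
    return {gene: 1e6 * v / total for gene, v in sample_counts.items()}


# Hypothetical per-cell-averaged UMI counts for two stem cell states.
quiescent = {"geneA": 100, "geneB": 300, "geneC": 600}
activated = {gene: 2 * v for gene, v in quiescent.items()}  # global doubling

cpm_quiescent = cpm(quiescent)
cpm_activated = cpm(activated)

# Largest difference between the two normalized profiles.
max_abs_diff = max(abs(cpm_quiescent[g] - cpm_activated[g]) for g in quiescent)
print(max_abs_diff)
```

After scaling, no gene appears changed even though every gene doubled in absolute terms, which is the situation that spike-in or abundance-preserving approaches are meant to address.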

Handling of Zero-Inflation: Single-cell data typically contains a high proportion of zeros, which can arise from biological absence of expression or technical dropout. Pseudobulk aggregation naturally mitigates this issue by summing across cells, but careful filtering of lowly-expressed genes prior to aggregation is recommended to reduce noise [13].

Donor Effects and Confounding: In studies involving multiple donors or biological replicates, accounting for donor effects is critical for appropriate statistical inference. Pseudobulk methods naturally accommodate this through the use of sample-level replication in differential expression models, unlike methods that treat cells as independent observations [33].

Pseudobulk analysis represents a robust, statistically sound approach for comparative transcriptomic analysis in stem cell research. By aggregating single-cell data into composite profiles that respect biological replication, this methodology enables researchers to leverage well-validated bulk RNA-seq tools while capturing the cellular heterogeneity inherent to stem cell systems. The strong performance of pseudobulk methods across benchmarking studies, particularly in reproducibility and control of false positive rates, makes them especially valuable for identifying conserved transcriptional programs underlying stem cell identity, plasticity, and differentiation.

As single-cell technologies continue to evolve, pseudobulk approaches are adapting to accommodate new data types and experimental designs. The integration of long-read sequencing for isoform-resolution analysis [34], multi-modal data integration [19], and spatial transcriptomics represents promising frontiers for pseudobulk methodology. For stem cell researchers, these advances will enable increasingly precise dissection of the molecular networks that govern stem cell behavior in development, regeneration, and disease.

Differential expression (DE) analysis is a cornerstone of transcriptomics, enabling researchers to identify genes whose expression changes significantly across different biological conditions. In stem cell research, particularly when comparing population transcriptomes during differentiation, selecting an appropriate statistical method is crucial for generating accurate, biologically meaningful results. The single-cell RNA sequencing (scRNA-seq) revolution has introduced new analytical challenges, prompting the development of specialized DE methods. Among these, pseudobulk approaches have emerged as superior for population-level studies due to their ability to properly account for biological variability between replicates [9] [11]. This guide provides an objective comparison of current DE methodologies, with particular emphasis on their application to stem cell population studies.

The Critical Importance of Accounting for Biological Replicates

A fundamental challenge in DE analysis, particularly in scRNA-seq studies, stems from the hierarchical structure of biological data. Cells from the same biological replicate (donor) exhibit correlated expression patterns due to shared genetic background and experimental conditions. Ignoring this replicate-level variation inflates false discovery rates by misattributing natural between-replicate variability to experimental effects [9] [13].

The False Discovery Crisis in Single-Cell Methods

Comprehensive benchmarking using gold-standard datasets has revealed that methods analyzing individual cells as independent observations produce dramatically elevated false positives. In one striking demonstration, these methods identified hundreds of differentially expressed genes—including abundant spike-in RNAs added at equal concentrations—when no biological differences actually existed [9]. This systematic bias preferentially affects highly expressed genes, potentially misleading biological interpretations.
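The mechanism behind these false positives can be reproduced with a toy simulation. The sketch below generates a single gene with no true difference between two conditions, adds a donor-level random effect, and compares a cell-level test with a donor-level test. It uses Gaussian values rather than counts, and the donor numbers, cell numbers, and variances are arbitrary assumptions, so it illustrates the principle rather than replicating any cited benchmark.

```python
import random
from statistics import NormalDist, mean, variance


def simulate_null_gene(rng, n_donors=3, n_cells=50, donor_sd=1.0, cell_sd=1.0):
    """Two conditions with no true difference; cells share a donor effect."""
    conditions = []
    for _ in range(2):
        donors = []
        for _ in range(n_donors):
            donor_effect = rng.gauss(0.0, donor_sd)
            donors.append([rng.gauss(donor_effect, cell_sd) for _ in range(n_cells)])
        conditions.append(donors)
    return conditions


def welch_stat(a, b):
    """Difference in means divided by its estimated standard error."""
    se = (variance(a) / len(a) + variance(b) / len(b)) ** 0.5
    return (mean(a) - mean(b)) / se


def false_positive_rates(n_sims=300, seed=0):
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(0.975)  # cell level: normal approximation
    t_crit = 2.776  # two-sided 5% critical value, Student's t with 4 df
    cell_hits = donor_hits = 0
    for _ in range(n_sims):
        cond1, cond2 = simulate_null_gene(rng)
        # Cell-level test: every cell treated as an independent observation.
        cells1 = [x for donor in cond1 for x in donor]
        cells2 = [x for donor in cond2 for x in donor]
        if abs(welch_stat(cells1, cells2)) > z_crit:
            cell_hits += 1
        # Donor-level test: one aggregated value per donor (3 versus 3).
        means1 = [mean(donor) for donor in cond1]
        means2 = [mean(donor) for donor in cond2]
        if abs(welch_stat(means1, means2)) > t_crit:
            donor_hits += 1
    return cell_hits / n_sims, donor_hits / n_sims


cell_fpr, donor_fpr = false_positive_rates()
print(f"cell-level FPR: {cell_fpr:.2f}, donor-level FPR: {donor_fpr:.2f}")
```

Under these assumptions the cell-level test should reject far more often than the nominal 5%, while the donor-level test should stay close to it, because only the latter uses the number of donors as its effective sample size.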

Comparative Performance of Differential Expression Methods

| Method | Type | Key Strength | Key Limitation | Stem Cell Application |
|---|---|---|---|---|
| Pseudobulk (edgeR, DESeq2, limma) | Bulk adaptation | Excellent control of false discoveries [9] [11] | Aggregates cellular heterogeneity | Ideal for population-level stem cell comparisons |
| BEANIE | Non-parametric | Superior specificity for gene signatures [35] | Designed for pre-defined signatures | Stem cell pathway enrichment studies |
| DiSC | Single-cell | Fast individual-level analysis [36] | Newer method, less established | Large cohort stem cell studies |
| GLIMES | Single-cell | Handles UMI counts and zero proportions [13] | Complex implementation | Stem cell datasets with technical zeros |
| Wilcoxon Rank-Sum | Non-parametric | Computational simplicity [37] | Inflated false positives with spatial correlation [37] | Not recommended for structured data |
| QRscore | Non-parametric | Detects both mean and variance shifts [38] | Focuses on distributional changes | Identifying heterogeneous responses in stem cells |

Quantitative Performance Comparison

Table 2 summarizes benchmark results from rigorous methodological comparisons evaluating false discovery control and statistical power.

Table 2: Experimental Performance Benchmarks of DE Methods

| Method | Type I Error Control | Power | Computational Speed | Replicate Handling |
|---|---|---|---|---|
| Pseudobulk (mean) | Excellent (MCC: 0.85-0.95) [11] | High (>0.9 sensitivity) [11] | Fast | Properly accounts for replicates |
| Pseudobulk (sum) | Good (with normalization) [11] | High [11] | Fast | Properly accounts for replicates |
| BEANIE | Superior specificity (0.999) [35] | Perfect at ≥50% perturbation [35] | Moderate | Accounts for patient-specific biology |
| DiSC | Effectively controls FDR [36] | High statistical power [36] | Very fast (~100x faster than alternatives) [36] | Individual-level analysis |
| GLIMM | Good (theoretical) | High (theoretical) | Slow with convergence issues [37] | Accounts for correlations |
| Wilcoxon | Poor (inflated with correlations) [37] | Good | Very fast | Ignores replicate structure |

Experimental Protocols for Method Evaluation

Gold-Standard Benchmarking Framework

Rigorous method evaluation requires experimental designs where ground truth is known. The following protocol has been employed in multiple comprehensive benchmarks:

Dataset Curation: Identify matched bulk and scRNA-seq data from the same purified cell populations, exposed to identical perturbations, and sequenced in the same laboratories [9]

Method Application: Apply multiple DE methods to the scRNA-seq data while using bulk results as biological ground truth

Concordance Assessment: Calculate the area under the concordance curve (AUCC) between bulk and single-cell results [9]
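One way to compute such a concordance score is sketched below: for each k up to a cutoff, count how many genes appear in the top k of both rankings, then divide the summed overlaps by the maximum attainable value. The function name `aucc`, the normalization by k(k+1)/2, and the cutoff are assumptions for illustration; the cited benchmark [9] may define these details differently.

```python
def aucc(bulk_ranked, sc_ranked, k_max):
    """Area under the concordance curve between two ranked gene lists.

    bulk_ranked, sc_ranked: gene identifiers ordered from most to least
    significant. k_max: largest top-k list size to compare (assumed cutoff).
    Returns a value in [0, 1]; identical rankings give 1.0.
    """
    k_max = min(k_max, len(bulk_ranked), len(sc_ranked))
    overlaps = [
        len(set(bulk_ranked[:k]) & set(sc_ranked[:k]))
        for k in range(1, k_max + 1)
    ]
    return sum(overlaps) / (k_max * (k_max + 1) / 2)


# Toy usage with four hypothetical genes.
bulk = ["g1", "g2", "g3", "g4"]
print(aucc(bulk, ["g1", "g2", "g3", "g4"], k_max=4))  # identical ranking
print(aucc(bulk, ["g4", "g3", "g2", "g1"], k_max=4))  # reversed ranking
```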

Bias Evaluation: Test for systematic biases using spike-in RNAs with known concentrations [9]

Functional Validation: Compare Gene Ontology term enrichment between methods [9]

Simulation Study Design

Computational simulations provide complementary evidence by creating datasets with known differentially expressed genes:

Data Generation: Use tools like hierarchicell to simulate single-cell expression data with predefined DE genes [11]

Balanced Metric Selection: Apply Matthews Correlation Coefficient (MCC) which provides a balanced measure of performance considering both type I and type II errors [11]
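For reference, MCC is computed from the four cells of the confusion matrix as (TP·TN − FP·FN) / sqrt((TP+FP)(TP+FN)(TN+FP)(TN+FN)). The sketch below applies it to a set of called DE genes against simulated ground truth; the helper names and the toy gene sets are illustrative assumptions.

```python
import math


def confusion(called, truth, all_genes):
    """Return (tp, fp, tn, fn) for called DE genes versus true DE genes."""
    called, truth, all_genes = set(called), set(truth), set(all_genes)
    tp = len(called & truth)
    fp = len(called - truth)
    fn = len(truth - called)
    tn = len(all_genes - called - truth)
    return tp, fp, tn, fn


def mcc(tp, fp, tn, fn):
    """Matthews Correlation Coefficient; returns 0.0 when undefined."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denom if denom else 0.0


# Toy usage: 10 simulated genes, 4 truly DE, one missed and one false call.
genes = [f"g{i}" for i in range(10)]
true_de = {"g0", "g1", "g2", "g3"}
called_de = {"g0", "g1", "g2", "g9"}
tp, fp, tn, fn = confusion(called_de, true_de, genes)
score = mcc(tp, fp, tn, fn)
print(tp, fp, tn, fn, round(score, 3))
```

Because the numerator penalizes both false positives and false negatives, a method cannot score well simply by calling very few or very many genes.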

Power Analysis: Generate receiver operating characteristic (ROC) curves to compare sensitivity at controlled type I error rates [11]

Imbalanced Condition Testing: Evaluate performance with unequal cell numbers between conditions to mimic real experimental data [11]

Implementation Workflow for Pseudobulk Analysis in Stem Cell Research

The following diagram illustrates the recommended pseudobulk workflow for stem cell transcriptome comparisons:

Protocol Details for Stem Cell Applications

Cell Type Identification: First, assign cells to specific subpopulations (e.g., distinct stem cell states) using clustering tools [36]