Purpose-Built Cell Therapy Software: Mastering Process Control and Data Integrity in 2025

Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive guide to the specialized software platforms essential for modern cell therapy manufacturing. It explores the foundational challenges of manual processes and data fragmentation, details the methodological application of Laboratory Information Management Systems (LIMS) and cell orchestration platforms for workflow automation, and offers strategies for troubleshooting supply chain and scalability issues. The content also delivers a validated, comparative analysis of leading software solutions to inform strategic selection, emphasizing how purpose-built digital tools are critical for ensuring data integrity, regulatory compliance, and the successful scale-up of transformative therapies.

Why Traditional Systems Fail: The Urgent Need for Purpose-Built Cell Therapy Software

The Data Integrity Crisis in Complex Cell Therapy Workflows

Cell therapy manufacturing represents a paradigm shift in medicine, harnessing living cells to fight previously incurable diseases [1]. However, this groundbreaking approach faces a formidable obstacle: a data integrity crisis within its complex, multi-stage workflows. Unlike traditional pharmaceuticals, cell therapies are living products: a temperature shift of just 1–2°C or a slight change in media composition can dramatically compromise cell growth, potency, and safety [2]. The industry's historical reliance on manual, lab-scale methods and paper-based records provides a foundation that cannot support the data-intensive demands of modern therapeutic production. This application note dissects the origins and impacts of this crisis and provides detailed protocols for implementing robust, data-driven solutions that ensure product quality, regulatory compliance, and ultimately, patient safety.

The core of the problem lies in the personalized nature of many therapies. Autologous treatments, which use a patient's own cells, involve highly variable starting materials and individualized batch processing [1] [3]. Unlike traditional one-size-fits-all therapies, which start from precisely controlled materials, these treatments generate vast, heterogeneous datasets that are difficult to standardize and manage [3]. Furthermore, the continued use of paper batch records and disconnected data systems creates unacceptable risks of transcription errors, data loss, and an incomplete product history, making process traceability and investigation of deviations nearly impossible. As the industry scales to serve larger patient populations, overcoming this data integrity crisis is not merely a technical goal but a fundamental prerequisite for delivering safe, effective, and commercially viable treatments.

Quantitative Analysis of Data Pain Points

A systematic analysis of common data pain points across the cell therapy workflow reveals critical vulnerabilities. The following table summarizes the major failure modes, their impact on data integrity, and the consequent risk to the final product.

Table 1: Data Integrity Pain Points in Cell Therapy Manufacturing

| Manufacturing Stage | Common Data Failure Mode | Impact on Data Integrity | Therapeutic Product Risk |

|---|---|---|---|

| Cell Sourcing & Collection [1] | Lack of standardized collection protocols; manual patient/donor scheduling | Introduces variability in starting material quality; potential for sample misidentification | Compromised cell quality and viability; risk of patient misassignment |

| Cell Isolation & Activation [1] | Manual recording of process parameters (e.g., centrifugation speed, MACS/FACS settings) | Transcription errors; loss of granular data on critical process parameters (CPPs) | Batch-to-batch inconsistency in cell purity and yield |

| Cell Expansion & Engineering [1] [2] | Paper-based logs for media feeds, cytokine concentrations, and metabolic readings (e.g., glucose, pH) | Inability to correlate process parameters with critical quality attributes (CQAs) in real-time | Uncontrolled processes leading to subpotent products or undesirable cell phenotypes |

| Final Product Formulation & Cryopreservation [1] | Incomplete documentation of cryoprotectant addition or freezing rate | Breaks in the chain of identity and integrity; incomplete product history | Reduced cell viability and potency upon infusion; product failure |

The financial and temporal costs of these data failures are substantial. A single failed batch can cost over $500,000 [2], a loss compounded by the irreversible time cost for a patient awaiting a personalized treatment. Moreover, data stored on paper is functionally useless for the continuous process monitoring and improvement required by regulators and for operational excellence [2]. This lack of digitized, structured data prevents the application of advanced analytics and machine learning to identify subtle correlations between process parameters and product quality, perpetuating a cycle of process uncertainty and variable outcomes.

A Framework for Digital Process Control and Data Integrity

Resolving the data integrity crisis requires a strategic shift towards an integrated digital ecosystem. This framework is built on three pillars: automation of data capture, real-time process analytics, and the use of digital twins for predictive modeling.

Pillar 1: Automated Data Capture and Integration

The foundation of data integrity is the replacement of paper records with integrated digital systems. This involves deploying:

- Manufacturing Execution Systems (MES) and Electronic Batch Records (EBR) to digitize workflow instructions and data entry [2].

- Process Analytical Technology (PAT) such as inline sensors for pH, dissolved oxygen, glucose, and lactate to provide continuous, real-time data on bioreactor conditions [2].

- Automated sampling and analysis systems that feed data directly into a centralized data lake, eliminating manual transcription.
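As a minimal sketch of what automated capture can look like in practice, the Python example below stamps each sensor reading at the moment of capture and serializes it for an append-only store. The field names, the `BATCH-001` identifier, and the `probe-A` source label are illustrative assumptions, not part of any specific MES or historian product.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
import json


@dataclass(frozen=True)
class SensorReading:
    batch_id: str      # hypothetical batch identifier
    parameter: str     # e.g. "pH", "DO", "glucose", "lactate"
    value: float
    unit: str
    source: str        # instrument or probe that produced the value
    recorded_at: str   # ISO 8601 UTC timestamp set at capture time


def capture(batch_id, parameter, value, unit, source, now=None):
    """Build a reading stamped at capture time rather than at later entry."""
    ts = (now or datetime.now(timezone.utc)).isoformat()
    return SensorReading(batch_id, parameter, float(value), unit, source, ts)


def to_json_line(reading):
    """Serialize one reading as a JSON line for an append-only store."""
    return json.dumps(asdict(reading), sort_keys=True)


reading = capture("BATCH-001", "pH", 7.31, "pH", "probe-A")
print(to_json_line(reading))
```

Because the record is frozen and carries its own source and timestamp, it cannot be silently edited after capture, which is the property manual transcription lacks.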

Pillar 2: Real-Time Analytics and AI-Powered Monitoring

With data streams digitized, advanced analytics can be deployed for real-time quality control. AI-powered batch monitoring systems function as tireless quality inspectors, simultaneously analyzing thousands of process parameters to catch subtle patterns of deviation [2]. For instance, machine learning algorithms can identify metabolite shifts that signal potential problems before they affect product quality, allowing operators to adjust parameters like temperature or nutrients proactively [2]. This moves the quality paradigm from reactive end-product testing to proactive real-time process control.
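The idea of catching a metabolite shift early can be illustrated with a deliberately simple statistical stand-in. The sketch below is not the AI system described in [2]; it uses a rolling z-score, and the window size, threshold, and lactate values are assumed purely for illustration.

```python
from statistics import mean, stdev


def flag_deviations(values, window=5, threshold=3.0):
    """Return indices whose value deviates from the mean of the preceding
    `window` readings by more than `threshold` standard deviations."""
    flagged = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            # A flat history: any change at all counts as a deviation.
            if values[i] != mu:
                flagged.append(i)
            continue
        if abs(values[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Hypothetical lactate readings in mM; the spike sits at index 7.
lactate_mM = [1.0, 1.1, 1.2, 1.1, 1.2, 1.3, 1.2, 3.5, 1.3]
print(flag_deviations(lactate_mM))  # → [7]
```

A production system would typically model many parameters jointly, but the principle is the same: compare each new reading against recent process history instead of waiting for end-product testing.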

Pillar 3: Digital Twins for Predictive Modeling

A digital twin is a virtual replica of the manufacturing process that runs in parallel to the real process. Fueled by real-time data, it can predict future process states and allow for risk-free optimization. For example, the UK's Cell and Gene Therapy Catapult has developed a digital twin of the CAR-T cell expansion process. This model uses live sensor data to forecast cell growth trends and identify optimal feeding strategies, creating a cycle of ongoing process refinement [2].
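To make the forecasting idea concrete, the sketch below uses a logistic growth curve as a toy stand-in for a process model. A real digital twin is far richer than this; the growth rate, carrying capacity, and densities are assumed values chosen only to show how a model can estimate when a target cell density will be reached.

```python
import math


def logistic_density(t_hours, n0, growth_rate, capacity):
    """Closed-form logistic growth: cell density at time t_hours."""
    a = (capacity - n0) / n0
    return capacity / (1 + a * math.exp(-growth_rate * t_hours))


def hours_to_reach(target, n0, growth_rate, capacity):
    """Invert the logistic curve to estimate when `target` is reached."""
    if not (n0 < target < capacity):
        raise ValueError("target must lie between n0 and capacity")
    ratio = (target * (capacity - n0)) / (n0 * (capacity - target))
    return math.log(ratio) / growth_rate


# Hypothetical values: densities in cells/mL, rate in 1/hour.
t = hours_to_reach(target=2e6, n0=5e5, growth_rate=0.03, capacity=4e6)
print(f"Estimated time to target density: {t:.1f} h")
```

In practice, the model parameters would be re-fitted as live sensor data arrives, so the forecast improves over the course of the run.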

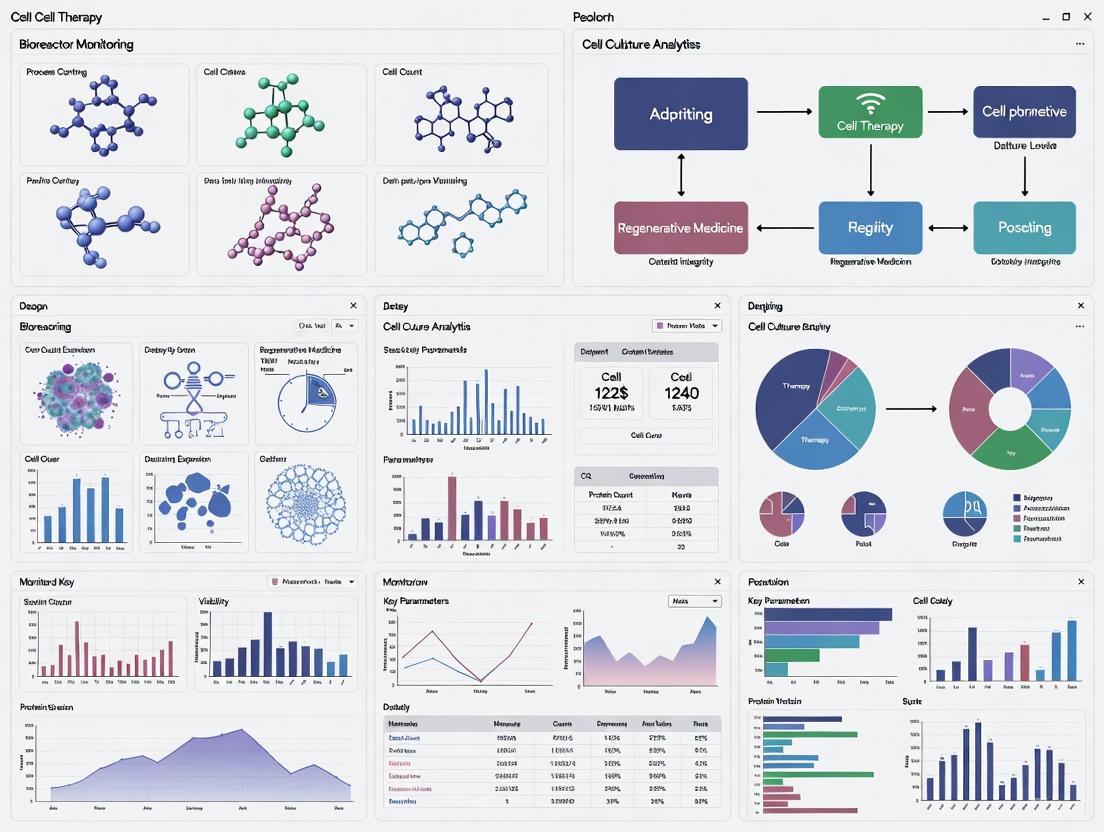

The following diagram illustrates the logical flow and interactions between these core pillars within a closed-loop control system that ensures data integrity.

Experimental Protocol: Implementing a Real-Time Process Control System

This protocol details the steps for integrating real-time, data-driven monitoring and control for a T-cell expansion process, a common step in CAR-T therapy manufacturing.

Objective

To establish an automated, data-driven bioreactor system for T-cell expansion that maintains critical process parameters within predefined ranges and collects all relevant data in a structured electronic format for real-time analysis and long-term traceability.

Materials and Equipment

Table 2: Research Reagent Solutions and Essential Materials

| Item | Function / Explanation |

|---|---|

| Closed-system Bioreactor | A scalable bioreactor (e.g., wave-mixed or stirred-tank) with integrated control units for temperature, pH, and dissolved oxygen (DO). Provides a controlled, sterile environment for cell growth. |

| Inline PAT Sensors | Sterilizable probes for pH, DO, glucose, and lactate. Enable continuous, real-time monitoring of key metabolic parameters without manual sampling. |

| Automated Sampler | An aseptic, inline cell counter (e.g., based on flow cytometry or image analysis). Provides periodic, automated cell count and viability data. |

| Data Historian / MES Software | A centralized software platform (e.g., a Manufacturing Execution System) that interfaces with all hardware to collect, timestamp, and store all process data in a structured database. |

| Cell Culture Media | Serum-free media optimized for T-cell expansion, supplemented with cytokines (e.g., IL-2). The specific formulation is a Critical Process Parameter. |

| Activation Reagents | Anti-CD3/CD28 antibodies (soluble or bead-bound) for T-cell activation and initiation of the expansion process [1]. |

Step-by-Step Methodology

System Setup and Calibration

- Aseptically install and calibrate all PAT sensors (pH, DO, glucose) according to manufacturer specifications.

- Connect the bioreactor control system, PAT sensors, and automated sampler to the central MES/data historian software. Verify data streaming for all parameters.

- Define and program the critical process parameter (CPP) setpoints and acceptable ranges into the bioreactor controller and MES. For example:

- pH: 7.2 - 7.4

- DO: 30-50%

- Glucose: Maintain between 4-6 mM
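The example ranges above can be expressed as a small configuration plus a range check. This is a sketch only: the dictionary keys and function name are illustrative assumptions, and a real controller or MES would hold these setpoints in its own validated configuration.

```python
# Example CPP ranges taken from the list above (low, high).
CPP_RANGES = {
    "pH": (7.2, 7.4),
    "DO_percent": (30.0, 50.0),
    "glucose_mM": (4.0, 6.0),
}


def out_of_range(readings, ranges=CPP_RANGES):
    """Return {parameter: (value, low, high)} for readings outside range."""
    excursions = {}
    for name, value in readings.items():
        low, high = ranges[name]
        if not (low <= value <= high):
            excursions[name] = (value, low, high)
    return excursions


print(out_of_range({"pH": 7.3, "DO_percent": 28.0, "glucose_mM": 5.1}))
# → {'DO_percent': (28.0, 30.0, 50.0)}
```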

Process Initiation and Data Acquisition

- Load the bioreactor with media and initiate the control loops for temperature, pH, and DO.

- Inoculate with isolated and activated T-cells at the target seeding density, recording the exact cell count and viability data directly into the MES.

- The MES automatically creates a new, time-stamped electronic batch record. All subsequent actions and sensor readings are logged automatically.

Real-Time Monitoring and Control

- The data historian continuously collects data from all connected systems.

- Configure the MES or a connected analytics platform to trigger alerts if any CPP deviates from its setpoint range.

- Implement a control strategy where glucose and lactate readings from the PAT sensors inform automated media feed or perfusion rates to maintain metabolite levels.
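One simple way to turn a glucose reading into a feed decision is a bolus mass balance: mixing culture volume V at concentration c with feed volume v at concentration c_f gives (c·V + c_f·v) / (V + v), so reaching a target c_t requires v = V·(c_t − c) / (c_f − c_t). The sketch below implements that arithmetic. It assumes a single bolus addition and ignores glucose consumption during the feed; the function name and example values are hypothetical.

```python
def feed_volume_l(culture_volume_l, glucose_mM, target_mM, feed_mM):
    """Feed volume (L) that brings glucose up to target_mM in one bolus.

    Derived from the mixing balance
        (c * V + c_f * v) / (V + v) = c_t.
    """
    if feed_mM <= target_mM:
        raise ValueError("feed concentration must exceed the target")
    if glucose_mM >= target_mM:
        return 0.0  # already at or above target: no feed needed
    return culture_volume_l * (target_mM - glucose_mM) / (feed_mM - target_mM)


# Hypothetical case: 2 L culture at 3.5 mM, target 5 mM, 200 mM feed stock.
v = feed_volume_l(2.0, 3.5, 5.0, 200.0)
print(f"Add {v * 1000:.1f} mL of feed")
```

A perfusion process would replace this bolus calculation with a rate-based controller, but the same balance underlies both.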

Predictive Analysis and Intervention

- Feed real-time cell growth and metabolite data into a pre-validated digital twin of the process.

- Use the digital twin's predictions for peak cell density and nutrient exhaustion to plan feeding schedules or determine the optimal harvest time.

- Any process adjustments made based on these predictions must be recorded in the EBR with a note referencing the digital twin's output.

Process Conclusion and Data Archival

- Upon harvest, perform final product quality checks (e.g., phenotype by flow cytometry, potency assay) and link these Critical Quality Attribute (CQA) results to the process data in the centralized database.

- The MES generates a final, complete batch report, ensuring a full and auditable data trail from cell source to final product.

- All electronic records are archived in a secure, compliant manner for long-term retention, facilitating future lot comparisons and regulatory submissions.

The following diagram maps this experimental workflow, highlighting the key stages and the continuous, bidirectional flow of data and control instructions.

The Scientist's Toolkit: Essential Digital Solutions

Implementing the framework above requires a suite of specialized tools and technologies. The following table catalogs key solution categories and their specific functions for addressing the data integrity crisis.

Table 3: Key Digital Solutions for Cell Therapy Workflows

| Solution Category | Specific Function / Explanation |

|---|---|

| Manufacturing Execution System (MES) | A software system that tracks and documents the transformation of raw materials into finished goods. It digitizes the batch record, enforces standard operating procedures (SOPs), and is the primary system of record for production data. |

| Process Analytical Technology (PAT) | A system for inline, online, or at-line measurement of CPPs and CQAs. Examples include bioreactor probes (pH, DO) and automated, inline cell counters. Provides the real-time data stream for analysis. |

| Digital Twin Software | A platform for creating a virtual replica of a physical process. It uses real-time data and models to simulate, predict, and optimize the manufacturing process, allowing for risk-free testing of parameters [2]. |

| Cloud-Based Data Platforms | Secure, scalable computing platforms that host centralized data lakes. They enable global collaboration on complex datasets, supporting AI-driven analytics and regulatory submissions [4]. |

| AI/ML Batch Monitoring | Software that uses machine learning algorithms to analyze historical and real-time batch data. It identifies subtle patterns and correlations, predicts outcomes, and flags anomalies that might be missed by human operators [2]. |

| Electronic Lab Notebook (ELN) | A digital system for recording research and development experiments. It promotes data integrity at the R&D stage, ensuring that early process development data is structured and traceable. |

The data integrity crisis in cell therapy is a significant barrier to industrializing these transformative treatments. However, as outlined in this application note, a strategic combination of integrated digital systems, predictive analytics, and purpose-built automation provides a clear path forward. By adopting the protocols and frameworks described, manufacturers can transform their workflows from error-prone, paper-based exercises into robust, data-rich processes. This evolution is critical not only for regulatory compliance but also for building the consistent, scalable, and economically viable manufacturing operations required to deliver on the full promise of cell therapies for patients worldwide. The future of cell therapy manufacturing is digital, and the time to invest in that future is now.

In the specialized field of cell and gene therapy manufacturing, manual process control rooted in spreadsheet-based systems presents significant and multifaceted risks to product quality and patient safety. Advanced Therapy Medicinal Products (ATMPs) require aseptic processing across multiple, complex unit operations, often over extended durations, making them uniquely vulnerable to inconsistencies inherent in human-dependent workflows [5]. This application note details the quantitative risks of manual interventions and provides structured, actionable protocols for implementing robust, automated process control strategies. Adherence to these methodologies is critical for ensuring data integrity, compliance with Good Manufacturing Practice (GMP), and the consistent production of safe, efficacious therapies [6] [7].

Quantitative Risk Analysis of Manual Interventions

A systematic risk assessment of aseptic processing steps is fundamental to a proactive contamination control strategy. The Aseptic Risk Evaluation Model (AREM) provides a formal framework for quantifying the risk of manual manipulations based on three key factors: duration, complexity, and proximity to the exposed product or process stream [5].

Table 1: Aseptic Risk Evaluation Model (AREM) Factor Definitions and Scoring Criteria [5]

| Risk Factor | Low Risk (Score=1) | Medium Risk (Score=2) | High Risk (Score=3) |

|---|---|---|---|

| Duration | Short (< 1 minute) | Medium (1-5 minutes) | Long (> 5 minutes) |

| Complexity | Simple, single motion | Multiple, coordinated motions | Complex, multi-part manipulation |

| Proximity | Far from open product | Close to, but not over, open product | Directly over exposed product |

The overall risk score for each aseptic manipulation is determined through a two-matrix scoring system, which classifies procedures and dictates required risk mitigation actions.

Table 2: AREM Preliminary and Final Risk Matrices for Overall Risk Scoring [5]

Preliminary Matrix (Complexity x Duration)

| Complexity ↓ / Duration → | Short (1) | Medium (2) | Long (3) |

|---|---|---|---|

| Simple (1) | Low (L) | Low (L) | Medium (M) |

| Moderate (2) | Low (L) | Medium (M) | High (H) |

| Complex (3) | Medium (M) | High (H) | High (H) |

Final Matrix (Preliminary Score x Proximity)

| Preliminary Score ↓ / Proximity → | Far (1) | Close (2) | Over (3) |

|---|---|---|---|

| Low (L) | Low | Medium | High |

| Medium (M) | Medium | High | High |

| High (H) | High | High | High |

Specified Actions:

- Low Risk: No additional controls required.

- Medium Risk: Procedure requires procedural and/or physical controls.

- High Risk: Requires procedural, physical, and verification controls; engineering controls should be evaluated [5].
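The two-matrix scoring can be sketched as a pair of lookup tables. The matrix values below are transcribed from Table 2; the function and variable names are illustrative assumptions and are not part of the published AREM tool.

```python
# (complexity, duration) -> preliminary level, transcribed from Table 2.
PRELIMINARY = {
    (1, 1): "L", (1, 2): "L", (1, 3): "M",
    (2, 1): "L", (2, 2): "M", (2, 3): "H",
    (3, 1): "M", (3, 2): "H", (3, 3): "H",
}

# (preliminary level, proximity) -> overall risk, transcribed from Table 2.
FINAL = {
    ("L", 1): "Low",    ("L", 2): "Medium", ("L", 3): "High",
    ("M", 1): "Medium", ("M", 2): "High",   ("M", 3): "High",
    ("H", 1): "High",   ("H", 2): "High",   ("H", 3): "High",
}


def arem_risk(duration, complexity, proximity):
    """Overall AREM risk for one manipulation; each factor is scored 1 to 3."""
    for score in (duration, complexity, proximity):
        if score not in (1, 2, 3):
            raise ValueError("factor scores must be 1, 2, or 3")
    preliminary = PRELIMINARY[(complexity, duration)]
    return FINAL[(preliminary, proximity)]


# A short, simple manipulation performed directly over exposed product.
print(arem_risk(duration=1, complexity=1, proximity=3))  # → High
```

The example shows why proximity dominates the final matrix: even the simplest, shortest manipulation scores High when performed directly over exposed product.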

Impact on Data Integrity and GMP Compliance

Manual processes reliant on paper batch records and spreadsheet data entry are inherently susceptible to errors that compromise data integrity, directly contravening GMP principles and global regulatory expectations [8] [9]. The ALCOA+ framework (Attributable, Legible, Contemporaneous, Original, Accurate, Complete, Consistent, Enduring, and Available) defines the mandatory standards for GxP data [9]. Manual transcription, for example, jeopardizes the "Accurate" principle, while the lack of robust audit trails in spreadsheets undermines the "Attributable" and "Contemporaneous" principles, making the data unreliable.

Evidence indicates that automating quality control (QC) processes, a major source of manual data handling, can fundamentally rectify these weaknesses. Integrated QC platforms that robotically handle samples and automatically upload data to Laboratory Information Management Systems (LIMS) demonstrably improve assay robustness, reduce manual labor, and ensure the generation of reliable electronic batch records [8].

Experimental Protocol for Assessing Aseptic Process Risks

The following protocol provides a detailed methodology for conducting an AREM-based risk assessment within a GMP environment, a critical first step in justifying process automation.

Protocol 1: Aseptic Risk Evaluation for a Cell Therapy Manufacturing Process

Objective: To systematically identify, analyze, and evaluate all aseptic manipulations within a specific cell therapy manufacturing process to determine their relative risk to sterility assurance and prioritize areas for control and automation.

Materials and Reagents:

- Batch Records: Complete manufacturing instructions for the drug product.

- Process Demonstrations: Access to the manufacturing suite or simulation materials (e.g., water, mock solutions) to observe manipulations.

- AREM Scoring Tool: Matrix tool for scoring duration, complexity, and proximity.

Procedure:

- Team Formation & Pre-work: Assemble a multidisciplinary team including SMEs from Manufacturing, MSAT, Quality Assurance, and QC Microbiology. A trained risk facilitator should lead the session.

- Scope Definition: Define the aseptic boundary and start/end points of the assessment. Document assumptions (e.g., inclusion/exclusion of sterile welding).

- Process Demonstration: Conduct a walkthrough or video review of the entire manufacturing process. Personnel who perform the process should demonstrate each aseptic manipulation.

- Manipulation Identification: Using the batch record and process demonstration, create a comprehensive list of every individual aseptic manipulation within the defined scope.

- Factor Rating: For each identified manipulation, the team collectively assigns scores (1-3) for Duration, Complexity, and Proximity based on the criteria in Table 1.

- Overall Risk Scoring: Input the factor scores into the AREM matrices (Table 2) to determine a preliminary risk value and then the final overall risk score (Low, Medium, High).

- Action Plan Development: For manipulations scoring Medium or High, document specific risk mitigation actions, which may include procedural enhancements, physical barriers, personnel training, or the implementation of automated/closed systems.

Transitioning to Automated Process Control

Quantifying the risks of manual processes provides the justification for investing in purpose-built automation, which simultaneously enhances quality, compliance, and scalability [3] [8]. Automated platforms, such as closed-system bioreactors and integrated manufacturing solutions, minimize human interventions by design. For example, a single-use consumable cartridge that integrates all unit operations allows patient material to remain within a closed system from initial loading until harvest, drastically reducing aseptic risks [8].

The logical workflow below contrasts the high-risk, complex pathway of a manual process with the streamlined, controlled pathway of an automated system, highlighting the reduction in intervention points and data handoffs.

The implementation of automated systems directly addresses the critical risks identified in manual processes. The following table quantifies the comparative benefits observed in automated cell therapy manufacturing.

Table 3: Comparative Analysis: Manual vs. Automated Process Control

| Performance Attribute | Manual Control | Automated Control | Quantitative Benefit of Automation |

|---|---|---|---|

| Aseptic Interventions | High frequency (e.g., welds, transfers) | Single closed-system load | Reduces contamination risk points by design [8] |

| Process Scalability | Limited by personnel and space | Parallel processing (e.g., 16 cartridges) | Scales from tens to hundreds of patients annually [8] |

| Data Integrity | Manual transcription, paper records | Automated data upload, electronic batch records | Improves data quality, consistency, and provides reliable audit trail [8] |

| Batch Consistency | High variability from operator technique | Software-defined, standardized workflows | Enables higher product consistency and quality [8] [7] |

The Scientist's Toolkit: Essential Research Reagent Solutions

The development and execution of robust, GMP-compliant processes for cell therapies require specific materials and software solutions. The following table details key reagents and systems critical for ensuring process control and data integrity.

Table 4: Essential Reagents and Systems for GMP Process Control

| Item | Function/Application | Relevance to Process Control & Data Integrity |

|---|---|---|

| Closed-System Consumable Cartridges | Integrated single-use units for cell enrichment, activation, expansion, and formulation. | Eliminates open processing steps, directly reducing aseptic risk identified in AREM [8]. |

| Laboratory Information Management System (LIMS) | Software for managing samples, associated data, and laboratory workflows. | Centralizes data, ensures ALCOA+ compliance, and integrates with automated equipment for end-to-end traceability [10]. |

| Automated QC Platforms | Integrated systems with robotic liquid handling and commercial analytical instruments. | Automates in-process and release testing, reducing human error and improving data robustness and throughput [8]. |

| Cell Orchestration Platform (COP) | Software for tracking and managing the chain of identity and chain of custody for patient materials. | Manages the complex, personalized supply chain, ensuring the right patient gets the right product [10]. |

| Stable Leukapheresis Product | Starting material for autologous therapies. Maintaining viability during hold is critical. | Defined hold times (e.g., up to 73h at 2-8°C) ensure starting material quality, a foundational CQA for manufacturing success [11]. |

The reliance on manual process control and spreadsheet-based data management represents an untenable risk in the modern GMP landscape for cell and gene therapies. By employing structured risk models like the AREM to quantitatively identify vulnerabilities and subsequently implementing purpose-built automated systems, manufacturers can proactively assure product quality and patient safety. The transition to automation is not merely a technical upgrade but a fundamental requirement for achieving the data integrity, regulatory compliance, and scalable production needed to deliver these life-changing advanced therapies reliably.

Chain of Identity (CoI) is a critical data integrity and process control system that ensures the correct patient-specific biological material is tracked throughout the entire cell therapy manufacturing process. In patient-specific autologous therapies, where the product is manufactured for a single individual from their own cells, maintaining an unbroken CoI is essential for patient safety and regulatory compliance. The ISBT 128 Chain of Identity Identifier provides a globally unique, structured system for securing the chain of custody throughout the lifecycle of human-derived biological material, from donation through manufacturing to administration for patient-specific therapy [12]. This system uses a structured sequence of alphanumeric characters including a Facility Identification Number (FIN) assigned by ICCBBA to ensure global uniqueness, creating an auditable trail that prevents product misidentification [12].

The complexity of autologous cell therapy manufacturing necessitates sophisticated software solutions that can manage the numerous variables and data points generated throughout the process. Platforms like OCELLOS by TrakCel support cell and gene therapy chain of identity, chain of custody, visibility, and auditing by leveraging a flexible, modular framework powered by Salesforce.com [13]. These systems centralize all records relating to every therapy journey for every patient, providing real-time tracking and reportable audit trails that leave searchable, time-stamped records [13]. Similarly, Clarkston's Cell Therapy Orchestration Platform (CTOP) supports the operational needs of cell therapies that do not fit into the current architectures of life sciences organizations by providing real-time access to the changing variables of patients and manufacturing in a single platform [14].

Quantitative Requirements for Chain of Identity Systems

Data Integrity and ALCOA+ Principles

Cell and gene therapy developers must implement robust data management systems that adhere to ALCOA+ principles, which stand for Attributable, Legible, Contemporaneous, Original, Accurate, plus Complete, Consistent, Enduring, and Available [15]. These principles form the foundation for reliable data throughout the chain of identity process. The variable nature of personalized therapies presents significant challenges for maintaining data integrity, as starting material changes for every patient, process parameters differ for every production process, and the final product varies for every patient [15].

Table 1: Data Integrity Control Framework for Chain of Identity Systems

| Control Type | Implementation Examples | ALCOA+ Principles Addressed |

|---|---|---|

| Behavioral Controls | Training on documentation protocols, Data integrity culture | Attributable, Contemporaneous, Accurate |

| Technical Controls | Automated audit trails, Access controls, Electronic signatures | Original, Enduring, Available, Legible |

| Procedural Controls | SOPs for data recording, Review processes, Metadata management | Complete, Consistent, Attributable |

The three types of data integrity control (behavioral, technical, and procedural) must work in concert to keep data complete in line with ALCOA+ principles [15]. In most cases, different types of control need to be combined. For example, an access-controlled data folder (a technical control) fails on its own if no procedure describes where data must be stored.
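As an illustration of a technical control, the sketch below shows an append-only audit trail in which each entry records who did what and when, and is chained to the previous entry by a SHA-256 hash so that later edits are detectable. This is a teaching sketch under assumed field names; it is not by itself a 21 CFR Part 11 compliant implementation.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(body):
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(trail, user, action, now=None):
    """Append an attributable, time-stamped entry chained to the previous one."""
    body = {
        "user": user,
        "action": action,
        "timestamp": (now or datetime.now(timezone.utc)).isoformat(),
        "prev_hash": trail[-1]["hash"] if trail else GENESIS,
    }
    trail.append({**body, "hash": _digest(body)})
    return trail[-1]


def verify(trail):
    """Return True only if no entry was altered, removed, or reordered."""
    prev = GENESIS
    for entry in trail:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev or entry["hash"] != _digest(body):
            return False
        prev = entry["hash"]
    return True


trail = []
append_entry(trail, "operator01", "recorded cell count")
append_entry(trail, "qa02", "reviewed batch record")
print(verify(trail))  # → True
```

The hash chain addresses only tamper evidence. Behavioral and procedural controls, such as training and review SOPs, remain necessary for the entries to be accurate in the first place.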

Regulatory and Compliance Requirements

Compliance with Good Manufacturing Practice (GMP) guidelines is mandatory for cell therapy manufacturing. GMP is defined by the Medicines and Healthcare Products Regulatory Agency (MHRA) in the United Kingdom as "that part of quality assurance which ensures that medicinal products are consistently produced and controlled to the quality standards appropriate to their intended use and as required by the marketing authorization or product specification" [16]. Both the U.S. Food and Drug Administration (FDA) and the European Medicines Agency (EMA) have similar definitions, with cell therapy products considered advanced-therapy medicinal products in Europe and reviewed by the Committee for Advanced Therapies [16].

Table 2: Key Regulatory Requirements for Patient-Specific Manufacturing

| Regulatory Area | Key Requirements | Applicable Regulations |

|---|---|---|

| GMP Compliance | Consistent production and control, Quality standards, Facility and equipment validation | 21 CFR 210, 211, 610, 820 (U.S.), Regulation (EC) No. 1394/2007 (EU) |

| Chain of Identity | Patient identification, Sample tracking, Audit trails, Reconciliation | 21 CFR 1271 (Human Cells, Tissues, and Cellular and Tissue-Based Products) |

| Data Integrity | ALCOA+ principles, Electronic records, Audit trails | 21 CFR Part 11 (Electronic Records; Electronic Signatures) |

| Product Testing | Identity, Safety (viral), Purity, Potency, Sterility, Endotoxin, Mycoplasma | FDA Guidance for Industry: CGMP for Phase 1 Investigational Drugs |

Implementing GMP guidelines for autologous cell therapy products requires a comprehensive approach that begins at the manufacturing process design stage. It is necessary to perform a gap analysis for GMP compliance from incoming raw material quality and source to the validation of final product shipping containers [16]. For each process step, the overall approach should be to reduce risk of contamination of the product, establish documentation to verify that the entire process is correctly performed, and minimize variability in the process while maintaining the salient characteristics and function of the cells of interest [16].

Experimental Protocols for Chain of Identity Implementation

Protocol: Implementing a Comprehensive Chain of Identity System

Objective: Establish and validate a robust chain of identity system for patient-specific autologous cell therapy manufacturing that ensures correct patient-product association throughout the process.

Materials:

- ISBT 128 Chain of Identity Identifier system [12]

- Cell therapy orchestration platform (e.g., OCELLOS, CTOP) [13] [14]

- Barcode labeling system

- Electronic documentation system

- Audit trail functionality

Methodology:

Patient Enrollment and Identification

- Use a wizard interface to guide users through the patient enrollment process, capturing patient information and identification details [13]

- Assign a globally unique ISBT 128 CoI Identifier following the structured sequence of alphanumeric characters including the Facility Identification Number (FIN) [12]

- Generate patient-specific labels with barcodes containing the CoI Identifier

- Document all enrollment information in a centralized system with time-stamped audit trails [13]

Sample Collection and Processing

- Apply patient-specific labels to all collection materials prior to sample acquisition

- Verify patient identity using two independent identifiers before sample collection

- Scan barcodes at each process step to maintain chain of custody

- Record all sample manipulations with contemporaneous documentation [15]

Manufacturing Process Tracking

- Integrate CoI system with manufacturing equipment for automated data capture

- Implement electronic batch records linked to the CoI Identifier

- Establish process parameter controls with acceptable ranges defined

- Document all deviations and corrective actions within the system

Product Release and Administration

- Perform final reconciliation of CoI documentation

- Verify patient identity before product administration

- Scan product barcode and patient identifier at bedside

- Document administration details and complete the CoI audit trail

Validation Parameters:

- 100% accuracy in patient-product association throughout the process

- Complete and searchable audit trail for all transactions [13]

- No unverified steps or data gaps in the chain of identity

- Successful data reconstruction after 5 years [15]
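The barcode verification and audit trail steps in this protocol can be illustrated with a minimal Python sketch. It assumes a hypothetical identifier string (`COI-DEMO-0001` is a placeholder, not the real ISBT 128 CoI format) and uses a hash-chained, append-only log as one possible way to make tampering detectable. The class and function names are illustrative and do not come from OCELLOS, CTOP, or any other platform.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditTrail:
    """Append-only log; each entry stores the hash of the previous entry."""
    entries: list = field(default_factory=list)

    def append(self, expected: str, scanned: str, step: str, operator: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        record = {
            "expected_coi": expected,
            "scanned_coi": scanned,
            "match": expected == scanned,
            "step": step,
            "operator": operator,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "prev_hash": prev_hash,
        }
        # Hash the record contents (without the hash field itself).
        payload = json.dumps(record, sort_keys=True).encode("utf-8")
        record["hash"] = hashlib.sha256(payload).hexdigest()
        self.entries.append(record)
        return record

    def is_intact(self) -> bool:
        """Recompute every hash and check that the chain links are unbroken."""
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            payload = json.dumps(body, sort_keys=True).encode("utf-8")
            if entry["prev_hash"] != prev_hash:
                return False
            if hashlib.sha256(payload).hexdigest() != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


def verify_scan(trail: AuditTrail, expected: str, scanned: str,
                step: str, operator: str) -> bool:
    """Log the scan (match or mismatch) and return whether the IDs agree."""
    return trail.append(expected, scanned, step, operator)["match"]


trail = AuditTrail()
verify_scan(trail, "COI-DEMO-0001", "COI-DEMO-0001", "collection", "operator-1")
verify_scan(trail, "COI-DEMO-0001", "COI-DEMO-0002", "bedside", "operator-2")
print([e["match"] for e in trail.entries], trail.is_intact())
```

Logging mismatches as well as matches keeps the trail free of unverified steps. A production system would additionally need access control, electronic signatures, and validated storage, none of which this sketch addresses.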

Figure 1: Chain of Identity Workflow with Data Integrity Controls

Protocol: Technology Transfer to Contract Manufacturing Organizations

Objective: Establish a robust technology transfer process for chain of identity systems when transitioning from academic development to contract manufacturing organizations (CMOs).

Materials:

- Complete documentation package

- CMO with cell therapy experience [16]

- Quality agreement

- Validation protocols

Methodology:

Pre-Transfer Preparation

- Select CMO with appropriate scientific and technical expertise, experience, and capacity to manufacture at the scale needed [16]

- Evaluate CMO's history and breadth of experience in cell therapy, including types of cell therapy products manufactured, length and scale of manufacturing campaigns, and track record of regulatory interactions [16]

- Prepare comprehensive documentation package including scientific background publications, cell isolation and manipulation protocols, assay protocols, bill of materials for production, raw material specifications, and process parameter data [16]

Information Transfer

- Conduct knowledge transfer sessions to educate CMO scientists and technical staff on the cell product concept and manufacturing process [16]

- Perform gap analysis for GMP compliance and identify critical process steps [16]

- Develop appropriate quality control assays and specifications for process control [16]

- Transfer and validate the chain of identity system at the CMO site

Process Implementation

- Establish comparable chain of identity documentation systems

- Validate all critical process steps and quality controls

- Conduct parallel processing runs to demonstrate equivalence

- Establish ongoing monitoring and communication protocols

Validation Parameters:

- Equivalent product quality and chain of identity maintenance

- Demonstrated readiness for regulatory inspection

- No interruptions in patient-specific product tracking

- Complete data transfer and system functionality

Essential Research Reagent Solutions for Chain of Identity

Table 3: Key Research Reagent Solutions for Patient-Specific Manufacturing

| Reagent/Material | Function | GMP Requirements |
|---|---|---|
| Cell Isolation Reagents | Isolation of specific cell types from patient samples | GMP-grade, United States Pharmacopeia, or European guidelines [16] |
| Cell Culture Media | Expansion and maintenance of cells during manufacturing | Serum-free, xeno-free formulations preferred; GMP-manufactured [16] [17] |
| Cryopreservation Solutions | Preservation of cell products during storage and transport | Defined composition, endotoxin testing, stability validation [16] |
| Genetic Modification Agents | Viral vectors or other agents for cell engineering | GMP-manufactured, purity and safety testing [16] |
| Quality Control Assays | Testing for identity, potency, safety, purity | Validated methods, qualification for intended use [16] |

The selection of appropriate reagents and materials is critical for maintaining chain of identity and ensuring product quality. Where practical, the use of single-use, disposable materials and closed systems for manipulating cells is preferred [16]. Reagents, media and supplements, and cytokines should be manufactured under GMP, United States Pharmacopeia, or European guidelines. If not available in these forms, then additional testing may be needed to ensure the appropriate level of purity and lot-to-lot consistency [16].

Automation systems play a crucial role in maintaining chain of identity by reducing manual interventions and associated errors. Systems like the Gibco CTS Rotea Counterflow Centrifugation System, CTS Dynacellect Magnetic Separation System, and CTS Xenon Electroporation System provide closed, automated processing that minimizes contamination risks and reduces the need for cleanroom environments [17]. These systems improve process consistency and reliability by reducing operator variability and hands-on time, which is essential for maintaining data integrity throughout the chain of identity [17].

Figure 2: Data Integrity Framework Supporting Chain of Identity

A robust chain of identity system is fundamental to the successful development and commercialization of patient-specific cell therapies. As the field continues to evolve, with 4,238 gene, cell, and RNA therapies in development as of Q4 2024 [17], standardized, globally recognized systems such as the ISBT 128 Chain of Identity Identifier become increasingly important [12]. These systems provide the foundation for maintaining patient safety through accurate product identification and tracking from donor to patient.

Integrating comprehensive data integrity practices with chain of identity systems supports regulatory compliance and eases technology transfer from academic research to commercial manufacturing. By implementing the protocols and systems described in this document, researchers and developers can establish a solid foundation for safe and effective patient-specific cell therapies, maintaining the critical link between each patient and their personalized therapeutic product throughout manufacturing and administration.

In the rapidly advancing field of cell therapy, legacy software systems have emerged as a critical bottleneck, impeding scalability and threatening commercial viability. These outdated platforms, defined as aging hardware and applications no longer supported with regular patches or updates, create fundamental constraints on an industry where precision, data integrity, and process control are paramount [18]. With approximately 62% of organizations across various sectors, including life sciences, still dependent on legacy systems, the cell therapy industry faces disproportionate risks due to the highly specialized and regulated nature of its operations [19] [18].

The reliance on legacy systems creates a compounding dilemma: while these platforms once served as the backbone for early research and development, they now actively hinder transition to commercial-scale manufacturing. This directly impacts the ability to bring life-saving therapies to patients efficiently. Security vulnerabilities are particularly pressing in this context, with 43% of IT professionals identifying them as a major concern, especially when handling sensitive patient data and proprietary research [19]. Furthermore, system incompatibility with modern tools creates operational silos that prevent the unified data analysis essential for Quality by Design (QbD) principles and regulatory compliance [19] [20].

Perhaps most critically for the cell therapy sector, legacy software limitations directly impact process control and data integrity – two pillars of current Good Manufacturing Practice (cGMP). The inability to integrate with modern Process Analytical Technology (PAT) and real-time monitoring systems means manufacturers cannot implement the continuous process verification and adaptive control strategies needed for robust, scalable production of living medicines [3] [2].

Quantitative Impact: The Data Behind Legacy System Limitations

The constraints imposed by legacy systems translate into measurable impacts on operational efficiency, financial performance, and scalability. The following data, synthesized from industry surveys and research, quantifies these challenges specifically within technology-dependent sectors that mirror the operational requirements of cell therapy manufacturing.

Table 1: Quantitative Impacts of Legacy Systems on Business Operations

| Impact Category | Metric | Data Source | Relevance to Cell Therapy |
|---|---|---|---|
| Organizational Reliance | 62% of organizations still use legacy systems | Saritasa 2025 Survey [19] | Indicates industry-wide challenge affecting life sciences |
| Security Concerns | 43% cite security vulnerabilities as major concern | Saritasa 2025 Survey [19] | Critical for patient data protection and IP security |
| IT Budget Allocation | Up to 70% of IT budgets spent on legacy maintenance | McKinsey Research [21] | Diverts resources from innovation and process improvement |
| Modernization Drivers | 48% prioritize performance improvements as top goal | Saritasa 2025 Survey [19] | Directly impacts manufacturing throughput and efficiency |
| Financial Performance | Technical debt limits innovation ability for 70% of organizations | C-level Executive Survey [21] | Reduces competitive positioning in rapidly evolving field |
| Operational Costs | 30-50% reduction in maintenance costs after modernization | IBM Modernization Research [21] | Significant for cost-intensive cell therapy manufacturing |

Table 2: Modernization Benefits and Financial Justification

| Benefit Category | Improvement Range | Source | Cell Therapy Application |
|---|---|---|---|
| Infrastructure Savings | 15-35% annually | IBM Modernization Research [21] | Funds reinvestment in advanced manufacturing technologies |
| Maintenance Cost Reduction | 30-50% reduction | IBM Modernization Research [21] | Lowers cost of goods (COGS) for therapeutic products |
| IT Operations Productivity | 30% improvement | Microsoft Azure Research [21] | Increases manufacturing team efficiency |
| Application Development Speed | 50% increase | Microsoft Azure Research [21] | Accelerates development of custom process control solutions |
| ROI from Modernization | 228% over three years | Microsoft Azure PaaS Study [21] | Strong financial justification for strategic investment |

The data reveals a clear pattern: legacy systems consume disproportionate resources while delivering diminishing returns. For cell therapy organizations, this resource drain directly impacts patient access and therapy affordability. As noted in industry analysis, "legacy manufacturing processes remain the leading driver of high therapeutic costs" because they are "complex, resource-intensive, and difficult to scale" [22]. This creates a bottleneck that ultimately limits patient access to transformative treatments.

Legacy System Challenges in Cell Therapy Manufacturing

The transition from laboratory-scale production to commercial manufacturing exposes specific vulnerabilities created by legacy software systems in cell therapy operations.

Data Integrity and Process Control Limitations

Legacy systems fundamentally lack the architecture required for modern quality-by-design (QbD) approaches essential to cell therapy manufacturing. These outdated platforms typically operate with disconnected data silos that prevent comprehensive process analysis and control [19] [18]. As one industry expert notes, "data on paper is not useful… it's impossible to evaluate for continuous monitoring and improvement" [2]. This limitation is particularly problematic in autologous cell therapy manufacturing, where each batch is unique to a patient and process consistency must be achieved across inherent biological variability.

The inability to implement real-time process control represents another critical limitation. Modern cell therapy manufacturing requires continuous monitoring of critical process parameters (CPPs) to ensure consistent product quality [20] [2]. Legacy systems lack the integration capabilities to connect with advanced sensors and analytical tools that enable real-time adjustment of process parameters. This forces manufacturers to rely on end-point testing, which often comes too late – a failed batch can mean wasted time, money, and, most importantly, a patient left waiting [2].
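As a rough illustration of what continuous CPP monitoring involves at its simplest, the sketch below checks the latest readings against acceptable ranges and flags both excursions and missing readings. The parameter names and limits are placeholders chosen for the example, not recommended values; real ranges come from process development and validation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ParameterLimit:
    """Acceptable range for one critical process parameter (CPP)."""
    name: str
    low: float
    high: float
    unit: str


def find_excursions(limits, readings):
    """Return (name, value, reason) for every CPP out of range or missing.

    `readings` maps parameter name -> latest measured value. A missing
    reading is reported too, because a data gap cannot be verified.
    """
    excursions = []
    for limit in limits:
        value = readings.get(limit.name)
        if value is None:
            excursions.append((limit.name, None, "no reading"))
        elif value < limit.low:
            excursions.append((limit.name, value, "below range"))
        elif value > limit.high:
            excursions.append((limit.name, value, "above range"))
    return excursions


# Illustrative limits only (assumptions for this example).
LIMITS = [
    ParameterLimit("temperature", 36.5, 37.5, "degC"),
    ParameterLimit("pH", 7.0, 7.4, "pH"),
    ParameterLimit("dissolved_oxygen", 30.0, 60.0, "% air saturation"),
]

readings = {"temperature": 38.1, "pH": 7.2}
for name, value, reason in find_excursions(LIMITS, readings):
    print(f"{name}: {value} ({reason})")
```

Running a check like this on every sensor update, rather than only at end-point testing, is what allows an excursion to be caught while corrective action is still possible.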

Scalability and Integration Barriers

Legacy systems create fundamental constraints on manufacturing scalability through incompatibility with modern automation platforms. As the industry moves toward purpose-built automation systems like Cellares' Cell Shuttle platform, which minimizes manual intervention and associated contamination risks, integration with legacy software becomes a significant challenge [8] [3]. This incompatibility prevents manufacturers from leveraging the benefits of closed, automated systems that can process multiple batches in parallel within a compact footprint [8].

Furthermore, legacy systems hinder the implementation of digital twins and advanced modeling approaches that are becoming essential for process optimization and scale-up. These virtual replicas of manufacturing processes require real-time data integration and sophisticated analytics capabilities that legacy architectures simply cannot support [2]. As noted in industry analysis, "digital twins allow manufacturers to simulate and optimise cell culture or processing steps virtually, using live process data to test 'what-if' scenarios without risking real product" [2]. Without this capability, process development and scale-up become more time-consuming, expensive, and risky.

Table 3: Specific Legacy System Challenges in Cell Therapy Context

| Challenge | Impact on Cell Therapy Manufacturing | Consequence |
|---|---|---|
| System Incompatibility | Cannot integrate with modern PAT tools | Limits real-time process control and quality monitoring |
| Data Silos | Prevents correlation of process parameters with product quality | Hinders process understanding and optimization |
| Limited Scalability | Unable to support parallel processing of multiple patient batches | Restricts manufacturing capacity and patient access |
| Security Vulnerabilities | Risk to sensitive patient data and intellectual property | Potential compliance violations and data integrity concerns |
| High Maintenance Costs | Diverts resources from process innovation | Increases overall cost of goods and therapy pricing |

Modernization Approaches: Frameworks for Transformation

Several strategic approaches exist for modernizing legacy systems, each with distinct advantages and implementation considerations for cell therapy organizations.

Modernization Methodologies

Replatforming: This approach involves migrating legacy systems to modern platforms, such as x86 servers or cloud environments, while preserving the existing application code and functionality [18]. For cell therapy organizations, this can provide immediate benefits in performance and scalability without the risk of completely redeveloping validated processes. The Stromasys Charon solution exemplifies this approach, using emulation to migrate legacy systems to modern platforms without code changes [18].

Refactoring: This methodology involves restructuring existing code and architecture to improve scalability and efficiency while maintaining core functionality [18]. For cell therapy software, this might involve modularizing monolithic applications to enable better integration with modern sensors and analytical tools.

Re-engineering or Re-designing: This comprehensive approach involves completely redesigning and rebuilding systems using modern technologies [18]. While more resource-intensive, this method offers the greatest long-term benefits by enabling purpose-built solutions specifically designed for cell therapy manufacturing challenges.

Containerization: This approach encapsulates legacy software and its dependencies in containers for easier deployment and management [18]. This can be particularly valuable for maintaining specific legacy functionalities while enabling better integration with modern data management systems.

Implementation Framework

Successful modernization requires a structured approach aligned with business objectives:

- Assessment Phase: Comprehensive evaluation of existing systems, identifying dependencies, and prioritizing modernization candidates based on business criticality and technical debt.

- Target Architecture Definition: Designing future-state architecture that supports scalability, integration, and compliance requirements specific to cell therapy manufacturing.

- Migration Strategy: Developing a phased implementation plan that minimizes disruption to ongoing operations and maintains regulatory compliance.

- Validation Approach: Establishing protocols for verifying system performance and data integrity throughout the migration process, ensuring continuous GMP compliance.

Experimental Protocols for Legacy System Assessment and Migration

Protocol 1: Legacy System Impact Assessment for Cell Therapy Manufacturing

Objective: Systematically evaluate the impact of legacy software systems on cell therapy manufacturing processes and identify priority areas for modernization.

Materials and Equipment:

- Process mapping software (e.g., Microsoft Visio)

- Data integration assessment toolkit

- Security vulnerability scanning tools

- Performance monitoring software

- Compliance assessment checklist (21 CFR Part 11, Annex 11)

Methodology:

- Process Mapping: Document complete cell therapy manufacturing workflow, identifying all software touchpoints and data transfer points.

- Integration Capacity Evaluation: Assess legacy system ability to integrate with modern Process Analytical Technology (PAT) tools using standardized API testing.

- Data Integrity Assessment: Execute data traceability tests across the manufacturing process, monitoring for gaps, errors, or manual transcription requirements.

- Security Vulnerability Analysis: Conduct penetration testing and vulnerability assessment specific to cell therapy data protection requirements.

- Performance Benchmarking: Quantify system performance against key metrics including process downtime, data retrieval times, and batch record generation efficiency.

- Regulatory Compliance Audit: Evaluate system compliance with current regulatory standards for electronic records and electronic signatures.

Validation Metrics:

- Percentage of manual data transcription steps

- Number of incompatible modern systems

- Frequency of system-related deviations in manufacturing

- Data retrieval time for lot traceability exercises

- Security vulnerabilities per system interface
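The first validation metric can be computed directly from the process map produced in the Process Mapping step. The sketch below assumes each software touchpoint has been recorded with a flag indicating whether data is re-keyed by hand. The `Touchpoint` structure and the example workflow are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Touchpoint:
    """One data hand-off in the mapped manufacturing workflow."""
    step: str
    system: str
    manual_transcription: bool


def manual_transcription_pct(touchpoints):
    """Percentage of touchpoints that require manual data transcription."""
    if not touchpoints:
        raise ValueError("process map contains no touchpoints")
    manual = sum(1 for t in touchpoints if t.manual_transcription)
    return 100.0 * manual / len(touchpoints)


# Hypothetical process map for illustration.
process_map = [
    Touchpoint("apheresis receipt", "paper log", True),
    Touchpoint("cell count", "cell counter export", False),
    Touchpoint("expansion monitoring", "bioreactor historian", False),
    Touchpoint("release testing", "spreadsheet", True),
]

print(f"{manual_transcription_pct(process_map):.1f}% manual transcription steps")
```

Tracking this figure before and after modernization gives a simple, comparable indicator of how much transcription risk remains in the workflow.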

Protocol 2: Legacy System Migration Validation for GMP Environments

Objective: Ensure continued data integrity and process control during and after migration from legacy systems to modern platforms in regulated cell therapy manufacturing environments.

Materials and Equipment:

- Validation protocol templates

- Test data sets representing full manufacturing variability

- Data comparison and verification tools

- User acceptance testing scenarios

- Audit trail verification software

Methodology:

- Test Environment Establishment: Create mirrored test environment containing both legacy and modernized systems.

- Data Migration Verification: Execute parallel processing of historical manufacturing data through both systems, comparing outputs for equivalence.

- Interface Validation: Verify all integration points with manufacturing equipment, analytical instruments, and quality management systems.

- User Acceptance Testing: Conduct structured simulations with manufacturing staff using real-world scenarios and emergency procedures.

- Performance Stress Testing: Subject new system to peak capacity demands representing scaled manufacturing requirements.

- Audit Trail Verification: Confirm complete data integrity and traceability across the entire manufacturing process.

Acceptance Criteria:

- 100% data fidelity between legacy and modernized systems

- Zero critical observations in mock regulatory audit

- User proficiency scores ≥90% for all manufacturing staff

- System uptime ≥99.5% during stability testing

- Successful integration with all designated manufacturing equipment
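One way to approach the data fidelity criterion is to fingerprint each record exported from both systems and compare the fingerprints. The sketch below assumes both systems can export records as dictionaries keyed by a shared record identifier. The field names are illustrative, and canonical JSON plus SHA-256 is a design choice made for this example, not a regulatory requirement.

```python
import hashlib
import json


def fingerprint(record: dict) -> str:
    """Hash a record in a canonical form so key order does not matter."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"), default=str)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def compare_exports(legacy: dict, migrated: dict) -> dict:
    """Compare record-id -> record mappings exported from both systems."""
    if not legacy:
        raise ValueError("legacy export is empty")
    missing = sorted(set(legacy) - set(migrated))
    unexpected = sorted(set(migrated) - set(legacy))
    mismatched = sorted(
        rid for rid in set(legacy) & set(migrated)
        if fingerprint(legacy[rid]) != fingerprint(migrated[rid])
    )
    matched = len(legacy) - len(missing) - len(mismatched)
    return {
        "fidelity_pct": 100.0 * matched / len(legacy),
        "missing": missing,
        "unexpected": unexpected,
        "mismatched": mismatched,
    }


# Hypothetical exports for illustration.
legacy = {
    "BATCH-001": {"viability_pct": 92.1, "operator": "A. Smith"},
    "BATCH-002": {"viability_pct": 88.4, "operator": "B. Jones"},
}
migrated = {
    "BATCH-001": {"operator": "A. Smith", "viability_pct": 92.1},
    "BATCH-002": {"viability_pct": 88.0, "operator": "B. Jones"},
}

report = compare_exports(legacy, migrated)
print(report["fidelity_pct"], report["mismatched"])
```

A report listing missing, unexpected, and mismatched records is more useful during validation than a single pass/fail flag, because each discrepancy has to be investigated and documented.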

Research Reagent Solutions: Essential Tools for Digital Transformation

Table 4: Key Technology Solutions for Legacy System Modernization in Cell Therapy

| Solution Category | Specific Technologies | Function in Modernization | Application in Cell Therapy |
|---|---|---|---|
| Cloud Platforms | Microsoft Azure PaaS, AWS Modernization Accelerators | Provides scalable infrastructure with built-in compliance features | Enables real-time process data management with regulatory support |
| Process Automation | Cellares Cell Shuttle, Ori Biotech systems | Closed, automated processing with integrated data capture | Reduces manual intervention and improves batch consistency |
| Digital Twin Technology | DataHow software, UCL CAR-T digital twin | Virtual process modeling and optimization | Predicts cell growth and optimizes culture conditions without risk to actual product |
| Data Analytics | Machine learning algorithms, PAT data integration | Identifies critical process parameters and correlations | Enables real-time release testing and predictive quality control |
| Containerization | Docker, Kubernetes | Encapsulates legacy applications for better management | Maintains specific legacy functionalities while enabling cloud deployment |

Visualizing the Legacy System Modernization Workflow

The following diagram illustrates the structured approach for assessing and modernizing legacy systems in cell therapy manufacturing:

Diagram 1: Legacy System Modernization Workflow for Cell Therapy. This workflow outlines a structured approach to modernizing legacy systems, beginning with comprehensive assessment, through strategy development and implementation, culminating in enhanced capabilities that support scalable, compliant cell therapy manufacturing.

The modernization of legacy software systems represents not merely a technical upgrade but a strategic imperative for cell therapy organizations seeking commercial viability and scalable manufacturing. The data clearly demonstrates that legacy system constraints directly impact critical manufacturing metrics: they increase costs, limit scalability, compromise data integrity, and ultimately restrict patient access to transformative therapies.

The path forward requires a deliberate, structured approach to modernization that aligns technical capabilities with business objectives. By leveraging appropriate migration methodologies—whether replatforming, refactoring, or complete re-engineering—organizations can transition from legacy constraints to modern, data-driven manufacturing platforms. This transformation enables implementation of the real-time process control, advanced analytics, and digital twin technologies that are rapidly becoming standard in advanced therapy manufacturing [2].

For researchers, scientists, and drug development professionals, the message is clear: addressing legacy system limitations is not an IT concern alone, but a fundamental requirement for advancing cell therapy from a research curiosity to a commercially viable, broadly accessible treatment modality. The protocols and frameworks presented here provide a foundation for organizations to systematically address these challenges while maintaining regulatory compliance and product quality throughout the transition.

The advancement of cell therapy represents a frontier in modern medicine, but its complexity introduces significant challenges in manufacturing and quality control. Unlike traditional pharmaceuticals, cell therapies involve living, patient-specific products that require meticulous tracking and control throughout their lifecycle. This Application Note examines two critical digital systems, Laboratory Information Management Systems (LIMS) and Quality Management Systems (QMS), which form the technological backbone for maintaining process control and data integrity in cell therapy research and production. We provide a structured overview of these systems, their interactions, and practical protocols for their evaluation and implementation, written for drug development professionals evaluating cell therapy software.

Software System Fundamentals

Laboratory Information Management Systems (LIMS)

A Laboratory Information Management System (LIMS) is a software platform that transforms laboratory operations by managing samples, automating tasks, and enforcing standard procedures [23]. In the context of cell therapy, LIMS functions as the digital backbone, ensuring the integrity of the chain of identity from patient sample collection through genetic modification and final product administration [24]. Modern LIMS are characterized by features such as sample management, workflow automation, instrument integration, and robust data management capabilities that are essential for regulatory compliance [25].

Quality Management Systems (QMS)

A Quality Management System (QMS) is a formalized system that documents processes, procedures, and responsibilities for ensuring products or services consistently meet customer and regulatory requirements [26]. In cell therapy, a QMS provides the framework for quality assurance, covering essential processes such as document control, corrective and preventive actions (CAPA), audit management, and change control [27]. By implementing a robust QMS, organizations can reduce risks that could impact product safety and quality, which in turn directly affects patient safety [26].

System Interrelationships in Cell Therapy

In cell therapy workflows, LIMS and QMS do not operate in isolation but function as interconnected components of a larger digital ecosystem. LIMS manages the operational data and sample tracking throughout the complex manufacturing process, while QMS ensures these processes are performed in a compliant, quality-controlled manner. This integration is crucial for maintaining both product quality and regulatory compliance throughout the cell therapy lifecycle.

Table: Core Functions of LIMS and QMS in Cell Therapy

| System | Primary Focus | Key Functions in Cell Therapy | Data Integrity Contribution |
|---|---|---|---|
| LIMS | Operational Execution | Sample genealogy tracking, workflow automation, instrument integration, inventory management | Ensures data capture at point of generation, maintains chain of identity, provides audit trails for all sample manipulations [24] [25] |
| QMS | Quality Assurance | Document control, CAPA, audit management, change control, employee training, compliance monitoring | Ensures processes are performed consistently, manages deviations, provides quality oversight and documentation [27] [28] |
| Integrated System | Holistic Process Control | Connected data flows, automated quality event triggering, unified reporting | Creates complete digital thread from process to quality data, enabling comprehensive lot release decisions [29] |

Essential Software Capabilities for Cell Therapy

Specialized LIMS Requirements

Cell therapy laboratories have requirements that distinguish them from traditional laboratory environments and necessitate specialized LIMS capabilities:

- Comprehensive Chain of Identity Tracking: Maintenance of perfect traceability from donor sample through every modification step back to the patient is fundamental. A single break in this chain can invalidate months of work and endanger patient safety [24].

- Sample Genealogy Management: Advanced systems must handle autologous and allogeneic workflows differently, tracking complex relationships between starting materials, intermediate products, and final therapeutic doses [24].

- Flexible Workflow Automation: Platforms must accommodate different therapeutic modalities (CAR-T protocols differ fundamentally from TIL therapies) without forcing artificial standardization [24].

- Instrument Integration: Seamless connectivity with specialized equipment like flow cytometers, bioreactors, and cell counters is essential for automating data capture and reducing transcription errors [24] [25].
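Sample genealogy can be pictured as a tree in which every derived sample points back to its parent and inherits the chain of identity of the starting material. The sketch below covers only the simple single-patient (autologous-style) lineage. The class, method names, and identifiers are hypothetical and do not reflect any particular LIMS data model.

```python
class Genealogy:
    """Tracks parent-child relationships between samples for one workflow."""

    def __init__(self):
        self._parent = {}  # sample_id -> parent sample_id (None for starting material)
        self._coi = {}     # sample_id -> chain of identity identifier

    def register_starting_material(self, sample_id, coi_id):
        if sample_id in self._coi:
            raise ValueError(f"sample already registered: {sample_id}")
        self._parent[sample_id] = None
        self._coi[sample_id] = coi_id

    def derive(self, parent_id, child_id):
        """Register a child sample; it inherits the parent's CoI identifier."""
        if parent_id not in self._coi:
            raise KeyError(f"unknown parent sample: {parent_id}")
        if child_id in self._coi:
            raise ValueError(f"sample already registered: {child_id}")
        self._parent[child_id] = parent_id
        self._coi[child_id] = self._coi[parent_id]

    def lineage(self, sample_id):
        """Return the path from a sample back to its starting material."""
        chain = []
        current = sample_id
        while current is not None:
            chain.append(current)
            current = self._parent[current]
        return chain

    def coi_of(self, sample_id):
        return self._coi[sample_id]


g = Genealogy()
g.register_starting_material("APH-1", "COI-DEMO-0001")  # placeholder identifiers
g.derive("APH-1", "TCELL-1")
g.derive("TCELL-1", "CART-1")
g.derive("CART-1", "DOSE-1")
print(g.lineage("DOSE-1"), g.coi_of("DOSE-1"))
```

Refusing to register a child whose parent is unknown is the point of the design: a sample without a recorded origin would be a break in the chain. Allogeneic workflows, where one donor lot feeds many recipients, would need a richer model than this sketch provides.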

Critical QMS Features

For cell therapy applications, QMS platforms must provide specific functionality to address the unique regulatory and quality challenges:

- Process Flexibility: The ability to respond to evolving customer requirements, market conditions, and organizational needs is essential in the dynamic cell therapy landscape [28].

- Built-in Compliance Support: Preconfigured forms and templates for regulations such as FDA 21 CFR Part 11, ISO 13485, and GxP requirements significantly reduce the time and effort required for compliance documentation [28] [29].

- Corrective and Preventive Actions (CAPA) Management: Robust systems for identifying, investigating, and addressing quality events are critical for continuous improvement and regulatory compliance [27].

- Expandable Systems: Scalable architecture that can grow with organizational needs prevents costly system migrations as product pipelines mature and manufacturing scales [28].

Digital Ecosystem Architecture

The integration between LIMS and QMS creates a digital ecosystem that enhances both operational efficiency and regulatory compliance. This architectural approach enables real-time quality monitoring, automated quality event creation, and comprehensive data analysis across the entire cell therapy workflow.

Diagram: Digital Ecosystem Architecture for Cell Therapy
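Automated quality event creation can be sketched as a simple publish/subscribe link: the LIMS publishes each test result, and a handler opens a QMS deviation whenever a result is out of specification. Both classes below are in-memory stand-ins written for this example. Real LIMS and QMS products expose their own integration interfaces, which are not modeled here, and the viability specification is an illustrative assumption.

```python
class QmsStub:
    """In-memory stand-in for a QMS deviation register."""

    def __init__(self):
        self.deviations = []

    def open_deviation(self, batch_id, description):
        deviation = {
            "id": len(self.deviations) + 1,
            "batch_id": batch_id,
            "description": description,
            "status": "open",
        }
        self.deviations.append(deviation)
        return deviation


class LimsStub:
    """In-memory stand-in for a LIMS that notifies subscribers of results."""

    def __init__(self):
        self._handlers = []

    def subscribe(self, handler):
        self._handlers.append(handler)

    def record_result(self, batch_id, test, value, low, high):
        result = {
            "batch_id": batch_id,
            "test": test,
            "value": value,
            "in_spec": low <= value <= high,
        }
        for handler in self._handlers:
            handler(result)
        return result


def link_lims_to_qms(lims, qms):
    """Open a QMS deviation automatically for every out-of-spec result."""
    def on_result(result):
        if not result["in_spec"]:
            qms.open_deviation(
                result["batch_id"],
                f"{result['test']} out of specification: {result['value']}",
            )
    lims.subscribe(on_result)


lims, qms = LimsStub(), QmsStub()
link_lims_to_qms(lims, qms)
lims.record_result("BATCH-001", "viability_pct", 91.0, low=70.0, high=100.0)
lims.record_result("BATCH-002", "viability_pct", 62.5, low=70.0, high=100.0)
print(qms.deviations)
```

Keeping the trigger logic in a separate linking function means neither system needs to know the other's internals, which mirrors the loose coupling usually wanted between operational and quality systems.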

Software Evaluation Protocol

Experimental Objective

To systematically evaluate and select LIMS and QMS software against cell therapy process control and data integrity requirements, using a structured assessment methodology.

Materials and Reagents

Table: Research Reagent Solutions for Software Evaluation

| Item | Function | Application in Evaluation |
|---|---|---|
| Validation Scripts | Predefined test cases for system functionality | Verify core capabilities against user requirements |
| Data Migration Tools | Utilities for transferring existing data | Assess implementation effort and data integrity during transfer |
| Performance Monitoring Software | Tools to measure system response times | Evaluate system performance under simulated load |
| Security Assessment Tools | Vulnerability scanners and audit log reviewers | Validate security controls and data protection measures |
| Regulatory Checklist | Compliance requirements specific to cell therapy | Confirm adherence to FDA, EMA, and other relevant guidelines |

Methodology

Requirement Weighting and Scoring

- Define Critical Requirements: Identify must-have capabilities specific to cell therapy, including chain of identity tracking, lot genealogy, and compliance with 21 CFR Part 11 [24] [29].

- Assign Priority Weights: Categorize requirements as high, medium, or low priority based on impact to cell therapy operations and regulatory compliance.

- Develop Scoring Rubric: Create a consistent scoring system (e.g., 1-5 scale) to evaluate how well each software platform meets the defined requirements.

- Conduct Vendor Demonstrations: Observe live demonstrations using cell therapy-specific use cases to assess real-world functionality.

- Perform Gap Analysis: Identify missing functionalities or required workarounds for each platform.

Technical Validation

- Architecture Assessment: Evaluate technical architecture, deployment models (cloud vs. on-premise), and integration capabilities [23] [26].

- Validation Testing: Execute predefined test scripts to verify critical functionality in sample management, audit trail generation, and electronic signatures.

- Performance Testing: Simulate peak load conditions representative of cell therapy manufacturing volumes to assess system stability.

- Security Validation: Verify user access controls, data encryption, and audit trail completeness per regulatory requirements [29].
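One way to make the audit trail portion of validation testing concrete is an automated completeness check over exported audit entries. The sketch below is a minimal illustration, not any vendor's API: the `AuditEntry` fields, the `find_audit_gaps` helper, and the rule that every update must carry a reason for change are assumptions that a site would replace with its own requirements.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Optional


@dataclass(frozen=True)
class AuditEntry:
    """Hypothetical shape of one exported audit trail row."""
    user_id: Optional[str]
    action: Optional[str]          # e.g. "create", "update", "delete"
    record_id: Optional[str]
    timestamp: Optional[datetime]
    reason: Optional[str] = None   # reason for change (assumed site policy)


REQUIRED_FIELDS = ("user_id", "action", "record_id", "timestamp")


def find_audit_gaps(entries: List[AuditEntry]) -> List[str]:
    """Return human-readable findings; an empty list means no gaps were found."""
    findings: List[str] = []
    previous_time: Optional[datetime] = None
    for index, entry in enumerate(entries):
        # Every entry must say who did what, to which record, and when.
        for field_name in REQUIRED_FIELDS:
            if getattr(entry, field_name) in (None, ""):
                findings.append(f"entry {index}: missing {field_name}")
        # Assumed policy: modifications must be justified.
        if entry.action == "update" and not entry.reason:
            findings.append(f"entry {index}: update without reason for change")
        # Entries are expected in chronological order.
        if entry.timestamp is not None:
            if previous_time is not None and entry.timestamp < previous_time:
                findings.append(f"entry {index}: timestamp earlier than previous entry")
            previous_time = entry.timestamp
    return findings


# Example: the second entry lacks a reason, the third lacks a user and is out of order.
start = datetime(2025, 1, 1, 9, 0, tzinfo=timezone.utc)
example_entries = [
    AuditEntry("jdoe", "create", "S-00001", start),
    AuditEntry("jdoe", "update", "S-00001", start + timedelta(minutes=5)),
    AuditEntry(None, "update", "S-00001", start + timedelta(minutes=1), reason="typo"),
]
example_findings = find_audit_gaps(example_entries)
```

A check like this could run as one of the predefined validation scripts against each candidate platform's audit export, so that completeness is compared on the same evidence.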

Total Cost of Ownership Analysis

- Initial Implementation Costs: Quantify costs for implementation services, data migration, and initial training.

- Ongoing Operational Costs: Calculate subscription/maintenance fees, IT support requirements, and administrative overhead.

- Customization Expenses: Estimate costs for required configurations or custom developments specific to cell therapy workflows.

- Return on Investment Projection: Model efficiency gains, error reduction benefits, and compliance cost avoidance.
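The cost categories above combine into a simple arithmetic model. The following sketch assumes a flat annual cost and benefit with no discounting; the `CostModel` class and all figures in the example are hypothetical placeholders, not vendor pricing.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CostModel:
    # One-time costs
    implementation: float
    data_migration: float
    training: float
    customization: float
    # Recurring costs per year
    annual_subscription: float
    annual_it_support: float
    annual_admin: float
    # Modeled yearly benefit (efficiency gains, error reduction, compliance cost avoidance)
    annual_benefit: float

    def initial_cost(self) -> float:
        return self.implementation + self.data_migration + self.training + self.customization

    def annual_cost(self) -> float:
        return self.annual_subscription + self.annual_it_support + self.annual_admin

    def tco(self, years: int) -> float:
        """Total cost of ownership over the given horizon."""
        return self.initial_cost() + self.annual_cost() * years

    def roi(self, years: int) -> float:
        """(Total benefit - TCO) / TCO over the given horizon."""
        total_cost = self.tco(years)
        return (self.annual_benefit * years - total_cost) / total_cost

    def payback_years(self) -> Optional[float]:
        """Years until net yearly benefit repays the initial cost, or None if it never does."""
        net_per_year = self.annual_benefit - self.annual_cost()
        if net_per_year <= 0:
            return None
        return self.initial_cost() / net_per_year


# Hypothetical figures for illustration only.
example = CostModel(
    implementation=150_000, data_migration=40_000, training=20_000, customization=40_000,
    annual_subscription=60_000, annual_it_support=25_000, annual_admin=15_000,
    annual_benefit=200_000,
)
```

With these placeholder inputs the five-year TCO is 750,000, the five-year ROI is about 33%, and payback takes 2.5 years. Running the same model for each shortlisted platform keeps the cost comparison on a consistent basis.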

Expected Results and Interpretation

The evaluation protocol should yield a comprehensive comparison of software platforms, highlighting strengths and gaps relative to cell therapy requirements. Platforms scoring below the agreed threshold on any mandatory requirement should be eliminated from consideration, while the remaining options should be ranked by total weighted score.

Table: Sample Software Platform Evaluation Results

| Evaluation Criteria | Weight | Platform A | Platform B | Platform C | Platform D |
|---|---|---|---|---|---|
| Chain of Identity Management | 10% | 5/5 | 4/5 | 3/5 | 5/5 |
| Regulatory Compliance (21 CFR Part 11) | 10% | 5/5 | 5/5 | 4/5 | 5/5 |
| Workflow Flexibility | 8% | 4/5 | 3/5 | 5/5 | 4/5 |
| Integration Capabilities | 8% | 5/5 | 4/5 | 3/5 | 5/5 |
| Audit Trail Completeness | 8% | 5/5 | 5/5 | 4/5 | 5/5 |
| Implementation Timeline | 6% | 3/5 | 4/5 | 5/5 | 3/5 |
| Total Cost of Ownership | 10% | 3/5 | 4/5 | 5/5 | 3/5 |
| Vendor Support Quality | 7% | 5/5 | 4/5 | 3/5 | 5/5 |
| Mobile Accessibility | 5% | 4/5 | 3/5 | 4/5 | 5/5 |
| Reporting Capabilities | 8% | 5/5 | 4/5 | 4/5 | 5/5 |
| Weighted Total Score | 100% | 4.4/5 | 4.1/5 | 3.9/5 | 4.6/5 |
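The scoring and elimination logic described above can be sketched in a few lines. The weights and scores below are copied from the sample table; the choice of mandatory criteria and the minimum score of 4 are assumptions made for illustration. Note that the ten listed weights sum to 80% rather than 100%, so this sketch divides by the sum of the weights, and the totals it produces can differ slightly from the illustrative figures in the table.

```python
from typing import List, Tuple

PLATFORMS = ("Platform A", "Platform B", "Platform C", "Platform D")

# (criterion, weight in percent, scores for platforms A-D on a 1-5 scale)
CRITERIA: List[Tuple[str, int, Tuple[int, int, int, int]]] = [
    ("Chain of Identity Management", 10, (5, 4, 3, 5)),
    ("Regulatory Compliance (21 CFR Part 11)", 10, (5, 5, 4, 5)),
    ("Workflow Flexibility", 8, (4, 3, 5, 4)),
    ("Integration Capabilities", 8, (5, 4, 3, 5)),
    ("Audit Trail Completeness", 8, (5, 5, 4, 5)),
    ("Implementation Timeline", 6, (3, 4, 5, 3)),
    ("Total Cost of Ownership", 10, (3, 4, 5, 3)),
    ("Vendor Support Quality", 7, (5, 4, 3, 5)),
    ("Mobile Accessibility", 5, (4, 3, 4, 5)),
    ("Reporting Capabilities", 8, (5, 4, 4, 5)),
]

# Assumed for illustration: which criteria are mandatory and the minimum acceptable score.
MANDATORY = {"Chain of Identity Management", "Regulatory Compliance (21 CFR Part 11)"}
MANDATORY_MIN_SCORE = 4


def weighted_score(platform_index: int) -> float:
    """Weighted average on the 1-5 scale, normalized by the sum of the weights."""
    total_weight = sum(weight for _, weight, _ in CRITERIA)
    weighted_sum = sum(weight * scores[platform_index] for _, weight, scores in CRITERIA)
    return weighted_sum / total_weight


def passes_mandatory(platform_index: int) -> bool:
    """True if every mandatory criterion meets the minimum score."""
    return all(
        scores[platform_index] >= MANDATORY_MIN_SCORE
        for name, _, scores in CRITERIA
        if name in MANDATORY
    )


def rank_platforms() -> List[Tuple[str, float]]:
    """Drop platforms that fail a mandatory criterion, then sort by weighted score."""
    survivors = [
        (name, weighted_score(index))
        for index, name in enumerate(PLATFORMS)
        if passes_mandatory(index)
    ]
    return sorted(survivors, key=lambda item: item[1], reverse=True)


for name, score in rank_platforms():
    print(f"{name}: {score:.2f}/5")
```

Under these assumptions Platform C is eliminated because its chain of identity score falls below the mandatory minimum, even though its cost and timeline scores are the highest. Separating the elimination step from the ranking step prevents strong scores on secondary criteria from masking a gap in a must-have capability.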

Implementation Protocol

Experimental Objective

To establish a validated, operational LIMS and/or QMS platform that supports cell therapy manufacturing with comprehensive process control and data integrity capabilities.

Methodology

Pre-Implementation Planning

- Stakeholder Alignment: Secure commitment from quality, manufacturing, IT, and senior management stakeholders.

- System Architecture Design: Create detailed architecture specifications addressing integration points between LIMS, QMS, and existing systems.

- Validation Master Plan: Develop a comprehensive plan outlining validation approach, deliverables, and acceptance criteria.

- Data Migration Strategy: Plan and test migration of existing data, with focus on maintaining data integrity and chain of identity.
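Testing a data migration usually involves reconciling the source and target record sets. The sketch below shows one possible approach, comparing records by key and by a content digest; the `row_digest` and `reconcile` helpers and the record layout are hypothetical, and a real migration would also need to account for intentional field transformations.

```python
import hashlib
import json
from typing import Dict, List, Mapping


def row_digest(row: Mapping[str, object]) -> str:
    """SHA-256 over a canonical JSON form, so key order does not affect the result."""
    payload = json.dumps(row, sort_keys=True, default=str).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def reconcile(
    source: Dict[str, Mapping[str, object]],
    target: Dict[str, Mapping[str, object]],
) -> Dict[str, List[str]]:
    """Compare legacy records with migrated records, keyed by record identifier."""
    source_keys, target_keys = set(source), set(target)
    return {
        # Present in the legacy system but absent after migration.
        "missing": sorted(source_keys - target_keys),
        # Present after migration but never in the legacy system.
        "unexpected": sorted(target_keys - source_keys),
        # Present in both, but the content differs.
        "changed": sorted(
            key for key in source_keys & target_keys
            if row_digest(source[key]) != row_digest(target[key])
        ),
    }


# Example with hypothetical records: one altered chain of identity, one dropped sample.
legacy = {
    "S-001": {"coi_id": "COI-A1", "type": "apheresis"},
    "S-002": {"coi_id": "COI-A1", "type": "activated"},
    "S-003": {"coi_id": "COI-B7", "type": "apheresis"},
}
migrated = {
    "S-001": {"type": "apheresis", "coi_id": "COI-A1"},
    "S-002": {"coi_id": "COI-XX", "type": "activated"},
}
report = reconcile(legacy, migrated)
```

An empty report across all three categories is a reasonable acceptance criterion to record in the validation master plan for each migration dry run.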

Configuration and Customization

- Workflow Modeling: Map cell therapy-specific workflows (autologous and allogeneic) within the configured system.

- User Access Configuration: Establish role-based access controls aligned with organizational responsibilities and segregation of duties requirements.

- Interface Development: Build and test interfaces to instruments and other enterprise systems (ERP, MES, etc.).

- Documentation Setup: Configure document templates, SOP workflows, and quality records specific to cell therapy requirements.
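Role-based access with segregation of duties can be expressed as a small rule set that is checked whenever roles are assigned. The role names, permission names, and conflicting pairs below are assumptions chosen for illustration; an actual configuration would come from the organization's own responsibility matrix.

```python
from typing import Dict, FrozenSet, Iterable, List, Set, Tuple

# Hypothetical roles and the permissions each one grants.
ROLE_PERMISSIONS: Dict[str, FrozenSet[str]] = {
    "manufacturing_operator": frozenset({"record_batch_step", "view_batch"}),
    "qc_analyst": frozenset({"enter_qc_result", "view_batch"}),
    "qa_reviewer": frozenset({"approve_batch_record", "release_product", "view_batch"}),
}

# Pairs of permissions that one person should not hold at the same time.
CONFLICTING_PERMISSIONS: List[Tuple[str, str]] = [
    ("record_batch_step", "approve_batch_record"),
    ("enter_qc_result", "release_product"),
]


def permissions_for(roles: Iterable[str]) -> Set[str]:
    """Union of the permissions granted by each role; unknown roles grant nothing."""
    granted: Set[str] = set()
    for role in roles:
        granted |= ROLE_PERMISSIONS.get(role, frozenset())
    return granted


def is_allowed(roles: Iterable[str], permission: str) -> bool:
    return permission in permissions_for(roles)


def sod_violations(roles: Iterable[str]) -> List[Tuple[str, str]]:
    """Return every conflicting permission pair the role combination would grant."""
    granted = permissions_for(roles)
    return [pair for pair in CONFLICTING_PERMISSIONS if pair[0] in granted and pair[1] in granted]
```

Calling `sod_violations` before saving a user's role assignment lets the system reject combinations such as operator plus QA reviewer, rather than discovering the conflict during an audit.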

Validation Testing

- Installation Qualification: Verify proper software installation and infrastructure configuration.

- Operational Qualification: Confirm system operates according to design specifications using cell therapy use cases.

- Performance Qualification: Demonstrate system consistently performs required functions under normal operating conditions.

- User Acceptance Testing: Engage end-users to validate system functionality meets business needs.

Deployment and Training

- Phased Rollout: Implement system using a phased approach, beginning with pilot groups before full deployment.

- Training Program Development: Create role-based training materials and deliver comprehensive training to all users.

- Go-Live Support: Provide intensive support during initial deployment period to address issues promptly.

- Continuous Improvement: Establish mechanisms for collecting user feedback and implementing system enhancements.

Expected Results

A fully validated and operational LIMS/QMS that enables end-to-end tracking of cell therapy products, maintains complete data integrity, and supports regulatory compliance requirements. System performance should be measured against predefined Key Performance Indicators (KPIs) including sample processing time, documentation errors, audit preparation time, and user satisfaction scores.

The successful implementation of integrated LIMS and QMS platforms is fundamental to advancing cell therapy research and commercialization. These systems provide the digital infrastructure necessary to maintain product quality, ensure patient safety, and demonstrate regulatory compliance throughout the complex cell therapy lifecycle. As the field continues to evolve, the digital ecosystem must simultaneously enforce rigorous process controls while maintaining the flexibility to support innovative therapeutic approaches. The protocols and analyses presented in this Application Note provide a framework for selecting, implementing, and leveraging these critical systems to advance cell therapy research and development.

Implementing Digital Solutions: A Guide to Core Software Capabilities and Integration

A Laboratory Information Management System (LIMS) serves as the specialized digital backbone of modern laboratories, automating operations from sample submission through testing and final reporting [30]. At its core, a LIMS handles sample management, workflow automation, data collection, and regulatory compliance, significantly reducing manual errors and improving overall efficiency and data integrity [30]. In cell therapy, a LIMS is indispensable for process control and data integrity. It manages the complexity of tracking living cellular materials from the donor sample through every modification step and back to the patient, a process in which a single break in the chain of identity can invalidate months of work and endanger patient safety [24].

For cell therapy research and development, the functionality of a traditional LIMS must expand to meet unique demands. These systems must track donor screening, cell isolation, genetic modification, expansion, formulation, and cryopreservation while maintaining a complete audit trail [24] [31]. This capability is critical for complying with rigorous regulatory standards and frameworks such as FDA 21 CFR Part 11 for electronic records, GxP, and ISO/IEC 17025, which are foundational to gaining approval for advanced therapeutics [30] [32].

Quantitative Analysis of Leading LIMS Platforms

Selecting a LIMS requires a careful evaluation of its features against the specific needs of a cell therapy workflow. The table below provides a structured comparison of leading platforms, highlighting their specialization, key strengths, and implementation considerations for cell and gene therapy research.

Table 1: Comparative Analysis of Leading LIMS Platforms for Cell & Gene Therapy Research

| Platform | Specialization | Key Strengths | Implementation Timeline | Notable Features |
|---|---|---|---|---|
| LabWare LIMS [30] [33] | Broad enterprise-scale deployments in pharma, biotech, and manufacturing | High configurability, proven compliance track record (21 CFR Part 11, GLP), strong instrument integration | Often 6+ months for complex, enterprise-scale deployments [33] | Integrated LIMS & ELN suite, advanced workflow automation, multi-site data management |
| Thermo Fisher Core LIMS [33] | Highly regulated, enterprise-scale environments (Pharma, Biotech) | Native connectivity with Thermo Fisher instruments, advanced workflow builder, robust data security architecture | Several months, requiring significant IT support and planning [33] | Regulatory compliance readiness (GxP, FDA 21 CFR Part 11), cloud or on-prem deployment, multi-site support |
| LabVantage [30] [33] | Pharmaceutical R&D, Biobanking | Fully browser-based, integrated LIMS+ELN+SDMS+Analytics, highly configurable, global deployment support | Often 6+ months for full rollout [33] | Configurable workflows, sample and test lifecycle tracking, biobanking module |
| Scispot [24] | Cell & Gene Therapy Research | Purpose-built for cell therapy workflows, AI-powered analytics (Scibot), no-code configuration, specialized molecular biology tools | 6 to 12 weeks for deployment [24] | Sample genealogy visualization, automated data pipeline for instruments, CRISPR guide design and analysis |
| Benchling [24] | Biotechnology R&D | Cloud-based platform with combined ELN and LIMS, molecular biology tools for DNA sequence design | Not reported in the cited sources | Sample tracking across research workflows; requires SQL for configuration per user reports [24] |
| Autoscribe Matrix Gemini [33] | Mid-sized labs (e.g., veterinary diagnostics, food testing) | True configuration without coding, modular licensing, cost-efficient scalability | Not reported in the cited sources | Visual configuration tools, template library for common industries, flexible reporting |

Application Note: Implementing a LIMS for Cell Therapy Workflows

Experimental Protocol for Tracking a CAR-T Cell Therapy Batch

Objective: To establish a standardized, LIMS-managed protocol for tracking a single patient-specific CAR-T cell therapy batch from apheresis through to final cryopreservation, ensuring data integrity and chain of identity.

Materials and Reagents:

Table 2: Research Reagent Solutions for Cell Therapy Workflows

| Item | Function in the Protocol |
|---|---|
| Patient Apheresis Material | The source material containing T-cells for genetic modification. |
| Cell Culture Media | Supports the growth, activation, and expansion of T-cells throughout the process. |
| Activation Beads/Cytokines | Activates T-cells to prepare them for genetic modification. |
| Viral Vector | Delivers the CAR (Chimeric Antigen Receptor) gene to the T-cells. |
| QC Assay Reagents | Used in flow cytometry (phenotype), qPCR (vector copy number), and viability tests to ensure product quality and safety. |
| Cryopreservation Medium | Preserves the final CAR-T product for storage and transport. |

Methodology:

Sample Registration and Login:

- Upon receipt, the patient's apheresis material is logged into the LIMS as the "Starting Material."

- The system automatically generates a unique barcode and a Chain of Identity ID that will follow the sample through all subsequent steps [34] [31].

- Donor/patient information and associated consent documentation are digitally linked to this sample record in the LIMS [31].

T-Cell Activation & Transduction:

- The protocol for T-cell activation is loaded from the LIMS-integrated Electronic Lab Notebook (ELN).

- Technicians are guided through the workflow, with the LIMS recording the lot numbers of reagents like activation beads and the viral vector, creating a complete audit trail [30] [32].

- The LIMS records the "parent" sample (apheresis material) and creates new sample records for the "activated T-cells" and subsequently "transduced CAR-T cells," establishing a clear sample genealogy [35] [24].
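The registration and genealogy steps described so far can be modeled with a parent pointer on each sample record and a Chain of Identity ID that every derived sample inherits. The sketch below is a simplified in-memory illustration; the `Sample` and `SampleRegistry` classes, the ID formats, and the lot numbers are hypothetical and do not reflect any particular LIMS data model.

```python
import uuid
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Sample:
    sample_id: str
    coi_id: str                      # Chain of Identity ID shared by the whole lineage
    sample_type: str
    parent_id: Optional[str] = None  # None for the starting material
    reagent_lots: Dict[str, str] = field(default_factory=dict)


class SampleRegistry:
    def __init__(self) -> None:
        self._samples: Dict[str, Sample] = {}

    def _next_id(self) -> str:
        return f"S-{len(self._samples) + 1:05d}"

    def register_starting_material(self) -> Sample:
        """Log received apheresis material and mint a new Chain of Identity ID."""
        coi_id = f"COI-{uuid.uuid4().hex[:12].upper()}"
        sample = Sample(self._next_id(), coi_id, "Starting Material")
        self._samples[sample.sample_id] = sample
        return sample

    def derive(self, parent_id: str, sample_type: str,
               reagent_lots: Optional[Dict[str, str]] = None) -> Sample:
        """Create a child record that inherits the parent's Chain of Identity ID."""
        parent = self._samples[parent_id]  # KeyError if the parent was never registered
        child = Sample(self._next_id(), parent.coi_id, sample_type,
                       parent_id=parent.sample_id, reagent_lots=dict(reagent_lots or {}))
        self._samples[child.sample_id] = child
        return child

    def lineage(self, sample_id: str) -> List[Sample]:
        """Walk parent pointers back to the starting material; returns root first."""
        chain: List[Sample] = []
        current: Optional[Sample] = self._samples[sample_id]
        while current is not None:
            chain.append(current)
            current = self._samples[current.parent_id] if current.parent_id else None
        return list(reversed(chain))


# Example with hypothetical lot numbers.
registry = SampleRegistry()
apheresis = registry.register_starting_material()
activated = registry.derive(apheresis.sample_id, "Activated T-cells",
                            {"activation_beads": "LOT-AB-001"})
transduced = registry.derive(activated.sample_id, "Transduced CAR-T cells",
                             {"viral_vector": "LOT-VV-042"})
```

Because a child can only be created from a registered parent, the chain of identity cannot be broken by a sample appearing without provenance, and `lineage` reconstructs the full history of any sample for review.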

In-Process QC and Expansion:

- The LIMS scheduler automatically triggers required quality control tests during the expansion phase in the bioreactor.

- Instruments like flow cytometers and cell counters are integrated with the LIMS, allowing for automatic data capture of cell count, viability, and transduction efficiency, eliminating manual transcription errors [34] [25].

- Results that fall outside pre-defined specifications are flagged in real-time by the system for immediate review [24].
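Flagging out-of-specification results amounts to comparing each captured value against pre-defined limits. The following sketch illustrates the idea; the `Spec` class, the test names, and every numeric limit are placeholder assumptions for illustration and are not release criteria from any product or guideline.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Spec:
    low: Optional[float] = None   # inclusive lower limit, if any
    high: Optional[float] = None  # inclusive upper limit, if any
    unit: str = ""


# Placeholder limits for illustration only.
SPECS: Dict[str, Spec] = {
    "viability_pct": Spec(low=70.0, unit="%"),
    "transduction_efficiency_pct": Spec(low=20.0, unit="%"),
    "vector_copy_number": Spec(high=5.0, unit="copies/cell"),
}


def evaluate(results: Dict[str, float]) -> List[str]:
    """Return one flag per missing or out-of-specification result."""
    flags: List[str] = []
    for name, spec in SPECS.items():
        if name not in results:
            # A required test with no captured value is also a finding.
            flags.append(f"{name}: missing result")
            continue
        value = results[name]
        if spec.low is not None and value < spec.low:
            flags.append(f"{name}: {value} {spec.unit} below lower limit {spec.low} {spec.unit}")
        if spec.high is not None and value > spec.high:
            flags.append(f"{name}: {value} {spec.unit} above upper limit {spec.high} {spec.unit}")
    return flags


# Example: low viability and a transduction result that was never captured.
example_flags = evaluate({"viability_pct": 65.0, "vector_copy_number": 2.1})
```

Treating a missing result as a flag, rather than silently skipping it, matters when instrument integration fails and a value never reaches the system.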

Final Formulation and Release:

- The final CAR-T product is harvested and formulated. The LIMS tracks the final fill and cryopreservation process, recording the exact storage location (e.g., freezer, shelf, box coordinates) [35].

- A Certificate of Analysis is automatically generated by the LIMS, compiling all data from the entire manufacturing process [36] [25].

- The system ensures a complete chain of custody is documented, and all electronic records are secured with electronic signatures in compliance with 21 CFR Part 11 before the product is released [30] [32].
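The release step can be thought of as a gate that lists everything still blocking release. The sketch below binds each signature to a digest of the record content, so a signature stops counting if the record changes afterwards. The record layout, the required signature meanings, and the rule that review and approval come from different people are assumptions for illustration; this is not a compliant electronic signature implementation.

```python
import hashlib
import json
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any, Dict, List, Sequence


def record_digest(record: Dict[str, Any]) -> str:
    """SHA-256 over a canonical JSON form of the batch record."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"), default=str)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class Signature:
    signer_name: str
    meaning: str         # e.g. "review" or "approval"
    signed_at: datetime
    digest: str          # digest of the record content at signing time


def sign(record: Dict[str, Any], signer_name: str, meaning: str) -> Signature:
    return Signature(signer_name, meaning, datetime.now(timezone.utc), record_digest(record))


REQUIRED_MEANINGS = ("review", "approval")  # assumed site policy


def release_blockers(record: Dict[str, Any], signatures: Sequence[Signature]) -> List[str]:
    """Return reasons the batch cannot be released; an empty list means the gate is open."""
    blockers: List[str] = []
    for test_name, passed in record.get("qc_results", {}).items():
        if not passed:
            blockers.append(f"QC test not passed: {test_name}")
    # Only signatures made over the current record content are counted.
    current = record_digest(record)
    valid = [s for s in signatures if s.digest == current]
    if len(valid) != len(signatures):
        blockers.append("a signature does not match the current record content")
    for meaning in REQUIRED_MEANINGS:
        if not any(s.meaning == meaning for s in valid):
            blockers.append(f"missing signature: {meaning}")
    reviewers = {s.signer_name for s in valid if s.meaning == "review"}
    approvers = {s.signer_name for s in valid if s.meaning == "approval"}
    if reviewers & approvers:
        blockers.append("review and approval signed by the same person")
    return blockers
```

Returning a list of blockers instead of a single yes/no gives reviewers an actionable summary and leaves a clear record of why a release attempt was refused.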

Workflow Visualization

The core logical workflow of a LIMS managing a cell therapy process runs from sample registration, through T-cell activation and transduction, in-process QC and expansion, to final formulation and product release.

In the fast-evolving field of cell therapy, a robust Laboratory Information Management System (LIMS) is far more than a data repository; it is a critical enabler of process control, data integrity, and regulatory compliance. By implementing a platform specifically designed for the unique challenges of cell and gene therapy workflows, such as maintaining an unbroken chain of identity and managing complex sample genealogy, research organizations can significantly reduce errors, streamline operations, and accelerate the journey of life-saving therapies from the laboratory to the patient [24] [31]. As the industry moves toward more personalized and complex treatments, the strategic selection and implementation of a purpose-built LIMS will remain the backbone of successful and compliant cell therapy development.