Strategic Optimization of Culture Systems: A Guide to Maximizing Yield and Purity in Biopharmaceutical Production

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on optimizing culture systems to achieve high target yield and purity. It covers foundational principles, from host selection and cost drivers to the latest experimental and computational methodologies, including AI/ML-driven strategies. The content further delves into practical troubleshooting, advanced optimization techniques, and validation frameworks to ensure robust, scalable, and economically viable bioprocesses for therapeutic protein production.

Laying the Groundwork: Core Principles and Economic Drivers of Culture System Performance

The Critical Link Between Culture Medium and Production Costs

In the realm of biotechnological production for pharmaceuticals, biologics, and sustainable foods, optimizing culture systems is paramount for achieving target yield and purity. The composition of cell culture media represents not merely a technical variable but the most significant cost driver in many bioprocesses. Comprehensive cost-analysis studies reveal that culture medium can account for up to 80% of the direct protein production cost [1]. For emerging industries like cultured meat, medium-related costs similarly represent one of the primary economic hurdles to scalable production [2]. This financial reality establishes culture medium optimization as an essential research priority rather than a peripheral concern. This Application Note delineates the quantitative relationship between medium formulation and production economics while providing validated experimental protocols to systematically optimize media for enhanced yield, purity, and cost-efficiency.

Quantitative Impact of Medium Optimization

Table 1: Documented Economic and Performance Benefits of Culture Medium Optimization

| Production System | Optimization Strategy | Performance Improvement | Cost Impact | Citation |
|---|---|---|---|---|
| CHO-K1 cells (serum-free medium) | Biology-aware machine learning on 57 components | ~60% higher cell concentration vs. commercial media | Not specified | [3] |
| Pseudoxanthomonas indica H32 bacterium | Statistical mixture design | Specific growth rate (µ)=0.439 h⁻¹; Xmax=8.00 | Reduction from ~$1.18/L to $0.10/L | [4] |
| Recombinant protein production (therapeutic) | Holistic medium component balancing | Up to 10-fold yield increase vs. basal formulations | Medium = 80% of production cost | [5] [1] |
| Peripheral Blood Mononuclear Cells (PBMCs) | Bayesian optimization of 4 commercial media blends | Maintained >70% viability after 72 hours | 3-30x fewer experiments than traditional DoE | [6] |

Table 2: Cost Structure Analysis in Bioprocessing

| Cost Component | Typical Range of Contribution | Factors Influencing Impact | Citation |
|---|---|---|---|
| Culture medium and nutrients | 30-80% of total production cost | Raw material purity, supplementation strategy, formulation complexity | [4] [1] |
| Downstream processing | 15-50% of total production cost | Yield and purity at harvest, required purification steps | [1] |
| Energy and infrastructure | 10-25% of total production cost | Process intensity, duration, equipment requirements | [2] |
| Quality control and analytics | 5-15% of total production cost | Regulatory requirements, product specifications | [7] |

Advanced Optimization Methodologies

Machine Learning-Driven Approaches

Traditional optimization methods like One-Factor-at-a-Time (OFAT) and Design of Experiments (DoE) often fail to capture the complex, nonlinear interactions between culture parameters and medium components [7]. Machine learning (ML) has emerged as a powerful approach for modeling these relationships and forecasting critical quality attributes. Biology-aware active learning platforms can overcome limitations of traditional ML when dealing with biological variability and experimental noise [3]. These approaches employ probabilistic surrogate models (Gaussian Processes) that are particularly well-suited for biological applications as they can include prior beliefs about the system, incorporate process noise, and obtain confidence in predictions by associating higher uncertainty with unexplored parts of the design space [6].

For monoclonal antibody production, where charge heterogeneity is a critical quality attribute, ML models effectively link process conditions to charge variant profiles. These models analyze factors including pH, temperature, cell culture duration, and nutrient composition to provide important insights into the intricate connections between culture parameters and critical quality attributes [7]. The implementation of Bayesian Optimization-based iterative frameworks for experimental design has demonstrated the ability to identify improved media compositions with 3-30 times fewer experiments than standard DoE approaches, dramatically reducing development costs and time [6].

Active Learning and Bayesian Optimization

Active learning represents a paradigm shift in medium optimization by iteratively selecting the most informative data points for experimental validation, thereby optimizing model performance with minimal labeled data [1]. This strategy is particularly effective for medium optimization where the combinatorial search space is prohibitively large and experimental resources are limited. The Bayesian Optimization workflow integrates experimental feedback and model training in a reinforcing cycle: a surrogate model is fitted to the available data, an acquisition function proposes the next experiments, and the new results are used to update the model.

This approach balances exploration of uncharted regions of the design space with exploitation of promising regions identified through previous experiments, ensuring comprehensive optimization while minimizing experimental burden [6]. For complex media containing 57 components, as demonstrated in CHO-K1 cell culture optimization, this method successfully identified formulations yielding approximately 60% higher cell concentration than commercial alternatives [3].

Experimental Protocols for Medium Optimization

Protocol 1: Bayesian Optimization for Complex Media Formulation

Purpose: To efficiently optimize multi-component culture media using Bayesian active learning.

Materials:

- Basal medium components

- Supplement stock solutions

- Cell culture system (bioreactor or multi-well plates)

- Analytics for response measurement (cell counting, product titer, etc.)

- Bayesian optimization software platform

Procedure:

- Define Design Space: Identify all medium components to be optimized and their concentration ranges.

- Establish Response Variables: Define primary optimization targets (e.g., cell density, product titer, specific productivity).

- Execute Initial Experiment Set: Perform initial screening experiments (typically 20-50% of total experimental budget).

- Build Gaussian Process Model: Input initial data to establish surrogate model relationships between component concentrations and responses.

- Iterative Optimization Cycle:

  - Use acquisition function to identify next most informative experiments

  - Perform selected experiments

  - Update model with new results

  - Assess convergence criteria

- Validation: Confirm optimal formulation in biological replicates.

Applications: This protocol successfully optimized a 57-component serum-free medium for CHO-K1 cells, requiring 364 media variations to identify a formulation with 60% higher cell concentration than commercial alternatives [3].
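The iterative cycle in Protocol 1 can be sketched in a few lines. The example below is a minimal illustration, not the platform used in the cited work: it fits a Gaussian Process with a squared-exponential kernel, scores random candidate formulations with the expected-improvement acquisition function, and "runs" the chosen experiment. The kernel, length scale, noise level, two-component design space, and the `run_experiment` response function are all assumptions made for illustration; in practice `run_experiment` would be replaced by a real culture run.

```python
import math
import numpy as np


def rbf_kernel(a, b, length_scale=0.2, variance=1.0):
    """Squared-exponential kernel between the rows of a and the rows of b."""
    sq_dist = np.sum((a[:, None, :] - b[None, :, :]) ** 2, axis=-1)
    return variance * np.exp(-0.5 * sq_dist / length_scale ** 2)


def gp_posterior(x_train, y_train, x_query, noise=1e-2):
    """Posterior mean and standard deviation of a zero-mean GP (prior variance 1)."""
    k_tt = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    k_tq = rbf_kernel(x_train, x_query)
    chol = np.linalg.cholesky(k_tt)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_train))
    mean = k_tq.T @ alpha
    v = np.linalg.solve(chol, k_tq)
    var = np.clip(1.0 - np.sum(v ** 2, axis=0), 1e-12, None)
    return mean, np.sqrt(var)


def expected_improvement(mean, std, best):
    """Expected improvement over the best response observed so far (maximization)."""
    z = (mean - best) / std
    pdf = np.exp(-0.5 * z ** 2) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    return (mean - best) * cdf + std * pdf


def run_experiment(x, rng):
    """Hypothetical stand-in for a culture run; x holds two concentrations scaled to [0, 1]."""
    signal = np.exp(-((x[0] - 0.7) ** 2 + (x[1] - 0.3) ** 2) / 0.05)
    return signal + rng.normal(0.0, 0.02)  # measurement noise


def optimise(n_init=8, n_iter=12, seed=0):
    rng = np.random.default_rng(seed)
    # Initial screening experiments spread over the design space.
    x = rng.uniform(0.0, 1.0, size=(n_init, 2))
    y = np.array([run_experiment(xi, rng) for xi in x])
    for _ in range(n_iter):
        candidates = rng.uniform(0.0, 1.0, size=(500, 2))
        centred = y - y.mean()  # centre responses for the zero-mean GP
        mean, std = gp_posterior(x, centred, candidates)
        ei = expected_improvement(mean, std, centred.max())
        x_next = candidates[np.argmax(ei)]  # most informative next experiment
        y_next = run_experiment(x_next, rng)
        x = np.vstack([x, x_next])  # update the data set with the new result
        y = np.append(y, y_next)
    best = np.argmax(y)
    return x[best], y[best], x, y


if __name__ == "__main__":
    best_x, best_y, _, _ = optimise()
    print("best formulation (scaled):", best_x, "response:", best_y)
```

Expected improvement is only one possible acquisition function; it is used here because it makes the exploration-exploitation trade-off explicit through the `std` and `mean - best` terms. A real 57-component problem would also need a proper convergence criterion rather than a fixed iteration count.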

Protocol 2: Cost-Effective Medium Development for Microbial Systems

Purpose: To design economically optimized media for industrial-scale microbial cultivation.

Materials:

- Alternative carbon and nitrogen sources

- Mineral salt solutions

- Vitamin stocks

- Design-Expert or similar statistical software

Procedure:

- Component Screening: Evaluate different carbon sources (sucrose, glucose) and nitrogen sources (yeast extract, peptones, ammonium salts).

- Mixture Design: Establish experimental design with constrained component ratios.

- Growth Assessment: Measure specific growth rate (µ) and maximal optical density (Xmax) for each formulation.

- Response Surface Modeling: Identify significant factors and interaction effects.

- Cost Integration: Incorporate component costs to identify economically optimal formulations.

- Functionality Validation: Confirm biological activity in target application.

Applications: This approach reduced Pseudoxanthomonas indica H32 cultivation costs from approximately $1.18/L to $0.10/L while maintaining biological efficacy as a biopesticide [4].
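The mixture-design and cost-integration steps of Protocol 2 can be illustrated with a short sketch. It enumerates a simplex-lattice design (blends whose proportions sum to 1), computes a raw-material cost per litre for each blend, and picks the cheapest blend whose measured growth rate meets a threshold. The component prices, the total dosing of 20 g/L, and the function names are hypothetical; growth rates must come from the actual Growth Assessment step and are not simulated here.

```python
from itertools import product


def simplex_lattice(n_components, levels):
    """All blends whose proportions are multiples of 1/levels and sum to 1."""
    points = []
    for combo in product(range(levels + 1), repeat=n_components):
        if sum(combo) == levels:
            points.append(tuple(c / levels for c in combo))
    return points


def cost_per_litre(proportions, total_g_per_l, price_per_kg):
    """Raw-material cost (USD/L) of a blend dosed at total_g_per_l grams per litre."""
    return sum(p * total_g_per_l * price / 1000.0
               for p, price in zip(proportions, price_per_kg))


def percent_reduction(before, after):
    """Relative cost reduction in percent."""
    return 100.0 * (before - after) / before


def cheapest_acceptable(blends, growth_rates, costs, min_growth_rate):
    """Lowest-cost blend whose measured specific growth rate meets the threshold."""
    ok = [(c, b) for b, mu, c in zip(blends, growth_rates, costs)
          if mu >= min_growth_rate]
    return min(ok)[1] if ok else None


if __name__ == "__main__":
    design = simplex_lattice(3, 4)   # 3 components, proportions in steps of 0.25
    prices = (0.60, 8.00, 1.50)      # hypothetical USD/kg for three ingredients
    costs = [cost_per_litre(b, 20.0, prices) for b in design]
    print(len(design), "blends; cost range", min(costs), "to", max(costs), "USD/L")
    # Reduction reported in the text: from about $1.18/L to $0.10/L.
    print(round(percent_reduction(1.18, 0.10), 1), "% cost reduction")
```

Applied to the figures quoted above, `percent_reduction(1.18, 0.10)` gives a reduction of roughly 92% in medium cost per litre.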

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagents for Culture Medium Optimization

| Reagent Category | Specific Examples | Function | Optimization Considerations |
|---|---|---|---|
| Carbon Sources | Glucose, sucrose, glycerol | Primary energy source, influences growth rate and metabolic byproducts | Concentration balancing to prevent overflow metabolism; 4-6 g/L initial glucose for mammalian cells [5] |
| Nitrogen Sources | Yeast extract, peptones, ammonium salts, hydrolysates | Amino acid supply for protein synthesis and cell growth | C/N ratio optimization; replacement with cost-effective hydrolysates [2] [4] |
| Growth Factors | LONG R³ IGF-I, recombinant insulin | Cell proliferation and viability maintenance | Can double cell viability over extended cultures compared to insulin [5] |
| Trace Elements | Zinc, selenium, copper, manganese | Cofactors for enzymatic reactions, antioxidant defense | Combat oxidative stress; influence charge variants in mAbs [5] [7] |
| pH Buffers | Sodium bicarbonate, phosphate buffers, HEPES | Maintain physiological pH range | Critical for protein folding and minimizing charge heterogeneity [4] [7] |
| Additives | Valproic acid, kifunensine, thiol compounds | Enhance gene expression, modulate glycosylation, reduce oxidative stress | Histone deacetylase inhibitors can increase antibody yields by 4-fold [5] |

Integrated Workflow for Medium Optimization

A comprehensive medium optimization strategy integrates multiple methodologies into a cohesive workflow that balances performance objectives with economic constraints.

This systematic approach ensures that medium optimization directly addresses both biological performance metrics and economic constraints, with iterative refinement based on experimental data. The workflow emphasizes the importance of defining critical quality attributes early in the process, particularly for therapeutic proteins where charge variants significantly impact drug safety and efficacy [7].

Culture medium formulation represents the critical link between biological potential and economic viability in bioprocessing. By implementing advanced optimization strategies including machine learning, Bayesian active learning, and statistical experimental design, researchers can systematically address the dominant cost driver in their production systems. The protocols and methodologies presented in this Application Note provide a roadmap for developing cost-effective, high-performance media that enhance target yield and purity while maintaining economic sustainability. As the biologics market continues its rapid expansion and pressure mounts to reduce therapeutic costs, strategic investment in culture medium optimization emerges as an essential component of competitive bioprocess development.

The selection of an appropriate protein expression host is a critical determinant of success in biopharmaceutical research and development. This application note provides a structured comparison between mammalian and microbial systems, focusing on their impact on target protein yield and purity within the context of culture system optimization. We present quantitative data, detailed protocols, and a decision-making framework to guide researchers and drug development professionals in selecting the optimal platform for their specific application, ensuring alignment with project goals for protein complexity, scalability, and cost-effectiveness.

Protein expression systems serve as the foundational platform for producing recombinant proteins essential for therapeutics, diagnostics, and basic research. The two most prevalent categories are microbial systems (prokaryotic, e.g., E. coli) and mammalian systems (eukaryotic, e.g., CHO, HEK293). The fundamental difference lies in their cellular complexity: microbial systems are simpler, facilitating rapid and high-yield protein production, whereas mammalian systems replicate human-like cellular machinery, enabling the synthesis of complex proteins with essential post-translational modifications (PTMs) such as glycosylation [8] [9].

The choice between these systems often involves a trade-off between yield, cost, and biological fidelity. Optimizing culture systems for maximum target yield and purity requires a deep understanding of the inherent advantages and limitations of each host. Microbial fermentation is generally more cost-effective and scalable for simpler proteins, while mammalian cell culture is often indispensable for producing complex biologics like monoclonal antibodies and other glycosylated proteins [10].

System Capabilities and Key Differentiators

The core differences between microbial and mammalian systems can be categorized by their physiological capabilities, which directly influence the nature of the recombinant protein produced.

Post-Translational Modifications (PTMs)

PTMs are chemical modifications that occur after protein synthesis and are crucial for the stability, solubility, and biological activity of many therapeutic proteins [8].

- Mammalian Systems: Excel at performing complex PTMs. They are capable of human-like glycosylation, which is critical for the efficacy and pharmacokinetics of therapeutic proteins like antibodies. They also reliably form disulfide bonds and perform phosphorylation, acetylation, and methylation [8] [11].

- Microbial Systems: Generally lack the enzymatic machinery to perform advanced PTMs. E. coli cannot glycosylate proteins, which is a significant limitation for producing many human therapeutics. While it can form some disulfide bonds, it often struggles with proteins requiring multiple correct pairings [8].

Protein Folding and Solubility

Correct protein folding is essential for biological activity.

- Mammalian Systems: Contain specialized organelles like the endoplasmic reticulum and Golgi apparatus, along with molecular chaperones, which work in concert to ensure proper protein folding and assembly of complex multi-subunit proteins [8].

- Microbial Systems: Lack these sophisticated folding compartments. This often leads to the formation of inclusion bodies—insoluble aggregates of misfolded protein. While these can sometimes be refolded, the process adds complexity and can reduce yields [8] [12].

Growth Characteristics and Cost

- Microbial Systems: Feature extremely rapid growth, with doubling times as short as 20 minutes. They can be cultured to high cell densities using simple and inexpensive media, making them highly scalable and cost-effective for large-batch production [8] [9].

- Mammalian Systems: Have much slower growth rates, with doubling times typically ranging from 12 to 48 hours. They require complex, expensive media, often supplemented with serum, and need precisely controlled environments (e.g., CO₂ incubators), leading to significantly higher production costs [8] [9].
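To make the doubling times above comparable with the specific growth rates reported elsewhere in this guide, the two can be converted with µ = ln(2) / t_d. The sketch below assumes unrestricted exponential growth, which holds only during the exponential phase; the function names are illustrative.

```python
import math


def specific_growth_rate(doubling_time_h):
    """Specific growth rate µ (h^-1) from a doubling time in hours."""
    return math.log(2) / doubling_time_h


def doubling_time(mu_per_h):
    """Doubling time in hours from a specific growth rate µ (h^-1)."""
    return math.log(2) / mu_per_h


def fold_expansion(mu_per_h, hours):
    """Fold increase in cell number after `hours` of exponential growth."""
    return math.exp(mu_per_h * hours)


if __name__ == "__main__":
    # E. coli at a 20-minute doubling time versus mammalian cells at 12 to 48 hours.
    for label, t_d in [("20 min", 1 / 3), ("12 h", 12.0), ("48 h", 48.0)]:
        print(label, "->", round(specific_growth_rate(t_d), 4), "h^-1")
    # The growth rate of 0.439 h^-1 quoted for P. indica H32 as a doubling time.
    print(round(doubling_time(0.439), 2), "h")
```

A 20-minute doubling time corresponds to µ of about 2.08 h⁻¹, whereas 12 to 48 hours corresponds to roughly 0.058 to 0.014 h⁻¹, a difference of about two orders of magnitude.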

Quantitative Data Comparison

The following tables summarize key performance metrics for the most common expression systems, providing a basis for initial host selection based on yield and purity targets.

Table 1: Comparative Yield and Purity of Protein Expression Systems [13]

| Expression System | Typical Yield (g/L) | Purity Without Extensive Purification | Key Applications |
|---|---|---|---|
| E. coli | 1 - 10 g/L | 50% - 70% | Research-grade proteins, industrial enzymes, non-glycosylated proteins |
| Yeast | Up to 20 g/L | ~80% (in optimized conditions) | Human proteins with basic PTMs, biopharmaceuticals |
| Mammalian Cells | 0.5 - 5 g/L | >90% | Complex therapeutic proteins, monoclonal antibodies, glycosylated proteins |

Table 2: Strategic Comparison of Host System Characteristics

| Parameter | Microbial Systems (E. coli) | Mammalian Systems (CHO, HEK293) |
|---|---|---|
| Cost & Scalability | Low cost, highly scalable with simple media [8] | High cost, scalable but requires complex media and bioreactors [8] |
| Ideal Protein Type | Smaller, simpler proteins and antibody fragments [10] | Large, complex proteins requiring human-like PTMs [8] [10] |
| Typical Biologics | Peptides, cytokines, growth factors, VHHs, peptibodies [10] | Monoclonal antibodies, complex glycosylated proteins, viral vaccines [10] [11] |
| Key Advantage | Speed, cost-effectiveness, and high yield of simple proteins | Biological fidelity and ability to produce complex, functional proteins |

Experimental Protocols

This section provides detailed methodologies for optimizing protein production in both mammalian and microbial hosts, with a focus on achieving high yield and purity.

Protocol: Active Learning-Assisted Medium Optimization for Mammalian Cells

Background: Optimizing culture medium is crucial for enhancing cell growth and protein production in mammalian systems. Traditional methods are inefficient for fine-tuning dozens of medium components. This protocol employs an active learning-driven approach using machine learning to efficiently identify optimal medium compositions [14].

Workflow Overview: The process involves acquiring initial experimental data, training a machine learning model (a gradient-boosting decision tree, GBDT), and using its predictions to guide subsequent rounds of experimentation, creating an iterative optimization loop [14].

Materials:

- Cell Line: HeLa-S3 (suspension-adapted) or other relevant mammalian cell line (e.g., CHO, HEK293) [14].

- Baseline Medium: Eagle’s Minimum Essential Medium (EMEM) or other defined medium [14].

- Components for Optimization: 29 components (amino acids, vitamins, salts, etc.), varied on a logarithmic scale [14].

- Assessment Reagent: Cell Counting Kit-8 (CCK-8) to measure cellular NAD(P)H abundance (absorbance at 450 nm, A450) as a proxy for cell viability and concentration [14].

Procedure:

- Initial Data Acquisition: Perform cell culture in a wide variety of 232 initial medium combinations. Measure temporal changes in A450 at 24-48 hour intervals until 168 hours. Include biological replicates (N=3-4) for each condition [14].

- Model Training: Use the A450 values at 168 hours (regular mode) or 96 hours (time-saving mode) as the training target for the GBDT model. The model learns the complex relationships between medium component concentrations and the resulting A450 output [14].

- Prediction and Validation: The model predicts 18-19 new medium combinations expected to yield higher A450. Prepare these medium variants and perform experimental cell culture to validate the predictions [14].

- Iterative Learning: Add the new experimental data (medium composition and resulting A450) to the training dataset. Retrain the GBDT model and repeat the prediction-validation cycle for 3-4 rounds or until a significant improvement in A450 is observed and plateaus [14].

Expected Outcomes: This active learning approach has been shown to significantly increase the cellular NAD(P)H concentration (A450) compared to the baseline commercial medium. The model often identifies a significant decrease in fetal bovine serum (FBS) requirement and provides specific concentration recommendations for vitamins and amino acids, leading to a cost-effective and high-performance customized medium [14].
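The train, predict, validate, and retrain loop described in this protocol can be sketched as follows. This is a hedged illustration, not the cited implementation: a deliberately small gradient-boosting model built from depth-1 regression trees stands in for a full GBDT library, the `measure_a450` function is a hypothetical substitute for culturing cells and reading CCK-8 absorbance, and the default sizes (5 components, 40 initial media) are scaled down from the 29 components and 232 initial combinations used in the protocol so that the example runs quickly.

```python
import numpy as np


def fit_stump(x, residual):
    """Best single-split regression tree (depth 1) under squared error."""
    best = None
    for j in range(x.shape[1]):
        for thr in np.unique(x[:, j])[:-1]:
            left = x[:, j] <= thr
            l_val, r_val = residual[left].mean(), residual[~left].mean()
            sse = (((residual[left] - l_val) ** 2).sum()
                   + ((residual[~left] - r_val) ** 2).sum())
            if best is None or sse < best[0]:
                best = (sse, j, thr, l_val, r_val)
    if best is None:  # no feature can be split; fall back to a constant
        return 0, np.inf, residual.mean(), residual.mean()
    return best[1:]


def fit_gbdt(x, y, n_trees=30, learning_rate=0.1):
    """Gradient boosting for squared error: each stump fits the current residuals."""
    base = float(y.mean())
    pred = np.full(len(y), base)
    stumps = []
    for _ in range(n_trees):
        j, thr, l_val, r_val = fit_stump(x, y - pred)
        pred += learning_rate * np.where(x[:, j] <= thr, l_val, r_val)
        stumps.append((j, thr, l_val, r_val))
    return base, learning_rate, stumps


def predict_gbdt(model, x):
    base, learning_rate, stumps = model
    pred = np.full(len(x), base)
    for j, thr, l_val, r_val in stumps:
        pred += learning_rate * np.where(x[:, j] <= thr, l_val, r_val)
    return pred


def measure_a450(log_conc, rng):
    """Hypothetical assay stand-in; a real run would culture cells and read A450."""
    signal = 1.0 - 0.3 * np.sum((log_conc - 0.5) ** 2, axis=-1)
    return signal + rng.normal(0.0, 0.02, size=log_conc.shape[:-1])


def active_learning(n_components=5, n_init=40, n_rounds=3, batch=18, seed=0):
    rng = np.random.default_rng(seed)
    # Concentrations are expressed as log10 fold-change relative to the baseline medium.
    x = rng.uniform(-1.0, 1.0, size=(n_init, n_components))
    y = measure_a450(x, rng)
    for _ in range(n_rounds):
        model = fit_gbdt(x, y)                             # model training
        pool = rng.uniform(-1.0, 1.0, size=(2000, n_components))
        ranked = np.argsort(predict_gbdt(model, pool))
        chosen = pool[ranked[-batch:]]                     # predicted best media
        x = np.vstack([x, chosen])                         # validation results are
        y = np.concatenate([y, measure_a450(chosen, rng)])  # added to the training set
    return x, y


if __name__ == "__main__":
    x, y = active_learning()
    print("media tested:", len(y), "best A450 (simulated):", round(float(y.max()), 3))
```

Selecting the top-ranked predictions each round is a purely exploitative choice; a production workflow would normally use an established GBDT library and may add replicates or an exploration term.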

Protocol: High-Yield Soluble VHH Production in a Novel E. coli System

Background: Producing soluble Variable domain of Heavy-chain-only antibodies (VHHs, or nanobodies) in E. coli is challenging due to their tendency to form inclusion bodies. This protocol utilizes a novel EffiX E. coli expression system and a two-phase fermentation process to enhance soluble secretion and significantly increase titers [12].

Workflow Overview: The process involves tight transcriptional control to prevent leaky expression and a two-phase fermentation that switches conditions to promote high cell density followed by efficient protein secretion and release [12].

Materials:

- Expression System: EffiX E. coli host strain and expression vector (or similar engineered system with tight transcriptional control and phage resistance) [12].

- Fermentation Bioreactor: Equipped for Process Analytical Technology (PAT) and monitoring of dissolved oxygen [12].

- Culture Media: Defined rich medium, optimized for high-cell-density fermentation.

- Purification Tags: His-SUMO tag or other suitable fusion tags to improve solubility and aid purification [12].

Procedure:

- Strain Construction: Clone the gene of interest encoding the VHH into the EffiX expression vector. Utilize the system's toolbox for codon optimization and signal peptide selection.

- Phase 1 - Biomass Build-up: Inoculate the bioreactor and grow the culture under optimal conditions (temperature, pH, dissolved oxygen) to achieve high cell density. The engineered system ensures tight transcriptional control prior to induction, minimizing metabolic burden and preventing leaky expression [12].

- Induction: Induce protein expression with Isopropyl β-d-1-thiogalactopyranoside (IPTG) at a pre-optimized cell density.

- Phase 2 - Two-Phase Secretion: After induction, implement a two-phase process where soluble VHHs are released via:

  - Programmed cell lysis: A controlled fraction of cells lyse, releasing intracellular product.

  - Targeted product release: A separate fraction of cells actively secrete the VHH into the periplasm or culture supernatant [12].

- Harvest and Purification: Harvest the crude lysate, which contains a high concentration of soluble VHHs. Purification is simplified due to the high proportion of soluble product. A His-SUMO tag can be used for Immobilized-Metal Affinity Chromatography (IMAC) purification, followed by tag cleavage and a polishing step [12].

Expected Outcomes: This optimized process has demonstrated a 12-fold increase in titer and a five-fold increase in secreted VHH species compared to a baseline process. The yield of soluble, functional VHH is dramatically improved, reducing reliance on complex inclusion body refolding procedures [12].

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents for Expression System Optimization

| Reagent / Material | Function / Application | Example Host System |
|---|---|---|
| EffiX E. coli System | Engineered microbial host for stable, high-yield expression with minimized leaky expression and phage resistance [12] | E. coli |
| CHO (Chinese Hamster Ovary) Cells | Industry-standard mammalian host for producing complex therapeutic glycoproteins with human-like PTMs [8] [11] | Mammalian |
| HEK293 (Human Embryonic Kidney) Cells | Mammalian host preferred for transient gene expression and production of proteins requiring specific PTMs like sulfation [11] | Mammalian |
| His-SUMO Tag | Fusion tag that improves soluble expression in E. coli, reduces N-terminal heterogeneity, and simplifies IMAC purification [12] | E. coli |
| BacMam System | Baculovirus-based vector for efficient delivery and expression of genes in mammalian cells; a safe and versatile transduction tool [11] | Mammalian (via baculovirus) |
| Cell Counting Kit-8 (CCK-8) | Colorimetric assay for cell viability and proliferation based on cellular NAD(P)H levels, suitable for high-throughput screening [14] | Mammalian |

Choosing between mammalian and microbial expression systems is a strategic decision that balances protein complexity, yield, cost, and timeline. Microbial systems are the clear choice for rapid, cost-effective production of simple, non-glycosylated proteins and smaller biologics like VHHs. Conversely, mammalian systems are indispensable for producing complex, glycosylated therapeutic proteins where biological activity depends on human-like PTMs.

The experimental protocols outlined demonstrate that systematic optimization—whether through machine learning-guided medium design for mammalian cells or engineered strains and two-phase fermentation for microbes—can dramatically enhance yield and purity. Researchers are advised to first define the critical quality attributes of their target protein, particularly its PTM requirements, and then use the quantitative data and protocols provided to select and optimize the most appropriate host system for their specific yield and purity goals.

Genetic Engineering Strategies for Enhanced Protein Expression and Folding

The optimization of culture systems for the production of recombinant proteins is a critical endeavor in biopharmaceutical research and development. Achieving high target yield and purity requires an integrated approach that combines genetic engineering of host cells with precise control of culture parameters. Chinese hamster ovary (CHO) cells have emerged as the predominant production platform, accounting for nearly 80% of approved human therapeutic antibodies due to their ability to perform human-like post-translational modifications, relative safety against human viruses, and adaptability to suspension culture in serum-free media [15]. This application note details strategic methodologies to overcome persistent challenges in recombinant protein production, including low expression levels, improper folding, and cellular apoptosis during culture. By implementing the protocols described herein, researchers can significantly enhance both the quantity and quality of recombinant proteins for therapeutic and research applications.

Genetic Engineering Strategies for Enhanced Expression

Vector Optimization with Regulatory Elements

The design of expression vectors fundamentally influences the transcription and translation efficiency of recombinant genes. Strategic incorporation of regulatory elements upstream of the target gene can dramatically enhance protein expression levels.

Protocol 1.1: Vector Construction with Regulatory Elements

- Objective: To enhance translation initiation and efficiency through the incorporation of Kozak and Leader sequences.

- Materials:

  - Parent vector (e.g., pCMV-eGFP-F2A-RFP)

  - High-fidelity DNA polymerase

  - Restriction enzymes and corresponding buffers

  - T4 DNA ligase

  - Competent E. coli cells

  - LB agar plates with appropriate antibiotic

  - Plasmid purification kit

  - Sequencing primers

- Methodology:

  - Sequence Insertion: Synthesize and insert a strong Kozak sequence (e.g., GCCACC) immediately upstream of the start codon (ATG) of the gene of interest (GOI). For additional enhancement, incorporate a Leader peptide sequence downstream of the Kozak sequence but upstream of the GOI.

  - Vector Ligation: Digest both the insert (containing regulatory elements and GOI) and the backbone vector with appropriate restriction enzymes. Purify the fragments and perform ligation using T4 DNA ligase.

  - Transformation and Verification: Transform the ligated product into competent E. coli cells. Select colonies on antibiotic-containing LB plates. Isolate plasmid DNA from positive clones and verify the correct assembly by Sanger sequencing.

- Expected Outcome: The optimized vector should show a significant increase in transient and stable expression levels. Experimental data indicate that the addition of a Kozak sequence can increase expression by approximately 1.26-fold, while a combination of Kozak and Leader sequences can enhance expression by up to 2.2-fold for model proteins like eGFP [16].

Diagram 1: Workflow for constructing an expression vector with enhanced regulatory elements.
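Before ordering a synthetic insert for Protocol 1.1, the Kozak placement can be checked in silico. The sketch below prepends the GCCACC sequence named in the protocol directly upstream of the ATG start codon and verifies the result. The example open reading frame is a short illustrative fragment rather than a complete gene, the function names are hypothetical, and the Leader sequence is not handled because the protocol does not specify it.

```python
KOZAK = "GCCACC"  # Kozak sequence given as an example in Protocol 1.1


def add_kozak(orf, kozak=KOZAK):
    """Return the ORF with a Kozak sequence placed immediately upstream of its ATG."""
    orf = orf.upper()
    if not orf.startswith("ATG"):
        raise ValueError("open reading frame must begin with the ATG start codon")
    if len(orf) % 3 != 0:
        raise ValueError("open reading frame length must be a multiple of three")
    return kozak + orf


def has_kozak(construct, kozak=KOZAK):
    """True if the first ATG in the construct is directly preceded by the Kozak sequence."""
    construct = construct.upper()
    start = construct.find("ATG")
    return start >= len(kozak) and construct[start - len(kozak):start] == kozak


if __name__ == "__main__":
    fragment = "ATGGTGAGCAAGGGCGAGTAA"  # illustrative ORF fragment, not a full gene
    construct = add_kozak(fragment)
    print(construct, has_kozak(construct))
```

A check like this catches the common mistake of leaving restriction-site or linker bases between the Kozak sequence and the start codon, which Sanger sequencing would otherwise reveal only after cloning.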

Host Cell Engineering for Apoptosis Inhibition

Cellular apoptosis is a major limiting factor for prolonged protein production in bioreactors. Engineering host cells to suppress apoptotic pathways can extend culture longevity and increase cumulative protein yield.

Protocol 1.2: Generation of Apaf1-Knockout CHO Cell Lines using CRISPR/Cas9

- Objective: To disrupt the mitochondrial apoptosis pathway by knocking out the Apaf1 gene, thereby enhancing cell survival and recombinant protein production.

- Materials:

  - CHO-S cells

  - CRISPR/Cas9 plasmid targeting Apaf1 gene

  - Transfection reagent

  - Selection antibiotic (e.g., Puromycin)

  - PCR reagents for genotyping

  - Western blot reagents for protein validation

  - T7 Endonuclease I or Surveyor assay kit

- Methodology:

  - gRNA Design: Design and clone guide RNA (gRNA) sequences targeting critical exons of the Apaf1 gene into a CRISPR/Cas9 plasmid.

  - Cell Transfection: Transfect CHO-S cells with the CRISPR/Cas9 construct using a high-efficiency transfection method.

  - Selection and Cloning: Apply antibiotic selection 48 hours post-transfection. Isolate single-cell clones by limiting dilution.

  - Genotype Validation: Screen clones for indels at the target locus using T7E1 assay or PCR followed by sequencing. Confirm the absence of Apaf1 protein by Western blot analysis.

  - Phenotypic Validation: Challenge validated clones with apoptosis inducers (e.g., staurosporine) and assay for caspase activity to confirm the anti-apoptotic phenotype.

- Expected Outcome: Apaf1-knockout cells will exhibit increased resistance to apoptosis, leading to extended culture viability and higher recombinant protein titers in prolonged fed-batch cultures [16].

Diagram 2: Engineering an anti-apoptotic cell line by targeting the intrinsic pathway.
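The gRNA design step of Protocol 1.2 starts with locating candidate target sites in the exon sequence. The sketch below lists 20-nucleotide protospacers that are immediately followed by an NGG PAM on either strand. It assumes the commonly used Streptococcus pyogenes Cas9, which the protocol does not specify, and it does not score off-target risk; the helper names and any input sequence are illustrative and not taken from the Apaf1 locus.

```python
import re

_COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq):
    """Reverse complement of an upper-case DNA sequence."""
    return seq.translate(_COMPLEMENT)[::-1]


def find_protospacers(seq, length=20):
    """Return (strand, start, protospacer, pam) for each NGG PAM site on either strand.

    For the "-" strand, start is the position within the reverse-complemented sequence.
    """
    seq = seq.upper()
    pattern = re.compile(r"(?=([ACGT]{%d})([ACGT]GG))" % length)
    hits = []
    for strand, template in (("+", seq), ("-", reverse_complement(seq))):
        for match in pattern.finditer(template):
            hits.append((strand, match.start(), match.group(1), match.group(2)))
    return hits


def gc_fraction(seq):
    """Fraction of G and C bases, a simple first-pass filter for guide candidates."""
    return (seq.count("G") + seq.count("C")) / len(seq)


if __name__ == "__main__":
    exon = "ATGGATGCAAAAGCTCGAAATTGTTTGCTTCAACATAGAGAAGCTCTGGAAAAGG"  # made-up sequence
    for strand, start, protospacer, pam in find_protospacers(exon):
        print(strand, start, protospacer, pam, round(gc_fraction(protospacer), 2))
```

The lookahead in the regular expression allows overlapping sites to be reported, which matters because adjacent PAMs often yield guides that differ by a single base. Candidates from such a scan would still need specificity screening against the CHO genome before cloning.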

Fusion Tags for Solubility and Folding

Fusion tags serve as versatile tools that assist in protein folding, enhance solubility, and simplify purification. Selecting the appropriate tag is crucial for optimizing the production of challenging recombinant proteins.

Protocol 2.1: Evaluating Fusion Tags for Soluble Expression

- Objective: To screen and identify the optimal fusion tag for enhancing solubility and yield of a difficult-to-express protein.

- Materials:

  - Expression vectors with different N- or C-terminal tags (e.g., MBP, Trx, GST, SUMO, GFP)

  - Competent expression cells (e.g., E. coli or CHO)

  - Lysis buffer

  - Affinity resins corresponding to tags (e.g., Amylose resin for MBP, Glutathione resin for GST, Ni-NTA for His-tag)

  - SDS-PAGE and Western blot equipment

- Methodology:

  - Construct Generation: Clone the gene of interest into a series of vectors offering different fusion tags.

  - Small-Scale Expression: Transform/transfect each construct into the host cell and induce expression in small-scale culture.

  - Solubility Analysis: Lyse the cells and separate soluble and insoluble fractions by centrifugation. Analyze both fractions by SDS-PAGE to determine the proportion of soluble target protein.

  - Purification and Cleavage: Purify the fusion protein using the appropriate affinity resin. If needed, cleave the tag using a specific protease (e.g., TEV, SUMO protease) and re-purify to isolate the target protein.

- Expected Outcome: The optimal tag will significantly increase the recovery of soluble protein. For instance, Thioredoxin (Trx) fusions have proven effective in enhancing solubility for proteins like bromelain and SARS-CoV-2 Nucleocapsid protein, while GFP fusions can aid in real-time monitoring of expression and solubility [17].

Table 1: Comparison of Common Fusion Tags for Recombinant Protein Production

| Tag | Size (kDa) | Primary Function | Key Advantages | Potential Limitations |

|---|---|---|---|---|

| MBP | 42.5 | Solubility, Purification | Powerful solubility enhancer; affinity purification on amylose resin | Large size may alter protein activity or structure |

| Trx | 12 | Solubility, Folding | Enhances folding in E. coli; improves solubility via redox activity | Limited use for purification without a secondary tag |

| SUMO | 11 | Solubility, Cleavage | Enhances folding/solubility; precise and efficient cleavage by SUMO protease | Requires specific protease; adds an extra step in purification |

| GFP | 27 | Detection, Solubility | Enables real-time, visual monitoring of expression and localization | Fluorescence may not always correlate with POI folding; moderate size |

| GST | 26 (monomer) | Purification, Solubility | Affinity purification via glutathione resin; moderate solubility enhancement | Dimerization may affect activity of the target protein |

| HSA | 66 | Stability, Half-life | Extends serum half-life; clinically validated for therapeutics | Large size may interfere with target protein activity or purification |

Optimization of Culture Parameters

Fed-batch culture is the industry standard for large-scale recombinant protein production. Optimizing feed composition and environmental parameters is essential to support high cell density and prolonged protein production.

Protocol 3.1: Development and Optimization of a Fed-Batch Process

- Objective: To establish a fed-batch feeding strategy that replenishes nutrients, minimizes the accumulation of inhibitory metabolites, and maximizes recombinant protein titer and quality.

- Materials:

- CHO cell line expressing the target protein

- Basal medium

- Concentrated feed medium

- Bioreactor or controlled shake flasks

- Bioanalyzer for metabolite monitoring (e.g., Glucose, Lactate)

- Gas blending system for pH and dissolved oxygen control

- Methodology:

- Inoculum and Basal Medium: Inoculate cells into a bioreactor containing the optimal basal medium. Set initial environmental parameters (Temperature: 36.5–37.0°C, pH: 6.8–7.2, DO: 30–50%).

- Feed Formulation: Prepare a concentrated feed medium containing key components identified in Table 2.

- Feeding Strategy: Initiate feeding when nutrients begin to deplete (typically after 2-4 days). Employ a predetermined bolus or continuous feeding schedule based on the measured consumption rates of glucose and amino acids.

- Process Monitoring: Monitor cell density, viability, and key metabolites (glucose, lactate, ammonium) daily. Adjust feeding rates accordingly to maintain nutrients within desired ranges and control byproduct accumulation.

- Harvest: Harvest the culture when cell viability drops below a critical threshold (e.g., 70–80%). Clarify the culture broth by centrifugation and/or filtration for downstream purification.

- Expected Outcome: An optimized fed-batch process can support high cell densities (>10–15 × 10^6 cells/mL) and significantly increase product titers, often exceeding 10 g/L for monoclonal antibodies, while maintaining product quality [15].
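
For a bolus feeding schedule, the volume of concentrated feed needed to bring a nutrient back to a target concentration follows from a mass balance on the culture. The sketch below is a minimal illustration under stated assumptions: the function name and example values are hypothetical, mixing is assumed ideal, and consumption during the addition is ignored.

```python
def bolus_feed_volume(culture_volume_ml, current_g_per_l, target_g_per_l, feed_g_per_l):
    """Feed volume (mL) that raises a nutrient to the target concentration.

    Mass balance: (C_now * V + C_feed * V_feed) / (V + V_feed) = C_target,
    so V_feed = V * (C_target - C_now) / (C_feed - C_target).
    """
    if feed_g_per_l <= target_g_per_l:
        raise ValueError("Feed must be more concentrated than the target level")
    if current_g_per_l >= target_g_per_l:
        return 0.0  # nutrient already at or above target; no bolus needed
    return culture_volume_ml * (target_g_per_l - current_g_per_l) / (
        feed_g_per_l - target_g_per_l
    )


# Hypothetical example: 1 L culture at 2 g/L glucose, target 6 g/L, 400 g/L feed.
volume = bolus_feed_volume(1000.0, 2.0, 6.0, 400.0)
print(f"Add {volume:.1f} mL of feed")
```

The same balance applies to any single nutrient; when several nutrients are delivered in one feed, the most limiting one usually sets the volume.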

Table 2: Key Feed Components and Their Roles in Fed-Batch Culture

| Category | Example Components | Function in Culture | Considerations |

|---|---|---|---|

| Amino Acids | Tyrosine, Tryptophan, Glycine, Serine, SSC (a cysteine derivative) | Precursors for protein synthesis; prevent amino acid limitation | Tyrosine enhances antibody production; some amino acids, such as glutamine, can increase ammonium production [15] |

| Carbon Sources | Glucose, Galactose, Fructose | Provide energy and carbon skeletons | High glucose can lead to lactate accumulation; galactose can improve protein sialic acid content [15] |

| Trace Elements | Selenite, Zinc (Zn²⁺), Copper (Cu²⁺) | Cofactors for essential enzymes and cellular processes | Zn²⁺ promotes protein production; Cu²⁺ can improve antibody titer [15] |

| Vitamins | B Vitamins, Vitamin C, Nicotinamide | Act as coenzymes in metabolic pathways | B vitamins can improve antibody titer; Vitamin C can decrease phosphorylation levels [15] |

| Lipids & Others | Lipid mixtures, Ethanolamine, Putrescine, Hydrolysates | Support membrane integrity and serve as signaling molecules | Lipid mixtures promote antibody titer; putrescine can increase production [15] |

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Reagent Solutions for Recombinant Protein Production Workflows

| Reagent / Solution | Primary Function | Application Notes |

|---|---|---|

| Kozak & Leader Sequences | Enhance translation initiation and protein secretion | Critical for vector optimization; species-specific variations can further improve efficiency [16]. |

| CRISPR/Cas9 System | Precise gene knockout (e.g., Apaf1) | Enables creation of engineered host cell lines with enhanced phenotypes like apoptosis resistance [16]. |

| Fusion Tag Vectors | Improve solubility, enable purification, and allow detection | A toolkit of vectors with different tags (MBP, Trx, SUMO) allows for empirical determination of the best tag for a given protein [17]. |

| Chemically Defined Serum-Free Medium (CD-SFM) | Supports high-density cell growth and product secretion | Eliminates serum variability, improves reproducibility, and simplifies downstream purification [15]. |

| Concentrated Feed Media | Replenishes nutrients in fed-batch cultures | Formulations are often proprietary; optimization of feeding strategy is as important as composition [15]. |

| Affinity Resins | Purification of tagged recombinant proteins (e.g., Ni-NTA, Amylose, Glutathione) | Enable high-purity recovery in a single step; choice of resin is determined by the fusion tag used [17]. |

| Proteases for Tag Removal | Cleave fusion tags from the purified protein (e.g., TEV, SUMO, Factor Xa) | Necessary when a native protein is required; cleavage specificity and efficiency are key selection criteria [17]. |

Concluding Remarks

The synergistic integration of genetic engineering and culture optimization is paramount for advancing the yield and purity of recombinant proteins. As demonstrated, this involves a multi-faceted strategy: optimizing expression vectors with regulatory elements, engineering host cells for resilience, selecting appropriate fusion tags for solubility, and implementing precisely controlled fed-batch processes. The protocols and data summarized in this application note provide a robust framework for researchers to systematically enhance their culture systems. Future directions will likely involve the application of systems and synthetic biology tools for more sophisticated metabolic engineering and the development of next-generation host cells tailored for specific protein classes, further pushing the boundaries of biopharmaceutical manufacturing.

In the development and manufacturing of biologics, three key performance metrics—yield, purity, and volumetric productivity—serve as critical indicators of process efficiency and product quality. These parameters form the foundation for evaluating the success of culture system optimization, directly impacting the economic viability and regulatory compliance of biopharmaceutical products. This document outlines standardized methodologies for quantifying these essential metrics, provides protocols for experimental optimization, and presents a framework for data analysis tailored to researchers and scientists engaged in bioprocess development.

Defining and Quantifying Core Metrics

Metric Definitions and Calculation Formulas

Table 1: Definition and Calculation of Key Performance Metrics

| Metric | Definition | Calculation Formula | Criticality in Process Assessment |

|---|---|---|---|

| Yield | The total amount of target product obtained from a process run. | Total Protein (mg) = Concentration (mg/mL) × Total Volume (mL) [5] | Determines process efficiency and material output for downstream applications [18]. |

| Purity | The proportion of the target molecule in the final product relative to total protein or impurities. | Purity (%) = (Target Protein Mass / Total Protein Mass) × 100 [19] | A Critical Quality Attribute (CQA) essential for drug safety and efficacy [19]. |

| Volumetric Productivity (STY) | The amount of product generated per unit volume of bioreactor per unit time. | Space-Time Yield (STY) = Total Product (g) / (Bioreactor Volume (L) × Process Time (day)) [20] | A key efficiency metric for upstream processes; directly impacts facility capacity and cost-per-unit [20]. |
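
The three formulas in the table translate directly into code. The following is a minimal sketch; the function names and example values are illustrative assumptions, and units follow the table (mg and mL for yield, g, L and days for STY).

```python
def total_yield_mg(concentration_mg_per_ml, total_volume_ml):
    """Yield: total protein (mg) = concentration (mg/mL) x total volume (mL)."""
    return concentration_mg_per_ml * total_volume_ml


def purity_percent(target_protein_mass, total_protein_mass):
    """Purity (%) = target protein mass / total protein mass x 100."""
    if total_protein_mass <= 0:
        raise ValueError("Total protein mass must be positive")
    return 100.0 * target_protein_mass / total_protein_mass


def space_time_yield(total_product_g, bioreactor_volume_l, process_time_days):
    """STY (g/L/day) = total product / (bioreactor volume x process time)."""
    if bioreactor_volume_l <= 0 or process_time_days <= 0:
        raise ValueError("Volume and process time must be positive")
    return total_product_g / (bioreactor_volume_l * process_time_days)


# Hypothetical values, for illustration only.
print(total_yield_mg(2.5, 40.0))         # mg
print(purity_percent(95.0, 100.0))       # %
print(space_time_yield(30.0, 2.0, 15.0)) # g/L/day
```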

Analytical Methods for Quantification

- Yield Quantification: Use Bradford, Lowry, or BCA assays for total protein concentration determination. For recombinant proteins specifically, Protein G affinity chromatography (for antibodies) or other specific HPLC assays are employed [5] [21].

- Purity Analysis: SDS-PAGE is used for initial purity assessment. More precise quantification requires analytical techniques such as HPLC (e.g., for host cell protein detection), capillary electrophoresis, or mass spectrometry [19].

- Process Monitoring: Integrated Process Analytical Technology (PAT) tools, including Raman and near-infrared spectroscopy, enable real-time monitoring of critical process parameters and quality attributes, facilitating better control [22] [23].

Experimental Protocol for System Optimization

This protocol provides a methodology for optimizing culture conditions to enhance yield, purity, and volumetric productivity, using a structured Design of Experiments (DoE) approach.

Initial Screening with Plackett-Burman Design (PBD)

Objective: To efficiently screen a large number of factors and identify the most significant variables affecting the target metrics [24].

Procedure:

- Select Factors: Choose physical and chemical parameters for screening (e.g., pH, temperature, NaCl concentration, inoculum size, incubation period, concentrations of key media components like ascorbic acid, ammonium citrate) [24].

- Define Levels: Set high (+1) and low (-1) levels for each factor based on prior knowledge or preliminary data [24].

- Experimental Setup: Execute the experimental runs as dictated by the PBD matrix (e.g., 12 runs for 11 variables). Because the design is orthogonal, the main effect of each factor can be estimated independently of the others [24].

- Statistical Analysis: Analyze results using ANOVA to identify factors with statistically significant effects (p < 0.05) on the response (e.g., biomass yield, protein titer) [24].
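
The 12-run, 11-factor screening layout mentioned above can be sketched in a few lines. This example builds the design from the standard Plackett-Burman generator row by cyclic shifting and estimates main effects as the difference between the mean response at the high and low levels. The response values are synthetic, and the significance test (ANOVA, p < 0.05) described in the procedure is deliberately left out; it would normally be done in a statistics package.

```python
import numpy as np

# Standard generator row for the 12-run Plackett-Burman design (coded +1/-1).
GENERATOR = np.array([1, 1, -1, 1, 1, 1, -1, -1, -1, 1, -1])


def plackett_burman_12():
    """Return the 12 x 11 design: 11 cyclic shifts plus a final all-low run."""
    rows = [np.roll(GENERATOR, shift) for shift in range(11)]
    rows.append(-np.ones(11, dtype=int))
    return np.array(rows)


def main_effects(design, response):
    """Effect of each factor = mean(response at +1) - mean(response at -1)."""
    n_runs = design.shape[0]
    return 2.0 * (design.T @ response) / n_runs


design = plackett_burman_12()

# Synthetic response for illustration: only factors 0 and 4 matter.
response = 10.0 + 3.0 * design[:, 0] - 2.0 * design[:, 4]
effects = main_effects(design, response)
for index, effect in enumerate(effects):
    print(f"factor {index}: effect = {effect:+.2f}")
```

Each column contains six high and six low settings and is orthogonal to every other column, which is why the two active factors are recovered cleanly here. Real data include noise, so the size of each effect has to be judged against an error estimate.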

In-Depth Optimization using Response Surface Methodology (RSM)

Objective: To model the response surface and find the optimal levels of the significant factors identified in the PBD screening [24].

Procedure:

- Design Selection: Employ a Central Composite Design (CCD) for the significant factors [24].

- Model Fitting: Execute the CCD experiments and fit the data to a quadratic model. Analyze the model using ANOVA to check for validity (high R² value, e.g., 0.9689) and adequate precision [24].

- Prediction and Validation: Use the model to predict optimal factor levels for maximum response. Conduct validation experiments under these predicted conditions to confirm the model's accuracy [24].

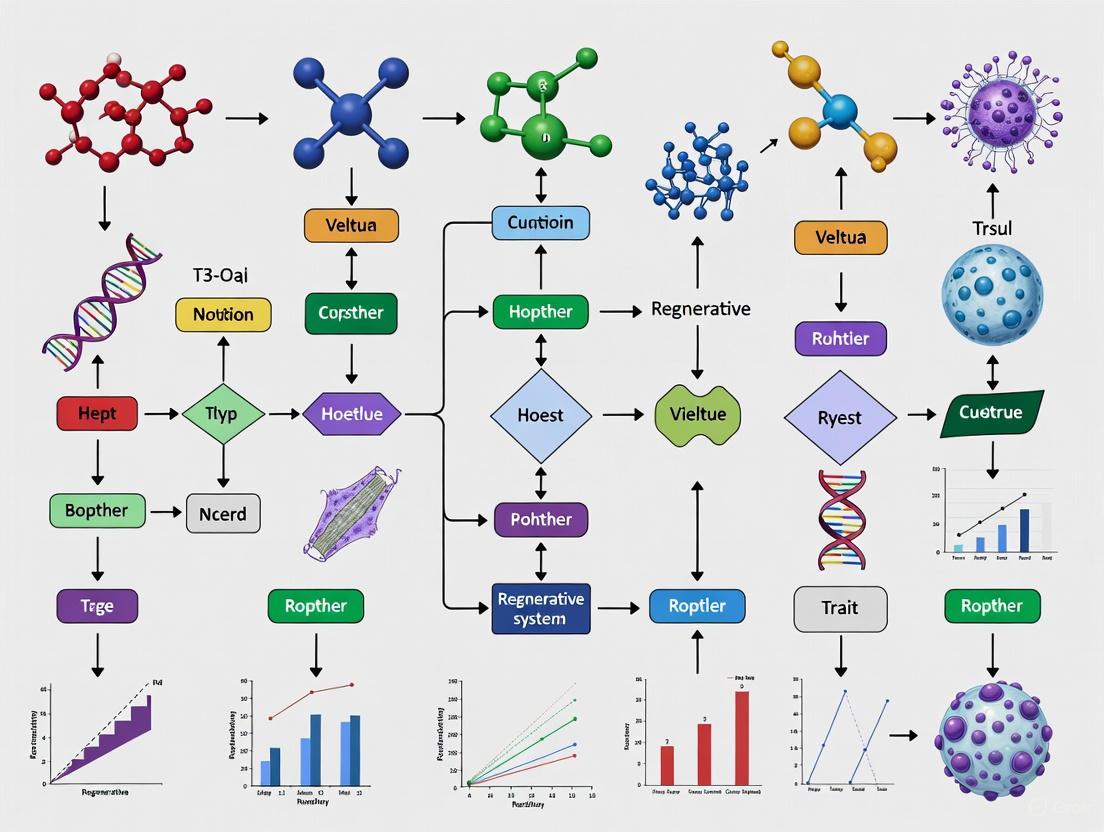

Figure 1: Experimental Optimization Workflow. This diagram illustrates the sequential statistical approach for culture system optimization, from initial factor screening to final model validation.
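
The RSM step can be illustrated with a two-factor Central Composite Design fitted to a full quadratic model by ordinary least squares. This is a sketch under stated assumptions: factors are in coded units, the response is a synthetic function with a known optimum rather than experimental data, and the helper names are hypothetical. Dedicated DoE software would additionally report ANOVA, lack of fit, and adequate precision.

```python
import numpy as np


def ccd_two_factors(alpha=np.sqrt(2.0), n_center=3):
    """Coded CCD points: 4 factorial, 4 axial (+/- alpha), n_center centre runs."""
    factorial = [(-1, -1), (1, -1), (-1, 1), (1, 1)]
    axial = [(-alpha, 0), (alpha, 0), (0, -alpha), (0, alpha)]
    center = [(0, 0)] * n_center
    return np.array(factorial + axial + center, dtype=float)


def quadratic_terms(x):
    """Model matrix columns: 1, x1, x2, x1^2, x2^2, x1*x2."""
    x1, x2 = x[:, 0], x[:, 1]
    return np.column_stack([np.ones(len(x)), x1, x2, x1**2, x2**2, x1 * x2])


def fit_quadratic(x, y):
    """Least-squares estimate of the six quadratic-model coefficients."""
    coef, *_ = np.linalg.lstsq(quadratic_terms(x), y, rcond=None)
    return coef


def stationary_point(coef):
    """Solve grad = b + 2*B*x = 0; also report whether the point is a maximum."""
    _, b1, b2, b11, b22, b12 = coef
    b_matrix = np.array([[b11, b12 / 2.0], [b12 / 2.0, b22]])
    point = np.linalg.solve(-2.0 * b_matrix, np.array([b1, b2]))
    is_maximum = bool(np.all(np.linalg.eigvalsh(b_matrix) < 0))
    return point, is_maximum


points = ccd_two_factors()
# Synthetic response with its maximum at (0.5, -0.25) in coded units.
y = 50.0 - 2.0 * (points[:, 0] - 0.5) ** 2 - 3.0 * (points[:, 1] + 0.25) ** 2
coefficients = fit_quadratic(points, y)
optimum, is_max = stationary_point(coefficients)
print("Predicted optimum (coded units):", optimum, "maximum:", is_max)
```

The predicted optimum is in coded units and must be converted back to real factor levels before the validation runs described in the last step.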

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Reagents and Materials for Culture Optimization

| Item | Function/Application | Specific Examples |

|---|---|---|

| Chemically Defined Media | Serum-free, consistent base media that eliminates variability from animal-derived components [5]. | Various proprietary CHO formulations [21]. |

| Media Supplements | Boost cell growth and productivity. | LONG R³ IGF-I (superior to insulin), hydrolysates, peptones, recombinant growth factors [5]. |

| Metabolites & Nutrients | Provide energy and building blocks for cells and product synthesis. | Glucose, amino acids (e.g., Glutamine, Cysteine), vitamins (C, E) [5]. |

| Culture Additives | Modulate cellular processes to enhance yield or control product quality. | Histone deacetylase inhibitors (e.g., valproic acid, sodium butyrate) for transcriptional enhancement; glycosylation modifiers (e.g., kifunensine) [5]. |

| Surface Coatings | Promote adherence and growth of anchorage-dependent cells. | Poly-L-Lysine, Collagen, Fibronectin, Laminin, Matrigel [25]. |

| Analytical Tools | Quantify and qualify the product and process performance. | BioProfile Analyzer (metabolites), Cedex cell counter, HPLC, ELISA kits, qPCR kits for residual DNA testing [19] [21]. |

Case Studies in Metric Improvement

Case Study 1: Enhancing Recombinant Protein Yield in CHO Cells

- Challenge: A client producing "Mut-F" recombinant protein in CHO cells faced low yields (0.012 mg/L) in traditional shaker flask cultures [5].

- Optimization Strategy: Implementation of a perfusion bioreactor system with optimized media. The culture ran for 77 days with continuous nutrient delivery and waste removal [5].

- Result: A total of 220 mg of protein was collected, demonstrating a massive increase in volumetric productivity and total yield, enabling manufacturing scale-up [5].

Case Study 2: Optimizing an Enzyme Production Process

- Challenge: Improving the Space-Time Yield (STY) and reducing Cycle Time (Ct) for a feed additive enzyme [20].

- Optimization Strategy: A DoE approach was used to reveal a key relationship between the substrate-specific uptake rate (qS) and enzyme activity. This informed an optimized feeding profile and adjustments to aeration and pressure at scale [20].

- Result: The strategy led to enhanced STY and a reduced Ct during a large-scale manufacturing campaign [20].

Case Study 3: Maximizing Biomass Yield of Lactobacillus acidophilus

- Challenge: Enhancing the biomass yield of a probiotic strain in a cost-effective manner [24].

- Optimization Strategy: An initial PBD screened 11 variables, identifying pH, temperature, NaCl, and inoculum size as significant. These were further optimized using RSM-CCD [24].

- Result: A 1.45-fold increase in biomass yield was achieved, reaching 1.948 g/100 mL, showcasing the power of statistical optimization for microbial processes [24].

Table 3: Summary of Optimization Outcomes from Case Studies

| Case Study | System | Primary Metric Targeted | Optimization Outcome |

|---|---|---|---|

| Recombinant Protein in CHO | Mammalian Cell Perfusion Bioreactor | Volumetric Productivity / Total Yield | Achieved 220 mg total protein from a long-term culture, a dramatic increase from a baseline of 0.012 mg/L [5]. |

| Enzyme Production | Microbial Fermentation | Space-Time Yield (STY), Cycle Time (Ct) | Enhanced STY and reduced Ct through feeding strategy and aeration control [20]. |

| Probiotic Biomass | Microbial Fermentation | Biomass Yield | Achieved a 1.45-fold increase in biomass yield through statistical media and condition optimization [24]. |

Advanced Control Strategies for Consistent Metrics

Maintaining optimal yield and purity requires advanced process control strategies that move beyond basic set-point maintenance.

Table 4: Overview of Advanced Bioprocess Control Strategies

| Control Strategy | Description | Application in Bioprocessing |

|---|---|---|

| Open Loop Control | Pre-computed control actions executed without feedback. | Suitable for simple, well-understood processes with minimal disturbance [23]. |

| Closed Loop (PID) Control | System output is continuously measured and compared to a set point to minimize error. | Commonly used for maintaining basic parameters like pH and dissolved oxygen [23]. |

| Model Predictive Control (MPC) | A multivariate algorithm that uses a real-time process model to predict and optimize future process behavior. | Improves steady-state response and predicts upcoming disturbances; ideal for complex, nonlinear bioprocesses [23]. |

| Fuzzy Logic Control | A flexible reasoning system that mimics human decision-making using "IF-THEN" rules. | Effective for processes with imprecise domain knowledge or noisy data [23]. |

| Artificial Neural Networks (ANN) | A network of algorithms designed to recognize patterns and relationships in complex data sets. | Used for pattern recognition, classification, and prediction of process outcomes and product quality [23]. |

Figure 2: Advanced Process Control Loop. This diagram shows how Process Analytical Technology (PAT) sensors feed real-time data into advanced controllers, which then adjust process actuators to maintain optimal conditions within the bioreactor.
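
To make the closed-loop row of Table 4 concrete, the sketch below runs a discrete PID controller against a toy dissolved-oxygen model. Everything numerical here is an assumption for illustration: the first-order process model, its time constant, and the controller gains are hypothetical and not taken from [23]; a real bioreactor loop would need tuning against measured dynamics and typically adds anti-windup and filtering.

```python
class PID:
    """Minimal discrete PID controller with output clamping."""

    def __init__(self, kp, ki, kd, setpoint, out_min=0.0, out_max=100.0):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.setpoint = setpoint
        self.out_min, self.out_max = out_min, out_max
        self.integral = 0.0
        self.prev_error = None

    def update(self, measurement, dt):
        """Return the actuator output (%) for the current measurement."""
        error = self.setpoint - measurement
        self.integral += error * dt
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        output = self.kp * error + self.ki * self.integral + self.kd * derivative
        return min(max(output, self.out_min), self.out_max)


def simulate_do(controller, steps=400, dt=1.0, tau=20.0, gain=1.0, start=10.0):
    """Toy first-order process: d(DO)/dt = (gain * u - DO) / tau (hypothetical)."""
    do_level = start
    trace = []
    for _ in range(steps):
        u = controller.update(do_level, dt)
        do_level += dt * (gain * u - do_level) / tau
        trace.append(do_level)
    return trace


controller = PID(kp=2.0, ki=0.2, kd=0.0, setpoint=40.0)
trace = simulate_do(controller)
print(f"DO after {len(trace)} steps: {trace[-1]:.2f}% (set point 40%)")
```

The integral term is what removes the steady-state offset; with proportional action alone this toy process would settle below the set point.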

The Five-Stage Framework for Systematic Medium Optimization

Optimizing culture media is a critical step in biopharmaceutical development and recombinant protein production. The culture medium provides the essential nutrients and physicochemical environment that directly influence cellular metabolism, product yield, and critical quality attributes of biologics. However, medium optimization remains challenging due to the complex interactions between numerous components and biological variability. A systematic framework is therefore essential for efficiently navigating this complexity, reducing development time, and controlling costs, which can account for up to 80% of direct production expenses in recombinant protein processes [1]. This application note details a proven five-stage framework—planning, screening, modeling, optimization, and validation—to methodically optimize culture media for enhanced target yield and purity.

Stage 1: Planning and Objective Definition

Objective: To establish a clear optimization goal and identify the medium components and response variables for the study.

The planning stage transforms the medium design into a mathematical optimization problem. Each medium consists of n components (factors), and the response variable(s) (y) are functions of each component's concentration (xᵢ) [1]. The objective is defined based on the application and target protein.

Key Activities:

- Define Response Variables: Select measurable outcomes. For recombinant protein production, this typically includes protein yield, specific productivity, and cell growth. For quality-focused applications, target Critical Quality Attributes (CQAs) like charge heterogeneity [7] [1].

- Select Medium Components: Identify all potential nutrients, salts, vitamins, and supplements to be evaluated. In complex cases, this can involve dozens of components; one cited study optimized a 57-component serum-free medium [3].

- Establish a Cost Model: Develop a financial tracking system to estimate and forecast the total cost of ownership, including infrastructure and materials, as cost optimization requires a strategic, long-term approach [26].

Protocol: Defining Objectives and Response Variables

- Instrumentation: None required.

- Procedure:

- Hold a cross-functional meeting with process development, analytical, and manufacturing stakeholders.

- Formulate a primary optimization objective (e.g., "Maximize recombinant protein titer in CHO-K1 cells").

- Select primary (e.g., titer) and secondary (e.g., cell viability, product quality) response variables.

- Define the minimum set of medium components for investigation based on literature and prior knowledge.

- Document the objective, success criteria, and all selected components and variables.

Stage 2: Screening and Experimental Execution

Objective: To identify which medium components have statistically significant effects on the response variables.

Screening narrows the focus from many potential factors to the most influential ones, conserving resources.

Key Activities:

- Experimental Design: Use high-throughput systems and statistical designs like Design of Experiments (DoE) to efficiently test multiple factors and levels [1] [14]. This is more efficient than the traditional, one-factor-at-a-time (OFAT) method [14].

- Culturing: Execute experiments using appropriate vessels—from shake flasks to mini-bioreactors—depending on the scale and number of conditions [1].

- Data Collection: Measure response variables at pre-determined intervals. High-throughput methods, such as colorimetric assays (e.g., CCK-8 for NAD(P)H abundance), are advantageous for generating large datasets [14].

Table 1: Common Screening Designs for Medium Optimization

| Design Type | Best For | Key Advantage | Example Application |

|---|---|---|---|

| Plackett-Burman | Screening a large number of factors (>10) | Identifies the most influential factors with minimal runs | Initial screening of 20+ medium components to find 5-8 key drivers |

| Fractional Factorial | When interaction effects between factors are possible | Provides some data on interactions without a full factorial setup | Understanding interactions between key carbon and nitrogen sources |

| One-Factor-at-a-Time (OFAT) | Testing a very limited number of factors (<5) | Simple to execute and interpret | Quick checks on a few components; inefficient for complex media and fails to capture interactions [14] |

Protocol: High-Throughput Screening in Microplates

- Research Reagent Solutions:

- Basal Medium: A chemically defined base (e.g., DMEM, RPMI, or a proprietary blend).

- Component Library: Stock solutions of amino acids, vitamins, trace elements, and lipids.

- Cell Line: Relevant host cell line (e.g., CHO-K1, HEK293, or Komagataella phaffii).

- Assay Kits: Metabolite assays (e.g., glucose/glutamine) and cell viability assays (e.g., CCK-8 for mammalian cells).

- Instrumentation: Automated liquid handler, multi-plate spectrophotometer, and bioreactor or CO₂ incubator with shaking.

- Procedure:

- Use an automated liquid handler to prepare medium formulations in 96-well deep-well plates according to the selected screening design.

- Inoculate cells at a standardized density (e.g., 1.0 x 10⁵ cells/mL for mammalian cells).

- Culture cells in a controlled incubator (e.g., 37°C, 5% CO₂, 125 rpm shaking).

- Sample at 24-hour intervals to measure cell density, viability, and metabolite levels.

- For protein production, harvest the broth at the end of the culture for titer and quality analysis.

Stage 3: Modeling

Objective: To establish a mathematical relationship between the significant medium components and the response variables.

Modeling transforms experimental data into a predictive tool for identifying optimal conditions.

Key Activities:

- Model Selection: Choose a modeling technique suited to the data's complexity and volume.

- Model Training: Use screening data to train the model, linking component concentrations to outcomes.

- Model Validation: Statistically validate the model to ensure it accurately represents the underlying system.

Table 2: Modeling Techniques for Medium Optimization

| Modeling Technique | Principle | Advantages | Limitations |

|---|---|---|---|

| Response Surface Methodology (RSM) | Uses linear or quadratic polynomials to fit data | Simple, well-understood, works with small datasets | Assumes a smooth, continuous response; can miss complex interactions [14] |

| Machine Learning (ML) / AI | Uses algorithms (e.g., GBDT) to learn complex, non-linear relationships from data | High predictive accuracy; captures complex interactions; suitable for large factor numbers [7] [14] | Requires larger datasets; "black box" nature can reduce interpretability (though GBDT offers more insight) [14] |

| Gaussian Process (GP) | A probabilistic model that provides a prediction with an associated uncertainty | Excellent for small data; quantifies prediction confidence; ideal for iterative Bayesian Optimization [6] | Computationally intensive for very large datasets |

The following workflow diagram illustrates how these models, particularly ML and GP, are integrated into an active learning cycle for iterative medium optimization.

Stage 4: Optimization and Validation

Objective: To refine the concentrations of significant medium components and experimentally verify the model's predictions.

This stage uses the model from Stage 3 to navigate the design space and pinpoint the optimum.

Key Activities:

- Optimization Method: Apply an optimization algorithm to the model to find the component concentrations that maximize or minimize the response.

- Experimental Validation: Prepare the predicted optimal medium and test it in the lab.

- Active Learning: Integrate validation results back into the model in an iterative loop to refine predictions. This approach has been shown to optimize a 29-component medium for mammalian cells in just 3-4 cycles [14].

Key Optimization Algorithms:

- Bayesian Optimization (BO): An efficient framework, especially when coupled with Gaussian Process models. It strategically balances exploring new regions of the design space and exploiting known promising areas, leading to optimal formulations with 3–30 times fewer experiments than standard DoE [6].

- Genetic Algorithms: Inspired by natural selection, useful for navigating complex, non-linear spaces.

- Response Surface Methodology (RSM): A traditional method that works well for simpler, quadratic response surfaces.

Protocol: Bayesian Optimization for Medium Formulation

- Research Reagent Solutions: The components identified as significant in Stage 2.

- Instrumentation: Laboratory equipment for medium preparation and cell culture analysis.

- Software: A programming environment (e.g., Python with libraries like Scikit-learn, GPyOpt) or commercial software capable of running BO.

- Procedure:

- Input the initial screening data (from Stage 2) into the BO software as the starting dataset.

- The BO algorithm uses the GP model to propose 4-8 new medium formulations expected to improve the response.

- Prepare these formulations and test them experimentally (as in Stage 2).

- Input the new results into the BO platform to update the model and generate the next set of proposals.

- Repeat steps 2-4 until performance plateaus or the experimental budget is spent. Convergence often occurs within 4-6 iterations [14].
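
The propose, test, update cycle in the procedure above can be sketched with a small Gaussian Process and an expected-improvement acquisition function. This is a simplified illustration written with NumPy only, not the implementation used in the cited studies: the kernel length scale is fixed rather than fitted, batches are chosen by a greedy distance heuristic, concentrations are scaled to [0, 1], and `run_experiments` is a hypothetical stand-in for the laboratory measurements. Libraries such as scikit-learn or GPyOpt, mentioned in the protocol, provide more complete tooling.

```python
import math
import numpy as np


def rbf_kernel(a, b, length_scale=0.2):
    """Squared-exponential kernel between two sets of points."""
    sq_dist = ((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-0.5 * sq_dist / length_scale**2)


def gp_posterior(x_train, y_scaled, x_query, noise=1e-4):
    """Posterior mean and standard deviation for zero-mean, unit-variance data."""
    k_tt = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    k_qt = rbf_kernel(x_query, x_train)
    mean = k_qt @ np.linalg.solve(k_tt, y_scaled)
    solved = np.linalg.solve(k_tt, k_qt.T)
    variance = 1.0 - np.einsum("ij,ji->i", k_qt, solved)
    return mean, np.sqrt(np.clip(variance, 1e-12, None))


def expected_improvement(mean, std, best):
    """EI for maximisation: (mu - best) * Phi(z) + sigma * phi(z)."""
    z = (mean - best) / std
    cdf = 0.5 * (1.0 + np.array([math.erf(v / math.sqrt(2.0)) for v in z]))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mean - best) * cdf + std * pdf


def propose_batch(x_train, y_train, rng, batch_size=4, n_candidates=2000, min_dist=0.1):
    """Pick high-EI random candidates that are at least min_dist apart."""
    scale = y_train.std()
    scale = scale if scale > 0 else 1.0
    y_scaled = (y_train - y_train.mean()) / scale
    candidates = rng.random((n_candidates, x_train.shape[1]))
    mean, std = gp_posterior(x_train, y_scaled, candidates)
    ei = expected_improvement(mean, std, y_scaled.max())
    chosen = []
    for idx in np.argsort(ei)[::-1]:
        if all(np.linalg.norm(candidates[idx] - candidates[j]) > min_dist for j in chosen):
            chosen.append(idx)
        if len(chosen) == batch_size:
            break
    return candidates[chosen]


def run_experiments(x):
    """Hypothetical stand-in for lab results; the optimum sits at (0.6, 0.3)."""
    return 5.0 - 10.0 * ((x - np.array([0.6, 0.3])) ** 2).sum(axis=1)


rng = np.random.default_rng(0)
x_data = rng.random((8, 2))            # step 1: initial screening data
y_data = run_experiments(x_data)
initial_best = y_data.max()
for _ in range(5):                     # steps 2-4 repeated for five rounds
    batch = propose_batch(x_data, y_data, rng)
    x_data = np.vstack([x_data, batch])
    y_data = np.concatenate([y_data, run_experiments(batch)])
print(f"Best response: {initial_best:.3f} initially, {y_data.max():.3f} after 5 rounds")
```

In practice the stopping rule would follow the protocol: halt when the best response stops improving between rounds or when the experimental budget is spent.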

Stage 5: Implementation and Continuous Improvement

Objective: To transition the optimized medium to production and establish monitoring for long-term consistency and improvement.

Validation ensures the medium performs robustly at a larger scale and over multiple batches.

Key Activities:

- Scale-Up Verification: Test the optimized medium in bioreactors to confirm performance at a relevant scale.

- Robustness Testing: Challenge the medium with small variations in process parameters to ensure consistent performance.

- Cost Analysis: Finalize the cost model for the new formulation and compare it against the baseline [26].

- Monitor and Iterate: Implement systems to track performance and cost in production. Use this data to fuel future optimization cycles [26].

Protocol: Bench-Scale Bioreactor Validation

- Research Reagent Solutions: Optimized medium formulation from Stage 4.

- Instrumentation: Benchtop bioreactor system with controls for pH, dissolved oxygen, and temperature.

- Procedure:

- Prepare a large batch of the optimized medium using standard Good Manufacturing Practice (GMP) principles where applicable.

- Inoculate a bioreactor and monitor cell growth, metabolite consumption, and product formation.

- Compare the performance (e.g., peak cell density, product titer, and quality attributes) against the control medium.

- Conduct at least three independent runs to establish reproducibility.

- Perform a full cost-benefit analysis to justify the adoption of the new medium formulation.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents for Cell Culture Medium Optimization

| Reagent Category | Specific Examples | Critical Function |

|---|---|---|

| Basal Media & Blends | DMEM, RPMI, AR5, XVIVO [6] | Provide the foundational nutrients, salts, and buffer system for cell growth and maintenance. |

| Amino Acids | L-glutamine, essential amino acids (Lys, Leu, Val) [14] | Serve as building blocks for protein synthesis; some function as energy sources or signaling molecules. |

| Vitamins & Cofactors | B vitamins, Ascorbic Acid [14] | Act as enzyme cofactors in critical metabolic pathways for energy production and biosynthesis. |

| Lipids & Precursors | Choline, Inositol [14] | Essential components of cell membranes and precursors for signaling molecules. |

| Trace Elements & Metals | Zinc (Zn²⁺), Copper (Cu²⁺), Selenium (Se) [7] | Cofactors for enzymes (e.g., carboxypeptidase); directly influence critical quality attributes like charge variants [7]. |

| Carbon & Energy Sources | Glucose, Galactose, Glutamine | Primary sources of cellular energy (ATP) and carbon skeletons for biosynthesis. |

| Buffers & pH Regulators | HEPES, Sodium Bicarbonate | Maintain the physicochemical environment (pH), which critically impacts cell health and product quality [7]. |

| Growth Factors & Cytokines | Insulin, TGF-β, IL-2 [6] | Signal molecules that can be added to serum-free media to specifically promote cell survival, proliferation, or maintain phenotype. |

The systematic five-stage framework for medium optimization—planning, screening, modeling, optimization, and validation—provides a robust roadmap for enhancing cell culture performance. By moving beyond traditional, inefficient methods and leveraging modern machine learning and Bayesian optimization, scientists can dramatically reduce development time and resources while achieving superior outcomes. This structured approach is indispensable for advancing biopharmaceutical research and development, ensuring the efficient production of high-quality therapeutics.

From Theory to Practice: Advanced Tools and High-Throughput Methodologies

The optimization of culture systems is a cornerstone of biopharmaceutical research, directly impacting the yield and purity of target biological products. The journey from traditional spinner flasks to advanced microbioreactor systems represents a paradigm shift in how scientists approach cell culture and process development. Spinner flasks, while useful for initial expansion, provide limited control over critical process parameters and are labor-intensive, leading to challenges in scalability and reproducibility [27]. The advent of high-throughput screening (HTS) technologies has revolutionized this landscape, enabling researchers to rapidly assess thousands of experimental conditions with minimal manual intervention. Modern automated microbioreactor systems now offer parallel experimentation capabilities with sophisticated monitoring and control of parameters such as pH, dissolved oxygen, and temperature, closely mimicking the environment of larger-scale bioreactors [27]. This evolution has significantly accelerated process development timelines while enhancing data quality, making it possible to optimize culture systems for superior target yield and purity with unprecedented efficiency.

Quantitative Comparison of Culture Systems

The transition from traditional culture vessels to modern microbioreactors brings substantial differences in operational parameters, control capabilities, and experimental throughput. The table below summarizes these key distinctions, highlighting the technological evolution.

Table 1: Comparison of Culture Systems for Process Development

| Feature | Spinner Flasks | Microtiter Plates | Automated Microbioreactors |
|---|---|---|---|
| Typical Working Volume | 100 - 1000 mL [27] | 0.1 - 0.2 mL [27] | 10 - 15 mL [27] |
| Process Control | Limited; primarily agitation [27] | Weak control over processing conditions [27] | Full control of pH, DO, temperature [27] |
| Data Density | Low; often endpoint measurements [27] | Low; often endpoint measurements [27] | High; real-time data output [27] |
| Throughput | Low | High | High (e.g., 24-48 parallel reactors) [27] |
| Scalability | Low reproducibility during scale-up [27] | Limited scalability [27] | High correlation to bench-scale performance [27] |
| Automation Potential | Low | Moderate | High |
| Relative Cost per Experiment | High (labor, materials) [27] | Low | Moderate (lower than bench-scale) [27] |

Application Note: CHO Cell Culture Optimization

Background and Objectives

Chinese Hamster Ovary (CHO) cells are the predominant host for recombinant protein production, notably monoclonal antibodies (mAbs), due to their ability to perform human-like post-translational modifications [27]. The primary objective of this application note is to outline a systematic approach for optimizing CHO cell culture processes using an automated microbioreactor system (ambr15). The workflow focuses on evaluating critical process parameters (CPPs) to enhance critical quality attributes (CQAs) such as titer and glycan profile, ultimately ensuring a scalable and robust manufacturing process.

Experimental Workflow

The following diagram illustrates the complete experimental workflow for cell culture process optimization, from initial seed train to final analysis.

Detailed Protocol

Seed Train Expansion and Preparation

Goal: To generate a sufficient quantity of high-viability CHO cells for inoculating microbioreactors.

- Cell Thaw: Rapidly thaw frozen vial(s) of recombinant DG44 CHO cells (~3 x 10⁷ cells/mL) in a 37°C water bath until only a small ice sliver remains. Decontaminate the vial with 70% ethanol, transfer to a biosafety cabinet, and gently resuspend [27].

- Initial Expansion: Transfer 1 mL of cell suspension to a sterile 125 mL vented shake flask containing 29 mL of pre-warmed OptiCHO media supplemented with 8 mM L-glutamine and 1x Penicillin/Streptomycin [27].

- Incubation: Place shake flask(s) in an incubator at 37°C, 8% CO₂, and 130 rpm orbital agitation [27].

- Monitoring and Subculture: Monitor viable cell density (VCD) daily. At 72 hours post-inoculation, subculture cells into a 125 mL spinner flask with fresh pre-warmed media to achieve a final volume of 100 mL and a target density of 0.7-1.0 x 10⁶ cells/mL. Incubate spinner cultures at 37°C, 8% CO₂, and 70 rpm agitation [27].

- Pre-Inoculum Feed: On day three, one day prior to microbioreactor inoculation, add fresh pre-warmed media to the spinner flask to maintain cell viability ≥90%. Do not exceed 125 mL total volume [27].
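The subculture step in the seed train comes down to a C1·V1 = C2·V2 dilution. The sketch below shows that arithmetic for the 100 mL spinner flask and the 0.7-1.0 x 10⁶ cells/mL seeding window given above. The function name `passage_volumes` and the day-3 cell count in the example are illustrative assumptions, not values from the protocol.

```python
def passage_volumes(current_vcd, target_vcd, final_volume_ml):
    """Return (culture_ml, fresh_media_ml) needed to reach target_vcd.

    Applies C1 * V1 = C2 * V2, with densities in viable cells/mL.
    """
    if current_vcd <= 0 or target_vcd <= 0 or final_volume_ml <= 0:
        raise ValueError("densities and volume must be positive")
    if target_vcd > current_vcd:
        raise ValueError("culture is too dilute to reach the target density")
    culture_ml = target_vcd * final_volume_ml / current_vcd
    return culture_ml, final_volume_ml - culture_ml


# Hypothetical count of 3.4e6 viable cells/mL in the shake flask,
# seeded at 0.85e6 cells/mL (mid-window) into a 100 mL final volume.
culture_ml, media_ml = passage_volumes(3.4e6, 0.85e6, 100.0)
print(f"Transfer {culture_ml:.1f} mL culture into {media_ml:.1f} mL fresh media")
```

If the measured density is below the target, the function raises an error rather than returning a negative media volume. In practice that would mean extending the expansion before passaging.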

Microbioreactor System Setup and Operation

Goal: To configure and operate the ambr15 system for a designed experiment.

- System Initialization: Initialize the ambr15 operating software. Ensure the integrated automated cell counter is running and connected. Prime the cell counter with fresh reagent and empty waste [27].

- Consumables Loading: Define plate layout in the software's "Mimic" section. Inside a safety cabinet, unpack and place twelve sterile culture vessels per station. Load the following onto the designated deck positions [27]:

  - 24-well media charging plate.

  - 24-well inoculation plate.

  - Pipette tip boxes (1 mL and 4 mL).

  - Single-well plates for PBS and NaOH.

  - 24-well antifoam plate.

- Clamp Assembly: Place autoclaved clamp plates on culture vessels, ensuring stirrer holes are aligned. Check O-rings for integrity. Secure with stir plates and fasten with screws and knobs [27].

- Process Configuration: Program the system recipe according to the DOE. Standard parameters include: temperature 37°C, pH setpoint 7.2 (controlled via CO₂ and base addition), dissolved oxygen (DO) at 50% (controlled through O₂, N₂, and air blending), and agitation speeds appropriate for the cell line [27].

- Inoculation and Run Initiation: Aseptically transfer the prepared cell inoculum to the designated inoculation plate. Start the automated run sequence, which handles vessel calibration, media charging, and inoculation. The system will manage the entire culture process based on the programmed recipe [27].
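One way to plan the Process Configuration step before entering it in the instrument software is to expand the DOE into a per-vessel setpoint list. The sketch below is plain Python for planning only. It does not use or represent the ambr15 software interface. The base setpoints come from the protocol above, while the factor levels, the replicate count, and the function name `build_vessel_recipes` are illustrative assumptions.

```python
from itertools import product

# Standard setpoints taken from the protocol text above.
BASE_RECIPE = {"temperature_c": 37.0, "ph": 7.2, "do_percent": 50.0}
VESSELS_PER_STATION = 12  # twelve culture vessels per station, as in the loading step


def build_vessel_recipes(factor_levels, replicates=1):
    """Expand factor levels into one recipe per vessel.

    factor_levels maps a BASE_RECIPE key to the levels to test.
    Returns (station, position, recipe) tuples, filling stations in order.
    """
    unknown = set(factor_levels) - set(BASE_RECIPE)
    if unknown:
        raise KeyError(f"unknown setpoints: {sorted(unknown)}")
    names = list(factor_levels)
    recipes = []
    for combo in product(*(factor_levels[n] for n in names)):
        for _ in range(replicates):
            # Untested setpoints keep their base values.
            recipes.append({**BASE_RECIPE, **dict(zip(names, combo))})
    return [
        (i // VESSELS_PER_STATION + 1, i % VESSELS_PER_STATION + 1, recipe)
        for i, recipe in enumerate(recipes)
    ]


# Hypothetical DOE: 3 pH levels x 2 DO levels x 2 replicates = 12 vessels (one station).
layout = build_vessel_recipes(
    {"ph": [7.0, 7.2, 7.4], "do_percent": [30.0, 50.0]}, replicates=2
)
for station, position, recipe in layout[:3]:
    print(station, position, recipe)
```

This layout places replicates in adjacent positions for readability. A real plan would usually randomize vessel positions so that position effects are not confounded with treatments.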

Sampling and Analysis

Goal: To monitor cell culture performance and assess product quality.

- Automated Sampling: The system can be programmed for automated sampling integrated with a cell counter and nutrient analyzer. To maintain a minimum working volume of 10 mL, limit the number and volume of samples [27].

- Viable Cell Density (VCD) and Viability: Analyze samples using the integrated cell counter or a manual hemocytometer with Trypan Blue exclusion [27].

- Metabolite Analysis: Use a nutrient analyzer (e.g., Nova Bioprofile) to measure concentrations of key metabolites like glucose, glutamine, glutamate, lactate, and ammonium to understand cellular metabolism [27].

- Product Titer and Quality: At harvest, clarify culture supernatants by centrifugation. Analyze for:

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful execution of high-throughput screening experiments relies on a defined set of specialized reagents and equipment. The following table catalogs the essential components for a typical CHO cell culture study in a microbioreactor system.

Table 2: Key Research Reagent Solutions for Microbioreactor Operations

| Category/Item | Specific Example | Function in the Protocol |
|---|---|---|
| Cell Line | Recombinant CHO-DG44 cells | Host for recombinant protein (e.g., mAb) production [27]. |
| Basal Media | OptiCHO Serum-Free Media | Provides nutrients and environment to support cell growth and protein production [27]. |
| Supplements | L-Glutamine (8 mM), Antibiotic-Antimycotic (1X) | Supports cell growth and prevents microbial contamination [27]. |
| Bioreactor System | ambr15 System with 48x 15 mL bioreactors | Automated platform for parallel cell culture with full parameter control [27]. |
| Cell Counter | Integrated Automated Cell Counter (e.g., ViaFlo) | Provides high-throughput, consistent measurements of viable cell density and viability [27]. |
| Detection Antibody | PE-conjugated anti-PD-L1 (for cell-based assays) | Used in flow cytometry to detect and quantify specific cell surface proteins [28]. |
| Viability Stain | Fixable Viability Dye 660 | Distinguishes live from dead cells in flow cytometry analysis, ensuring accurate results [28]. |
| FACS Buffer | DPBS with 2% FBS and 1 mM EDTA | Buffer for washing and resuspending cells during flow staining procedures to maintain cell viability and reduce non-specific binding [28]. |
| Critical Process Reagents | 1M Sodium Hydroxide (NaOH), Antifoam, CO₂, O₂, N₂ | Used for pH control, foam suppression, and dissolved oxygen control within the microbioreactors [27]. |

Advanced Screening Applications and Protocols

High-Throughput Flow Cytometry Screening

Flow cytometry represents a powerful application of HTS for analyzing protein expression at the single-cell level. The following protocol is adapted from a screen for modulators of PD-L1 surface expression.

Protocol Summary: High-Throughput Small Molecule Screen via Flow Cytometry [28]

- Experimental Design: THP-1 cells (a human monocytic leukemia cell line) are treated with IFN-γ to induce PD-L1 expression and, at the same time, with compounds from a small-molecule library (~200,000 compounds). The assay is performed in 384-well plates, testing about 2400 compounds per screen without replicates. A JAK inhibitor is used as a positive control [28].

- Compound Transfer: Using a pintool (e.g., on a Biomek FX), transfer 100 nL of compound from source plates (compounds at 2 mM in DMSO) to cell culture plates. Wash the pintool between transfers with DMSO, isopropyl alcohol, and methanol to prevent cross-contamination [28].

- Cell Culture and Staining: Culture THP-1 cells for three days post-treatment. Centrifuge plates and wash cells using an automated plate washer. Block cells with FcR blocking reagent, then stain with a PE-conjugated anti-PD-L1 antibody and a fixable viability dye [28].

- Data Acquisition and Analysis: Acquire data using a flow cytometer with an autosampler (e.g., HyperCyt). Analyze data using software such as FlowJo or HyperView. Hits are identified based on the geometric mean fluorescence intensity of PD-L1 staining, normalized to IFN-γ-treated vehicle controls [28].
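The hit metric in the last step, geometric mean fluorescence intensity (gMFI) normalized to IFN-γ-treated vehicle controls, can be sketched as follows. This is a minimal illustration rather than the pipeline used in the cited screen. The function names are hypothetical, and the input is assumed to be per-event PE intensities that have already been gated on live cells.

```python
import math


def geometric_mean(values):
    """Geometric mean of strictly positive fluorescence intensities."""
    if not values or any(v <= 0 for v in values):
        raise ValueError("values must be non-empty and strictly positive")
    return math.exp(sum(math.log(v) for v in values) / len(values))


def percent_of_control(well_events, vehicle_wells):
    """Well gMFI as a percentage of the mean gMFI across vehicle control wells."""
    control = sum(geometric_mean(w) for w in vehicle_wells) / len(vehicle_wells)
    return 100.0 * geometric_mean(well_events) / control


# Made-up intensities for illustration only (real wells hold thousands of events).
vehicle = [[100.0, 400.0], [200.0, 200.0]]  # both control wells have gMFI = 200
compound_well = [50.0, 200.0]               # gMFI = 100
print(f"{percent_of_control(compound_well, vehicle):.1f}% of vehicle control")
```

The geometric mean is used here because fluorescence intensities are roughly log-normally distributed, so it is less sensitive than the arithmetic mean to a few very bright events.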

Data Analysis and Decision-Making

The massive datasets generated by HTS campaigns require robust bioinformatics pipelines, whose primary goal is to distinguish true hits from background noise. Data are typically normalized to positive and negative controls on each plate to account for inter-plate variability [28]. Assay quality is commonly assessed with the Z'-factor, which is computed from the control wells, while hits are identified by Z-score as samples falling outside a predefined statistical threshold (e.g., >3 standard deviations from the mean) [28]. For cell culture process optimization, multivariate analysis techniques like Principal Component Analysis (PCA) and Partial Least Squares (PLS) regression can correlate process parameters (e.g., pH, feed strategy) with critical quality attributes (e.g., titer, glycan profile) [27]. This data-driven approach ensures that only the most promising process conditions or compound hits are selected for further, more resource-intensive scale-up studies.
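The two statistics mentioned above serve different purposes, and a short sketch makes the distinction concrete. The Z'-factor is computed from the control wells as 1 − 3(σ₊ + σ₋)/|μ₊ − μ₋| and describes assay quality. The Z-score is computed per sample and is used to flag hits. The function names and the example readings below are illustrative assumptions.

```python
from statistics import mean, stdev


def z_prime(positive, negative):
    """Z'-factor from control wells: 1 - 3*(sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    separation = abs(mean(positive) - mean(negative))
    if separation == 0:
        raise ValueError("control means are identical")
    return 1.0 - 3.0 * (stdev(positive) + stdev(negative)) / separation


def z_scores(sample_values):
    """Per-sample Z-score relative to the mean and SD of all samples on the plate."""
    mu, sigma = mean(sample_values), stdev(sample_values)
    if sigma == 0:
        raise ValueError("no variation among samples")
    return [(v - mu) / sigma for v in sample_values]


def call_hits(sample_values, threshold=3.0):
    """Indices of samples more than `threshold` SDs from the plate mean."""
    return [i for i, z in enumerate(z_scores(sample_values)) if abs(z) > threshold]


# Made-up readings: positive control suppresses the signal, negative control does not.
print(round(z_prime([10.0, 12.0, 8.0], [100.0, 105.0, 95.0]), 3))
# Nineteen inactive wells and one strong suppressor at index 19.
print(call_hits([100.0] * 19 + [20.0]))
```

A Z'-factor above roughly 0.5 is conventionally taken to indicate an assay with good separation between controls. Because strong hits inflate the plate standard deviation, robust variants based on the median and median absolute deviation are a common alternative to the plain Z-score.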

The migration from spinner flasks to automated microbioreactor systems marks a significant leap forward in bioprocess development. This transition enables researchers to employ high-throughput screening strategies that were previously impractical, dramatically increasing the pace and quality of process optimization. The detailed protocols and tools outlined in this document provide a framework for systematically exploring a vast experimental space to enhance target yield and purity. By integrating these advanced HTS platforms with robust analytical techniques and data analysis methods, scientists can build predictive models and generate highly scalable process knowledge, ultimately accelerating the journey from laboratory discovery to commercial manufacturing of biotherapeutics.

Design of Experiments (DoE) for Efficient Multi-Factor Screening

Design of Experiments (DoE) is a structured, statistical framework for planning and conducting experiments so that the variation in a response can be described and explained in terms of the conditions hypothesized to cause it [29]. This methodology is particularly valuable for efficiently screening multiple factors to determine which have significant effects on a response, and to understand the interactions between these factors [29]. Unlike the traditional "one-factor-at-a-time" approach, multifactorial experiments enabled by DoE can evaluate the effects and potential interactions of several independent variables (factors) simultaneously, making them far more efficient for complex biological systems [29].

In the context of optimizing culture systems for target yield and purity, DoE provides a powerful methodology for identifying critical process parameters and their optimal ranges with a minimum number of experimental runs. This approach is recognized as a key tool in the successful implementation of Quality by Design (QbD) frameworks in bioprocess development [29].

Fundamental Principles of DoE

The modern framework for experimental design was established by Ronald Fisher, who emphasized several core principles that remain foundational to DoE [29]:

- Comparison: Treatments should be compared against each other or a control baseline to provide meaningful results.

- Randomization: Random assignment of experimental units to treatment groups helps mitigate confounding by distributing the effects of extraneous variables randomly across treatments.

- Statistical Replication: Repeating measurements and experiments helps identify sources of variation, better estimate true treatment effects, and strengthen reliability.

- Blocking: Arranging experimental units into similar groups (blocks) reduces known but irrelevant sources of variation, increasing precision.

- Orthogonality: Using orthogonal contrasts ensures that comparisons are uncorrelated and independently distributed, providing distinct information.

- Multifactorial Experiments: Evaluating multiple factors simultaneously instead of using one-factor-at-a-time approaches [29].
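Several of these principles can be seen together in a two-level full factorial design. The sketch below builds such a design in coded units (−1 for low, +1 for high), checks that the factor columns are orthogonal, and produces a replicated, randomized run order. The factor names and function names are illustrative assumptions, not taken from a specific study.

```python
import random
from itertools import product


def full_factorial(factors):
    """All combinations of coded low (-1) and high (+1) levels: 2**k runs."""
    return [dict(zip(factors, levels)) for levels in product((-1, 1), repeat=len(factors))]


def is_orthogonal(design, factors):
    """True when every pair of factor columns has a zero dot product."""
    return all(
        sum(run[a] * run[b] for run in design) == 0
        for i, a in enumerate(factors)
        for b in factors[i + 1:]
    )


def randomized_run_order(design, replicates=2, seed=0):
    """Replicate every design point, then shuffle the execution order."""
    runs = [dict(run) for run in design for _ in range(replicates)]
    random.Random(seed).shuffle(runs)  # fixed seed keeps the plan reproducible
    return runs


factors = ["pH", "temperature", "feed_rate"]  # hypothetical process factors
design = full_factorial(factors)
print(len(design), "design points; orthogonal:", is_orthogonal(design, factors))
print(len(randomized_run_order(design)), "runs after replication")
```

Blocking is not shown here. It could be added by shuffling within each block (for example, each culture station or each day) instead of across the whole plan.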

Case Study: DoE in Human Primary B-Cell Culture Optimization

Background and Challenge

A recent study demonstrated the application of DoE to optimize culture conditions for human primary B-cells, which are essential components of the immune system responsible for antibody production, cytokine secretion, and antigen presentation [30]. While mouse models have illuminated key mechanisms underlying B-cell activation, the translation of these findings to human biology remained unclear, necessitating the development of an optimized human primary B-cell culture system [30].

The researchers faced the challenge of understanding how multiple factors - CD40L, BAFF, IL-4, and IL-21 - individually and interactively influenced B-cell viability, proliferation, and differentiation. Traditional approaches would have required numerous experimental runs to evaluate these factors and their interactions comprehensively.
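To illustrate how DoE limits the number of runs for four factors, the sketch below constructs a two-level half-fraction design with 8 runs instead of the 16 needed for the full factorial. The design actually used in the cited study is not specified here, so this is an assumed example. The coding of each factor as absent/low (−1) or present/high (+1) and the function name `half_fraction` are also assumptions.

```python
import math
from itertools import product

FACTORS = ["CD40L", "BAFF", "IL-4", "IL-21"]


def half_fraction(factors):
    """2**(k-1) runs: full factorial in the first k-1 factors; the last factor's
    level is set to the product of the others (generator D = ABC for k = 4)."""
    *base, generated = factors
    design = []
    for levels in product((-1, 1), repeat=len(base)):
        run = dict(zip(base, levels))
        run[generated] = math.prod(levels)
        design.append(run)
    return design


design = half_fraction(FACTORS)
print(f"{len(design)} runs instead of {2 ** len(FACTORS)}")
for run in design:
    print(run)
```

With this generator the design has resolution IV: main effects are not confounded with two-factor interactions, but two-factor interactions are confounded with each other in pairs (for example, CD40L×BAFF with IL-4×IL-21). If those interactions are of primary interest, the full 16-run factorial would be the safer choice.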

Experimental Design and Implementation

The study employed a DoE approach to optimize critical parameters and dissect the individual contributions of each specific factor in the culture system [30]. The experimental system utilized feeder cells engineered to express CD40L, supplemented with the cytokines BAFF, IL-4, and IL-21 [30].

Key factors investigated:

- CD40L (CD40 ligand): A key co-stimulatory ligand provided by T-helper cells

- BAFF (B-cell activating factor): An essential survival factor for B cells